Fabric Eventstream - overview

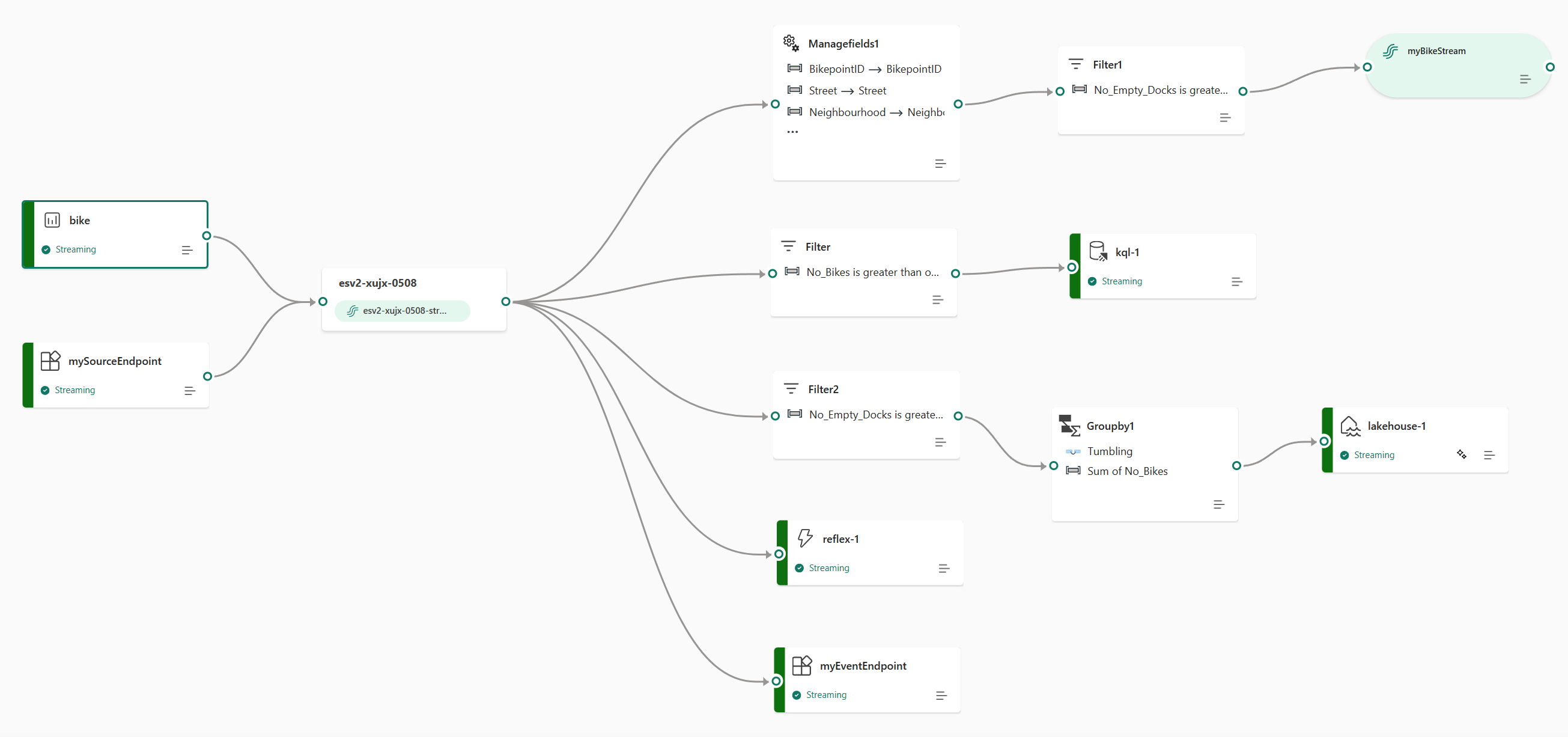

The eventstreams feature in the Microsoft Fabric Real-Time Intelligence experience lets you bring real-time events into Fabric, transform them, and route them to various destinations without writing any code. You create an eventstream, which is an instance of the Eventstream item in Fabric, add event data sources to it, optionally add transformations to the event data, and then route the data to supported destinations. In addition, the Apache Kafka endpoint available on the Eventstream item lets you send or consume real-time events by using the Kafka protocol.

Bring events into Fabric

The eventstreams feature provides source connectors that fetch event data from a variety of sources. More sources are available when you enable Enhanced capabilities at the time of creating an eventstream.

| Sources | Description |
|---|---|
| Azure Event Hubs | If you have an Azure event hub, you can ingest event hub data into Microsoft Fabric using Eventstream. |
| Azure IoT Hub | If you have an Azure IoT hub, you can ingest IoT data into Microsoft Fabric using Eventstream. |
| Azure SQL Database Change Data Capture (CDC) | The Azure SQL Database CDC source connector allows you to capture a snapshot of the current data in an Azure SQL database. The connector then monitors and records any future row-level changes to this data. |
| PostgreSQL Database CDC | The PostgreSQL Database Change Data Capture (CDC) source connector allows you to capture a snapshot of the current data in a PostgreSQL database. The connector then monitors and records any future row-level changes to this data. |
| MySQL Database CDC | The Azure MySQL Database Change Data Capture (CDC) source connector allows you to capture a snapshot of the current data in an Azure Database for MySQL database. You can specify the tables to monitor, and the eventstream records any future row-level changes to the tables. |
| Azure Cosmos DB CDC | The Azure Cosmos DB Change Data Capture (CDC) source connector for Microsoft Fabric event streams lets you capture a snapshot of the current data in an Azure Cosmos DB database. The connector then monitors and records any future row-level changes to this data. |
| SQL Server on virtual machine (VM) Database (DB) CDC | The SQL Server on VM DB (CDC) source connector for Fabric event streams allows you to capture a snapshot of the current data in a SQL Server database on a VM. The connector then monitors and records any future row-level changes to the data. |
| Azure SQL Managed Instance CDC | The Azure SQL Managed Instance CDC source connector for Microsoft Fabric event streams allows you to capture a snapshot of the current data in a SQL Managed Instance database. The connector then monitors and records any future row-level changes to this data. |
| Google Cloud Pub/Sub | Google Pub/Sub is a messaging service that enables you to publish and subscribe to streams of events. You can add Google Pub/Sub as a source to your eventstream to capture, transform, and route real-time events to various destinations in Fabric. |
| Amazon Kinesis Data Streams | Amazon Kinesis Data Streams is a massively scalable, highly durable data ingestion and processing service optimized for streaming data. By integrating Amazon Kinesis Data Streams as a source within your eventstream, you can seamlessly process real-time data streams before routing them to multiple destinations within Fabric. |
| Confluent Cloud Kafka | Confluent Cloud Kafka is a streaming platform offering powerful data streaming and processing functionalities using Apache Kafka. By integrating Confluent Cloud Kafka as a source within your eventstream, you can seamlessly process real-time data streams before routing them to multiple destinations within Fabric. |
| Amazon MSK Kafka | Amazon MSK Kafka is a fully managed Kafka service that simplifies setup, scaling, and management. By integrating Amazon MSK Kafka as a source within your eventstream, you can seamlessly bring the real-time events from your MSK Kafka cluster and process them before routing them to multiple destinations within Fabric. |
| Sample data | You can choose Bicycles, Yellow Taxi, or Stock Market events as a sample data source to test the data ingestion while setting up an eventstream. |
| Custom endpoint (that is, Custom App in standard capability) | The custom endpoint feature allows your applications or Kafka clients to connect to Eventstream using a connection string, enabling the smooth ingestion of streaming data into Eventstream. |
| Azure Service Bus (preview) | You can ingest data from an Azure Service Bus queue or a topic's subscription into Microsoft Fabric using Eventstream. |
| Apache Kafka (preview) | Apache Kafka is an open-source, distributed platform for building scalable, real-time data systems. By integrating Apache Kafka as a source within your eventstream, you can seamlessly bring real-time events from your Apache Kafka cluster and process them before routing them to multiple destinations within Fabric. |
| Azure Blob Storage events (preview) | Azure Blob Storage events are triggered when a client creates, replaces, or deletes a blob. The connector allows you to link Blob Storage events to Fabric events in Real-Time hub. You can convert these events into continuous data streams and transform them before routing them to various destinations in Fabric. |
| Fabric Workspace Item events (preview) | Fabric Workspace Item events are discrete Fabric events that occur when changes are made to your Fabric workspace. These changes include creating, updating, or deleting a Fabric item. With Fabric event streams, you can capture these Fabric workspace events, transform them, and route them to various destinations in Fabric for further analysis. |
| Fabric OneLake events (preview) | OneLake events allow you to subscribe to changes in files and folders in OneLake, and then react to those changes in real time. With Fabric event streams, you can capture these OneLake events, transform them, and route them to various destinations in Fabric for further analysis. This seamless integration of OneLake events within Fabric event streams gives you greater flexibility for monitoring and analyzing activities in your OneLake. |
| Fabric Job events (preview) | Job events allow you to subscribe to changes produced when Fabric runs a job. For example, you can react to changes when refreshing a semantic model, running a scheduled pipeline, or running a notebook. Each of these activities can generate a corresponding job, which in turn generates a set of corresponding job events. With Fabric event streams, you can capture these job events, transform them, and route them to various destinations in Fabric for further analysis. This seamless integration of job events within Fabric event streams gives you greater flexibility for monitoring and analyzing the activities of your jobs. |
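
The CDC connectors in the table share one pattern: an initial snapshot of the current data, followed by a stream of row-level changes. The following Python sketch shows how a downstream consumer might replay such a stream into an in-memory copy of a table. The event shape (`op`, `key`, `row`) and the operation names are hypothetical, chosen only for illustration; they are not the payload format that Eventstream emits.

```python
from typing import Any

# Hypothetical change-event shape, for illustration only:
#   {"op": "snapshot" | "insert" | "update" | "delete", "key": ..., "row": {...} or None}


def apply_change(table: dict[Any, dict], event: dict) -> None:
    """Apply one change event to an in-memory copy of the table."""
    op = event["op"]
    if op in ("snapshot", "insert", "update"):
        # Snapshot rows and later inserts/updates all set the latest row image.
        table[event["key"]] = event["row"]
    elif op == "delete":
        table.pop(event["key"], None)
    else:
        raise ValueError(f"unknown operation: {op!r}")


def replay(events: list[dict]) -> dict[Any, dict]:
    """Rebuild the table state by applying events in arrival order."""
    table: dict[Any, dict] = {}
    for event in events:
        apply_change(table, event)
    return table


events = [
    {"op": "snapshot", "key": 1, "row": {"id": 1, "status": "new"}},
    {"op": "snapshot", "key": 2, "row": {"id": 2, "status": "new"}},
    {"op": "update", "key": 1, "row": {"id": 1, "status": "shipped"}},
    {"op": "insert", "key": 3, "row": {"id": 3, "status": "new"}},
    {"op": "delete", "key": 2, "row": None},
]
state = replay(events)
print(state)
```

The snapshot rows establish the starting state, and every later event moves that state forward by one row-level change.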

Process events using no-code experience

The drag-and-drop experience gives you an intuitive way to create your event data processing, transformation, and routing logic without writing any code. The end-to-end data flow diagram in an eventstream gives you a comprehensive view of how the data flows and how it's organized. The event processor editor is the no-code canvas where you drag and drop operations to design the event data processing logic.

| Transformation | Description |
|---|---|
| Filter | Use the Filter transformation to filter events based on the value of a field in the input. Depending on the data type (number or text), the transformation keeps the values that match the selected condition, such as is null or is not null. |
| Manage fields | The Manage fields transformation allows you to add, remove, or rename fields coming in from an input or another transformation, or to change their data type. |
| Aggregate | Use the Aggregate transformation to calculate an aggregation (Sum, Minimum, Maximum, or Average) every time a new event occurs over a period of time. This operation also allows for the renaming of these calculated columns, and filtering or slicing the aggregation based on other dimensions in your data. You can have one or more aggregations in the same transformation. |
| Group by | Use the Group by transformation to calculate aggregations across all events within a certain time window. You can group by the values in one or more fields. Like the Aggregate transformation, it allows for the renaming of columns, but it provides more options for aggregation and more complex options for time windows. As with Aggregate, you can add more than one aggregation per transformation. |
| Union | Use the Union transformation to connect two or more nodes and add events with shared fields (with the same name and data type) into one table. Fields that don't match are dropped and not included in the output. |
| Expand | Use the Expand array transformation to create a new row for each value within an array. |
| Join | Use the Join transformation to combine data from two streams based on a matching condition between them. |
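
The Union rule in the table (keep fields that share a name and data type, drop the rest) can be pictured with a small Python sketch. This is only an illustration of the rule, not how Eventstream implements it. The helper names are hypothetical, and each stream's schema is inferred from its first event as a simplification.

```python
def schema_of(events: list[dict]) -> dict[str, type]:
    """Infer a {field name: Python type} schema from the first event (simplification)."""
    return {name: type(value) for name, value in events[0].items()} if events else {}


def union(*streams: list[dict]) -> list[dict]:
    """Merge streams, keeping only fields whose name and type match in every stream."""
    if len(streams) < 2:
        raise ValueError("union needs at least two streams")
    shared = set(schema_of(streams[0]).items())
    for stream in streams[1:]:
        shared &= set(schema_of(stream).items())
    shared_names = sorted(name for name, _ in shared)
    # Project every event onto the shared fields; unmatched fields are dropped.
    return [
        {name: event[name] for name in shared_names}
        for stream in streams
        for event in stream
    ]


bikes = [{"station": "A", "count": 3, "docks": 10}]
taxis = [{"station": "B", "count": 7, "fare": 12.5}]
merged = union(bikes, taxis)
print(merged)
```

Here `docks` and `fare` exist in only one input each, so neither appears in the merged output.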

If you enabled Enhanced capabilities while creating an eventstream, the transformation operations are supported for all destinations (with a derived stream acting as an intermediate bridge for some destinations, such as Custom endpoint and Fabric Activator). If you didn't, the transformation operations are available only for the Lakehouse and Eventhouse (event processing before ingestion) destinations.
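
To make the idea of aggregating "across all events within a certain time window" concrete, here is a minimal Python sketch of a Group by over a tumbling (fixed, non-overlapping) window. The tumbling window is an assumption chosen as the simplest case, and the field names (`ts`, `station`, `bikes`) and the function name are hypothetical. In Eventstream itself you configure this in the no-code editor rather than in code.

```python
from collections import defaultdict


def tumbling_group_by(
    events: list[dict], key_field: str, value_field: str, window_seconds: int
) -> dict[tuple[int, str], float]:
    """Average value_field per (window start, key) over fixed, non-overlapping windows."""
    buckets: dict[tuple[int, str], list[float]] = defaultdict(list)
    for event in events:
        # Align the event timestamp (epoch seconds) to the start of its window.
        window_start = event["ts"] - event["ts"] % window_seconds
        buckets[(window_start, event[key_field])].append(event[value_field])
    return {group: sum(values) / len(values) for group, values in buckets.items()}


events = [
    {"ts": 0, "station": "A", "bikes": 4},
    {"ts": 20, "station": "A", "bikes": 6},
    {"ts": 45, "station": "B", "bikes": 2},
    {"ts": 70, "station": "A", "bikes": 10},
]
averages = tumbling_group_by(events, "station", "bikes", window_seconds=60)
print(averages)
```

Each event lands in exactly one window, and the result has one row per window and group, which is the shape a Group by operation produces.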

Route events to destinations

The Fabric event streams feature supports sending data to the following destinations.

| Destination | Description |
|---|---|
| Custom endpoint (that is, Custom App in standard capability) | With this destination, you can easily route your real-time events to a custom endpoint. You can connect your own applications to the eventstream and consume the event data in real time. This destination is useful when you want to egress real-time data to an external system outside Microsoft Fabric. |
| Eventhouse | This destination lets you ingest your real-time event data into an Eventhouse, where you can use the powerful Kusto Query Language (KQL) to query and analyze the data. With the data in the Eventhouse, you can gain deeper insights into your event data and create rich reports and dashboards. You can choose between two ingestion modes: Direct ingestion and Event processing before ingestion. |
| Lakehouse | This destination gives you the ability to transform your real-time events before ingesting them into your lakehouse. Real-time events are converted into Delta Lake format and then stored in the designated lakehouse tables. This destination supports data warehousing scenarios. |
| Derived stream | Derived stream is a specialized type of destination that you can create after adding stream operations, such as Filter or Manage fields, to an eventstream. The derived stream represents the transformed default stream following stream processing. You can route the derived stream to multiple destinations in Fabric, and view the derived stream in the Real-Time hub. |
| Fabric Activator (preview) | This destination lets you directly connect your real-time event data to a Fabric Activator. Activator is a type of intelligent agent that contains all the information necessary to connect to data, monitor for conditions, and act. When the data reaches certain thresholds or matches other patterns, Activator automatically takes appropriate action such as alerting users or kicking off Power Automate workflows. |

You can attach multiple destinations to an eventstream. They receive data from the eventstream simultaneously without interfering with each other.

Note

We recommend that you use the Microsoft Fabric event streams feature with at least four capacity units (SKU: F4).

Apache Kafka on Fabric event streams

The Fabric event streams feature offers an Apache Kafka endpoint on the Eventstream item, enabling users to connect and consume streaming events through the Kafka protocol. If your application already uses the Apache Kafka protocol to send or receive streaming events with specific topics, you can easily connect it to your Eventstream. Just update your connection settings to use the Kafka endpoint provided in your Eventstream.

The Fabric event streams feature is powered by Azure Event Hubs, a fully managed cloud-native service. When an eventstream is created, an event hub namespace is automatically provisioned, and an event hub is allocated to the default stream without requiring any provisioning configuration. To learn more about the Kafka-compatible features in the Azure Event Hubs service, see Azure Event Hubs for Apache Kafka.

To learn how to obtain the Kafka endpoint details for sending events to an eventstream, see Add custom endpoint source to an eventstream. For consuming events from an eventstream, see Add a custom endpoint destination to an eventstream.
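
Because the endpoint is backed by Azure Event Hubs, a Kafka client typically connects with the settings that Event Hubs uses for Kafka: port 9093, `SASL_SSL`, the `PLAIN` mechanism, the literal user name `$ConnectionString`, and the connection string as the password. The Python sketch below derives those settings from a connection string. It assumes the custom endpoint hands out an Event Hubs-style connection string; the function name and the sample values are hypothetical, and the details shown for your custom endpoint in Eventstream remain the authoritative source.

```python
from typing import Optional


def kafka_settings(connection_string: str) -> tuple[dict[str, str], Optional[str]]:
    """Build Kafka client properties and the topic name from a connection string."""
    # Split "Key=Value;Key=Value" pairs; values such as keys may contain "=".
    parts = dict(
        segment.split("=", 1)
        for segment in connection_string.strip().split(";")
        if segment
    )
    # Endpoint looks like sb://<namespace>.servicebus.windows.net/
    host = parts["Endpoint"].removeprefix("sb://").rstrip("/")
    config = {
        "bootstrap.servers": f"{host}:9093",
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        "sasl.username": "$ConnectionString",  # literal value, not a placeholder
        "sasl.password": connection_string,
    }
    # The event hub name (EntityPath) is what a Kafka client uses as the topic.
    return config, parts.get("EntityPath")


# Placeholder values only; substitute the details shown for your custom endpoint.
sample = (
    "Endpoint=sb://example-namespace.servicebus.windows.net/;"
    "SharedAccessKeyName=key_hypothetical;"
    "SharedAccessKey=abc123=;"
    "EntityPath=es_hypothetical"
)
config, topic = kafka_settings(sample)
print(config["bootstrap.servers"], topic)
```

The property names follow the style used by librdkafka-based clients; other Kafka clients express the same settings under their own option names.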

Limitations

Fabric Eventstream has the following general limitations. Before working with Eventstream, review these limitations to ensure they align with your requirements.

| Limit | Size |
|---|---|
| Maximum message size | 1 MB |
| Maximum retention period of event data | 90 days |
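
A producer can guard against the message size limit before sending. The following Python sketch checks the serialized size of an event against 1 MB. Treating 1 MB as 1,048,576 bytes and measuring only the JSON body (not headers or protocol overhead) are assumptions, so leave some headroom in practice. The function name is hypothetical.

```python
import json

# Assumption: "1 MB" is interpreted as 1,048,576 bytes.
MAX_MESSAGE_BYTES = 1024 * 1024


def fits_message_limit(event: dict, limit: int = MAX_MESSAGE_BYTES) -> bool:
    """Return True if the UTF-8 encoded JSON body is within the size limit."""
    payload = json.dumps(event).encode("utf-8")
    return len(payload) <= limit


small_event = {"id": 1, "body": "hello"}
large_event = {"id": 2, "body": "x" * 2_000_000}
print(fits_message_limit(small_event), fits_message_limit(large_event))
```

Events that fail the check need to be split, compressed, or trimmed before they're sent.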