Copy data from FTP server using Azure Data Factory or Synapse Analytics

APPLIES TO: Azure Data Factory, Azure Synapse Analytics


This article outlines how to copy data from an FTP server. To learn more, read the introductory articles for Azure Data Factory and Synapse Analytics.

Supported capabilities

This FTP connector is supported for the following capabilities:

| Supported capabilities | IR |
|---|---|
| Copy activity (source/-) | ① ② |
| Lookup activity | ① ② |
| GetMetadata activity | ① ② |
| Delete activity | ① ② |

① Azure integration runtime ② Self-hosted integration runtime

Specifically, this FTP connector supports:

- Copying files using Basic or Anonymous authentication.

- Copying files as-is or parsing files with the supported file formats and compression codecs.

The FTP connector supports FTP servers running in passive mode. Active mode is not supported.

Prerequisites

If your data store is located inside an on-premises network, an Azure virtual network, or Amazon Virtual Private Cloud, you need to configure a self-hosted integration runtime to connect to it.

If your data store is a managed cloud data service, you can use the Azure Integration Runtime. If the access is restricted to IPs that are approved in the firewall rules, you can add Azure Integration Runtime IPs to the allow list.

You can also use the managed virtual network integration runtime feature in Azure Data Factory to access the on-premises network without installing and configuring a self-hosted integration runtime.

For more information about the network security mechanisms and options supported by Data Factory, see Data access strategies.

Get started

To perform the Copy activity with a pipeline, you can use one of the following tools or SDKs:

- The Copy Data tool

- The Azure portal

- The .NET SDK

- The Python SDK

- Azure PowerShell

- The REST API

- The Azure Resource Manager template

Create a linked service to an FTP server using UI

Use the following steps to create a linked service to an FTP server in the Azure portal UI.

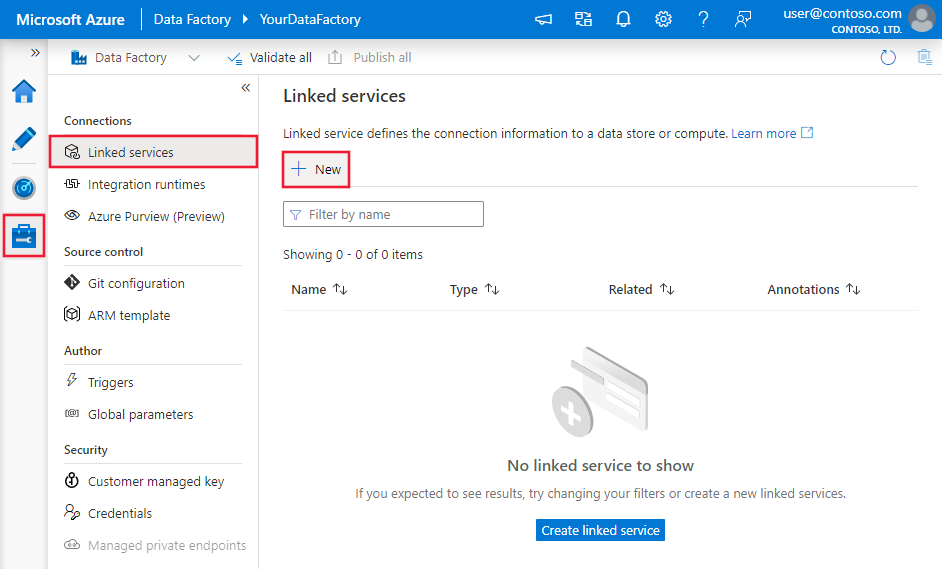

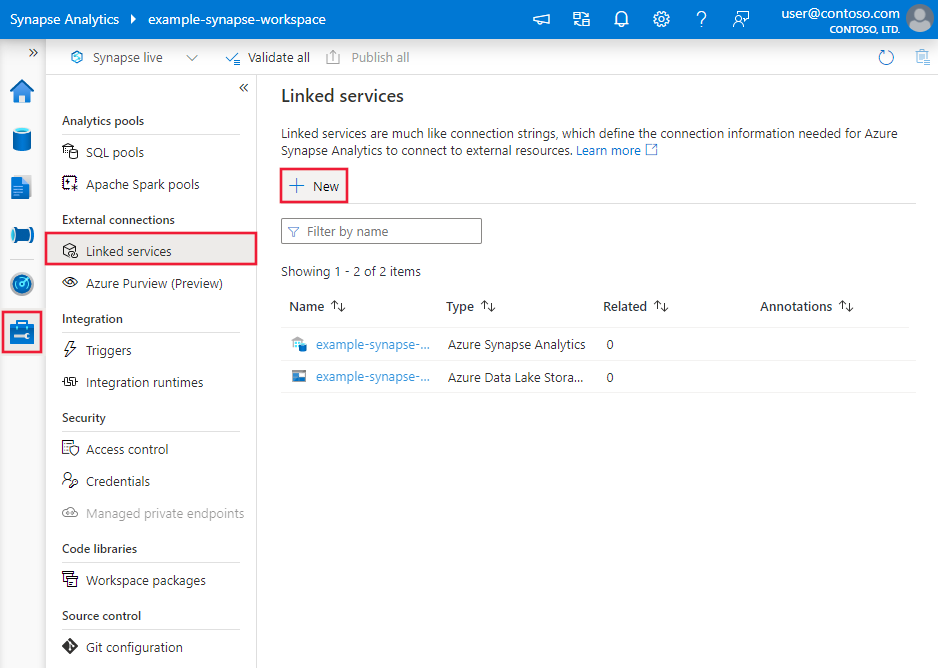

Browse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then click New:

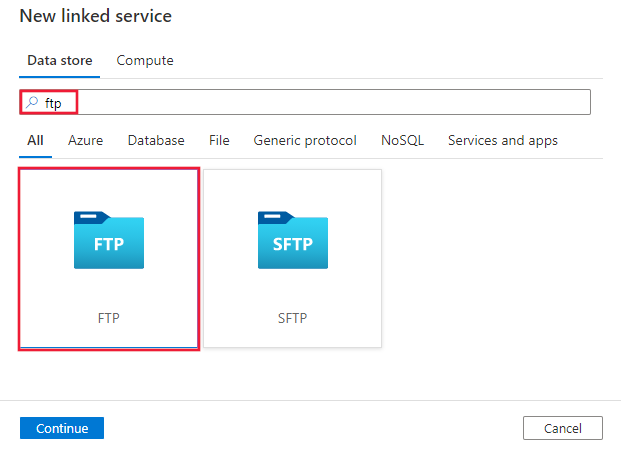

Search for FTP and select the FTP connector.

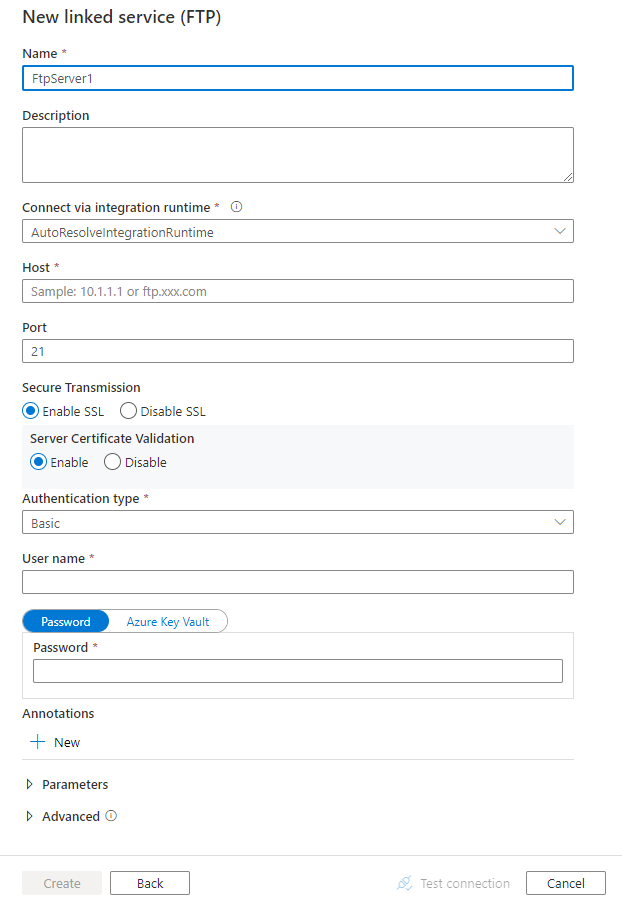

Configure the service details, test the connection, and create the new linked service.

Connector configuration details

The following sections provide details about properties that are used to define entities specific to FTP.

Linked service properties

The following properties are supported for FTP linked service:

| Property | Description | Required |
|---|---|---|
| type | The type property must be set to: FtpServer. | Yes |
| host | Specify the name or IP address of the FTP server. | Yes |
| port | Specify the port on which the FTP server is listening. Allowed values are: integer, default value is 21. | No |
| enableSsl | Specify whether to use FTP over an SSL/TLS channel. Allowed values are: true (default), false. | No |
| enableServerCertificateValidation | Specify whether to enable server TLS/SSL certificate validation when you are using FTP over SSL/TLS channel. Allowed values are: true (default), false. | No |
| authenticationType | Specify the authentication type. Allowed values are: Basic, Anonymous | Yes |
| userName | Specify the user who has access to the FTP server. | No |
| password | Specify the password for the user (userName). Mark this field as a SecureString to store it securely, or reference a secret stored in Azure Key Vault. | No |
| connectVia | The Integration Runtime to be used to connect to the data store. Learn more from Prerequisites section. If not specified, it uses the default Azure Integration Runtime. | No |

Note

The FTP connector supports accessing FTP server with either no encryption or explicit SSL/TLS encryption; it doesn’t support implicit SSL/TLS encryption.
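Before you configure the linked service, it can help to confirm from the machine that hosts the integration runtime that the server accepts a passive-mode connection and, when enableSsl is true, explicit TLS. The following is a minimal sketch that uses Python's standard ftplib module, whose FTP_TLS class negotiates explicit TLS on the control connection. The function names make_client and probe are hypothetical, and the sketch is only a connectivity check; it doesn't represent how the service itself connects.

```python
import ssl
from ftplib import FTP, FTP_TLS


def make_client(enable_ssl: bool = True) -> FTP:
    """Create an unconnected FTP client configured for passive mode."""
    if enable_ssl:
        # create_default_context() validates the server certificate,
        # which mirrors enableServerCertificateValidation = true.
        client = FTP_TLS(context=ssl.create_default_context())
    else:
        client = FTP()
    client.set_pasv(True)  # the connector supports passive mode only
    return client


def probe(host, port=21, enable_ssl=True, user="anonymous", password=""):
    """Connect, log in, and list the root folder (hypothetical helper)."""
    client = make_client(enable_ssl)
    client.connect(host, port, timeout=30)
    try:
        # For FTP_TLS, login() upgrades the control connection (explicit TLS).
        client.login(user, password)
        if enable_ssl:
            client.prot_p()  # also protect the data connection
        return client.nlst()
    finally:
        client.close()
```

If probe fails with enable_ssl=True but succeeds with enable_ssl=False, the server might not offer explicit TLS, or it might offer only implicit TLS, which the connector doesn't support.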

Example 1: using Anonymous authentication

```json
{
    "name": "FTPLinkedService",
    "properties": {
        "type": "FtpServer",
        "typeProperties": {
            "host": "<ftp server>",
            "port": 21,
            "enableSsl": true,
            "enableServerCertificateValidation": true,
            "authenticationType": "Anonymous"
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```

Example 2: using Basic authentication

```json
{
    "name": "FTPLinkedService",
    "properties": {
        "type": "FtpServer",
        "typeProperties": {
            "host": "<ftp server>",
            "port": 21,
            "enableSsl": true,
            "enableServerCertificateValidation": true,
            "authenticationType": "Basic",
            "userName": "<username>",
            "password": {
                "type": "SecureString",
                "value": "<password>"
            }
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```
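If you generate linked service definitions from a script, the two examples above can be produced by one small helper. The following sketch is illustrative only: the function build_ftp_linked_service and its parameter names are hypothetical, and the rule that Basic authentication needs both a user name and a password is an assumption of this sketch (the property table marks both as not required).

```python
import json

ALLOWED_AUTH_TYPES = {"Basic", "Anonymous"}


def build_ftp_linked_service(name, host, authentication_type, *, port=21,
                             enable_ssl=True, validate_certificate=True,
                             user_name=None, password=None,
                             integration_runtime=None):
    """Return a dict shaped like the FTP linked service JSON examples."""
    if authentication_type not in ALLOWED_AUTH_TYPES:
        raise ValueError(f"authenticationType must be one of {sorted(ALLOWED_AUTH_TYPES)}")

    type_properties = {
        "host": host,
        "port": port,
        "enableSsl": enable_ssl,
        "enableServerCertificateValidation": validate_certificate,
        "authenticationType": authentication_type,
    }
    if authentication_type == "Basic":
        # Assumption of this sketch: Basic needs both credentials.
        if not user_name or password is None:
            raise ValueError("Basic authentication needs userName and password")
        type_properties["userName"] = user_name
        type_properties["password"] = {"type": "SecureString", "value": password}

    properties = {"type": "FtpServer", "typeProperties": type_properties}
    if integration_runtime:
        # Omit connectVia to fall back to the default Azure Integration Runtime.
        properties["connectVia"] = {
            "referenceName": integration_runtime,
            "type": "IntegrationRuntimeReference",
        }
    return {"name": name, "properties": properties}


if __name__ == "__main__":
    definition = build_ftp_linked_service(
        "FTPLinkedService", "<ftp server>", "Anonymous",
        integration_runtime="<name of Integration Runtime>")
    print(json.dumps(definition, indent=4))
```

In practice, prefer an Azure Key Vault reference over an inline SecureString value so that the password never appears in the script.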

Dataset properties

For a full list of sections and properties available for defining datasets, see the Datasets article.

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

- Avro format

- Binary format

- Delimited text format

- Excel format

- JSON format

- ORC format

- Parquet format

- XML format

The following properties are supported for FTP under location settings in format-based dataset:

| Property | Description | Required |
|---|---|---|
| type | The type property under location in dataset must be set to FtpServerLocation. | Yes |
| folderPath | The path to folder. If you want to use wildcard to filter folder, skip this setting and specify in activity source settings. | No |
| fileName | The file name under the given folderPath. If you want to use wildcard to filter files, skip this setting and specify in activity source settings. | No |

Example:

```json
{
    "name": "DelimitedTextDataset",
    "properties": {
        "type": "DelimitedText",
        "linkedServiceName": {
            "referenceName": "<FTP linked service name>",
            "type": "LinkedServiceReference"
        },
        "schema": [ < physical schema, optional, auto retrieved during authoring > ],
        "typeProperties": {
            "location": {
                "type": "FtpServerLocation",
                "folderPath": "root/folder/subfolder"
            },
            "columnDelimiter": ",",
            "quoteChar": "\"",
            "firstRowAsHeader": true,
            "compressionCodec": "gzip"
        }
    }
}
```

Copy activity properties

For a full list of sections and properties available for defining activities, see the Pipelines article. This section provides a list of properties supported by FTP source.

FTP as source

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

- Avro format

- Binary format

- Delimited text format

- Excel format

- JSON format

- ORC format

- Parquet format

- XML format

The following properties are supported for FTP under storeSettings settings in format-based copy source:

| Property | Description | Required |
|---|---|---|
| type | The type property under storeSettings must be set to FtpReadSettings. | Yes |
| Locate the files to copy: | | |
| OPTION 1: static path | Copy from the given folder/file path specified in the dataset. If you want to copy all files from a folder, additionally specify wildcardFileName as *. | |
| OPTION 2: wildcard - wildcardFolderPath | The folder path with wildcard characters to filter source folders. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character); use ^ to escape if your actual folder name has wildcard or this escape char inside. See more examples in Folder and file filter examples. | No |
| OPTION 2: wildcard - wildcardFileName | The file name with wildcard characters under the given folderPath/wildcardFolderPath to filter source files. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character); use ^ to escape if your actual file name has wildcard or this escape char inside. See more examples in Folder and file filter examples. | Yes |
| OPTION 3: a list of files - fileListPath | Indicates to copy a given file set. Point to a text file that includes a list of files you want to copy, one file per line, where each line is a path relative to the path configured in the dataset. When using this option, do not specify a file name in the dataset. See more examples in File list examples. | No |
| Additional settings: | | |
| recursive | Indicates whether the data is read recursively from the subfolders or only from the specified folder. Note that when recursive is set to true and the sink is a file-based store, an empty folder or subfolder isn't copied or created at the sink. Allowed values are true (default) and false. This property doesn't apply when you configure fileListPath. | No |
| deleteFilesAfterCompletion | Indicates whether the binary files will be deleted from the source store after successfully moving to the destination store. The file deletion is per file, so when the copy activity fails, some files will already have been copied to the destination and deleted from the source, while others still remain on the source store. This property is only valid in the binary file copy scenario. The default value is false. | No |
| useBinaryTransfer | Specify whether to use the binary transfer mode. The values are true for binary mode (default), and false for ASCII. | No |
| enablePartitionDiscovery | For files that are partitioned, specify whether to parse the partitions from the file path and add them as additional source columns. Allowed values are false (default) and true. | No |
| partitionRootPath | When partition discovery is enabled, specify the absolute root path in order to read partitioned folders as data columns. If it is not specified, then by default: (1) when you use the file path in the dataset or a list of files on the source, the partition root path is the path configured in the dataset; (2) when you use a wildcard folder filter, the partition root path is the sub-path before the first wildcard. For example, assume you configure the path in the dataset as "root/folder/year=2020/month=08/day=27". If you specify the partition root path as "root/folder/year=2020", the copy activity generates two more columns, month and day, with the values "08" and "27" respectively, in addition to the columns inside the files. If the partition root path is not specified, no extra column is generated. | No |
| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |
| disableChunking | When copying data from FTP, the service tries to get the file length first, then divides the file into multiple parts and reads them in parallel. Set this property to true to disable that behavior if your FTP server doesn't support getting the file length or seeking to read from a certain offset. Allowed values are false (default) and true. | No |
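The partitionRootPath example in the table can be checked with a few lines of code. The sketch below is an illustration of the documented behavior, not the service's implementation; the function name partition_columns is hypothetical, and it assumes partition folders follow the key=value naming shown in the example.

```python
def partition_columns(file_folder_path, partition_root_path):
    """Derive extra source columns from the folders below the partition root."""
    root = partition_root_path.strip("/")
    path = file_folder_path.strip("/")
    if path != root and not path.startswith(root + "/"):
        raise ValueError("the partition root path must be a prefix of the file path")

    # Only the folders below the root become columns.
    remainder = path[len(root):].strip("/")
    columns = {}
    for segment in remainder.split("/"):
        if "=" in segment:
            key, value = segment.split("=", 1)
            columns[key] = value
    return columns


if __name__ == "__main__":
    dataset_path = "root/folder/year=2020/month=08/day=27"
    # Root set explicitly: month and day become columns.
    print(partition_columns(dataset_path, "root/folder/year=2020"))
    # Root left at its default (the dataset path): no extra columns.
    print(partition_columns(dataset_path, dataset_path))
```

Values are kept as strings here ("08" rather than 8), which matches the quoted values in the table.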

Example:

```json
"activities":[
    {
        "name": "CopyFromFTP",
        "type": "Copy",
        "inputs": [
            {
                "referenceName": "<Delimited text input dataset name>",
                "type": "DatasetReference"
            }
        ],
        "outputs": [
            {
                "referenceName": "<output dataset name>",
                "type": "DatasetReference"
            }
        ],
        "typeProperties": {
            "source": {
                "type": "DelimitedTextSource",
                "formatSettings":{
                    "type": "DelimitedTextReadSettings",
                    "skipLineCount": 10
                },
                "storeSettings":{
                    "type": "FtpReadSettings",
                    "recursive": true,
                    "wildcardFolderPath": "myfolder*A",
                    "wildcardFileName": "*.csv",
                    "disableChunking": false
                }
            },
            "sink": {
                "type": "<sink type>"
            }
        }
    }
]
```
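The example leaves disableChunking at false, so the service first asks for the file length and then reads the file in parts. The sketch below shows, in general terms, what such a plan looks like; the function name plan_chunks and the chunk size are hypothetical, and the part size that the service actually uses isn't stated in this article. In FTP terms, each part relies on the server answering a length query and honoring a restart offset, which correspond to FTP.size() and the rest argument of FTP.retrbinary() in Python's ftplib.

```python
def plan_chunks(file_length, chunk_size):
    """Split a file of file_length bytes into (offset, length) parts."""
    if file_length < 0 or chunk_size <= 0:
        raise ValueError("file_length must be >= 0 and chunk_size must be > 0")
    return [
        (offset, min(chunk_size, file_length - offset))
        for offset in range(0, file_length, chunk_size)
    ]


if __name__ == "__main__":
    # A 10-byte file read in 4-byte parts: two full parts and one short part.
    for offset, length in plan_chunks(10, 4):
        print(f"read {length} bytes starting at offset {offset}")
```

A server that can't report the file length or can't start a transfer at an offset gives the reader nothing to plan against, which is the situation disableChunking is meant for.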

Folder and file filter examples

This section describes the resulting behavior of the folder path and file name with wildcard filters.

| folderPath | fileName | recursive | Source folder structure and filter result (files in bold are retrieved) |
|---|---|---|---|
| Folder* | (empty, use default) | false | FolderA/**File1.csv**, FolderA/**File2.json**, FolderA/Subfolder1/File3.csv, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/File5.csv, AnotherFolderB/File6.csv |
| Folder* | (empty, use default) | true | FolderA/**File1.csv**, FolderA/**File2.json**, FolderA/Subfolder1/**File3.csv**, FolderA/Subfolder1/**File4.json**, FolderA/Subfolder1/**File5.csv**, AnotherFolderB/File6.csv |
| Folder* | *.csv | false | FolderA/**File1.csv**, FolderA/File2.json, FolderA/Subfolder1/File3.csv, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/File5.csv, AnotherFolderB/File6.csv |
| Folder* | *.csv | true | FolderA/**File1.csv**, FolderA/File2.json, FolderA/Subfolder1/**File3.csv**, FolderA/Subfolder1/File4.json, FolderA/Subfolder1/**File5.csv**, AnotherFolderB/File6.csv |
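To reason about a filter before running a pipeline, you can simulate the wildcard rules locally. The following sketch translates the documented wildcards (* for zero or more characters, ? for zero or one character, ^ as the escape character) into a regular expression and applies them to the sample folder structure. The function names are hypothetical, and the sketch assumes case-sensitive matching and that the folder pattern is applied to top-level folder names only; the service's actual matching might differ in these details.

```python
import re


def wildcard_to_regex(pattern):
    """Translate a wildcard pattern into a compiled regular expression."""
    parts = []
    i = 0
    while i < len(pattern):
        ch = pattern[i]
        if ch == "^" and i + 1 < len(pattern):
            parts.append(re.escape(pattern[i + 1]))  # escaped literal
            i += 2
            continue
        if ch == "*":
            parts.append(".*")   # zero or more characters
        elif ch == "?":
            parts.append(".?")   # zero or one character
        else:
            parts.append(re.escape(ch))
        i += 1
    return re.compile("".join(parts))


def matches(pattern, name):
    return wildcard_to_regex(pattern).fullmatch(name) is not None


# Sample structure: dicts are folders, None marks a file.
SOURCE = {
    "FolderA": {
        "File1.csv": None,
        "File2.json": None,
        "Subfolder1": {"File3.csv": None, "File4.json": None, "File5.csv": None},
    },
    "AnotherFolderB": {"File6.csv": None},
}


def select_files(tree, folder_pattern, file_pattern=None, recursive=True):
    """Return the paths that a folder/file wildcard pair would retrieve."""
    file_pattern = file_pattern or "*"  # empty fileName means all files
    selected = []

    def walk(folder, prefix):
        for name, child in folder.items():
            path = f"{prefix}/{name}"
            if isinstance(child, dict):
                if recursive:
                    walk(child, path)
            elif matches(file_pattern, name):
                selected.append(path)

    for name, child in tree.items():
        if isinstance(child, dict) and matches(folder_pattern, name):
            walk(child, name)
    return selected


if __name__ == "__main__":
    print(select_files(SOURCE, "Folder*", "*.csv", recursive=True))
```

AnotherFolderB is never selected because the pattern Folder* must match the whole folder name from its first character.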

File list examples

This section describes the resulting behavior of using file list path in copy activity source.

Assuming you have the following source folder structure and want to copy the files in bold:

| Sample source structure | Content in FileListToCopy.txt | Configuration |
|---|---|---|
| root/FolderA/**File1.csv**, root/FolderA/File2.json, root/FolderA/Subfolder1/**File3.csv**, root/FolderA/Subfolder1/File4.json, root/FolderA/Subfolder1/**File5.csv**, root/Metadata/FileListToCopy.txt | File1.csv, Subfolder1/File3.csv, Subfolder1/File5.csv (one entry per line) | In dataset: Folder path: root/FolderA. In copy activity source: File list path: root/Metadata/FileListToCopy.txt. The file list path points to a text file in the same data store that includes a list of files you want to copy, one file per line, with each path relative to the path configured in the dataset. |
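The relationship between the dataset folder path and the entries in the file list can be sketched as a simple path join. This is an illustration only: the function name resolve_file_list is hypothetical, and skipping blank lines is an assumption of the sketch rather than documented behavior.

```python
import posixpath


def resolve_file_list(dataset_folder_path, file_list_text):
    """Resolve each file list entry against the dataset folder path."""
    resolved = []
    for line in file_list_text.splitlines():
        entry = line.strip()
        if entry:  # assumption: blank lines are ignored
            resolved.append(posixpath.join(dataset_folder_path, entry))
    return resolved


if __name__ == "__main__":
    file_list = "File1.csv\nSubfolder1/File3.csv\nSubfolder1/File5.csv\n"
    for path in resolve_file_list("root/FolderA", file_list):
        print(path)
```

Because every entry is resolved against root/FolderA, the file list itself can live elsewhere, such as root/Metadata in the sample.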

Lookup activity properties

To learn details about the properties, check Lookup activity.

GetMetadata activity properties

To learn details about the properties, check GetMetadata activity.

Delete activity properties

To learn details about the properties, check Delete activity.

Legacy models

Note

The following models are still supported as-is for backward compatibility. We recommend that you use the new model described in the preceding sections going forward; the authoring UI has switched to generating the new model.

Legacy dataset model

| Property | Description | Required |
|---|---|---|
| type | The type property of the dataset must be set to: FileShare | Yes |
| folderPath | Path to the folder. Wildcard filter is supported, allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character); use ^ to escape if your actual folder name has wildcard or this escape char inside. Examples: rootfolder/subfolder/, see more examples in Folder and file filter examples. | Yes |
| fileName | Name or wildcard filter for the file(s) under the specified "folderPath". If you don't specify a value for this property, the dataset points to all files in the folder. For filter, allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character). Example 1: "fileName": "*.csv". Example 2: "fileName": "???20180427.txt". Use ^ to escape if your actual file name has wildcard or this escape char inside. | No |
| format | If you want to copy files as-is between file-based stores (binary copy), skip the format section in both input and output dataset definitions. If you want to parse files with a specific format, the following file format types are supported: TextFormat, JsonFormat, AvroFormat, OrcFormat, ParquetFormat. Set the type property under format to one of these values. For more information, see Text Format, Json Format, Avro Format, Orc Format, and Parquet Format sections. | No (only for binary copy scenario) |
| compression | Specify the type and level of compression for the data. For more information, see Supported file formats and compression codecs. Supported types are: GZip, Deflate, BZip2, and ZipDeflate. Supported levels are: Optimal and Fastest. | No |
| useBinaryTransfer | Specify whether to use the binary transfer mode. The values are true for binary mode (default), and false for ASCII. | No |

Tip

To copy all files under a folder, specify folderPath only.

To copy a single file with a given name, specify folderPath with folder part and fileName with file name.

To copy a subset of files under a folder, specify folderPath with folder part and fileName with wildcard filter.

Note

If you were using the "fileFilter" property for file filtering, it is still supported as-is, but we recommend that you use the new filter capability added to "fileName" going forward.

Example:

```json
{
    "name": "FTPDataset",
    "properties": {
        "type": "FileShare",
        "linkedServiceName":{
            "referenceName": "<FTP linked service name>",
            "type": "LinkedServiceReference"
        },
        "typeProperties": {
            "folderPath": "folder/subfolder/",
            "fileName": "myfile.csv.gz",
            "format": {
                "type": "TextFormat",
                "columnDelimiter": ",",
                "rowDelimiter": "\n"
            },
            "compression": {
                "type": "GZip",
                "level": "Optimal"
            }
        }
    }
}
```

Legacy copy activity source model

| Property | Description | Required |
|---|---|---|
| type | The type property of the copy activity source must be set to: FileSystemSource | Yes |
| recursive | Indicates whether the data is read recursively from the sub folders or only from the specified folder. Note when recursive is set to true and sink is file-based store, empty folder/sub-folder will not be copied/created at sink. Allowed values are: true (default), false | No |
| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |

Example:

```json
"activities":[
    {
        "name": "CopyFromFTP",
        "type": "Copy",
        "inputs": [
            {
                "referenceName": "<FTP input dataset name>",
                "type": "DatasetReference"
            }
        ],
        "outputs": [
            {
                "referenceName": "<output dataset name>",
                "type": "DatasetReference"
            }
        ],
        "typeProperties": {
            "source": {
                "type": "FileSystemSource",
                "recursive": true
            },
            "sink": {
                "type": "<sink type>"
            }
        }
    }
]
```

Related content

For a list of data stores supported as sources and sinks by the copy activity, see supported data stores.