Expectation recommendations and advanced patterns

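This article contains recommendations for implementing expectations at scale and examples of advanced patterns that expectations support. These patterns use multiple datasets in conjunction with expectations and require that you understand the syntax and semantics of materialized views, streaming tables, and expectations.

For a basic overview of expectation behavior and syntax, see Manage data quality with pipeline expectations.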

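Portable and reusable expectations

Databricks recommends the following best practices when implementing expectations, to improve portability and reduce maintenance burden: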

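| Recommendation | Impact |
|---|---|
| Store expectation definitions separately from pipeline logic. | Easily apply expectations to multiple datasets or pipelines. Update, audit, and maintain expectations without modifying pipeline source code. |
| Add custom tags to create groups of related expectations. | Filter expectations based on tags. |
| Apply expectations consistently across similar datasets. | Use the same expectations across multiple datasets and pipelines to evaluate identical logic. |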

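The following examples show how to use a Delta table or a dictionary to create a central expectation repository. Custom Python functions then apply these expectations to datasets in an example pipeline:

Delta table

The following example creates a table named rules to maintain rules: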

    CREATE OR REPLACE TABLE
      rules
    AS SELECT
      col1 AS name,
      col2 AS constraint,
      col3 AS tag
    FROM (
      VALUES
      ("website_not_null","Website IS NOT NULL","validity"),
      ("fresh_data","to_date(updateTime,'M/d/yyyy h:m:s a') > '2010-01-01'","maintained"),
      ("social_media_access","NOT(Facebook IS NULL AND Twitter IS NULL AND Youtube IS NULL)","maintained")
    )

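The following Python example defines data quality expectations based on the rules in the rules table.

The get_rules() function reads the rules from the rules table and returns a Python dictionary containing the rules that match the tag argument passed to the function.

In this example, the dictionary is applied with the @dlt.expect_all_or_drop() decorator to enforce data quality constraints.

For example, any record that fails the rules tagged validity is dropped from the raw_farmers_market table: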

    import dlt
    from pyspark.sql.functions import expr, col

    def get_rules(tag):
        """
        loads data quality rules from a table
        :param tag: tag to match
        :return: dictionary of rules that matched the tag
        """
        # collect() returns a list of Row objects, one per matching rule
        rows = spark.read.table("rules").filter(col("tag") == tag).collect()
        return {
            row['name']: row['constraint']
            for row in rows
        }

    @dlt.table
    @dlt.expect_all_or_drop(get_rules('validity'))
    def raw_farmers_market():
        return (
            spark.read.format('csv').option("header", "true")
            .load('/databricks-datasets/data.gov/farmers_markets_geographic_data/data-001/')
        )

    @dlt.table
    @dlt.expect_all_or_drop(get_rules('maintained'))
    def organic_farmers_market():
        return (
            dlt.read("raw_farmers_market")
            .filter(expr("Organic = 'Y'"))
        )
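Because get_rules() returns a dictionary keyed by rule name, rows in the rules table that share a name and a tag collapse into one entry, and only the last constraint read is enforced. The following plain-Python sketch shows one way to detect that situation before the pipeline runs. It is an illustration rather than part of the pipeline API: find_duplicate_rule_names and sample_rows are hypothetical names, and the rows are ordinary dictionaries standing in for rows collected from the rules table. The check is deliberately stricter than required, since it flags a name that is reused under any tag.

```python
from collections import Counter


def find_duplicate_rule_names(rows):
    """Return the rule names that appear more than once, sorted alphabetically."""
    counts = Counter(row["name"] for row in rows)
    return sorted(name for name, n in counts.items() if n > 1)


# Hypothetical rows, shaped like the rows collected from the rules table.
sample_rows = [
    {"name": "website_not_null", "constraint": "Website IS NOT NULL", "tag": "validity"},
    {"name": "fresh_data", "constraint": "updateTime IS NOT NULL", "tag": "maintained"},
    {
        "name": "fresh_data",
        "constraint": "to_date(updateTime,'M/d/yyyy h:m:s a') > '2010-01-01'",
        "tag": "maintained",
    },
]

duplicates = find_duplicate_rule_names(sample_rows)
print(duplicates)  # ['fresh_data']
```

In a pipeline, a check like this could run against the collected rows before get_rules() builds its dictionary, so that a silently overwritten rule surfaces as an explicit error.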

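Python module

The following example creates a Python module to maintain rules. For this example, store this code in a file named rules_module.py in the same folder as the notebook used as source code for the pipeline: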

    def get_rules_as_list_of_dict():
        return [
            {
                "name": "website_not_null",
                "constraint": "Website IS NOT NULL",
                "tag": "validity"
            },
            {
                "name": "fresh_data",
                "constraint": "to_date(updateTime,'M/d/yyyy h:m:s a') > '2010-01-01'",
                "tag": "maintained"
            },
            {
                "name": "social_media_access",
                "constraint": "NOT(Facebook IS NULL AND Twitter IS NULL AND Youtube IS NULL)",
                "tag": "maintained"
            }
        ]

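The following Python example defines data quality expectations based on the rules defined in the rules_module.py file.

The get_rules() function returns a Python dictionary containing the rules that match the tag argument passed to it.

In this example, the dictionary is applied with the @dlt.expect_all_or_drop() decorator to enforce data quality constraints.

For example, any record that fails the rules tagged validity is dropped from the raw_farmers_market table: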

    import dlt
    from rules_module import get_rules_as_list_of_dict
    from pyspark.sql.functions import expr

    def get_rules(tag):
        """
        loads data quality rules from rules_module
        :param tag: tag to match
        :return: dictionary of rules that matched the tag
        """
        return {
            row['name']: row['constraint']
            for row in get_rules_as_list_of_dict()
            if row['tag'] == tag
        }

    @dlt.table
    @dlt.expect_all_or_drop(get_rules('validity'))
    def raw_farmers_market():
        return (
            spark.read.format('csv').option("header", "true")
            .load('/databricks-datasets/data.gov/farmers_markets_geographic_data/data-001/')
        )

    @dlt.table
    @dlt.expect_all_or_drop(get_rules('maintained'))
    def organic_farmers_market():
        return (
            dlt.read("raw_farmers_market")
            .filter(expr("Organic = 'Y'"))
        )
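The get_rules() function above matches a single tag. When a dataset should satisfy several groups of rules at once, a variant that accepts a list of tags may be convenient. The following is a minimal sketch under that assumption: get_rules_for_tags and sample_rules are hypothetical names, and the rule list is passed in explicitly so that the snippet runs without a pipeline.

```python
def get_rules_for_tags(rules, tags):
    """Return {name: constraint} for every rule whose tag is in `tags`."""
    wanted = set(tags)
    return {rule["name"]: rule["constraint"] for rule in rules if rule["tag"] in wanted}


# Same rules as in rules_module.py, repeated here so the sketch is self-contained.
sample_rules = [
    {"name": "website_not_null", "constraint": "Website IS NOT NULL", "tag": "validity"},
    {
        "name": "fresh_data",
        "constraint": "to_date(updateTime,'M/d/yyyy h:m:s a') > '2010-01-01'",
        "tag": "maintained",
    },
    {
        "name": "social_media_access",
        "constraint": "NOT(Facebook IS NULL AND Twitter IS NULL AND Youtube IS NULL)",
        "tag": "maintained",
    },
]

selected = get_rules_for_tags(sample_rules, ["validity", "maintained"])
print(sorted(selected))  # ['fresh_data', 'social_media_access', 'website_not_null']
```

The returned dictionary has the same shape as the one produced by get_rules(), so it could be passed to @dlt.expect_all_or_drop() in the same way.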

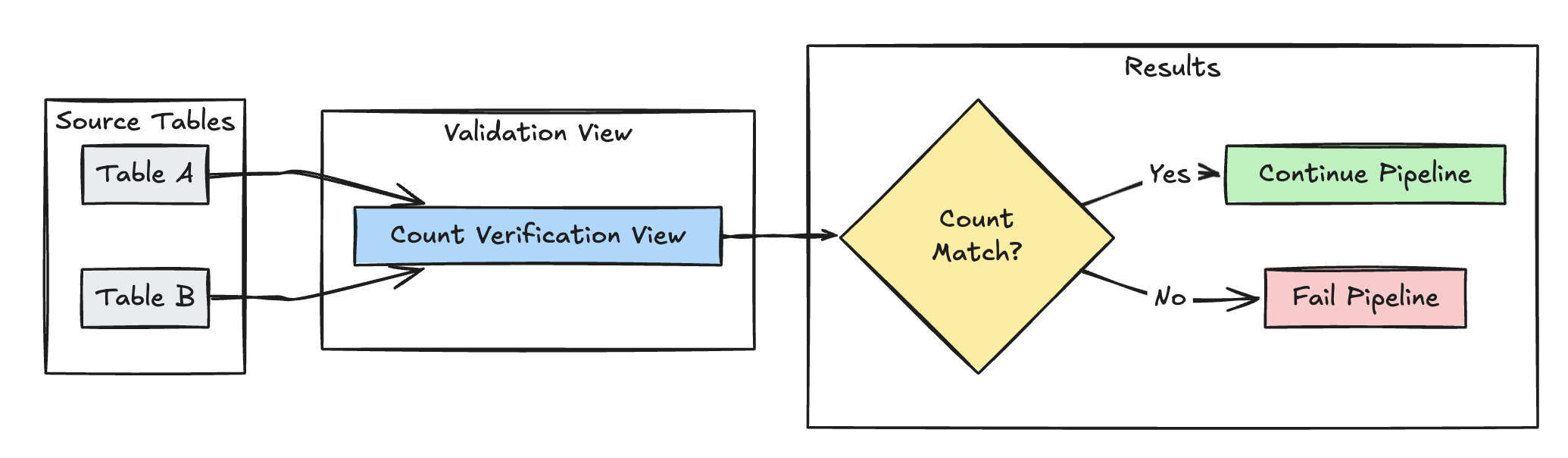

Row count validation

The following example validates that table_a and table_b have equal row counts, to check that no data is lost during transformations:

Python

    import dlt

    @dlt.view(
        name="count_verification",
        comment="Validates equal row counts between tables"
    )
    @dlt.expect_or_fail("no_rows_dropped", "a_count == b_count")
    def validate_row_counts():
        # The query returns a single row that holds both counts
        return spark.sql("""
            SELECT * FROM
              (SELECT COUNT(*) AS a_count FROM table_a),
              (SELECT COUNT(*) AS b_count FROM table_b)""")

SQL

    CREATE OR REFRESH MATERIALIZED VIEW count_verification(
      CONSTRAINT no_rows_dropped EXPECT (a_count == b_count)
    ) AS SELECT * FROM
      (SELECT COUNT(*) AS a_count FROM table_a),
      (SELECT COUNT(*) AS b_count FROM table_b)
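When the same count check is needed for several table pairs, the query text can be generated rather than copied. The following is a minimal sketch, assuming a hypothetical helper named build_count_query. The table names are interpolated into the SQL string without escaping, so the sketch assumes they come from trusted pipeline code rather than user input.

```python
def build_count_query(left_table, right_table):
    """Build a one-row query that exposes both row counts as a_count and b_count."""
    return (
        "SELECT * FROM "
        f"(SELECT COUNT(*) AS a_count FROM {left_table}), "
        f"(SELECT COUNT(*) AS b_count FROM {right_table})"
    )


query = build_count_query("table_a", "table_b")
print(query)
```

The resulting string could be passed to spark.sql() inside a view function like validate_row_counts() above, with the a_count == b_count expectation left unchanged.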

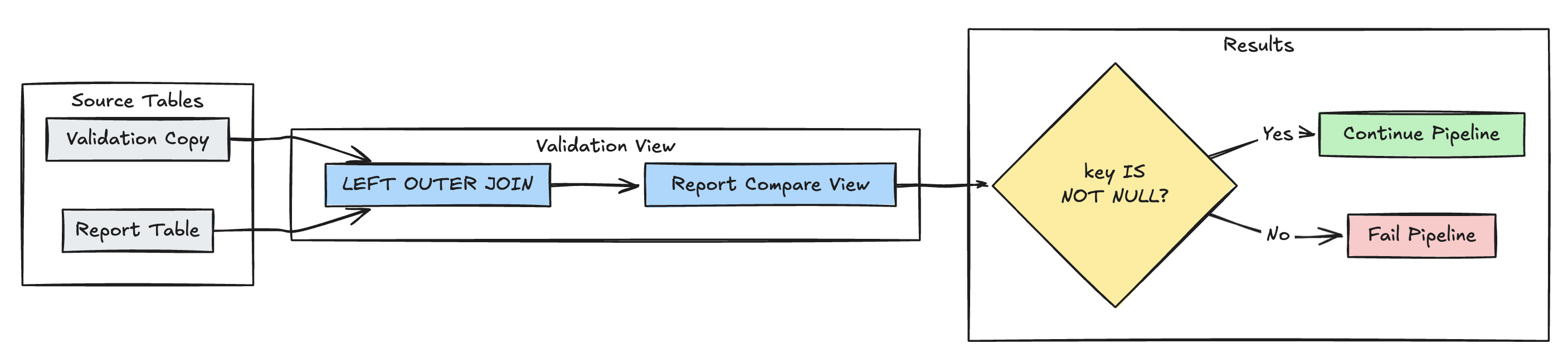

Missing records detection

The following example validates that all expected records are present in the report table:

Python

    import dlt
    from pyspark.sql.functions import expr

    @dlt.view(
        name="report_compare_tests",
        comment="Validates no records are missing after joining"
    )
    @dlt.expect_or_fail("no_missing_records", "r_key IS NOT NULL")
    def validate_report_completeness():
        return (
            dlt.read("validation_copy").alias("v")
            .join(
                dlt.read("report").alias("r"),
                on=expr("v.key = r.key"),
                how="left_outer"
            )
            # selectExpr parses the SQL alias; select() would treat the string as a column name
            .selectExpr(
                "v.*",
                "r.key AS r_key"
            )
        )

SQL

    CREATE OR REFRESH MATERIALIZED VIEW report_compare_tests(
      CONSTRAINT no_missing_records EXPECT (r_key IS NOT NULL)
    )
    AS SELECT v.*, r.key as r_key FROM validation_copy v
      LEFT OUTER JOIN report r ON v.key = r.key
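The check relies on left outer join semantics: every row of validation_copy is kept, and r_key is NULL wherever report has no matching key. The following plain-Python sketch mimics that behavior on small in-memory lists to make the logic concrete. The function name and sample rows are hypothetical, and the sketch assumes keys are unique in report (a real join would repeat a validation row once per matching report row).

```python
def left_outer_join_keys(validation_rows, report_rows):
    """Mimic the left outer join: add r_key to each validation row, None if unmatched."""
    report_keys = {row["key"] for row in report_rows}
    return [
        {**row, "r_key": row["key"] if row["key"] in report_keys else None}
        for row in validation_rows
    ]


validation_copy = [{"key": 1}, {"key": 2}, {"key": 3}]
report = [{"key": 1}, {"key": 3}]

joined = left_outer_join_keys(validation_copy, report)

# Rows where r_key is None are the ones the expectation would flag.
missing = [row["key"] for row in joined if row["r_key"] is None]
print(missing)  # [2]
```

Because the expectation is declared with fail semantics, a single row like key 2 here would be enough to stop the update.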

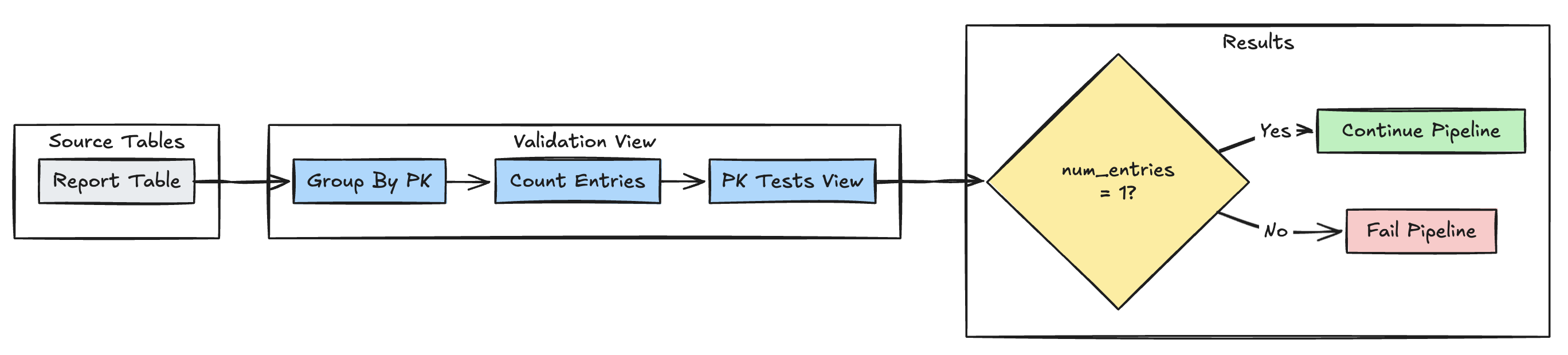

Primary key uniqueness

The following example validates primary key constraints across tables:

Python

    import dlt

    @dlt.view(
        name="report_pk_tests",
        comment="Validates primary key uniqueness"
    )
    @dlt.expect_or_fail("unique_pk", "num_entries = 1")
    def validate_pk_uniqueness():
        # One output row per primary key value, with the number of times it occurs
        return (
            dlt.read("report")
            .groupBy("pk")
            .count()
            .withColumnRenamed("count", "num_entries")
        )

SQL

    CREATE OR REFRESH MATERIALIZED VIEW report_pk_tests(
      CONSTRAINT unique_pk EXPECT (num_entries = 1)
    )
    AS SELECT pk, count(*) as num_entries
      FROM report
      GROUP BY pk
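The grouping step produces one row per primary key value, so any row whose num_entries is not 1 identifies a duplicated key. The following plain-Python sketch reproduces that logic with collections.Counter on a small in-memory list. The function name and sample data are hypothetical and only illustrate what the expectation evaluates.

```python
from collections import Counter


def count_entries_per_pk(rows):
    """Return {pk: num_entries}, the same shape as the grouped view."""
    return dict(Counter(row["pk"] for row in rows))


report = [{"pk": "a"}, {"pk": "b"}, {"pk": "a"}]

num_entries = count_entries_per_pk(report)

# Keys that would violate the unique_pk expectation (num_entries = 1).
violations = {pk: n for pk, n in num_entries.items() if n != 1}
print(violations)  # {'a': 2}
```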

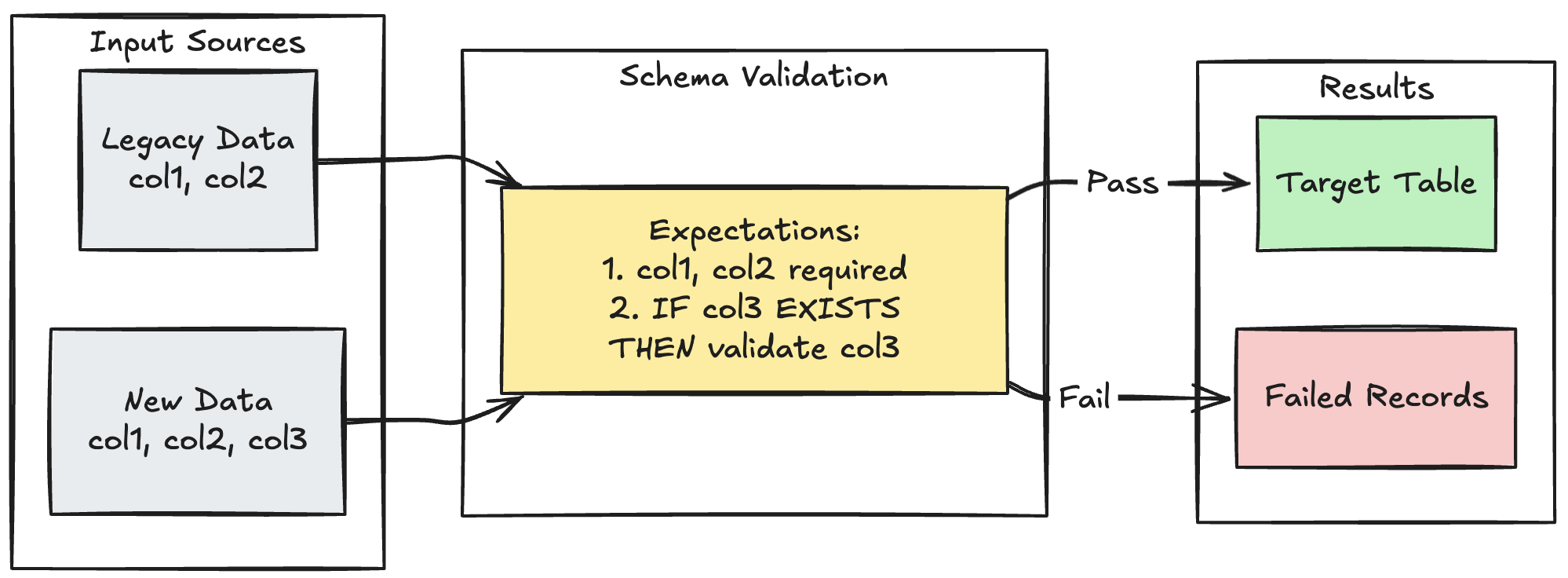

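Schema evolution pattern

The following example shows how to handle schema evolution when columns are added. Use this pattern when you migrate data sources or handle multiple versions of upstream data, to maintain backward compatibility while still enforcing data quality: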

Python

    import dlt

    @dlt.table
    @dlt.expect_all_or_fail({
        "required_columns": "col1 IS NOT NULL AND col2 IS NOT NULL",
        "valid_col3": "CASE WHEN col3 IS NOT NULL THEN col3 > 0 ELSE TRUE END"
    })
    def evolving_table():
        # Legacy data (V1 schema)
        legacy_data = spark.read.table("legacy_source")

        # New data (V2 schema)
        new_data = spark.read.table("new_source")

        # Combine both sources; columns missing on one side are filled with NULL
        return legacy_data.unionByName(new_data, allowMissingColumns=True)

SQL

    CREATE OR REFRESH MATERIALIZED VIEW evolving_table(
      -- Merging multiple constraints into one as expect_all is Python-specific API
      CONSTRAINT valid_migrated_data EXPECT (
        (col1 IS NOT NULL AND col2 IS NOT NULL) AND (CASE WHEN col3 IS NOT NULL THEN col3 > 0 ELSE TRUE END)
      ) ON VIOLATION FAIL UPDATE
    ) AS
      SELECT * FROM new_source
      -- UNION ALL keeps duplicate rows, matching unionByName in the Python version
      UNION ALL
      SELECT *, NULL as col3 FROM legacy_source;
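The pattern has two parts: legacy rows gain a NULL col3 when the sources are combined, and the valid_col3 rule only checks col3 when it is present. The following plain-Python sketch walks through both steps on in-memory dictionaries. The names union_by_name and passes_expectations and the sample rows are hypothetical; they imitate the behavior described above and are not Spark APIs.

```python
def union_by_name(left_rows, right_rows):
    """Union two lists of dict rows, filling columns missing on either side with None."""
    all_rows = left_rows + right_rows
    columns = []
    for row in all_rows:
        for name in row:
            if name not in columns:
                columns.append(name)
    return [{name: row.get(name) for name in columns} for row in all_rows]


def passes_expectations(row):
    """Apply the required_columns and valid_col3 rules to one row."""
    required = row["col1"] is not None and row["col2"] is not None
    valid_col3 = row["col3"] > 0 if row["col3"] is not None else True
    return required and valid_col3


legacy = [{"col1": 1, "col2": "x"}]  # V1 schema, no col3
new = [
    {"col1": 2, "col2": "y", "col3": 5},
    {"col1": 3, "col2": "z", "col3": -1},  # violates valid_col3
]

combined = union_by_name(legacy, new)
results = [passes_expectations(row) for row in combined]
print(results)  # [True, True, False]
```

The legacy row passes because its missing col3 is treated as acceptable, while the new row with a negative col3 fails, which is the backward-compatible behavior the pattern aims for.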

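Range-based validation pattern

The following example shows how to validate new data points against historical statistical ranges, which helps identify outliers and anomalies in your data flow: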

Range-based validation using expectations in Delta Live Tables

Python

    import dlt

    @dlt.view
    def stats_validation_view():
        # Calculate statistical bounds from historical data
        bounds = spark.sql("""
            SELECT
              avg(amount) - 3 * stddev(amount) as lower_bound,
              avg(amount) + 3 * stddev(amount) as upper_bound
            FROM historical_stats
            WHERE
              date >= CURRENT_DATE() - INTERVAL 30 DAYS
        """)

        # Join with new data and apply bounds
        return spark.read.table("new_data").crossJoin(bounds)

    @dlt.table
    @dlt.expect_or_drop(
        "within_statistical_range",
        "amount BETWEEN lower_bound AND upper_bound"
    )
    def validated_amounts():
        return dlt.read("stats_validation_view")

SQL

    CREATE OR REFRESH MATERIALIZED VIEW stats_validation_view AS
      WITH bounds AS (
        SELECT
          avg(amount) - 3 * stddev(amount) as lower_bound,
          avg(amount) + 3 * stddev(amount) as upper_bound
        FROM historical_stats
        WHERE date >= CURRENT_DATE() - INTERVAL 30 DAYS
      )
      SELECT
        new_data.*,
        bounds.*
      FROM new_data
      CROSS JOIN bounds;

    CREATE OR REFRESH MATERIALIZED VIEW validated_amounts (
      -- DROP ROW matches expect_or_drop in the Python version
      CONSTRAINT within_statistical_range EXPECT (amount BETWEEN lower_bound AND upper_bound) ON VIOLATION DROP ROW
    )
    AS SELECT * FROM stats_validation_view;
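The bounds are the historical mean plus or minus three standard deviations. The following plain-Python sketch computes the same bounds with the standard library, which can help when choosing or sanity-checking the multiplier. The function name and sample values are hypothetical. statistics.stdev is the sample standard deviation, which is what Spark SQL's stddev also computes.

```python
from statistics import mean, stdev


def three_sigma_bounds(amounts):
    """Return (lower_bound, upper_bound) as mean -/+ 3 sample standard deviations."""
    center = mean(amounts)
    spread = stdev(amounts)
    return center - 3 * spread, center + 3 * spread


# Hypothetical historical amounts and incoming values.
historical = [10.0, 12.0, 11.0, 9.0, 13.0]
new_amounts = [11.5, 40.0]

lower_bound, upper_bound = three_sigma_bounds(historical)

# Keep only values inside the range, as the expectation does when dropping rows.
kept = [amount for amount in new_amounts if lower_bound <= amount <= upper_bound]
print(kept)  # [11.5]
```

With these sample values the bounds are roughly 6.26 and 15.74, so 40.0 is treated as an outlier and dropped.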

Quarantine invalid records

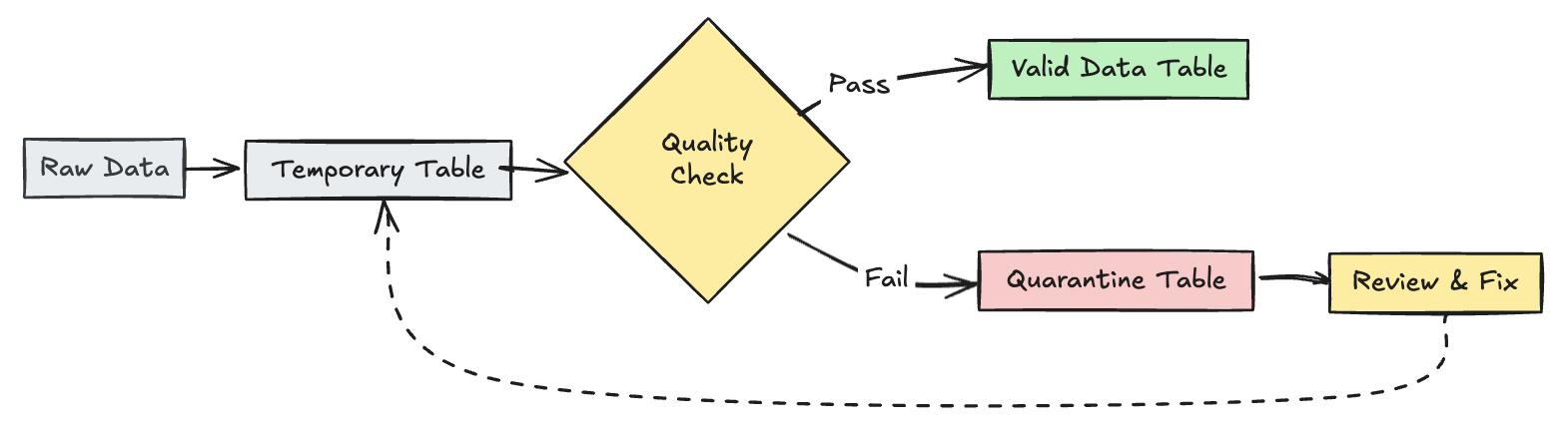

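This pattern combines expectations with temporary tables and views to track data quality metrics during pipeline updates, and to enable separate processing paths for valid and invalid records in downstream operations.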

Python

    import dlt
    from pyspark.sql.functions import expr

    rules = {
        "valid_pickup_zip": "(pickup_zip IS NOT NULL)",
        "valid_dropoff_zip": "(dropoff_zip IS NOT NULL)",
    }
    # A row is quarantined when it fails at least one rule
    quarantine_rules = "NOT({0})".format(" AND ".join(rules.values()))

    @dlt.view
    def raw_trips_data():
        return spark.readStream.table("samples.nyctaxi.trips")

    @dlt.table(
        temporary=True,
        partition_cols=["is_quarantined"],
    )
    @dlt.expect_all(rules)
    def trips_data_quarantine():
        return (
            dlt.readStream("raw_trips_data").withColumn("is_quarantined", expr(quarantine_rules))
        )

    @dlt.view
    def valid_trips_data():
        return dlt.read("trips_data_quarantine").filter("is_quarantined=false")

    @dlt.view
    def invalid_trips_data():
        return dlt.read("trips_data_quarantine").filter("is_quarantined=true")

SQL

    CREATE TEMPORARY STREAMING LIVE VIEW raw_trips_data AS
      SELECT * FROM STREAM(samples.nyctaxi.trips);

    CREATE OR REFRESH TEMPORARY STREAMING TABLE trips_data_quarantine(
      -- Option 1 - merge all expectations to have a single name in the pipeline event log
      -- A row is valid only when both ZIP codes are present, mirroring is_quarantined below
      CONSTRAINT quarantined_row EXPECT (pickup_zip IS NOT NULL AND dropoff_zip IS NOT NULL),
      -- Option 2 - Keep the expectations separate, resulting in multiple entries under different names
      CONSTRAINT invalid_pickup_zip EXPECT (pickup_zip IS NOT NULL),
      CONSTRAINT invalid_dropoff_zip EXPECT (dropoff_zip IS NOT NULL)
    )
    PARTITIONED BY (is_quarantined)
    AS
      SELECT
        *,
        NOT ((pickup_zip IS NOT NULL) and (dropoff_zip IS NOT NULL)) as is_quarantined
      FROM STREAM(raw_trips_data);

    CREATE TEMPORARY LIVE VIEW valid_trips_data AS
      SELECT * FROM trips_data_quarantine WHERE is_quarantined=FALSE;

    CREATE TEMPORARY LIVE VIEW invalid_trips_data AS
      SELECT * FROM trips_data_quarantine WHERE is_quarantined=TRUE;
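In the Python version of this pattern, the is_quarantined flag is derived from the rules dictionary by joining every constraint with AND and negating the result, so a row is quarantined as soon as it fails any rule. The following plain-Python sketch prints the generated expression and applies the equivalent logic to sample rows. The is_quarantined function and the sample rows are hypothetical and exist only to illustrate the expression outside a pipeline.

```python
rules = {
    "valid_pickup_zip": "(pickup_zip IS NOT NULL)",
    "valid_dropoff_zip": "(dropoff_zip IS NOT NULL)",
}

# Same construction as in the pipeline code: negate the conjunction of all rules.
quarantine_rules = "NOT({0})".format(" AND ".join(rules.values()))
print(quarantine_rules)
# NOT((pickup_zip IS NOT NULL) AND (dropoff_zip IS NOT NULL))


def is_quarantined(row):
    """Plain-Python equivalent of the generated SQL expression for these two rules."""
    return not (row["pickup_zip"] is not None and row["dropoff_zip"] is not None)


sample_rows = [
    {"pickup_zip": 10001, "dropoff_zip": 10002},
    {"pickup_zip": None, "dropoff_zip": 10002},
]
flags = [is_quarantined(row) for row in sample_rows]
print(flags)  # [False, True]
```

Adding a rule to the dictionary extends both the recorded expectations and the quarantine condition, because both are built from the same source.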