Appendix 1: HPC Cluster Networking

Microsoft HPC Pack supports five cluster topologies designed to meet a wide range of user needs and performance, scalability, manageability, and access requirements. These topologies are distinguished by how the nodes in the cluster are connected to each other and to the enterprise network. Depending on the network topology that you choose for your cluster, certain network services, such as Dynamic Host Configuration Protocol (DHCP) and network address translation (NAT), can be provided by the head node to the nodes.

You must choose the network topology that you will use for your cluster well in advance of setting up an HPC cluster.


HPC cluster networks

The following table lists and describes the networks to which an HPC cluster can be connected.

| Network name | Description |

|---|---|

| Enterprise network | An organizational network connected to the head node and, in some cases, to other nodes in the cluster. The enterprise network is often the public or organization network that most users log on to in order to perform their work. All intra-cluster management and deployment traffic is carried on the enterprise network unless a private network, and optionally an application network, also connects the cluster nodes. |

| Private network | A dedicated network that carries intra-cluster communication between nodes. If a private network exists, it carries management and deployment traffic, and it also carries application traffic when no application network exists. |

| Application network | A dedicated network, preferably with high bandwidth and low latency. This network is normally used for parallel Message Passing Interface (MPI) application communication between cluster nodes. |

Supported HPC cluster topologies

There are five cluster topologies supported by HPC Pack:

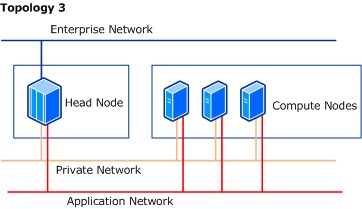

Topology 3: Compute nodes isolated on private and application networks

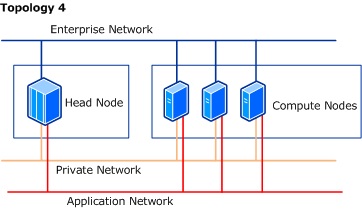

Topology 4: All nodes on enterprise, private, and application networks

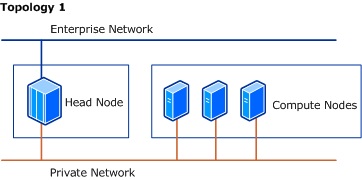

Topology 1: Compute nodes isolated on a private network

The following image illustrates how the head node and the compute nodes are connected to the cluster networks in this topology.

The following table lists and describes details about the different components in this topology.

| Component | Description |

|---|---|

| Network adapters | - The head node has two network adapters. - Each compute node has one network adapter. - The head node is connected to both an enterprise network and to a private network. - The compute nodes are connected only to the private network. |

| Traffic | - The private network carries all communication between the head node and the compute nodes, including deployment, management, and application traffic (for example, MPI communication). |

| Network services | - The default configuration for this topology is NAT-enabled on the private network to provide the compute nodes with address translation and access to services and resources on the enterprise network. - DHCP is enabled by default on the private network to assign IP addresses to compute nodes. - If a DHCP server is already installed on the private network, then both NAT and DHCP will be disabled by default. |

| Security | - The default configuration on the cluster has the firewall turned ON for the enterprise network and turned OFF for the private network. |

| Considerations when selecting this topology | - Cluster performance is more consistent because intra-cluster communication is routed onto the private network. - Network traffic between compute nodes and resources on the enterprise network (such as databases and file servers) passes through the head node. Depending on the amount of traffic, this might reduce cluster performance. - Compute nodes are not directly accessible by users on the enterprise network. This has implications when developing and debugging parallel applications for use on the cluster. - If you want to add workstation nodes to the cluster, and those nodes are connected to the enterprise network, communication between workstation nodes and compute nodes is not possible. |

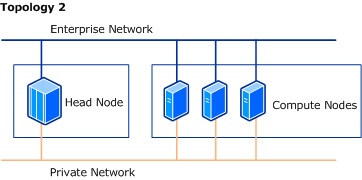

Topology 2: All nodes on enterprise and private networks

The following image illustrates how the head node and the compute nodes are connected to the cluster networks in this topology.

The following table lists and describes details about the different components in this topology.

| Component | Description |

|---|---|

| Network adapters | - The head node has two network adapters. - Each additional cluster node has two network adapters. - All nodes in the cluster are connected to both the enterprise network and to a dedicated private cluster network. |

| Traffic | - Communication between nodes, including deployment, management, and application traffic, is carried on the private network. - Traffic from the enterprise network can be routed directly to a compute node. |

| Network services | - The default configuration for this topology has DHCP enabled on the private network, to provide IP addresses to the compute nodes. - NAT is not required in this topology because the compute nodes are connected to the enterprise network, so this option is disabled by default. |

| Security | - The default configuration on the cluster has the firewall turned ON for the enterprise network and turned OFF for the private network. |

| Considerations when selecting this topology | - This topology offers more consistent cluster performance because intra-cluster communication is routed onto a private network. - This topology is well suited for developing and debugging applications because all compute nodes are connected to the enterprise network. - This topology provides easy access to compute nodes by users on the enterprise network. - This topology provides compute nodes with direct access to enterprise network resources. |

Topology 3: Compute nodes isolated on private and application networks

The following image illustrates how the head node and the compute nodes are connected to the cluster networks in this topology.

The following table lists and describes details about the different components in this topology.

| Component | Description |

|---|---|

| Network adapters | - The head node has three network adapters: one for the enterprise network, one for the private network, and a high-speed adapter that is connected to the application network for high performance. - Each compute node has two network adapters, one for the private network and another for the application network. |

| Traffic | - The private network carries deployment and management communication between the head node and the compute nodes. - MPI jobs running on the cluster use the high-performance application network for cross-node communication. |

| Network services | - The default configuration for this topology has both DHCP and NAT enabled for the private network, to provide IP addressing and address translation for compute nodes. DHCP is enabled by default on the application network, but not NAT. - If a DHCP server is already installed on the private network, then both NAT and DHCP will be disabled by default. |

| Security | - The default configuration on the cluster has the firewall turned ON for the enterprise network and turned OFF on the private and application networks. |

| Considerations when selecting this topology | - This topology offers more consistent cluster performance because intra-cluster communication is routed onto the private network, while application communication is routed on a separate, isolated network. - Compute nodes are not directly accessible by users on the enterprise network in this topology. This has implications when developing and debugging parallel applications for use on the cluster. - If you want to add workstation nodes to the cluster, and those nodes are connected to the enterprise network, communication between workstation nodes and compute nodes is not possible. |

Topology 4: All nodes on enterprise, private, and application networks

The following image illustrates how the head node and the compute nodes are connected to the cluster networks in this topology.

The following table lists and describes details about the different components in this topology.

| Component | Description |

|---|---|

| Network adapters | - The head node and each compute node have three network adapters: one for the enterprise network, one for the private network, and a high-speed adapter that is connected to the application network for high performance. |

| Traffic | - The private cluster network carries only deployment and management traffic. - The application network carries latency-sensitive traffic, such as MPI communication between nodes. - Network traffic from the enterprise network reaches the compute nodes directly. |

| Network services | - The default configuration for this topology has DHCP enabled for the private and application networks to provide IP addresses to the compute nodes on both networks. - NAT is disabled for the private and application networks because the compute nodes are connected to the enterprise network. |

| Security | - The default configuration on the cluster has the firewall turned ON for the enterprise network and turned OFF on the private and application networks. |

| Considerations when selecting this topology | - This topology offers more consistent cluster performance because intra-cluster communication is routed onto a private network, while application communication is routed on a separate, isolated network. - This topology is well suited for developing and debugging applications because all cluster nodes are connected to the enterprise network. - This topology provides users on the enterprise network with direct access to compute nodes. - This topology provides compute nodes with direct access to enterprise network resources. |

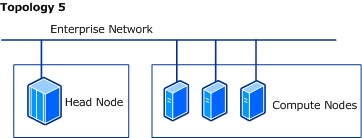

Topology 5: All nodes only on an enterprise network

The following image illustrates how the head node and the compute nodes are connected to the cluster networks in this topology.

The following table lists and describes details about the different components in this topology.

| Component | Description |

|---|---|

| Network adapters | - The head node has one network adapter. - All compute nodes have one network adapter. - All nodes are on the enterprise network. |

| Traffic | - All traffic, including intra-cluster, application, and enterprise traffic, is carried over the enterprise network. This maximizes access to the compute nodes by users and developers on the enterprise network. |

| Network services | - This topology does not require NAT or DHCP because the compute nodes are connected to the enterprise network. |

| Security | - The default configuration on the cluster has the firewall turned ON for the enterprise network. |

| Considerations when selecting this topology | - This topology provides users on the enterprise network with direct access to compute nodes. - Access to resources on the enterprise network by individual compute nodes is faster. - This topology, like topologies 2 and 4, is well suited for developing and debugging applications because all cluster nodes are connected to the enterprise network. - This topology provides compute nodes with direct access to enterprise network resources. - Because all nodes are connected only to the enterprise network, you cannot use the deployment tools in HPC Pack to deploy nodes from bare metal or over iSCSI. - This topology is recommended for adding workstation nodes (which are usually already connected to the enterprise network), because the workstation nodes are able to communicate with all other types of nodes in the cluster. |
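The five topology tables above can be condensed into a small lookup structure, which may help when comparing options during planning. The following Python sketch is illustrative only: the `Topology` class, its field names, and the `matching_topologies` helper are hypothetical and are not part of HPC Pack. The values are transcribed from the tables in this appendix.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Topology:
    networks: tuple              # cluster networks used by this topology
    head_adapters: int           # network adapters on the head node
    compute_adapters: int        # network adapters on each compute node
    compute_on_enterprise: bool  # compute nodes reachable from the enterprise network
    nat_default: bool            # head node NAT enabled by default
    dhcp_default: tuple          # networks where head node DHCP is on by default


# Values transcribed from the topology tables in this appendix.
TOPOLOGIES = {
    1: Topology(("enterprise", "private"), 2, 1, False, True, ("private",)),
    2: Topology(("enterprise", "private"), 2, 2, True, False, ("private",)),
    3: Topology(("enterprise", "private", "application"), 3, 2, False, True,
                ("private", "application")),
    4: Topology(("enterprise", "private", "application"), 3, 3, True, False,
                ("private", "application")),
    5: Topology(("enterprise",), 1, 1, True, False, ()),
}


def matching_topologies(direct_access, application_network):
    """Return the topology numbers that satisfy both yes/no requirements."""
    return [
        number
        for number, t in TOPOLOGIES.items()
        if t.compute_on_enterprise == direct_access
        and ("application" in t.networks) == application_network
    ]


# Example: users need direct access to compute nodes, and MPI traffic
# should run on a dedicated application network.
print(matching_topologies(direct_access=True, application_network=True))
```

A lookup like this only narrows the choice. The considerations listed for each topology, such as bare-metal deployment or workstation nodes, still need to be reviewed by hand.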

Internet Protocol version support

To support HPC networking, network adapters on the cluster nodes must be enabled for IPv4. Both IPv4-only and dual-stack (IPv4 and IPv6) configurations are supported. Network adapters cannot be enabled only for IPv6.
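As a rough illustration of this rule, the following Python sketch checks a list of addresses bound to an adapter and reports whether the adapter meets the IPv4 requirement. The function name `adapter_is_supported` is hypothetical, and treating "has an IPv4 address bound" as equivalent to "enabled for IPv4" is a simplifying assumption.

```python
import ipaddress


def adapter_is_supported(addresses):
    """Return True if at least one address on the adapter is IPv4.

    IPv4-only and dual-stack adapters pass; IPv6-only adapters do not.
    """
    versions = {ipaddress.ip_address(a).version for a in addresses}
    return 4 in versions


print(adapter_is_supported(["10.0.0.5"]))             # IPv4 only
print(adapter_is_supported(["10.0.0.5", "fe80::1"]))  # dual stack
print(adapter_is_supported(["fe80::1"]))              # IPv6 only
```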

HPC network services

Depending on the network topology that you have chosen for your HPC cluster, network address translation (NAT) or Dynamic Host Configuration Protocol (DHCP) can be provided by the head node to the nodes connected to the different cluster networks.

Network address translation (NAT)

Network address translation (NAT) provides a method for translating IPv4 addresses of computers on one network into IPv4 addresses of computers on a different network.

Enabling NAT on the head node enables nodes on the private or application networks to access resources on the enterprise network. You do not need to enable NAT if you have another server providing NAT or routing services on the private or application networks. Also, you do not need NAT if all nodes are connected to the enterprise network.

DHCP server

A DHCP server assigns IP addresses to network clients. Depending on the detected configuration of your HPC cluster and the network topology that you choose for your cluster, the nodes will receive IP addresses from the head node running DHCP, from a dedicated DHCP server on the private network, or via DHCP services coming from a server on the enterprise network.

Note

If you enable DHCP server on an HPC Pack 2012 head node, the DHCP management tools are not installed by default. If you need the DHCP management tools, you can install them on the head node by using the Add Roles and Features Wizard GUI or a command-line tool. For more information, see Install or Uninstall Roles, Role Services, or Features.

Windows Firewall configuration

When HPC Pack is installed on a node in the cluster, by default two groups of HPC-specific Windows Firewall rules are configured and enabled:

HPC Private Rules that enable the Windows HPC cluster services on the head node and the cluster nodes to communicate with each other.

HPC Public Rules that enable external clients to communicate with Windows HPC cluster services.

Depending on the role of a node in the cluster (for example, head node versus compute node), the rules that make up the HPC Private and the HPC Public groups will differ.

To further manage firewall settings, you can configure whether HPC Pack includes or excludes individual network adapters from Windows Firewall on the cluster nodes. If a network adapter is excluded from Windows Firewall, communication to and from the node is completely open through that adapter, independent of the Windows Firewall rules that are enabled or disabled on the node.

The Firewall Setup page in the Network Configuration Wizard helps you to specify which network adapters will be included or excluded from Windows Firewall, based on the HPC network to which those network adapters are connected. You can also specify that you do not want HPC Pack to manage network adapter exclusions at all.

Note

If you let HPC Pack manage network adapter exclusions for you, HPC Pack constantly monitors the network adapter exclusions on the nodes and attempts to restore them to the settings that you have selected.

If you configure HPC Pack to manage network adapter exclusions, you select one of the following settings for each cluster network.

| Firewall selection | Description |

|---|---|

| ON | Do not exclude from Windows Firewall the network adapter that is connected to that HPC network. Affects all nodes (except for workstation nodes) on that network. |

| OFF | Exclude from Windows Firewall the network adapter that is connected to that HPC network. Affects all nodes (except for workstation nodes) on that network. |

Caution

You must use a Windows Firewall configuration that complies with the security policies of your organization.
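The ON and OFF selections can be easy to misread, because OFF means that the adapter is excluded from Windows Firewall and is therefore unprotected. The following Python sketch models that mapping. The dictionary and function names are hypothetical, and the default values are taken from the Security rows of the topology tables in this appendix.

```python
# Default selections described in the topology tables:
# firewall ON for the enterprise network, OFF for private and application.
DEFAULT_FIREWALL_SELECTION = {
    "enterprise": "ON",
    "private": "OFF",
    "application": "OFF",
}


def excluded_networks(selection):
    """Return the networks whose adapters are excluded from Windows Firewall."""
    invalid = set(selection.values()) - {"ON", "OFF"}
    if invalid:
        raise ValueError(f"unknown firewall selection(s): {sorted(invalid)}")
    # OFF means "exclude the adapter", so traffic on that network is unfiltered.
    return sorted(net for net, state in selection.items() if state == "OFF")


print(excluded_networks(DEFAULT_FIREWALL_SELECTION))
```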

Additional considerations

When you use the Network Configuration Wizard to make changes to the firewall settings, these changes are propagated to all existing nodes in the cluster (except for workstation nodes and unmanaged server nodes), but it can take up to 5 minutes for the changes to take effect on the nodes.

If an HPC cluster includes a private or an application network, the default selection is to create network adapter exclusions on those networks (that is, the default setting for those HPC networks is OFF). This provides the best performance and manageability experience. If you are using private and application networks, and intra-node security is important to you, isolate the private and application networks behind the head node.

The administrative tools in Windows Firewall with Advanced Security or the Group Policy settings in Active Directory can override some firewall settings that are configured by HPC Pack. This can adversely affect the functionality of your Windows HPC cluster.

If you uninstall HPC Pack on the head node, Windows Firewall will by default be enabled (or disabled) on the connections to the cluster networks according to the way you configured Windows Firewall for the HPC cluster networks. If you need to, you can manually reconfigure the protected connections in Windows Firewall after uninstalling HPC Pack.

If you have applications that require access to the head node or to the cluster nodes on specific ports, you will have to manually configure the rules for those applications in Windows Firewall.

You can use the hpcfwutil command-line tool to detect, clear, or repair HPC-specific Windows Firewall rules in your Windows HPC cluster. For more information, see hpcfwutil.

Firewall ports that are required for cluster services

The following table summarizes the firewall ports that are required by the services on the HPC cluster nodes. By default these ports are opened in Windows Firewall during the installation of HPC Pack. (See details in the tables of Windows Firewall rules that follow this table.)

Note

If you use a non-Microsoft firewall, configure the necessary ports and rules manually for proper operation of the HPC cluster.

| Port number | Protocol | Direction | Used for |

|---|---|---|---|

| 80 | TCP | Inbound | Any |

| 443 | TCP | Inbound | Any |

| 1856 | TCP | Inbound | HpcNodeManager.exe |

| 5800, 5801, 5802, 5969, 5970 | TCP | Inbound | HpcScheduler.exe |

| 5974 | TCP | Inbound | HpcDiagnostics.exe |

| 5999 | TCP | Inbound | HpcScheduler.exe |

| 6729, 6730 | TCP | Inbound | HpcManagement.exe |

| 7997 Note: This port is configured starting in HPC Pack 2008 R2 Service Pack 1. | TCP | Inbound | HpcScheduler.exe |

| 8677 | TCP | Inbound | Msmpisvc.exe |

| 9087 | TCP | Inbound | HpcBroker.exe |

| 9090 | TCP | Inbound | HpcSession.exe |

| 9091 | TCP | Inbound | SMSvcHost.exe |

| 9092 | TCP | Inbound | HpcSession.exe |

| 9094 Note: This port is configured starting in HPC Pack 2012. | TCP | Inbound | HpcSession.exe |

| 9095 Note: This port is configured starting in HPC Pack 2012. | TCP | Inbound | HpcBroker.exe |

| 9096 Note: This port is configured starting in HPC Pack 2012. | TCP | Inbound | HpcSoaDiagMon.exe |

| 9100 - 9163 Note: These ports are not configured in HPC Pack 2012. | TCP | Inbound | HpcServiceHost.exe, HpcServiceHost32.exe |

| 9200 - 9263 Note: These ports are not configured in HPC Pack 2012. | TCP | Inbound | HpcServiceHost.exe, HpcServiceHost32.exe |

| 9794 | TCP | Inbound | HpcManagement.exe |

| 9892, 9893 | TCP, UDP Note: These ports are configured only for TCP starting in HPC Pack 2012. | Inbound | HpcSdm.exe |

| 9894 Note: This port is configured starting in HPC Pack 2012. | TCP, UDP | Inbound | HpcMonitoringServer.exe |
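When you troubleshoot a non-Microsoft firewall, it can help to probe the required TCP ports from another computer. The following Python sketch attempts a TCP connection to each port in a list. The port selection is a subset of the table above chosen for illustration, the function names are hypothetical, and the host name in the usage note is a placeholder. A failed connection can also mean that the service is not running or is not installed on that node role, so treat the result as a hint rather than proof of a firewall problem.

```python
import socket

# Subset of the inbound TCP ports from the table above (illustrative only).
HEAD_NODE_TCP_PORTS = {
    1856: "HpcNodeManager.exe",
    5800: "HpcScheduler.exe",
    5969: "HpcScheduler.exe",
    5974: "HpcDiagnostics.exe",
    6729: "HpcManagement.exe",
    8677: "Msmpisvc.exe",
    9087: "HpcBroker.exe",
    9090: "HpcSession.exe",
    9794: "HpcManagement.exe",
}


def tcp_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def closed_ports(host, ports, timeout=1.0):
    """Return the ports in `ports` that did not accept a connection."""
    return [p for p in ports if not tcp_port_open(host, p, timeout)]
```

For example, `closed_ports("headnode.example.local", HEAD_NODE_TCP_PORTS)` would return the ports from the subset that could not be reached on a head node with that placeholder name.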

Windows Firewall rules configured by Microsoft HPC Pack

The Windows Firewall rules in the following two tables are configured during the installation of HPC Pack. Not all rules are configured on all cluster nodes.

Note

In the following tables, “workstation nodes” refers to both workstation nodes and unmanaged server nodes. Unmanaged server nodes can be configured starting in HPC Pack 2008 R2 Service Pack 3 (SP3).

HPC private rules

| Rule name | Direction | Cluster node | Used for | Protocol | Local port |

|---|---|---|---|---|---|

| HPC Deployment Server (TCP-In) | Inbound | Head node | HpcManagement.exe | TCP | 9794 |

| HPC Job Scheduler Service (TCP-In, private) | Inbound | Head node | HpcScheduler.exe | TCP | 5970 |

| HPC MPI clock sync (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | Mpisync.exe | TCP | Any |

| HPC MPI Etl to clog conversion (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | Etl2clog.exe | TCP | Any |

| HPC MPI Etl to OTF conversion (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | Etl2otf.exe | TCP | Any |

| HPC MPI PingPong Diagnostic (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | Mpipingpong.exe | TCP | Any |

| HPC MPI Service (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | Msmpisvc.exe | TCP | 8677 |

| HPC Node Manager Service (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcNodeManager.exe | TCP | 1856 |

| HPC Session (TCP-In, private) | Inbound | Head node | HpcSession.exe | TCP | 9092 |

| HPC SMPD (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcSmpd.exe | TCP | Any |

| HPC Application Integration port sharing (TCP-Out) | Outbound | Head node, broker node | SMSvcHost.exe | TCP | Any |

| HPC MPI clock sync (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | Mpisync.exe | TCP | Any |

| HPC MPI Etl to clog conversion (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | Etl2clog.exe | TCP | Any |

| HPC MPI Etl to OTF conversion (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | Etl2otf.exe | TCP | Any |

| HPC MPI PingPong Diagnostic (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | Mpipingpong.exe | TCP | Any |

| HPC Mpiexec (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | Mpiexec.exe | TCP | Any |

| HPC Node Manager Service (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | HpcNodeManager.exe | TCP | Any |

HPC public rules

| Rule name | Direction | Cluster node | Used for | Protocol | Local port |

|---|---|---|---|---|---|

| HPC Application Integration port sharing (TCP-In) | Inbound | Head node, broker node | SMSvcHost.exe | TCP | 9091 |

| HPC Broker (TCP-In) | Inbound | Head node, broker node | HpcBroker.exe | TCP | 9087, 9095 |

| HPC Broker Worker (HTTP-In) Note: This rule is configured starting in HPC Pack 2008 R2 with Service Pack 1. | Inbound | Head node, broker node | Any | TCP | 80, 443 |

| HPC Diagnostic Service (TCP-In) | Inbound | Head node | HpcDiagnostics.exe | TCP | 5974 |

| HPC File Staging Proxy Service (TCP-In) Note: This rule is configured starting in HPC Pack 2008 R2 with Service Pack 1. | Inbound | Head node | HpcScheduler.exe | TCP | 7997 |

| HPC File Staging Worker Service (TCP-In) Note: This rule is configured starting in HPC Pack 2008 R2 with Service Pack 1. | Inbound | Head node, broker node, compute node, workstation node | HpcNodeManager.exe | TCP | 7998 |

| HPC Host (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcServiceHost.exe | TCP | - HPC Pack 2012: any - HPC Pack 2008 R2: 9100 - 9163 |

| HPC Host for controller (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcServiceHost.exe | TCP | - HPC Pack 2012: any - HPC Pack 2008 R2: 9200 - 9263 |

| HPC Host x32 (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcServiceHost32.exe | TCP | - HPC Pack 2012: any - HPC Pack 2008 R2: 9100 - 9163 |

| HPC Host x32 for controller (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcServiceHost32.exe | TCP | - HPC Pack 2012: any - HPC Pack 2008 R2: 9200 - 9263 |

| HPC Job Scheduler Service (TCP-In) | Inbound | Head node | HpcScheduler.exe | TCP | 5800, 5801, 5969, 5999 |

| HPC Management Service (TCP-In) | Inbound | Head node, broker node, compute node, workstation node | HpcManagement.exe | TCP | 6729, 6730 |

| HPC Monitoring Server Service (TCP-In) Note: This rule is configured starting in HPC Pack 2012. | Inbound | Head node, broker node, compute node, workstation node | HpcMonitoringServer.exe | TCP | 9894 |

| HPC Monitoring Server (UDP-In) Note: This rule is configured starting in HPC Pack 2012. | Inbound | Head node | HpcMonitoringServer.exe | UDP | 9894 |

| HPC Reporting Database (In) | Inbound | Head node | Sqlservr.exe | Any | Any |

| HPC SDM Store Service (TCP-In) | Inbound | Head node | HpcSdm.exe | TCP | 9892, 9893 |

| HPC SDM Store Service (UDP-In) Note: This rule is not configured by HPC Pack 2012. | Inbound | Head node | HpcSdm.exe | UDP | 9893 |

| HPC Session (HTTPS-In) | Inbound | Head node | Any | TCP | 443 |

| HPC Session (TCP-In) | Inbound | Head node | HpcSession.exe | TCP | 9090, 9094 |

| HPC SOA Diag Monitor Service (TCP-in) Note: This rule is configured starting in HPC Pack 2012. | Inbound | Head node | HpcSoaDiagMon.exe | TCP | 9096 |

| SQL Browser (In) | Inbound | Head node | Sqlbrowser.exe | Any | Any |

| HPC Broker (Out) | Outbound | Head node, broker node | HpcBroker.exe | Any | Any |

| HPC Broker Worker (TCP-Out) | Outbound | Head node, broker node | HpcBrokerWorker.exe | TCP | Any |

| HPC Job Scheduler Service (TCP-Out) | Outbound | Head node | HpcScheduler.exe | TCP | Any |

| HPC Management Service (TCP-Out) | Outbound | Head node, broker node, compute node, workstation node | HpcManagement.exe | TCP | Any |

| HPC Session (Out) | Outbound | Head node | HpcSession.exe | Any | Any |

| HPC SOA Diag Mon Service (TCP-Out) Note: This rule is configured starting in HPC Pack 2012. | Outbound | Head node, broker node, compute node, workstation node | HpcSoaDiagMon.exe | TCP | Any |

Firewall ports used for communication with Windows Azure nodes

The following table lists the ports in any internal or external firewalls that must be open by default for the deployment and operation of Windows Azure nodes by using HPC Pack 2008 R2 with Service Pack 3 or Service Pack 4, or HPC Pack 2012.

| Protocol | Direction | Port | Purpose |

|---|---|---|---|

| TCP | Outbound | 443 | HTTPS: node deployment, communication with Windows Azure storage, communication with REST management APIs. Non-HTTPS TCP traffic: service-oriented architecture (SOA) services, file staging, job scheduling. |

| TCP | Outbound | 3389 | RDP - Remote desktop connections to the nodes |

The following table lists the ports in any internal or external firewalls that must be open for the deployment and operation of Windows Azure nodes by using HPC Pack 2008 R2 with Service Pack 1 or Service Pack 2.

| Protocol | Direction | Port | Purpose |

|---|---|---|---|

| TCP | Outbound | 80 | HTTP - Node deployment |

| TCP | Outbound | 443 | HTTPS - Node deployment - Communication with Windows Azure storage - Communication with REST management APIs |

| TCP | Outbound | 3389 | RDP - Remote desktop connections to the nodes |

| TCP | Outbound | 5901 | Service-oriented architecture (SOA) services - SOA broker |

| TCP | Outbound | 5902 | SOA services - SOA proxy control |

| TCP | Outbound | 7998 | File staging |

| TCP | Outbound | 7999 | Job scheduling |
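The two tables above differ only by HPC Pack release, so a firewall checklist can be derived from the release in use. The following Python sketch is a minimal illustration. The release labels such as `"2008 R2 SP3"` and the function name are hypothetical conventions chosen for this example, and the port lists are transcribed from the tables.

```python
# Outbound TCP ports from the two tables above.
PORTS_SP3_AND_LATER = [443, 3389]
PORTS_SP1_SP2 = [80, 443, 3389, 5901, 5902, 7998, 7999]

# Hypothetical release labels used only by this sketch.
_RELEASE_PORTS = {
    "2008 R2 SP1": PORTS_SP1_SP2,
    "2008 R2 SP2": PORTS_SP1_SP2,
    "2008 R2 SP3": PORTS_SP3_AND_LATER,
    "2008 R2 SP4": PORTS_SP3_AND_LATER,
    "2012": PORTS_SP3_AND_LATER,
}


def azure_outbound_ports(release):
    """Return the outbound TCP ports needed for Windows Azure nodes."""
    try:
        return list(_RELEASE_PORTS[release])
    except KeyError:
        raise ValueError(f"unknown HPC Pack release label: {release!r}")


print(azure_outbound_ports("2012"))
print(azure_outbound_ports("2008 R2 SP2"))
```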

Registry setting to revert to earlier Windows Azure firewall port configuration

If your cluster is running at least HPC Pack 2008 R2 with SP3, you can configure the head node to communicate with Windows Azure by using the network firewall ports that are required for HPC Pack 2008 R2 with SP1 or SP2 instead of the default port 443. To change the required ports, you configure a registry setting under HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\HPC. Configure the DWORD value named WindowsAzurePortsSetting, and set its data to zero (0). After configuring this registry setting, you must restart the head node to restart the HPC services. You must also ensure that any internal or external firewall ports are configured properly, and redeploy any Windows Azure nodes that were deployed by using the port settings that were previously configured.

Caution

Incorrectly editing the registry may severely damage your system. Before making changes to the registry, you should back up any valued data on the computer.
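One cautious way to apply the registry setting described above is to generate a registration (.reg) file that an administrator can review before importing it. The following Python sketch only builds the file text and does not touch the registry. The function name `build_reg_text` is hypothetical. The key path and value name come from this section, and the layout follows the standard Windows Registry Editor version 5.00 file format.

```python
def build_reg_text(value=0):
    """Build .reg file text that sets WindowsAzurePortsSetting to `value`."""
    if not 0 <= value <= 0xFFFFFFFF:
        raise ValueError("a DWORD must be between 0 and 0xFFFFFFFF")
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        r"[HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\HPC]",
        # DWORD data is written as eight hexadecimal digits.
        f'"WindowsAzurePortsSetting"=dword:{value:08x}',
        "",
    ]
    return "\r\n".join(lines)


print(build_reg_text(0))
```

After reviewing the generated text, you could save it to a file and import it on the head node with administrative rights, and then restart the head node as described above.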