Create a connector AI action (preview)

[This topic is prerelease documentation and is subject to change.]

You can create new certified Power Platform connectors, or extend existing ones, with AI action capability so that users can add them to Microsoft 365 Chat. When users work in Microsoft 365 Copilot, these AI actions help them connect to and interact with data sources through AI.

The actions in this article were formerly known as plugins. You can use a newly created action directly in an agent. Your existing actions were auto-promoted to agents.

Important

- This is a preview feature.

- Preview features aren’t meant for production use and may have restricted functionality. These features are available before an official release so that customers can get early access and provide feedback.

In this article, you learn how to enable AI action capability in connectors, follow best practices, and apply general recommendations for enabling your connectors as AI actions in Microsoft 365 Chat.

Before you begin

Before you start creating new connectors or extending existing connectors with the AI action capability, ensure you understand the design principles and the user audience. Also make sure you know the system's capabilities.

Understand the AI action user

As a connector-based AI action developer or maker, you should know the persona that will be invoking action operations. Depending on the persona, you should select appropriate connector actions to be enabled as AI actions.

Note

The connectors' audience is generally makers and developers; however, connector AI actions need to be consumable by line of business workers.

Enable only appropriate connector actions

Once you understand the user persona, consider what information users are likely to have when they type their requests in Copilot. What they provide is relayed when the corresponding action operation is invoked. Therefore, select only those actions for which the Copilot user can provide the required inputs.

For examples, go to Supported queries for certified connectors.

Connector certification

Any connector with Copilot AI action extensibility must be published as a certified connector before users can use the AI action through Microsoft 365 Copilot. You can't use custom connectors as AI actions at this time; certification is a mandatory requirement for AI action publishing.

To learn more about connector certification, go to Get your connector certified.

Create or extend connectors with AI action capability

Providing AI action capability in a connector and making it available for users to use as an AI action within Microsoft 365 Copilot (such as through a Microsoft 365 Chat experience) includes the following steps:

- Create a new connector, or edit an existing connector by adding Copilot capability. More information: Extend Microsoft 365 Copilot or Copilot agents with connector actions (preview)

- Certify the new or edited connector with the Copilot capability. More information: Get your connector certified

Supported and unsupported capabilities

Because AI orchestrator and AI action capabilities are part of a fast-evolving landscape, we expect some limitations at first that will be overcome over time. However, it's critical to know what is supported and what isn't.

Read-only connector action is supported as an AI action operation

At this point, only queries (read-only) scenarios are supported. These are typically exposed by GET methods in REST API swagger definitions.
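As a rough illustration of this constraint, the following Python sketch scans a Swagger (OpenAPI 2.0) definition and lists the operations exposed through GET, which are the usual candidates for AI action operations. The sample definition and the helper name `list_read_only_operations` are made up for this example, and treating every GET operation as read-only is a simplifying assumption that you should confirm against your own API.

```python
from typing import Any

# Hypothetical, trimmed-down Swagger 2.0 definition used only for illustration.
SAMPLE_SWAGGER: dict[str, Any] = {
    "swagger": "2.0",
    "paths": {
        "/tickets": {
            "get": {"operationId": "ListTickets", "summary": "List tickets"},
            "post": {"operationId": "CreateTicket", "summary": "Create a ticket"},
        },
        "/tickets/{id}": {
            "get": {"operationId": "GetTicket", "summary": "Get a ticket"},
            "delete": {"operationId": "DeleteTicket", "summary": "Delete a ticket"},
        },
    },
}


def list_read_only_operations(swagger: dict[str, Any]) -> list[str]:
    """Return the operationIds of all GET operations in a Swagger definition."""
    operation_ids = []
    for path_item in swagger.get("paths", {}).values():
        get_operation = path_item.get("get")
        if get_operation and "operationId" in get_operation:
            operation_ids.append(get_operation["operationId"])
    return operation_ids


print(list_read_only_operations(SAMPLE_SWAGGER))
```

The POST and DELETE operations are skipped, which leaves only the query-style operations to consider for enablement.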

Atomic connector action is supported as an AI action operation

Only atomic connector actions (single underlying API action) can be invoked as part of AI action invocation currently.

Multi-step orchestration across the connector actions is limited

This is an issue when you would like to combine data across connector actions. Let's consider a scenario where you have two API paths that are exposed as two distinct connector actions.

- ListTickets: API that returns multiple ticket records scoped to the user making the request, with the following fields: Ticket_Id, Ticket_Title, Ticket_Priority, and Ticket_Status.

- DisplayTicketDetailsByTicketId: API that returns comprehensive ticket data, including Ticket_Id, Ticket_Title, Ticket_Priority, Ticket_Status, Ticket_Assigned_To, Ticket_Opened_By, Ticket_Expected_Close_Date, Ticket_Close_Date, and Ticket_Open_Date, among other details.

When a user issues a query in Copilot, Show me details of ticket System Send Error, the orchestrator today isn't always able to orchestrate a plan across two API actions: 1) List tickets to retrieve tickets and to identify the Ticket_Id of the ticket by matching the name "System Send Error", and 2) Invoke ticket details action based on the Id from Step 1.

In this case, either the user must first ask the AI action to list tickets, find the ticket ID, and then ask for the details of that ticket, or the API developer must create a new API that displays ticket details based on the ticket title. This API can then be exposed as a new connector action and enabled as an action operation. We strongly encourage API developers to expose APIs that can be directly consumed by Copilot users.
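The second option can be sketched as follows. This is a minimal Python illustration, not the connector's actual implementation: the in-memory data, the function names, and the exact-match rule on the title are all assumptions. The point is that a single API performs both steps, so the orchestrator only needs to invoke one atomic action.

```python
from typing import Optional

# Hypothetical in-memory data standing in for the two API actions described above.
TICKETS = [
    {"Ticket_Id": 101, "Ticket_Title": "System Send Error",
     "Ticket_Priority": "High", "Ticket_Status": "Open"},
    {"Ticket_Id": 102, "Ticket_Title": "Login Timeout",
     "Ticket_Priority": "Low", "Ticket_Status": "Closed"},
]
TICKET_DETAILS = {
    101: {"Ticket_Id": 101, "Ticket_Title": "System Send Error",
          "Ticket_Assigned_To": "Assignee A"},
    102: {"Ticket_Id": 102, "Ticket_Title": "Login Timeout",
          "Ticket_Assigned_To": "Assignee B"},
}


def list_tickets() -> list[dict]:
    """Stand-in for the list action: returns summary records."""
    return TICKETS


def display_ticket_details_by_ticket_id(ticket_id: int) -> dict:
    """Stand-in for the details action: returns the full record for one ID."""
    return TICKET_DETAILS[ticket_id]


def display_ticket_details_by_title(title: str) -> Optional[dict]:
    """Hypothetical combined API: resolve the title to an ID, then fetch details."""
    wanted = title.strip().lower()
    for ticket in list_tickets():
        if ticket["Ticket_Title"].lower() == wanted:
            return display_ticket_details_by_ticket_id(ticket["Ticket_Id"])
    return None  # No ticket with that title


print(display_ticket_details_by_title("System Send Error"))
```

A real API would also need to decide what to do when several tickets share a title, which this sketch ignores by returning the first match.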

Limitations of Copilot to guess required inputs

Don't assume that Copilot can guess required inputs. When you think about user prompts, expect that required inputs need to be part of the user's instruction to Copilot.

There are limited scenarios where LLMs (large language models) are able to guess an input, but this is the exception rather than the rule. As an example, consider a weather connector that requires two inputs: (1) Location and (2) Unit of Temperature. When the user asked, Show me the weather in Seattle, WA, and didn't provide the unit of measure, Copilot added Fahrenheit as the unit of measure when invoking the AI action. It's possible that Copilot assumed that, because the user asked about a location in the United States where Fahrenheit is the commonly used temperature unit, the Fahrenheit measurement should be used.

However, in some scenarios Copilot was unable to guess the input or guessed it incorrectly. Robust testing against Copilot is highly recommended to ensure the behavior is as expected.
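When you draft test prompts, it can help to check them against the required inputs of the action first. The following Python sketch assumes you've already written down, by hand, which inputs a given prompt supplies; the parameter names `location` and `unit` are hypothetical stand-ins for the weather example above.

```python
def find_missing_inputs(required: list[str], provided: dict[str, str]) -> list[str]:
    """Return the required input names that the prompt did not supply."""
    return [name for name in required if not provided.get(name, "").strip()]


# Hypothetical weather action with the two required inputs from the example above.
REQUIRED_INPUTS = ["location", "unit"]

# Inputs present in "Show me the weather in Seattle, WA": no unit was given.
provided_inputs = {"location": "Seattle, WA"}

print(find_missing_inputs(REQUIRED_INPUTS, provided_inputs))
```

Any name in the result is an input that Copilot would have to guess, so the prompt is a good candidate for extra testing or rewording.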

Limitations for reasoning over response from connector

It's important to validate what operations Copilot can perform in terms of reasoning over the AI action response.

Some basic capabilities in reasoning over the output are possible. This requires validation based on your scenario. As an example, in a sales app AI action, Show me opportunities that have high probability of closing, the LLM was able to filter the returned opportunities and displayed ones with >50% chance of closing. It was able to utilize the data field probability of closing to filter the data. It isn't always the case that a field in the response is used by the LLM. The choice of 50% was also arbitrary by the LLM, although reasonable given lack of user input. However, the LLM also displayed closed opportunities in this scenario that had 100% probability of closing, which might not be what the user wanted. For this scenario, the user would likely modify their request to Show me open opportunities that have high probability of closing.
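The difference between the two prompts can be made concrete with a small Python sketch. The records, field names, and the 50% threshold below are illustrative assumptions that mirror the scenario described above; they aren't taken from a real sales app response.

```python
# Hypothetical opportunity records, loosely modeled on the scenario above.
OPPORTUNITIES = [
    {"name": "Contoso renewal", "status": "Open", "probability": 80},
    {"name": "Fabrikam upgrade", "status": "Closed", "probability": 100},
    {"name": "Adatum pilot", "status": "Open", "probability": 30},
]


def high_probability(opportunities: list[dict], threshold: int = 50,
                     open_only: bool = False) -> list[str]:
    """Return names of opportunities above the threshold, optionally open ones only."""
    return [
        item["name"]
        for item in opportunities
        if item["probability"] > threshold
        and (not open_only or item["status"] == "Open")
    ]


# "Show me opportunities that have high probability of closing"
print(high_probability(OPPORTUNITIES))
# "Show me open opportunities that have high probability of closing"
print(high_probability(OPPORTUNITIES, open_only=True))
```

The first call includes the closed opportunity with 100% probability, which matches the behavior observed in the example; the second call excludes it because the prompt states the extra condition explicitly.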

Recommendations and best practices

- Simplicity and accuracy are critical to ensure the LLM knows when and how to invoke your AI action. For example:

- An AI action manifest description like This is the Sales app action that brings back opportunities, leads, and contacts from Sales app is better than just Sales app action.

- An AI action description like This is the Dynamics 365 Sales action that can be used to retrieve data from important Sales entities is misleading. Instead, consider This is the Dynamics 365 Sales action that brings back opportunities, leads, and contacts from Sales app.

- Connector action and parameter descriptions: Each connector action description should describe the specific action. For example, This action brings back Dynamics 365 Sales leads based on Lead creation date range in MM/DD/YYYY format. Providing input details helps the LLM know the possible ways to query the action. It also helps the LLM map data fields from user input to those needed by the underlying AI action and the API action that it exposes. Providing formatting information for a parameter helps Copilot format the input it submits when invoking the API.

- Stay away from using generic descriptions for the AI action, action operations, and parameters.

- As an AI action author, you should be precise in the description of what the action does and doesn't do. While it's tempting to add extra information to substantiate the likelihood of an AI action being used by Copilot (similar to search engine optimization for a web page), this can result in the following critical issues.

- A generic action description can prevent the appropriate AI action for the job from getting picked up. If this happens frequently, the user can turn off such action.

- When an action is selected incorrectly, it might cause AI action execution or the response to fail. Copilots can't surface AI actions that have high error rates. End users can also downvote responses from Copilot. Over time, this data can be used to identify offending actions so that admins and Copilot can take action.


Test your AI action

Validation is critical to ensure the LLM can create a proper execution plan for the intended prompt. In some cases, only a modified version of the prompt may succeed.

Ensure you review the supported and unsupported capabilities. Also review the considerations described in this article previously.

Use the action-validator tool to allow users to validate that the action-related changes made in the custom connector UI are sufficient for the target use cases. The tool, built using Semantic Kernel (SK), allows users to input their swaggers containing action-level annotations. It also does a sanity test of prompts that they would expect the AI to use their action to answer.

Prerequisites

A download of the action-validator tool.

Use Azure OpenAI service or an OpenAI subscription. More information: Welcome to the AI Platform

You need the following details afterwards:

- OpenAI key

- OpenAI organizationId

- OpenAI model to use. We recommend gpt-3.5-turbo-16k.

Swagger json file for the connector (which is now populated with action-level annotations). This can be retrieved by selecting to download the connector on the custom connectors page. The path to the swagger file is used as an input to the tool.

Important

Different orchestration engines are in use today. While there could be differences and your experience may vary, testing with any AI orchestrator always provides more value than not testing copilot-enabled connectors.

Run the action-validator tool

Follow these steps to run the action-validator tool and test the copilot-enabled connector.

Run the tool with the following arguments:

- Type: OpenAI or AzureOpenAI (use OpenAI)

- OpenAI key: {insert key}

- OpenAI org ID: {insert orgId}

- Model to use: {gpt-3.5-turbo-16k}

- Swagger file path: "C:\Users\abe\OneDrive - Microsoft\Documents\apiDefinition.swagger.json"

Example:

action-validator.exe OpenAI id-abc org-abc gpt-3.5-turbo-16k "C:\Users\abe\OneDrive - Microsoft\Documents\apiDefinition.swagger.json"
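If you prefer to launch the tool from a script, one option is to build the same positional arguments as a list, which avoids quoting problems with spaces in the swagger path. The following Python sketch only assembles and prints the command; the helper name `build_validator_command` is an assumption, and the argument order simply mirrors the example above.

```python
def build_validator_command(provider: str, api_key: str, org_id: str, model: str,
                            swagger_path: str,
                            executable: str = "action-validator.exe") -> list[str]:
    """Assemble the positional arguments in the order shown in the example above."""
    return [executable, provider, api_key, org_id, model, swagger_path]


command = build_validator_command(
    provider="OpenAI",
    api_key="id-abc",        # Placeholder key from the example above
    org_id="org-abc",        # Placeholder organization ID from the example above
    model="gpt-3.5-turbo-16k",
    swagger_path=r"C:\Users\abe\OneDrive - Microsoft\Documents\apiDefinition.swagger.json",
)
print(command)

# To actually start the tool, pass the list to subprocess.run, for example:
#   import subprocess
#   subprocess.run(command, check=True)
```

In practice, you'd likely read the key from an environment variable rather than hard-coding it in a script.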

The tool reads the swagger passed in. Using the action-related annotations in the swagger, it creates the final action swagger file in the original swagger file's directory. It uses this directory for the prompt testing. The new file is called action-swagger.json.

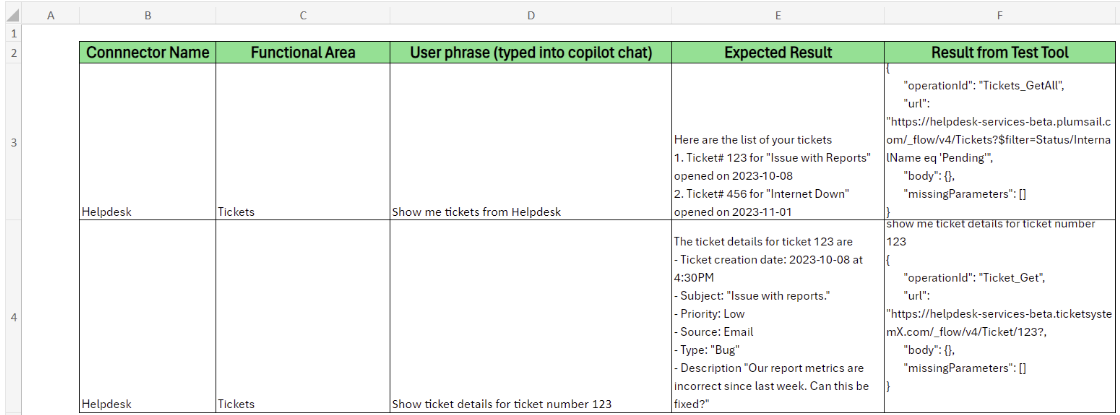

The tool does the following actions in a loop:

- The tool asks the user to test out a prompt.

- The user inputs a prompt (example: show me my top 10 sales app opportunities).

- The tool outputs the following JSON structure:

- OperationId: operationId of the action used to answer prompt.

- Url: URL that would be used to get the data, including any query parameters.

- Body: Body parameters that would be used in the request.

- Headers: Headers that would be used in the request.

- MissingParameters: Parameters that are required for the action, but the user didn't specify in the query.

The following example shows sample sessions with the SK-based tool. It reflects the current version of the tool, which might change soon.

Enter prompt to test action (Example: show me open opportunities)
show me tickets that are pending
{
  "operationId": "Tickets_GetAll",
  "url": "https://helpdesk-services-beta.plumsail.com/_flow/v4/Tickets?$filter=Status/InternalName eq 'Pending'",
  "body": {},
  "missingParameters": []
}

Enter prompt to test action (Example: show me open opportunities)
show me planner tasks in buckat b123
{
  "operationId": "ListTasks_V2",
  "url": "https://graph.microsoft.com/v1.0/planner/buckets/b123/tasks",
  "body": {},
  "missingParameters": []
}

Enter prompt to test action (Example: show me open opportunities)
get group plans
{
  "operationId": "ListGroupPlans",
  "url": "https://graph.microsoft.com/v1.0/groups/{groupId}/planner/plans",
  "body": {},
  "missingParameters": ["groupId"]
}

Validate and verify results

Following are the steps for interpreting results and validating if there are changes needed to the swagger.

Validate that the operationId that was chosen matches the action in the swagger definition file that you'd expect to be used to answer the prompt. If it doesn't, perhaps the descriptions in the actions aren't detailed enough to allow the model to decipher which action it needs to use. Update the descriptions following best practices and re-attempt.

Validate the URL information. The main check is to discover which request parameters are being used, and how the parameters and values are formatted. Based on your understanding of the prompt and the inner working of the API, you want to decide if a URL like this would succeed or fail. Check the best practices section to understand how to update parameter information to have the model more accurately determine the parameters and formatting needed.

For example, suppose I have an API route GET /tickets that takes in query filter parameters of status and category. If I test the prompt, show me tickets categorized as a problem, I'd expect the tool to include ?category=problem as the variable used.

Validate the body. Perform similar validations as you do in the URL query section to ensure that if there are body parameters needed, they're being included and are formatted properly.

Validate the headers section. Typically, this doesn't need validation. However, there are cases where there's a parameter that the user includes in the prompt that needs to be in the header section. If that isn't the case, skip this section.

Ensure that the missingParameters field is empty. If there's a value there, it means there's a required parameter in the route that the model wasn't able to figure out. Either that data wasn't included in the prompt, or it's unable to determine where in the prompt it was. This likely means you need to tweak the prompt or tweak the parameter description to better match what the user would input.

If the results from all of these steps look good, the information in the swagger is likely good enough to answer prompts like the one you tried. If not, check the best practices section.
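Some of these checks can be scripted once you've copied the JSON that the tool prints. The following Python sketch is one possible approach, not part of the action-validator tool itself: the function name `review_result`, the sample strings, and the `example.com` URL are assumptions, and the field names follow the sample output shown earlier in this article.

```python
import json
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def review_result(raw_json: str, expected_operation_id: str,
                  expected_query: Optional[dict[str, str]] = None) -> list[str]:
    """Return a list of problems found in one JSON result printed by the tool."""
    result = json.loads(raw_json)
    problems = []

    # Check 1: the model picked the action you expected.
    if result.get("operationId") != expected_operation_id:
        problems.append(f"unexpected operationId: {result.get('operationId')}")

    # Check 2: the expected query parameters appear in the URL.
    actual_query = parse_qs(urlsplit(result.get("url", "")).query)
    for name, value in (expected_query or {}).items():
        if value not in actual_query.get(name, []):
            problems.append(f"query parameter {name}={value} not found in URL")

    # Check 3: no required parameter was left unresolved.
    missing = result.get("missingParameters") or []
    if missing:
        problems.append(f"missing required parameters: {', '.join(missing)}")

    return problems


# Hypothetical result for the prompt "show me tickets categorized as a problem".
GOOD_RESULT = ('{"operationId": "ListTickets", '
               '"url": "https://example.com/tickets?category=problem", '
               '"body": {}, "missingParameters": []}')

# Result shaped like the "get group plans" sample, where groupId is unresolved.
INCOMPLETE_RESULT = ('{"operationId": "ListGroupPlans", '
                     '"url": "https://graph.microsoft.com/v1.0/groups/{groupId}/planner/plans", '
                     '"body": {}, "missingParameters": ["groupId"]}')

print(review_result(GOOD_RESULT, "ListTickets", {"category": "problem"}))
print(review_result(INCOMPLETE_RESULT, "ListGroupPlans"))
```

An empty list means the result passed these three checks; body and header validation still need a manual look because they depend on your API.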

Tool considerations

Each run of the tool (even with the same prompt) might result in different formatting of the parameters based on the determination the LLM makes. This is expected. It might be useful to run a given prompt a few times to understand the differences that might occur and to confirm that each variation is still valid.
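To compare repeated runs, you can group the results by the action chosen and the parameters used, ignoring parameter order. The following Python sketch assumes you've collected the JSON results of several runs into a list; the function name `summarize_runs` and the sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def summarize_runs(results: list[dict]) -> Counter:
    """Count distinct (operationId, path, sorted query parameters) combinations."""
    summary: Counter = Counter()
    for result in results:
        parts = urlsplit(result["url"])
        key = (result["operationId"], parts.path, tuple(sorted(parse_qsl(parts.query))))
        summary[key] += 1
    return summary


# Hypothetical results from running the same prompt three times.
RUNS = [
    {"operationId": "ListTickets",
     "url": "https://example.com/tickets?status=pending&category=problem"},
    {"operationId": "ListTickets",
     "url": "https://example.com/tickets?category=problem&status=pending"},
    {"operationId": "ListTickets",
     "url": "https://example.com/tickets?status=Pending&category=problem"},
]

for (operation_id, path, query), count in summarize_runs(RUNS).items():
    print(count, operation_id, path, dict(query))
```

Here the first two runs differ only in parameter order and are counted together, while the third uses a different capitalization for the status value, which is the kind of variation worth checking against your API.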

Each run of the tool starts from a clean state and is unable to refer to a previous prompt or get data between runs.

The action-validator tool has the following limitations:

- The tool is biased towards returning an action, even when the prompt has nothing to do with the action. For example, if the swagger has 1 route /tickets, and the prompt is get me things, it might still return /tickets as the route to use. Sometimes putting in gibberish (or nonsense) results in a route returned. This isn't the behavior of the AI copilots themselves, and is a limitation of this test tool.

- The current date context of the tool might not be the correct date. A prompt of show me tickets created last week shows the OData filter value being created ge '2021-02-01', which isn't correct. This likely works as expected in other orchestrators, but is showing like this in the tool.

- If you followed best practices for a particular situation and are providing context in the descriptions, yet the tool is returning an unexpected result, this might be an underlying limitation of the tool.

Troubleshooting tips

Here are some examples for updating the action/parameter/responses in the swagger based on scenarios:

Update the action/route descriptions. Tweak if the test tool is unable to determine which action to use for prompts.

Here's an example of an old route description as compared to a new route description for clarity for a route that retrieves courses with a subject parameter:

- Old: Get courses

- New: Gets a list of courses for a specified subject

Update the parameter description. Tweak it if the test tool isn't including an expected parameter, leaving out a parameter, or isn't formatting the parameter as expected:

- If the wrong parameter is being used, perhaps the description for the parameters needs to be more explicit, or the prompt needs to be more explicit. For example, if there's a /tickets endpoint that takes in a creator and owner parameter, and the prompt is get tickets for james, it might not know which parameter to use. The prompt might need to be more detailed.

- If a parameter is being added when it isn't supposed to, or isn't being added when it needs to, then perhaps the description is too vague or is incorrect. Update the parameter description to provide more context as to when it should be used. Also, perhaps include a default value.

- If a parameter expects the value to be in quotes (for example, for an ODATA $filter), say so in the description. Example: An ODATA $filter query option to filter the entries returned. Filter values are required to be enclosed in single-quotes.

- If your ODATA $filter only supports limited functionality, call that out. Example: An ODATA $filter query option to filter the entries returned. Only supports the following filter options: <, >, and =.

- If a date parameter needs to be in a particular format (for example, for a created_at parameter), state the format. Example: Returns data created on a certain date. Format must be in following format: yyyy-mm-dd.

- If specific capitalization is required for a parameter called fields_to_return, describe it. Example: Returns the subset of fields that were input. Field names are required to be snake_cased (for example, created_at).

- If there are only a few values that are possible for a filter (for example, for a parameter called 'status' allowing filtering on status), list them. Example: Returns data for the chosen status. Supported values are new, in-progress, and closed.
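The constraints stated in descriptions like these can also be checked against the parameter values that the test tool produces. The following Python sketch uses the example constraints from the list above (a yyyy-mm-dd date, snake_cased field names, and three status values); the function names and regular expressions are assumptions made for illustration.

```python
import re
from datetime import datetime

DATE_PATTERN = re.compile(r"^\d{4}-\d{2}-\d{2}$")
SNAKE_CASE_PATTERN = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")
SUPPORTED_STATUSES = {"new", "in-progress", "closed"}


def is_valid_created_at(value: str) -> bool:
    """True if the value is a real calendar date written as yyyy-mm-dd."""
    if not DATE_PATTERN.match(value):
        return False
    try:
        datetime.strptime(value, "%Y-%m-%d")
    except ValueError:
        return False
    return True


def is_snake_case(field_name: str) -> bool:
    """True if the field name is lowercase with underscore separators."""
    return bool(SNAKE_CASE_PATTERN.match(field_name))


def is_supported_status(value: str) -> bool:
    """True if the value is one of the statuses named in the description."""
    return value in SUPPORTED_STATUSES


print(is_valid_created_at("2023-10-06"), is_valid_created_at("10/06/2023"))
print(is_snake_case("created_at"), is_snake_case("createdAt"))
print(is_supported_status("closed"), is_supported_status("Closed"))
```

If the tool repeatedly produces values that fail checks like these, that's a hint that the parameter description needs to state the format more explicitly.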

Update the response of the swagger when there are parameters that need to be filtered and are unable to be found:

- Ensure that if the user has the ability to ask for certain values to filter on, those values are included in the response as an enum. This is especially helpful for an ODATA $filter, where the user can filter on any field and value.

- If there's a status value in the response, be sure to include the enum values supported.

- Example: "enum": ["New", "InProgress", "Pending", "Solved"]
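To show where such an enum sits, the following Python sketch models a response schema fragment as a dictionary and lists the properties that declare enum values. The property names `Status` and `Title` and the helper `enum_values_by_property` are hypothetical; only the enum values come from the example above.

```python
import json

# Hypothetical response schema fragment; only the enum values come from the article.
RESPONSE_SCHEMA = {
    "type": "object",
    "properties": {
        "Status": {
            "type": "string",
            "description": "Current status of the ticket.",
            "enum": ["New", "InProgress", "Pending", "Solved"],
        },
        "Title": {"type": "string", "description": "Title of the ticket."},
    },
}


def enum_values_by_property(schema: dict) -> dict[str, list[str]]:
    """Map each property that declares an enum to its list of allowed values."""
    return {
        name: definition["enum"]
        for name, definition in schema.get("properties", {}).items()
        if "enum" in definition
    }


print(json.dumps(RESPONSE_SCHEMA, indent=2))
print(enum_values_by_property(RESPONSE_SCHEMA))
```

A quick listing like this makes it easy to spot filterable fields, such as a status, that don't yet declare their supported values.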

If you encounter the following error when running the tool, choose a GPT-4 model as a parameter when running the command (instead of GPT-3.5-Turbo-16k): "This model's maximum context length is 16385 tokens. However, our messages resulted in {} tokens. Please reduce the length of the messages."

- If using Azure OpenAI service, you can learn about setting rate limits in Manage Azure OpenAI Service quota to increase your quotas.

- If you're using OpenAI ChatGPT, you can find other models to use in Models.

- You can evaluate price considerations when choosing a different model in OpenAI Pricing.

Submit the AI action-enabled connector for certification

After you test the AI action, submit the connector for certification.

The following additional information is required when submitting a copilot-enabled connector:

Personas and scenarios these prompts are useful for.

List of prompts (phrases a user types in the chat) and expected responses to the prompts.

Screenshots of test results utilizing prompts in the test tool.

Ensure you review the following information before submission:

- Review the AI action description to ensure that it's accurate and acceptable.

- Validate the expected results.

- Review other security and content requirements, and be sure the connector follows responsible AI guidelines.

Supported queries for certified connectors

This section provides example queries for currently supported first-party connector AI actions.

Freshdesk

- Show me my freshdesk products

- Show freshdesk ticket details for ticket 6

- Get freshdesk agents

- Get my freshdesk contacts

- Show me my freshdesk tickets created since march 2023

Salesforce

- Get opportunities from Salesforce

- Show me my Salesforce opportunities

- Show me details about Salesforce opportunity called Smith Mobile Generators

- Show me my Salesforce opportunities that have a high likelihood with probability of greater than 75.0 of closing in this quarter

- Show me my Salesforce leads

- Show me details of Salesforce lead <First_name Last_name>

- Show me details of my Salesforce qualified leads created in last 120 days

- Show me Salesforce accounts

- Show me my Salesforce account details for Burlington Any Corp of America

- Show me Salesforce accounts that have a sales annual revenue greater than $500000000

- Show me the Salesforce cases for accountId 001Hp00002eQ3O1IAK that are not closed

- What are the Salesforce cases where subject contains Generators?

- Show me my Salesforce cases that aren't closed

Zendesk

- Show me the Tickets in my Zendesk account

- Show zendesk Ticket details for ticket 8?

- What is the status of zendesk Ticket 2?

- Who is the zendesk Ticket 2 assigned to?

- Show me details about zendesk user 55555555555555

- Show me the comments on zendesk ticket 13 submitted by 55555555555555

- Show me zendesk groups that aren't deleted

ServiceNow

- Show me the list of servicenow incidents

- Show details for servicenow incident "INC0010003"

- Who is the servicenow Incident "INC0010007" assigned to?

- Show servicenow incidents created in october

- Show servicenow incidents created by xxx.creator

- Get a list of servicenow tasks

- Show details for servicenow task INC0010006

- Show servicenow tasks created before october 6, 2023

- Get a list of servicenow users

- Get me details of servicenow user with email john.smith@example.com

Twilio

- Show me all twilio messages in my Network

- Show me twilio messages to this phone number "+18445554360"

- Show me twilio messages that came from 6785555306

- Show me twilio messages sent on October 6, 2023

GitHub

- Show me details about issue 200 for repository Space owned by Sublime

- Show details for pull request 150 for repository Space owned by Sublime

- list issues for repository Space owned by Sublime

- Get the pull requests for repository Space owned by Sublime

- Get github repos owned by user <user GitHub alias>

- List public github repos for org Microsoft

MailChimp

- Get my mailchimp lists

- List my mailchimp campaigns

MSN Weather

- Get current weather for Westport, WA

- Get weather forecast for today in Athens, GA

- Get weather forecast for tomorrow in Atlanta, GA

Microsoft Shifts

- What are the open shifts for this week?

- What shifts are available for this week? List the team names of the shifts.

- Who is on shift?

- What are the shifts for this week? Show in a table.

- When are the time offs this week?