Model inference endpoint in Azure AI Services

Azure AI model inference in Azure AI services lets customers consume the most powerful models from flagship model providers through a single endpoint and a single set of credentials. This means you can switch between models and consume them from your application without changing a single line of code.

This article explains how models are organized inside the service and how to use the inference endpoint to invoke them.

Deployments

Azure AI model inference makes models available through the concept of a deployment. A deployment gives a model a name under a specific configuration. You can then invoke that model configuration by indicating its name in your requests.

A deployment captures:

- A model name

- A model version

- A provisioning/capacity type¹

- A content filtering configuration¹

- A rate limiting configuration¹

¹ Configurations may vary depending on the selected model.
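The items above can be pictured as a small record. The following is a minimal sketch, not an SDK type: the class name `ModelDeployment`, its field names, and every value shown are hypothetical and chosen only to illustrate that one model can be deployed several times under different configurations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelDeployment:
    """Hypothetical record of what a deployment captures (not an SDK type)."""

    name: str                               # deployment name used in requests
    model_name: str                         # underlying model
    model_version: str                      # model version
    capacity_type: str                      # provisioning/capacity type
    content_filter: Optional[str] = None    # may not apply to every model
    rate_limit_rpm: Optional[int] = None    # may not apply to every model


# Illustrative values only: the same model deployed twice, configured differently.
prod = ModelDeployment("mistral-large", "Mistral-large", "1", "standard", "default", 600)
dev = ModelDeployment("mistral-large-dev", "Mistral-large", "1", "standard", rate_limit_rpm=60)

print(prod.name, dev.name)
```

The optional fields reflect the footnote: some configurations may be absent depending on the selected model.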

An Azure AI services resource can have as many model deployments as needed, and they don't incur cost unless inference is performed for those models. Deployments are Azure resources, so they're subject to Azure policies.

For more information about how to create deployments, see "Add and configure model deployments".

Azure AI inference endpoint

The Azure AI inference endpoint lets customers use a single endpoint, with the same authentication and schema, to generate inference for the models deployed in the resource. The endpoint follows the Azure AI model inference API, which all models in Azure AI model inference support. It supports the following modalities:

- Text embeddings

- Image embeddings

- Chat completions

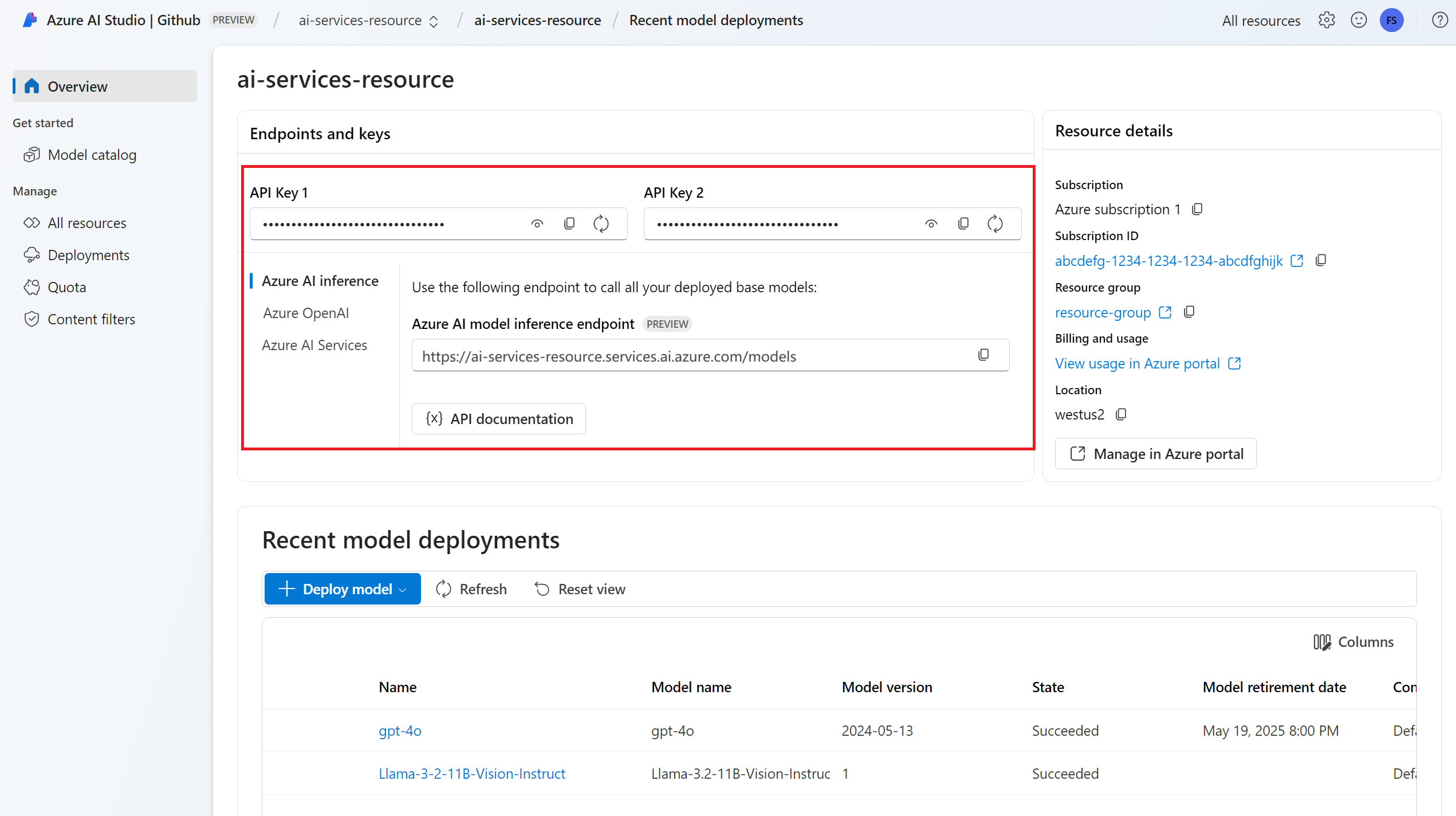

You can see the endpoint URL and credentials in the Overview section:

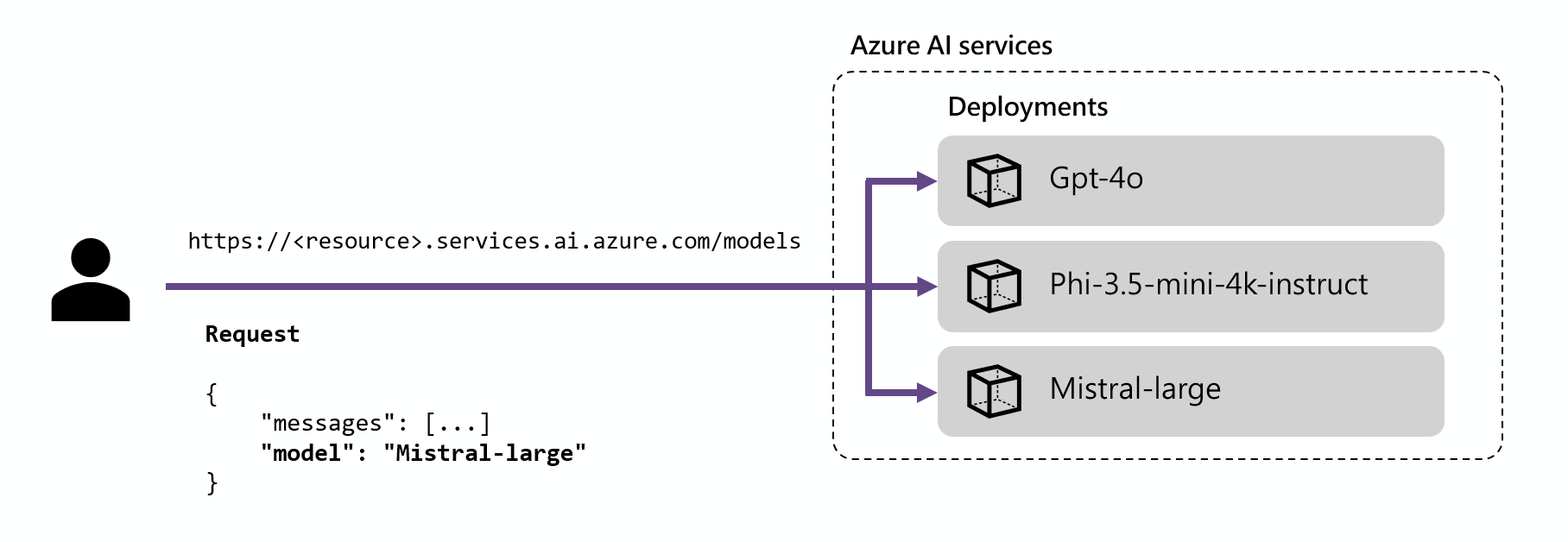

Routing

The inference endpoint routes each request to a deployment by matching the parameter name inside the request to the name of the deployment. This means that deployments work as an alias of a given model under certain configurations. This flexibility lets you deploy a given model multiple times in the service, each time under a different configuration if needed.

For example, if you create a deployment named Mistral-large, you can invoke that deployment as follows:

Install the azure-ai-inference package using your package manager, such as pip:

pip install azure-ai-inference

Then, you can use the package to consume the model. The following example shows how to create a client to consume chat completions:

import os
from azure.ai.inference import ChatCompletionsClient
from azure.core.credentials import AzureKeyCredential

# Create a chat completions client for the resource's inference endpoint.
client = ChatCompletionsClient(
    endpoint="https://<resource>.services.ai.azure.com/models",
    credential=AzureKeyCredential(os.environ["AZUREAI_ENDPOINT_KEY"]),
)

Explore our samples and read the API reference documentation to get started.

from azure.ai.inference.models import SystemMessage, UserMessage

# The model parameter selects the deployment that serves the request.
response = client.complete(
    messages=[
        SystemMessage(content="You are a helpful assistant."),
        UserMessage(content="Explain Riemann's conjecture in 1 paragraph"),
    ],
    model="mistral-large",
)

print(response.choices[0].message.content)
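For readers who want to see roughly what such a call amounts to on the wire, the following is a minimal sketch that builds, but doesn't send, a comparable HTTP request using only the Python standard library. The `/chat/completions` route, the `api-version` value, the `api-key` header, and the helper name `build_chat_request` are assumptions made for illustration and aren't stated in this article; check the REST API reference before relying on them.

```python
import json
import os
import urllib.request

# Assumed value for illustration; confirm against the REST API reference.
API_VERSION = "2024-05-01-preview"


def build_chat_request(endpoint: str, deployment_name: str, prompt: str,
                       api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completions request for a deployment."""
    body = {
        "model": deployment_name,  # name of the deployment to route to
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=f"{endpoint.rstrip('/')}/chat/completions?api-version={API_VERSION}",
        data=json.dumps(body).encode("utf-8"),
        # Assumed header names; the key is read from the environment below.
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )


request = build_chat_request(
    "https://<resource>.services.ai.azure.com/models",
    "mistral-large",
    "Explain Riemann's conjecture in 1 paragraph",
    os.environ.get("AZUREAI_ENDPOINT_KEY", "<key>"),
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it; the sketch stops short of that so it can be inspected without a live resource.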

Tip

Deployment routing isn't case sensitive.
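To make the routing behavior concrete, here is a toy model of name-based, case-insensitive lookup. It's a sketch of the idea only and not how the service is implemented: the class `DeploymentRouter`, its methods, and the configuration labels are all hypothetical.

```python
from typing import Dict


class DeploymentRouter:
    """Toy model of name-based routing; not the service's implementation."""

    def __init__(self) -> None:
        self._deployments: Dict[str, str] = {}

    def register(self, deployment_name: str, target: str) -> None:
        # Store names case-folded so that lookups ignore case.
        self._deployments[deployment_name.casefold()] = target

    def resolve(self, requested_name: str) -> str:
        key = requested_name.casefold()
        if key not in self._deployments:
            raise KeyError(f"No deployment named {requested_name!r}")
        return self._deployments[key]


router = DeploymentRouter()
# The same model deployed twice under different (hypothetical) configurations.
router.register("Mistral-large", "Mistral-large, configuration A")
router.register("mistral-large-strict", "Mistral-large, configuration B")

print(router.resolve("mistral-large"))  # prints "Mistral-large, configuration A"
```

Because each deployment name acts as an alias, switching an application to a different configuration only requires changing the name it sends.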

SDKs

The Azure AI model inference endpoint is supported by multiple SDKs, including the Azure AI Inference SDK, the Azure AI Foundry SDK, and the Azure OpenAI SDK, which are available in multiple languages. Several integrations are also supported in popular frameworks such as LangChain, LangGraph, Llama-Index, Semantic Kernel, and AG2. For details, see supported programming languages and SDKs.

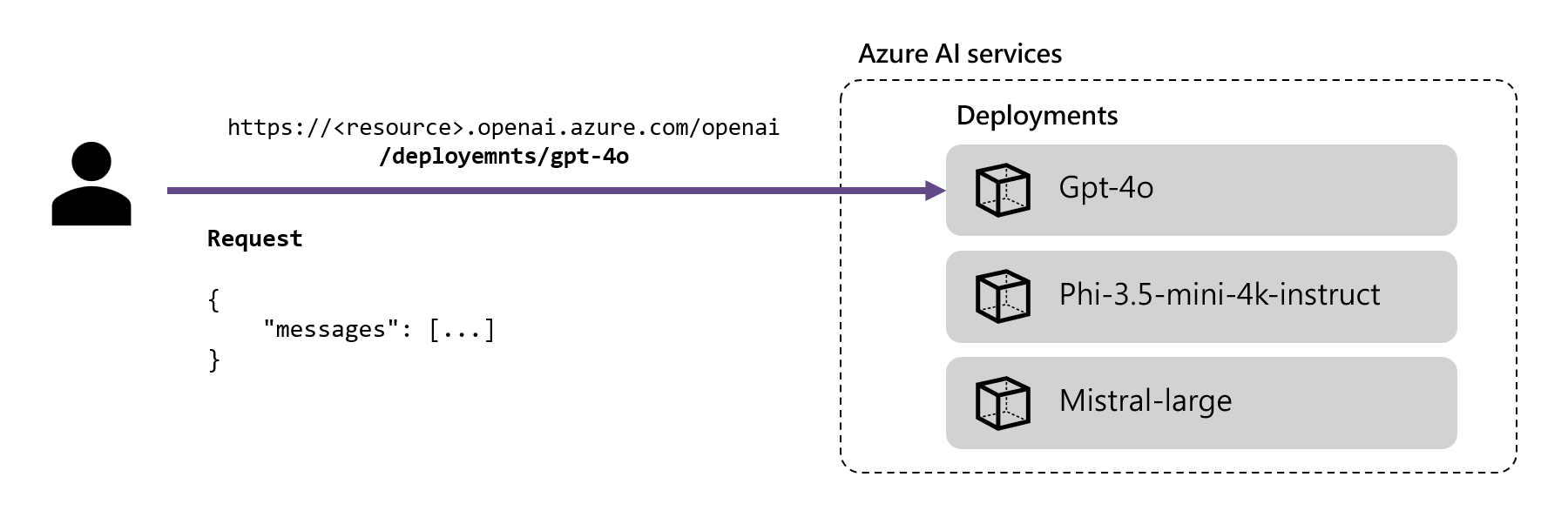

Azure OpenAI inference endpoint

Azure OpenAI models deployed to AI services also support the Azure OpenAI API. This API exposes the full capabilities of OpenAI models and supports additional features such as assistants, threads, files, and batch inference.

Azure OpenAI inference endpoints work at the deployment level, and each deployment has its own URL. However, the same authentication mechanism can be used to consume them. For more information, see the reference page for the Azure OpenAI API.

Each deployment has a URL that is the concatenation of the Azure OpenAI base URL and the route /deployments/<model-deployment-name>.
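The concatenation described above can be sketched in a few lines. The helper name `deployment_url`, the base URL, and the deployment name `gpt-4o-mini` are hypothetical and used only for illustration; use the base URL shown for your own resource.

```python
def deployment_url(base_url: str, deployment_name: str) -> str:
    """Join the Azure OpenAI base URL with the /deployments/<name> route."""
    # Strip a trailing slash so the result never contains a double slash.
    return f"{base_url.rstrip('/')}/deployments/{deployment_name}"


# Hypothetical base URL and deployment name.
BASE_URL = "https://<resource>.openai.azure.com/openai"

print(deployment_url(BASE_URL, "gpt-4o-mini"))
# prints "https://<resource>.openai.azure.com/openai/deployments/gpt-4o-mini"
```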

Important

The Azure OpenAI endpoint has no routing mechanism, because each URL is exclusive to a single model deployment.

SDKs

The Azure OpenAI endpoint is supported by the OpenAI SDK (AzureOpenAI class) and the Azure OpenAI SDKs, which are available in multiple languages. For details, see supported languages.