Deploy Storage Spaces Direct on Windows Server

This topic provides step-by-step instructions for deploying Storage Spaces Direct on Windows Server. To deploy Storage Spaces Direct as part of Azure Local, see About Azure Local.

Tip

Looking to acquire hyperconverged infrastructure? Microsoft recommends purchasing a validated hardware/software Azure Local solution from our partners. These solutions are designed, assembled, and validated against our reference architecture to ensure compatibility and reliability, so you can get up and running quickly. To browse a catalog of hardware/software solutions that work with Azure Local, see the Azure Local Catalog.

Tip

You can use Hyper-V virtual machines, including in Microsoft Azure, to evaluate Storage Spaces Direct without hardware. You may also want to review the Windows Server rapid lab deployment scripts, which we use for training purposes.

Before you start

Review the Storage Spaces Direct hardware requirements and skim this document to familiarize yourself with the overall approach and the important notes attached to some of the steps.

Gather the following information:

- Deployment option. Storage Spaces Direct supports two deployment options: hyperconverged and converged, also known as disaggregated. Familiarize yourself with the advantages of each to decide which is right for you. Steps 1 through 3 below apply to both deployment options. Step 4 is only needed for converged deployment.

- Server names. Get familiar with your organization's naming policies for computers, files, paths, and other resources. You'll need to provision several servers, each with a unique name.

- Domain name. Get familiar with your organization's policies for domain naming and domain joining. You'll be joining the servers to your domain, and you'll need to specify the domain name.

- RDMA networking. There are two types of RDMA protocols: iWARP and RoCE. Note which one your network adapters use, and if it's RoCE, also note the version (v1 or v2). For RoCE, also note the model of your top-of-rack switch.

- VLAN ID. Note the VLAN ID to be used for the operating system network adapters on the servers, if any. You should be able to obtain this from your network administrator.

Step 1: Deploy Windows Server

Step 1.1: Install the operating system

The first step is to install Windows Server on every server that will be in the cluster. Storage Spaces Direct requires Windows Server Datacenter Edition. You can use the Server Core installation option or Server with Desktop Experience.

When you install Windows Server using the Setup wizard, you can choose between Windows Server (referring to Server Core) and Windows Server (Server with Desktop Experience), which is the equivalent of the Full installation option available in Windows Server 2012 R2. If you don't choose, you get the Server Core installation option. For more information, see Install Server Core.

Step 1.2: Connect to the servers

This guide focuses on the Server Core installation option and on deploying and managing remotely from a separate management system, which must have:

- A version of Windows Server or Windows 10 at least as new as the servers it's managing, and with the latest updates

- Network connectivity to the servers it's managing

- Joined to the same domain or a fully trusted domain

- Remote Server Administration Tools (RSAT) and PowerShell modules for Hyper-V and Failover Clustering. RSAT tools and PowerShell modules are available on Windows Server and can be installed without installing other features. You can also install the Remote Server Administration Tools on a Windows 10 management PC.

On the management system, install the Failover Cluster and Hyper-V management tools. You can do this through Server Manager using the Add Roles and Features wizard. On the Features page, select Remote Server Administration Tools, and then select the tools to install.

Enter the PS session and use either the server name or the IP address of the node you want to connect to. You'll be prompted for a password after you run this command; enter the administrator password you specified when setting up Windows.

Enter-PSSession -ComputerName <myComputerName> -Credential LocalHost\Administrator

Here's an example of doing the same thing in a way that is more useful in scripts, in case you need to do this more than once:

$myServer1 = "myServer-1"

$user = "$myServer1\Administrator"

Enter-PSSession -ComputerName $myServer1 -Credential $user

Tip

If you're deploying remotely from a management system, you might get an error like WinRM cannot process the request. To fix this, use Windows PowerShell to add each server to the Trusted Hosts list on your management computer:

Set-Item WSMAN:\Localhost\Client\TrustedHosts -Value Server01 -Force

Note: the trusted hosts list supports wildcards, like Server*.

To view your Trusted Hosts list, type Get-Item WSMAN:\Localhost\Client\TrustedHosts.

To empty the list, type Clear-Item WSMAN:\Localhost\Client\TrustedHosts.
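Because Set-Item with -Value writes the value you pass, adding servers one at a time can overwrite earlier entries. The following is a minimal sketch, not part of the original guide, that merges new names into the existing comma-separated list first. The helper name Merge-TrustedHostList and the server names are hypothetical.

```powershell
# Hypothetical helper: merge new host names into an existing TrustedHosts
# string (comma-separated), dropping empty entries and exact duplicates.
function Merge-TrustedHostList {
    param(
        [string]$Existing,
        [string[]]$NewHosts
    )
    # Split the current value and discard whitespace and empty items.
    $current = @($Existing -split ',' | ForEach-Object { $_.Trim() } | Where-Object { $_ })
    # Select-Object -Unique compares case-sensitively, so "server01" and
    # "Server01" would both be kept.
    $merged = @($current + $NewHosts | Select-Object -Unique)
    return ($merged -join ',')
}

# Usage on the management PC, in an elevated session:
# $existing = (Get-Item WSMan:\localhost\Client\TrustedHosts).Value
# $value    = Merge-TrustedHostList -Existing $existing -NewHosts 'Server01', 'Server02'
# Set-Item WSMan:\localhost\Client\TrustedHosts -Value $value -Force
```

The merge logic is kept separate from the Set-Item call so that you can inspect the resulting string before changing the WinRM client configuration.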

Step 1.3: Join the domain and add domain accounts

So far you've configured the individual servers with the local administrator account, <ComputerName>\Administrator.

To manage Storage Spaces Direct, you'll need to join the servers to a domain and use an Active Directory Domain Services domain account that is in the Administrators group on every server.

From the management system, open a PowerShell console with Administrator privileges. Use Enter-PSSession to connect to each server and run the following cmdlet, substituting your own computer name, domain name, and domain credentials:

Add-Computer -NewName "Server01" -DomainName "contoso.com" -Credential "CONTOSO\User" -Restart -Force

If your storage administrator account isn't a member of the Domain Admins group, add it to the local Administrators group on each node, or better yet, add the group you use for storage administrators. You can use the following command (or write a Windows PowerShell function to do so; see Use PowerShell to Add Domain Users to a Local Group for more information):

Net localgroup Administrators <Domain\Account> /add

Step 1.4: Install roles and features

The next step is to install server roles on every server. You can do this by using Windows Admin Center, Server Manager, or PowerShell. Here are the roles and features to install:

- Failover Clustering

- Hyper-V

- File Server (if you want to host any file shares, such as for a converged deployment)

- Data-Center-Bridging (if you're using RoCEv2 instead of iWARP network adapters)

- RSAT-Clustering-PowerShell

- Hyper-V-PowerShell

To install via PowerShell, use the Install-WindowsFeature cmdlet. You can use it on a single server like this:

Install-WindowsFeature -Name "Hyper-V", "Failover-Clustering", "Data-Center-Bridging", "RSAT-Clustering-PowerShell", "Hyper-V-PowerShell", "FS-FileServer"

To run the command on all servers in the cluster at the same time, use this small script, modifying the list of variables at the beginning of the script to fit your environment.

# Fill in these variables with your values

$ServerList = "Server01", "Server02", "Server03", "Server04"

$FeatureList = "Hyper-V", "Failover-Clustering", "Data-Center-Bridging", "RSAT-Clustering-PowerShell", "Hyper-V-PowerShell", "FS-FileServer"

# This part runs the Install-WindowsFeature cmdlet on all servers in $ServerList, passing the list of features into the scriptblock with the "Using" scope modifier so you don't have to hard-code them here.

Invoke-Command ($ServerList) {

Install-WindowsFeature -Name $Using:Featurelist

}
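After the installation finishes, it can help to confirm that every server reports the requested features as installed. The following is a minimal sketch under the assumption that the same server and feature lists are reused; the function name Get-MissingWindowsFeature is hypothetical and not part of the original guide.

```powershell
# Hypothetical helper: list every requested feature that is not yet
# installed, per server. An empty result means nothing is missing.
function Get-MissingWindowsFeature {
    param(
        [Parameter(Mandatory)] [string[]]$ComputerName,
        [Parameter(Mandatory)] [string[]]$FeatureName
    )
    Invoke-Command -ComputerName $ComputerName -ScriptBlock {
        # $Using: passes the local feature list into the remote scriptblock.
        Get-WindowsFeature -Name $Using:FeatureName |
            Where-Object { -not $_.Installed }
    } | Select-Object PSComputerName, Name, InstallState
}

# Usage (example values):
# Get-MissingWindowsFeature -ComputerName "Server01", "Server02" `
#     -FeatureName "Hyper-V", "Failover-Clustering", "FS-FileServer"
```

Installing the Hyper-V role normally requires a restart before it becomes active; Install-WindowsFeature accepts a -Restart switch if you want the server to reboot automatically.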

Step 2: Configure the network

If you're deploying Storage Spaces Direct inside virtual machines, skip this section.

Storage Spaces Direct requires high-bandwidth, low-latency networking between servers in the cluster. At least 10 GbE networking is required, and remote direct memory access (RDMA) is recommended. You can use either iWARP or RoCE as long as it has the Windows Server logo that matches your operating system version, but iWARP is usually easier to set up.

Important

Depending on your networking equipment, and especially with RoCE v2, some configuration of the top-of-rack switch may be required. Correct switch configuration is important to ensure the reliability and performance of Storage Spaces Direct.

Windows Server 2016 introduced switch-embedded teaming (SET) within the Hyper-V virtual switch. This allows the same physical NIC ports to be used for all network traffic while using RDMA, reducing the number of physical NIC ports required. Switch-embedded teaming is recommended for Storage Spaces Direct.

Switched or switchless node interconnects

- Switched: Network switches must be properly configured to handle the bandwidth and networking type. If you use RDMA that implements the RoCE protocol, network device and switch configuration is even more important.

- Switchless: Nodes can be interconnected using direct connections, avoiding the use of a switch. Every node must have a direct connection to every other node of the cluster.
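The switchless requirement (every node directly connected to every other node) is a full mesh, so the cabling grows quickly with the node count. The following sketch only does that arithmetic: n - 1 connections per node and n(n - 1)/2 connections in total. The function name is hypothetical, and it counts logical connections, not physical ports; a real design may use more than one link per node pair for redundancy.

```powershell
# Hypothetical helper: count the direct connections a switchless
# (full-mesh) interconnect needs for a given number of nodes.
function Get-FullMeshLinkCount {
    param([Parameter(Mandatory)] [int]$NodeCount)
    if ($NodeCount -lt 2) {
        throw "A cluster interconnect needs at least two nodes."
    }
    [pscustomobject]@{
        NodeCount    = $NodeCount
        LinksPerNode = $NodeCount - 1                      # one link to every other node
        TotalLinks   = $NodeCount * ($NodeCount - 1) / 2   # each link is shared by two nodes
    }
}

# Usage:
# Get-FullMeshLinkCount -NodeCount 3
```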

For instructions on setting up networking for Storage Spaces Direct, see the Windows Server 2016 and 2019 RDMA Deployment Guide.

Step 3: Configure Storage Spaces Direct

The following steps are done on a management system that is the same version as the servers being configured. These steps should NOT be run remotely using a PowerShell session; instead, run them in a local PowerShell session on the management system, with administrative permissions.

Step 3.1: Clean drives

Before you enable Storage Spaces Direct, ensure your drives are empty: there must be no old partitions or other data. Run the following script, substituting your computer names, to remove any old partitions or other data.

Important

This script permanently removes all data on any drives other than the operating system boot drive.

# Fill in these variables with your values

$ServerList = "Server01", "Server02", "Server03", "Server04"

foreach ($server in $serverlist) {

Invoke-Command ($server) {

# Check for the Azure Temporary Storage volume

$azTempVolume = Get-Volume -FriendlyName "Temporary Storage" -ErrorAction SilentlyContinue

If ($azTempVolume) {

$azTempDrive = (Get-Partition -DriveLetter $azTempVolume.DriveLetter).DiskNumber

}

# Clear and reset the disks

$disks = Get-Disk | Where-Object {

($_.Number -ne $null -and $_.Number -ne $azTempDrive -and !$_.IsBoot -and !$_.IsSystem -and $_.PartitionStyle -ne "RAW")

}

$disks | ft Number,FriendlyName,OperationalStatus

If ($disks) {

Write-Host "This action will permanently remove any data on any drives other than the operating system boot drive!`nReset disks? (Y/N)"

$response = read-host

if ( $response.ToLower() -ne "y" ) { exit }

$disks | % {

$_ | Set-Disk -isoffline:$false

$_ | Set-Disk -isreadonly:$false

$_ | Clear-Disk -RemoveData -RemoveOEM -Confirm:$false -verbose

$_ | Set-Disk -isreadonly:$true

$_ | Set-Disk -isoffline:$true

}

#Get-PhysicalDisk | Reset-PhysicalDisk

}

Get-Disk | Where-Object {

($_.Number -ne $null -and $_.Number -ne $azTempDrive -and !$_.IsBoot -and !$_.IsSystem -and $_.PartitionStyle -eq "RAW")

} | Group -NoElement -Property FriendlyName

}

}

The output looks like this, where Count is the number of drives of each model in each server:

Count Name PSComputerName

----- ---- --------------

4 ATA SSDSC2BA800G4n Server01

10 ATA ST4000NM0033 Server01

4 ATA SSDSC2BA800G4n Server02

10 ATA ST4000NM0033 Server02

4 ATA SSDSC2BA800G4n Server03

10 ATA ST4000NM0033 Server03

4 ATA SSDSC2BA800G4n Server04

10 ATA ST4000NM0033 Server04

Step 3.2: Validate the cluster

In this step, you run the cluster validation tool to ensure that the server nodes are configured correctly to create a cluster using Storage Spaces Direct. When cluster validation (Test-Cluster) is run before the cluster is created, it runs the tests that verify that the configuration is suitable to function as a failover cluster. The example below uses the -Include parameter and then specifies the specific categories of tests. This ensures that the Storage Spaces Direct specific tests are included in the validation.

Use the following PowerShell command to validate a set of servers for use as a Storage Spaces Direct cluster.

Test-Cluster -Node <MachineName1, MachineName2, MachineName3, MachineName4> -Include "Storage Spaces Direct", "Inventory", "Network", "System Configuration"

Step 3.3: Create the cluster

In this step, you create a cluster with the nodes that you validated in the preceding step, using the PowerShell cmdlet shown below.

When you create the cluster, you get a warning that states: "There were issues while creating the clustered role that may prevent it from starting. For more information, view the report file below." You can safely ignore this warning. It appears because no disks are available for the cluster quorum. We recommend configuring a file share witness or a cloud witness after the cluster is created.

Note

If the servers use static IP addresses, modify the following command to reflect the static IP address by adding the following parameter and specifying the IP address: -StaticAddress <X.X.X.X>. In the following command, replace the ClusterName placeholder with a NetBIOS name that is unique and 15 characters or fewer.

New-Cluster -Name <ClusterName> -Node <MachineName1,MachineName2,MachineName3,MachineName4> -NoStorage
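A name longer than 15 characters is an easy mistake to make, so it can be worth checking before calling New-Cluster. The following is a minimal sketch, not part of the original guide: the helper Test-ClusterNameLength is hypothetical and checks only the length rule stated above (it does not check uniqueness), and the cluster name, node names, and IP address in the usage comments are example values.

```powershell
# Hypothetical helper: returns $true when the name satisfies the
# 15-characters-or-fewer rule. It does not check that the name is unique.
function Test-ClusterNameLength {
    param([Parameter(Mandatory)] [string]$Name)
    return ($Name.Length -le 15)
}

# Usage (example values; replace with your own):
# $ClusterName = "S2D-Cluster01"
# $Nodes       = "Server01", "Server02", "Server03", "Server04"
# if (Test-ClusterNameLength -Name $ClusterName) {
#     # -StaticAddress is only needed when the servers use static IP addresses.
#     New-Cluster -Name $ClusterName -Node $Nodes -NoStorage -StaticAddress "10.0.0.50"
# } else {
#     Write-Warning "Cluster name '$ClusterName' is longer than 15 characters."
# }
```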

After the cluster is created, it can take some time for the DNS entry for the cluster name to be replicated. How long depends on the environment and the DNS replication configuration. If resolving the cluster name doesn't succeed, in most cases you can use the machine name of a node that is an active member of the cluster instead of the cluster name.

Step 3.4: Configure a cluster witness

We recommend that you configure a witness for the cluster, so that clusters with three or more servers can withstand two servers failing or being offline. A two-server deployment requires a cluster witness; otherwise, either server going offline causes the other to become unavailable as well. With these systems, you can use a file share as a witness, or use a cloud witness.
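As an illustration of the two witness options, the following sketch wraps Set-ClusterQuorum in a small function with one parameter set per witness type. The function name is hypothetical, and the cluster name, share path, storage account name, and access key in the usage comments are placeholders you would supply yourself.

```powershell
# Hypothetical wrapper: configure either a file share witness or a
# cloud witness, depending on which parameters are supplied.
function Set-ExampleClusterWitness {
    [CmdletBinding(DefaultParameterSetName = 'FileShare')]
    param(
        [Parameter(Mandatory)] [string]$ClusterName,
        [Parameter(Mandatory, ParameterSetName = 'FileShare')] [string]$SharePath,
        [Parameter(Mandatory, ParameterSetName = 'Cloud')] [string]$AccountName,
        [Parameter(Mandatory, ParameterSetName = 'Cloud')] [string]$AccessKey
    )
    if ($PSCmdlet.ParameterSetName -eq 'Cloud') {
        # Cloud witness: uses an Azure storage account name and access key.
        Set-ClusterQuorum -Cluster $ClusterName -CloudWitness -AccountName $AccountName -AccessKey $AccessKey
    } else {
        # File share witness: uses an SMB share outside the cluster.
        Set-ClusterQuorum -Cluster $ClusterName -FileShareWitness $SharePath
    }
}

# Usage (placeholders):
# Set-ExampleClusterWitness -ClusterName "<ClusterName>" -SharePath "\\<FileServer>\<WitnessShare>"
# Set-ExampleClusterWitness -ClusterName "<ClusterName>" -AccountName "<StorageAccountName>" -AccessKey "<AccessKey>"
```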

For more information, see the failover clustering documentation on configuring quorum and on deploying a cloud witness.

Step 3.5: Enable Storage Spaces Direct

After creating the cluster, use the Enable-ClusterStorageSpacesDirect PowerShell cmdlet. It puts the storage system into Storage Spaces Direct mode and does the following automatically:

- Creates a pool: Creates a single large pool with a name like "S2D on Cluster1".

- Configures the Storage Spaces Direct caches: If more than one media (drive) type is available for Storage Spaces Direct to use, it enables the fastest as cache devices (read and write in most cases).

- Tiers: Creates two tiers as default tiers. One is called "Capacity" and the other "Performance". The cmdlet analyzes the devices and configures each tier with the mix of device types and resiliency.

From the management system, in a PowerShell command window opened with Administrator privileges, run the following command. The cluster name is the name of the cluster that you created in the previous steps. If this command is run locally on one of the nodes, the -CimSession parameter isn't necessary.

Enable-ClusterStorageSpacesDirect -CimSession <ClusterName>

To enable Storage Spaces Direct using the command above, you can also use the node name instead of the cluster name. Using the node name may be more reliable because of DNS replication delays that can occur with the newly created cluster name.

When this command finishes, which may take several minutes, the system is ready for volumes to be created.

Step 3.6: Create volumes

We recommend using the New-Volume cmdlet, as it provides the fastest and most straightforward experience. This single cmdlet automatically creates the virtual disk, partitions and formats it, creates the volume with a matching name, and adds it to cluster shared volumes, all in one easy step.

For more information, see Creating volumes in Storage Spaces Direct.
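As a rough illustration of that single step, the sketch below wraps New-Volume in a function. The function name, the volume name, the 1 TB size, the choice of CSVFS_ReFS, and the "S2D*" pool wildcard (based on the default pool name mentioned earlier) are all assumptions for the example; adjust them to your environment.

```powershell
# Hypothetical wrapper: create one cluster shared volume in the
# Storage Spaces Direct pool of the given cluster.
function New-ExampleS2DVolume {
    param(
        [Parameter(Mandatory)] [string]$ClusterName,
        [string]$VolumeName = "Volume1",
        [uint64]$Size = 1TB
    )
    # "S2D*" matches the pool that enabling Storage Spaces Direct creates
    # (a name like "S2D on Cluster1"); CSVFS_ReFS formats the volume with
    # ReFS and adds it to cluster shared volumes.
    New-Volume -CimSession $ClusterName -StoragePoolFriendlyName "S2D*" `
        -FriendlyName $VolumeName -FileSystem CSVFS_ReFS -Size $Size
}

# Usage (placeholder cluster name):
# New-ExampleS2DVolume -ClusterName "<ClusterName>" -VolumeName "Volume1" -Size 1TB
```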

Step 3.7: Optionally enable the CSV cache

You can optionally enable the cluster shared volume (CSV) cache to use system memory (RAM) as a block-level cache of read operations that aren't already cached by the Windows cache manager. This can improve performance for applications such as Hyper-V. The CSV cache can boost the performance of read requests and is also useful for Scale-Out File Server scenarios.

Enabling the CSV cache reduces the amount of memory available to run VMs on a hyperconverged cluster, so you'll have to balance storage performance against the memory available to VHDs.

To set the size of the CSV cache, open a PowerShell session on the management system with an account that has administrator permissions on the storage cluster, and then use this script, changing the $ClusterName and $CSVCacheSize variables as appropriate (this example sets a 2 GB CSV cache per server):

$ClusterName = "StorageSpacesDirect1"

$CSVCacheSize = 2048 #Size in MB

Write-Output "Setting the CSV cache..."

(Get-Cluster $ClusterName).BlockCacheSize = $CSVCacheSize

$CSVCurrentCacheSize = (Get-Cluster $ClusterName).BlockCacheSize

Write-Output "$ClusterName CSV cache size: $CSVCurrentCacheSize MB"

For more information, see Using the CSV in-memory read cache.

Step 3.8: Deploy virtual machines for hyperconverged deployments

If you're deploying a hyperconverged cluster, the last step is to provision virtual machines on the Storage Spaces Direct cluster.

The virtual machine's files should be stored in the system's CSV namespace (for example, C:\ClusterStorage\Volume1), just like clustered VMs on failover clusters.
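To make the CSV placement concrete, here is a minimal sketch that creates a VM whose configuration and virtual hard disk both live under the CSV namespace and then makes the VM highly available. The function name, the default sizes, and the folder layout are assumptions for illustration; it is meant to be run on a cluster node with the Hyper-V and Failover Clustering PowerShell modules available.

```powershell
# Hypothetical helper: create a generation 2 VM on a cluster shared
# volume and add it to the cluster as a clustered role.
function New-ExampleClusteredVM {
    param(
        [Parameter(Mandatory)] [string]$VMName,
        [string]$CsvPath = 'C:\ClusterStorage\Volume1',
        [int64]$MemoryBytes = 4GB,
        [uint64]$DiskBytes = 60GB
    )
    # Keep the virtual hard disk next to the VM configuration on the CSV.
    $vhdPath = Join-Path $CsvPath "$VMName\$VMName.vhdx"
    New-VM -Name $VMName -Generation 2 -MemoryStartupBytes $MemoryBytes `
        -Path $CsvPath -NewVHDPath $vhdPath -NewVHDSizeBytes $DiskBytes
    # Register the VM with the failover cluster.
    Add-ClusterVirtualMachineRole -VMName $VMName
}

# Usage (example name):
# New-ExampleClusteredVM -VMName "VM01"
```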

You can use in-box tools or other tools, such as System Center Virtual Machine Manager, to manage the storage and virtual machines.

Step 4: Deploy Scale-Out File Server for converged solutions

If you're deploying a converged solution, the next step is to create a Scale-Out File Server instance and set up some file shares. If you're deploying a hyperconverged cluster, you're finished and don't need this section.

Step 4.1: Create the Scale-Out File Server role

The next step in setting up the cluster services for your file server is creating the clustered file server role, which is when you create the Scale-Out File Server instance on which your continuously available file shares are hosted.

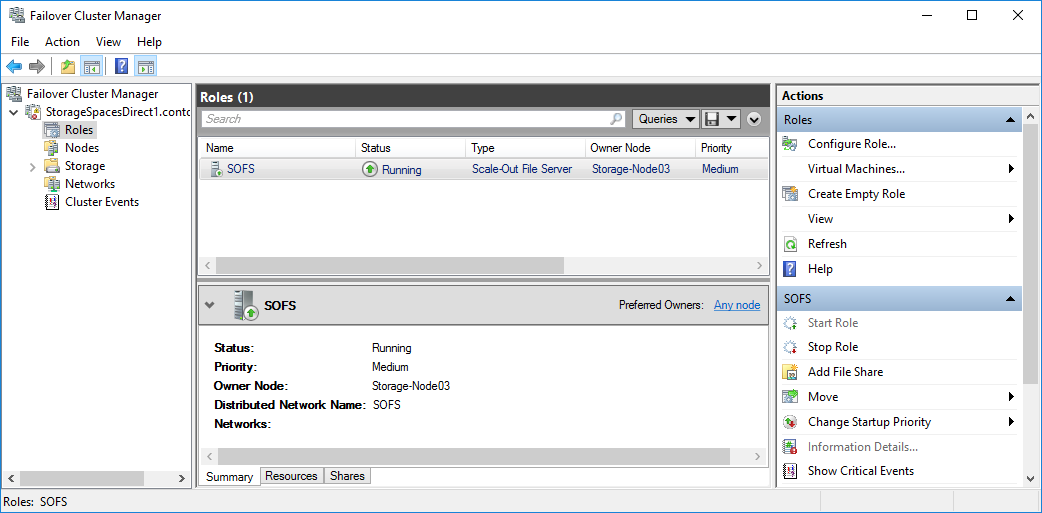

To create a Scale-Out File Server role by using Failover Cluster Manager

In Failover Cluster Manager, select the cluster, go to Roles, and then click Configure Role....

The High Availability Wizard appears.

On the Select Role page, click File Server.

On the File Server Type page, click Scale-Out File Server for application data.

On the Client Access Point page, type a name for the Scale-Out File Server.

Verify that the role was successfully set up by going to Roles and confirming that the Status column shows Running next to the clustered file server role you created, as shown in Figure 1.

Figure 1 Failover Cluster Manager showing the Scale-Out File Server with the Running status

Note

After creating the clustered role, there might be some network propagation delays that could prevent you from creating file shares on it for a few minutes, or potentially longer.

To create a Scale-Out File Server role by using Windows PowerShell

In a Windows PowerShell session that's connected to the file server cluster, enter the following command to create the Scale-Out File Server role, changing FSCLUSTER to match the name of your cluster and SOFS to match the name you want to give the Scale-Out File Server role:

Add-ClusterScaleOutFileServerRole -Name SOFS -Cluster FSCLUSTER

Note

After creating the clustered role, there might be some network propagation delays that could prevent you from creating file shares on it for a few minutes, or potentially longer. If the SOFS role fails immediately and won't start, it might be because the cluster's computer object doesn't have permission to create a computer account for the SOFS role. For help with that, see this blog post: Scale-Out File Server Role Fails To Start With Event IDs 1205, 1069, and 1194.

Step 4.2: Create file shares

After you've created your virtual disks and added them to CSVs, it's time to create file shares on them: one file share per CSV per virtual disk. System Center Virtual Machine Manager (VMM) is probably the handiest way to do this because it handles permissions for you, but if you don't have it in your environment, you can use Windows PowerShell to partially automate the deployment.

Use the scripts included in the SMB Share Configuration for Hyper-V Workloads script, which partially automates the process of creating groups and shares. It's written for Hyper-V workloads. If you're deploying other workloads, you might have to modify the settings or perform additional steps after you create the shares. For example, if you're using Microsoft SQL Server, the SQL Server service account must be granted full control on the share and the file system.

Note

You'll have to update the group membership when you add cluster nodes unless you use System Center Virtual Machine Manager to create your shares.

To create file shares by using PowerShell scripts, do the following:

Download the scripts included in SMB Share Configuration for Hyper-V Workloads to one of the nodes of the file server cluster.

Open a Windows PowerShell session with Domain Administrator credentials on the management system, and then use the following script to create an Active Directory group for the Hyper-V computer objects, changing the values for the variables as appropriate for your environment:

# Replace the values of these variables

$HyperVClusterName = "Compute01"

$HyperVObjectADGroupSamName = "Hyper-VServerComputerAccounts" <#No spaces#>

$ScriptFolder = "C:\Scripts\SetupSMBSharesWithHyperV"

# Start of script itself

CD $ScriptFolder

.\ADGroupSetup.ps1 -HyperVObjectADGroupSamName $HyperVObjectADGroupSamName -HyperVClusterName $HyperVClusterName

Open a Windows PowerShell session with Administrator credentials on one of the storage nodes, and then use the following script to create shares for each CSV and grant administrative permissions for the shares to the Domain Admins group and the compute cluster.

# Replace the values of these variables

$StorageClusterName = "StorageSpacesDirect1"

$HyperVObjectADGroupSamName = "Hyper-VServerComputerAccounts" <#No spaces#>

$SOFSName = "SOFS"

$SharePrefix = "Share"

$ScriptFolder = "C:\Scripts\SetupSMBSharesWithHyperV"

# Start of the script itself

CD $ScriptFolder

Get-ClusterSharedVolume -Cluster $StorageClusterName | ForEach-Object {

    $ShareName = $SharePrefix + $_.SharedVolumeInfo.friendlyvolumename.trimstart("C:\ClusterStorage\Volume")

    Write-host "Creating share $ShareName on "$_.name "on Volume: " $_.SharedVolumeInfo.friendlyvolumename

    .\FileShareSetup.ps1 -HyperVClusterName $StorageClusterName -CSVVolumeNumber $_.SharedVolumeInfo.friendlyvolumename.trimstart("C:\ClusterStorage\Volume") -ScaleOutFSName $SOFSName -ShareName $ShareName -HyperVObjectADGroupSamName $HyperVObjectADGroupSamName

}
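One detail of the script above is worth knowing: TrimStart treats its string argument as a set of characters to strip, not as a literal prefix. That works for default names such as C:\ClusterStorage\Volume1, because digits aren't in the set, but a renamed volume whose suffix starts with letters from that set could lose more than the prefix. The following sketch shows a prefix-based alternative; the helper name Get-CsvVolumeSuffix is hypothetical and isn't part of the downloaded scripts.

```powershell
# Hypothetical helper: remove the literal "C:\ClusterStorage\Volume"
# prefix (case-insensitive) and return what follows it.
function Get-CsvVolumeSuffix {
    param([Parameter(Mandatory)] [string]$FriendlyVolumeName)
    $prefix = 'C:\ClusterStorage\Volume'
    if ($FriendlyVolumeName.StartsWith($prefix, [System.StringComparison]::OrdinalIgnoreCase)) {
        return $FriendlyVolumeName.Substring($prefix.Length)
    }
    # Leave names that don't start with the prefix unchanged.
    return $FriendlyVolumeName
}

# Usage inside the ForEach-Object loop:
# $suffix = Get-CsvVolumeSuffix -FriendlyVolumeName $_.SharedVolumeInfo.FriendlyVolumeName
```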

Step 4.3: Enable Kerberos constrained delegation

From one of the storage cluster nodes, use the KCDSetup.ps1 script included in SMB Share Configuration for Hyper-V Workloads to set up Kerberos constrained delegation for remote scenario management and increased Live Migration security. Here's a small wrapper for the script:

$HyperVClusterName = "Compute01"

$ScaleOutFSName = "SOFS"

$ScriptFolder = "C:\Scripts\SetupSMBSharesWithHyperV"

CD $ScriptFolder

.\KCDSetup.ps1 -HyperVClusterName $HyperVClusterName -ScaleOutFSName $ScaleOutFSName -EnableLM