Manage network policies for serverless egress control

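Important

This feature is in Public Preview.

This document explains how to configure and manage network policies that control outbound network connections from serverless workloads in Azure Databricks.

Permission to manage network policies is restricted to account admins. See the Azure Databricks administration overview.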

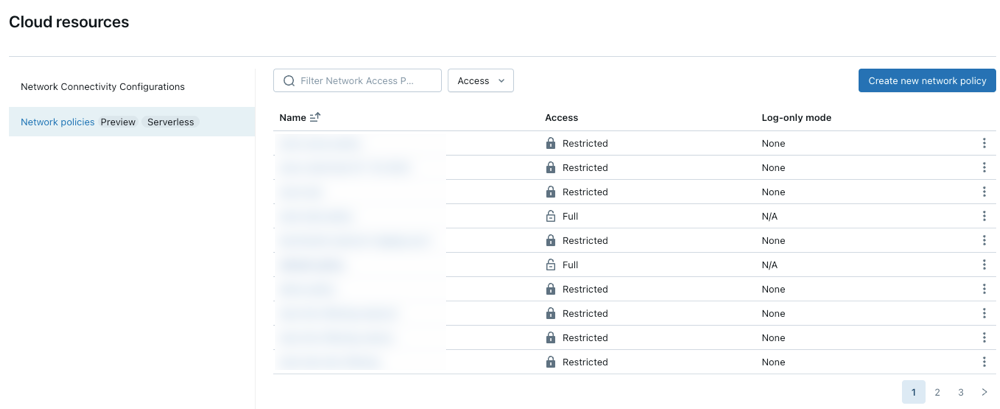

Accessing network policies

To create, view, and update network policies in your account:

- In the account console, click Cloud resources.

- Click the Network tab.

Network policy list

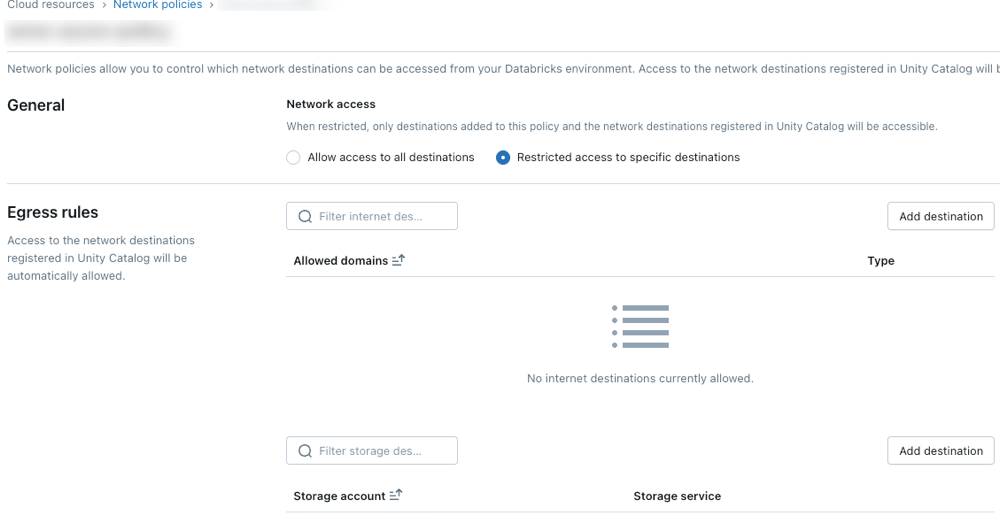

Create a new network policy

Click Create new network policy.

Select a network access mode:

- Full access: Outbound internet access is unrestricted.

- Restricted access: Outbound access is limited to the specified destinations. For details, see the Network policy overview.

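Configure a network policy

The following steps outline the optional settings for restricted access mode.

Egress rules

Destinations configured through Unity Catalog locations or connections are automatically allowed by the policy.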

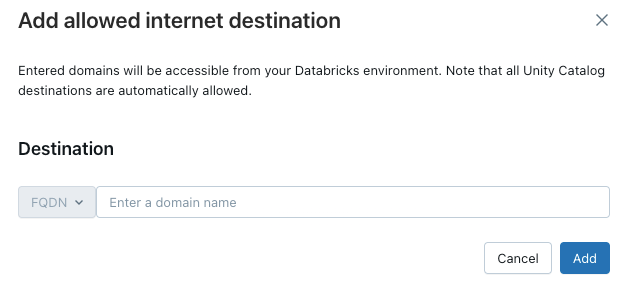

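To grant serverless compute access to additional domains, click Add destination above the Allowed domains list.

The FQDN filter allows access to all domains that share the same IP address. Model serving endpoints with provisioned throughput block internet access when network access is set to restricted. However, granular control through FQDN filtering is not supported for these endpoints.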

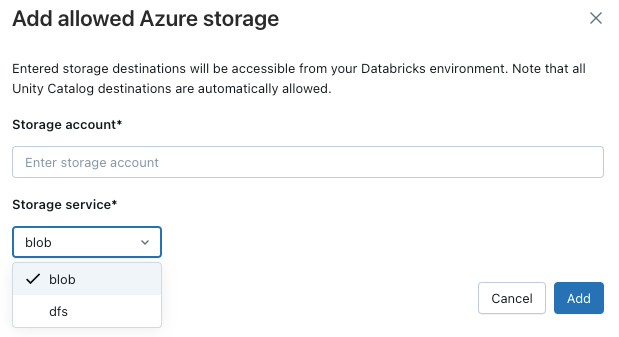

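To allow the workspace to access additional Azure storage accounts, click the Add destination button above the Allowed storage accounts list.

Note

The maximum number of supported destinations is 2000. This total includes all Unity Catalog locations and connections accessible from the workspace, as well as destinations explicitly added to the policy.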

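Policy enforcement

Log-only (dry-run) mode lets you test your policy configuration and monitor outbound connections without disrupting access to resources. When dry-run mode is enabled, requests that violate the policy are logged but not blocked. You can select from the following options:

- Databricks SQL: Databricks SQL warehouses operate in dry-run mode.

- AI model serving: Model serving endpoints operate in dry-run mode.

- All products: All Azure Databricks services operate in dry-run mode. This option overrides all other selections.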

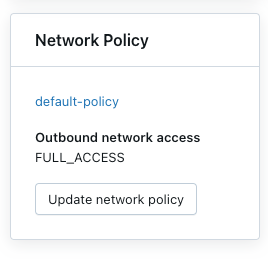
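The interaction between restricted access, the allow list, and dry-run mode can be pictured with a small conceptual model. The sketch below is not how Azure Databricks implements enforcement: the `Policy` class, the `evaluate` function, and the product labels are hypothetical names chosen for illustration. Only the `DROP` and `DRY_RUN_DENIAL` values come from this document, where they appear as `access_type` values in the denial logs.

```python
from dataclasses import dataclass, field

ALL_PRODUCTS = "all_products"  # hypothetical label for the "All products" option


@dataclass
class Policy:
    restricted: bool  # True = restricted access, False = full access
    allowed_domains: set = field(default_factory=set)
    dry_run_products: set = field(default_factory=set)


def evaluate(policy: Policy, product: str, domain: str) -> str:
    """Return ALLOW, DROP, or DRY_RUN_DENIAL for one outbound request."""
    if not policy.restricted or domain in policy.allowed_domains:
        return "ALLOW"
    # "All products" overrides the per-product selections.
    dry_run = (ALL_PRODUCTS in policy.dry_run_products
               or product in policy.dry_run_products)
    # In dry-run mode the violation is logged but the request is not blocked.
    return "DRY_RUN_DENIAL" if dry_run else "DROP"


policy = Policy(restricted=True,
                allowed_domains={"pypi.org"},
                dry_run_products={"databricks_sql"})
print(evaluate(policy, "databricks_sql", "example.com"))
print(evaluate(policy, "model_serving", "example.com"))
print(evaluate(policy, "model_serving", "pypi.org"))
```

With this example policy, a Databricks SQL request to a domain outside the allow list is only logged, the same request from model serving is dropped, and a request to an allowed domain passes.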

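Update the default policy

Each Azure Databricks account includes a default policy. The default policy is associated with all workspaces that have no explicit network policy assigned, including newly created workspaces. You can modify this policy, but you cannot delete it. The default policy applies only to workspaces on the Premium tier or above.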

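Associate a network policy with workspaces

If you update the default policy with additional configuration, the changes are automatically applied to workspaces that do not have an existing network policy. The workspace must be on the Premium tier.

To associate a workspace with a different policy:

- Select a workspace.

- Under Network policy, click Update network policy.

- Select the desired network policy from the list.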

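Apply network policy changes

Most network configuration updates propagate automatically to serverless compute within 10 minutes. This includes:

- Adding a new Unity Catalog external location or connection.

- Attaching the workspace to a different metastore.

- Changing the allowed storage or internet destinations.

Note

If you change the internet access mode or the dry-run mode setting, you must restart your compute.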

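Restart or redeploy serverless workloads

A restart or redeployment is required only when you switch the internet access mode or change the dry-run mode.

To determine the appropriate restart procedure, refer to the following list by product:

- Databricks ML Serving: Redeploy the ML serving endpoint. See Create custom model serving endpoints.

- Delta Live Tables: Stop and then restart the running Delta Live Tables pipeline. See Run an update on a Delta Live Tables pipeline.

- Serverless SQL warehouse: Stop and restart the SQL warehouse. See Manage a SQL warehouse.

- Workflows: Network policy changes are applied automatically when a new job run is triggered or an existing job run is restarted.

- Notebooks:

  - If the notebook does not interact with Spark, you can terminate the current serverless cluster and attach a new one to refresh the network configuration applied to the notebook.

  - If the notebook interacts with Spark, the serverless resources refresh and detect the change automatically. Switching the access mode or the dry-run mode can take up to 24 hours to apply. Other changes can take up to 10 minutes.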

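Verify network policy enforcement

You can verify that a network policy is enforced correctly by attempting to access restricted resources from different serverless workloads. The verification process varies by serverless product.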
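As a quick first check from a serverless notebook, a small probe along the following lines can show whether a given URL is reachable. This is a minimal sketch using only the Python standard library. The `probe` helper and its `fetch` parameter are hypothetical names, and the sketch assumes that a blocked destination surfaces as a connection error, which this document does not specify. In dry-run mode, requests are logged rather than blocked, so the probe reports them as reachable.

```python
import urllib.error
import urllib.request


def probe(url, fetch=None, timeout=10):
    """Try one outbound request and report whether it got through.

    `fetch` is injectable so the function can be exercised without a network.
    """
    if fetch is None:
        fetch = lambda u: urllib.request.urlopen(u, timeout=timeout).status
    try:
        status = fetch(url)
    except urllib.error.HTTPError as e:
        # The server answered, so the connection itself was not blocked.
        return f"reachable (HTTP {e.code})"
    except (urllib.error.URLError, OSError) as e:
        # Connection failures: assumed here to be how a blocked destination appears.
        return f"unreachable ({e})"
    return f"reachable (HTTP {status})"


# Example use in a notebook cell:
# print(probe("https://www.google.com"))
```

Running the probe once against an allowed destination and once against a destination outside the policy gives a quick comparison before moving on to the product-specific checks.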

Validate with Delta Live Tables

- Create a Python notebook. You can use the example notebook provided in the Delta Live Tables Wikipedia Python tutorial.

- Create a Delta Live Tables pipeline.

  - In the workspace sidebar, click Pipelines under Data Engineering.

  - Click Create pipeline.

  - Configure the pipeline with the following settings:

    - Pipeline mode: Serverless

    - Source code: Select the notebook you created.

    - Storage options: Unity Catalog. Select the desired catalog and schema.

  - Click Create.

- Run the Delta Live Tables pipeline.

  - On the pipeline page, click Start.

  - Wait for the pipeline to complete.

- Verify the results.

  - Trusted destination: The pipeline runs successfully and writes data to the destination.

  - Untrusted destination: The pipeline should fail with an error indicating that network access is blocked.

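Validate with Databricks SQL

- Create a SQL warehouse. For instructions, see Create a SQL warehouse.

- In the SQL editor, run a test query that attempts to access a resource controlled by the network policy.

- Verify the results.

  - Trusted destination: The query should succeed.

  - Untrusted destination: The query fails with a network access error.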

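Validate with model serving

Create a test model

- In a Python notebook, create a model that attempts to access a public internet resource, for example by downloading a file or making an API request.

- Run this notebook to generate the model in your test workspace. For example: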

    import mlflow
    import mlflow.pyfunc
    import requests


    class DummyModel(mlflow.pyfunc.PythonModel):
        def load_context(self, context):
            # Nothing to load for this test model
            pass

        def predict(self, _, model_input):
            # Read the target URL from the "host" column of the first input row
            first_row = model_input.iloc[0]
            try:
                response = requests.get(first_row['host'])
            except requests.exceptions.RequestException as e:
                # Return the error details as text
                return f"Error: An error occurred - {e}"
            return [response.status_code]


    # Log the model and register it as "internet-http-access"
    with mlflow.start_run(run_name='internet-access-model'):
        wrappedModel = DummyModel()
        mlflow.pyfunc.log_model(
            artifact_path="internet_access_ml_model",
            python_model=wrappedModel,
            registered_model_name="internet-http-access",
        )

Create a serving endpoint

- In the workspace navigation, select Machine Learning.

- Click the Serving tab.

- Click Create serving endpoint.

- Configure the endpoint with the following settings:

  - Serving endpoint name: Provide a descriptive name.

  - Entity details: Select Model registry model.

  - Model: Select the model you created in the previous step.

- Click Confirm.

- Wait for the serving endpoint to reach the Ready state.

Query the endpoint

- Use the Query endpoint option on the serving endpoint page to send a test request.

    {"dataframe_records": [{"host": "https://www.google.com"}]}

Verify the results

- Internet access enabled: The query succeeds.

- Internet access restricted: The query fails with a network access error.
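The same test request can also be sent from a script instead of the Query endpoint option in the UI. The sketch below builds a POST request to the endpoint's `invocations` path using only the standard library. The `build_invocation` helper is a hypothetical name, and the workspace URL, endpoint name, and token are placeholders to replace with your own values.

```python
import json
import urllib.request


def build_invocation(workspace_url, endpoint_name, token, host_to_test):
    """Build (but do not send) a scoring request for the test model."""
    payload = {"dataframe_records": [{"host": host_to_test}]}
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/serving-endpoints/{endpoint_name}/invocations",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_invocation(
    "https://adb-0000000000000000.0.azuredatabricks.net",  # placeholder workspace URL
    "internet-access-endpoint",                            # placeholder endpoint name
    "<personal-access-token>",                             # placeholder token
    "https://www.google.com",
)
print(req.full_url)
# To send it: urllib.request.urlopen(req, timeout=60).read()
```

The response body then carries either the HTTP status code returned by the test model or the error text from its `except` branch, which corresponds to the two outcomes listed above.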

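Update a network policy

You can update a network policy at any time after you create it. To update a network policy:

- On the network policy details page in the account console, modify the policy:

  - Change the network access mode.

  - Enable or disable dry-run mode for specific services.

  - Add or remove FQDN or storage destinations.

- Click Update.

- To confirm that the updates are applied to existing workloads, see Apply network policy changes.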

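Check denial logs

Denial logs are stored in the system.access.outbound_network table in Unity Catalog. These logs record each outbound network request that is denied. To access denial logs, make sure the access schema is enabled in your Unity Catalog metastore. See Enable system table schemas.

To view denial events, use a SQL query like the following. If dry-run logging is enabled, the query returns both denial logs and dry-run logs, which you can distinguish using the access_type column. Denial logs have the value DROP, and dry-run logs have the value DRY_RUN_DENIAL.

The following example retrieves logs from the past 2 hours: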

    SELECT * FROM system.access.outbound_network
    WHERE event_time >= current_timestamp() - INTERVAL 2 HOUR
    ORDER BY event_time DESC

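When you connect to external generative AI models through Mosaic AI Gateway, denials are not logged in the outbound network system table. See Mosaic AI Gateway.

Note

There can be some latency between the time of access and the time the denial log appears.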
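When you review logs repeatedly, for example while tuning a policy in dry-run mode, it can help to parameterize the time window and filter on `access_type`. The helper below is a hypothetical sketch that only builds the query text. It uses the table and the two columns named in this section (`event_time`, `access_type`) and makes no assumptions about other columns.

```python
def denial_log_query(hours=2, access_type=None):
    """Build a query over system.access.outbound_network for the last `hours` hours."""
    hours = int(hours)  # keep the interval numeric
    if access_type not in (None, "DROP", "DRY_RUN_DENIAL"):
        raise ValueError(f"unexpected access_type: {access_type!r}")
    where = [f"event_time >= current_timestamp() - interval {hours} hour"]
    if access_type is not None:
        where.append(f"access_type = '{access_type}'")
    return (
        "SELECT * FROM system.access.outbound_network\n"
        f"WHERE {' AND '.join(where)}\n"
        "ORDER BY event_time DESC"
    )


print(denial_log_query(hours=24, access_type="DRY_RUN_DENIAL"))
# In a Databricks notebook: display(spark.sql(denial_log_query(24, "DROP")))
```

Filtering on `DRY_RUN_DENIAL` lists the requests that would be blocked once dry-run mode is turned off, which is a practical way to find destinations that still need to be added to the policy.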

Limitations

- Configuration: This feature can be configured only through the account console. API support is not yet available.

- Artifact upload size: When you use MLflow's internal Databricks Filesystem with the dbfs:/databricks/mlflow-tracking/<experiment_id>/<run_id>/artifacts/<artifactPath> format, artifact uploads are limited to 5 GB for the log_artifact, log_artifacts, and log_model APIs.

- Supported Unity Catalog connections: The following connection types are supported: MySQL, PostgreSQL, Snowflake, Redshift, Azure Synapse, SQL Server, Salesforce, BigQuery, Netsuite, Workday RaaS, Hive MetaStore, and Salesforce Data Cloud.

- Model serving: Egress control does not apply when building images for model serving.

- Azure Storage access: Only the Azure Blob Filesystem driver for Azure Data Lake Storage is supported. Access using the Azure Blob Storage driver or the WASB driver is not supported.
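To spot storage paths affected by the driver limitation, a simple scheme check can help. The sketch below assumes that the Azure Blob Filesystem driver corresponds to the `abfs` and `abfss` URI schemes and the WASB driver to `wasb` and `wasbs`. The `check_storage_uri` helper and the example paths are hypothetical.

```python
from urllib.parse import urlparse

SUPPORTED_SCHEMES = {"abfs", "abfss"}    # Azure Blob Filesystem (ABFS) driver
UNSUPPORTED_SCHEMES = {"wasb", "wasbs"}  # WASB driver


def check_storage_uri(uri):
    """Classify a storage URI by the driver its scheme implies."""
    scheme = urlparse(uri).scheme.lower()
    if scheme in SUPPORTED_SCHEMES:
        return "supported"
    if scheme in UNSUPPORTED_SCHEMES:
        return "unsupported"
    return "unknown"


for uri in [
    "abfss://container@account.dfs.core.windows.net/data",   # placeholder path
    "wasbs://container@account.blob.core.windows.net/data",  # placeholder path
]:
    print(uri, "->", check_storage_uri(uri))
```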