Read data shared using Delta Sharing open sharing (for recipients)

This article describes how to read data that has been shared with you using the Delta Sharing open sharing protocol. It includes instructions for reading shared data using Databricks, Apache Spark, pandas, Power BI, and Tableau.

In open sharing, you use a credential file that was shared with a member of your team by the data provider to gain secure read access to shared data. Access persists as long as the credential is valid and the provider continues to share the data. Providers manage credential expiration and rotation. Updates to the data are available to you in near real time. You can read and make copies of the shared data, but you can’t modify the source data.

Note

If data has been shared with you using Databricks-to-Databricks Delta Sharing, you don’t need a credential file to access data, and this article doesn’t apply to you. For instructions, see Read data shared using Databricks-to-Databricks Delta Sharing (for recipients).

The sections that follow describe how to use Azure Databricks, Apache Spark, pandas, and Power BI to access and read shared data using the credential file. For a full list of Delta Sharing connectors and information about how to use them, see the Delta Sharing open source documentation. If you run into trouble accessing the shared data, contact the data provider.

Note

Partner integrations are, unless otherwise noted, provided by the third parties and you must have an account with the appropriate provider for the use of their products and services. While Databricks does its best to keep this content up to date, we make no representation regarding the integrations or the accuracy of the content on the partner integration pages. Reach out to the appropriate providers regarding the integrations.

Before you begin

A member of your team must download the credential file shared by the data provider. See Get access in the open sharing model.

They should use a secure channel to share that file or file location with you.

Azure Databricks: Read shared data using open sharing connectors

This section describes how to import a provider using the provider’s credential file in Catalog Explorer or in a Python notebook. The notebook instructions also describe how to use a notebook to list and read shared tables. To read shared data imported using Catalog Explorer, see Read data shared using Databricks-to-Databricks Delta Sharing (for recipients).

Note

If the data provider is using Databricks-to-Databricks sharing and did not share a credential file with you, you must access the data using Unity Catalog. For instructions, see Read data shared using Databricks-to-Databricks Delta Sharing (for recipients).

Catalog Explorer

Permissions required: A metastore admin or a user who has both the CREATE PROVIDER and USE PROVIDER privileges for your Unity Catalog metastore.

In your Azure Databricks workspace, click

Catalog to open Catalog Explorer.

Catalog to open Catalog Explorer.At the top of the Catalog pane, click the

gear icon and select Delta Sharing.

gear icon and select Delta Sharing.Alternatively, from the Quick access page, click the Delta Sharing > button.

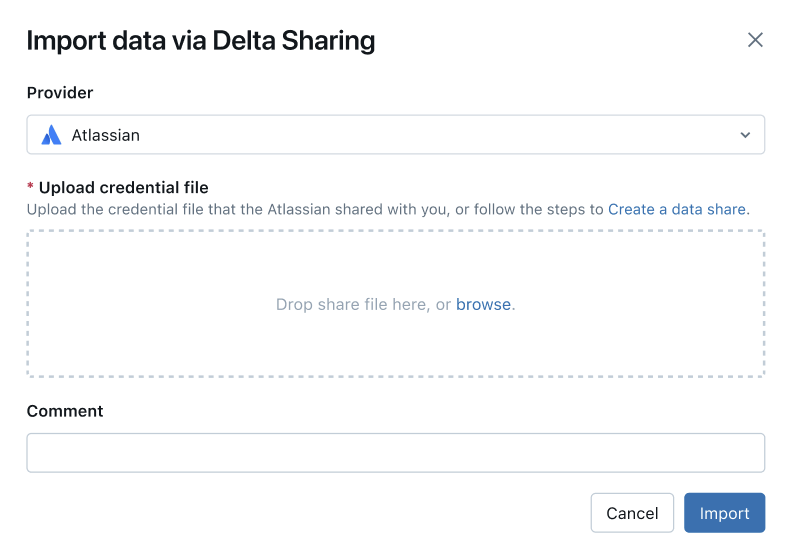

On the Shared with me tab, at the top right corner of the page click Import provider directly.

Select a provider from the drop-down menu or enter a name.

Upload the credential file that the provider shared with you. You can read the specific instructions for different providers:

(Optional) Enter a comment.

Click Import.

Create catalogs from the shared data.

The process is the same as in the Databricks-to-Databricks sharing model.

Grant access to the catalogs.

See How do I make shared data available to my team? and Manage permissions for the schemas, tables, and volumes in a Delta Sharing catalog.

Read the shared data objects just like you would any data object that is registered in Unity Catalog.

For details and examples, see Access data in a shared table or volume.

Python

This section describes how to use an open sharing connector to access shared data using a notebook in your Azure Databricks workspace. You or another member of your team store the credential file in Azure Databricks, then you use it to authenticate to the data provider’s Azure Databricks account and read the data that the data provider shared with you.

First use a Python notebook in Azure Databricks to store the credential file so that users on your team can access shared data.

In a text editor, open the credential file.

In your Azure Databricks workspace, click New > Notebook.

- Enter a name.

- Set the default language for the notebook to Python.

- Select a cluster to attach to the notebook.

- Click Create.

The notebook opens in the notebook editor.

To use Python or pandas to access the shared data, install the delta-sharing Python connector. In the notebook editor, paste the following command:

%sh pip install delta-sharingRun the cell.

The

delta-sharingPython library gets installed in the cluster if it isn’t already installed.In a new cell, paste the following command, which uploads the contents of the credential file to a folder in DBFS. Replace the variables as follows:

<dbfs-path>: the path to the folder where you want to save the credential file<credential-file-contents>: the contents of the credential file. This is not a path to the file, but the copied contents of the file.The credential file contains JSON that defines three fields:

shareCredentialsVersion,endpoint, andbearerToken.%scala dbutils.fs.put("<dbfs-path>/config.share",""" <credential-file-contents> """)

Run the cell.

After the credential file is uploaded, you can delete this cell. All workspace users can read the credential file from DBFS, and the credential file is available in DBFS on all clusters and SQL warehouses in your workspace. To delete the cell, click x in the cell actions menu

at the far right.

at the far right.

Now that the credential file is stored, you can use a notebook to list and read shared tables

Using Python, list the tables in the share.

In a new cell, paste the following command. Replace

<dbfs-path>with the path that was created in above.When the code runs, Python reads the credential file from DBFS on the cluster. Access data stored in DBFS at the path

/dbfs/.import delta_sharing client = delta_sharing.SharingClient(f"/dbfs/<dbfs-path>/config.share") client.list_all_tables()Run the cell.

The result is an array of tables, along with metadata for each table. The following output shows two tables:

Out[10]: [Table(name='example_table', share='example_share_0', schema='default'), Table(name='other_example_table', share='example_share_0', schema='default')]If the output is empty or doesn’t contain the tables you expect, contact the data provider.

Query a shared table.

Using Scala:

In a new cell, paste the following command. When the code runs, the credential file is read from DBFS through the JVM.

Replace the variables as follows:

<profile-path>: the DBFS path of the credential file. For example,/<dbfs-path>/config.share.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.

%scala spark.read.format("deltaSharing") .load("<profile-path>#<share-name>.<schema-name>.<table-name>").limit(10);Run the cell. Each time you load the shared table, you see fresh data from the source.

Using SQL:

To query the data using SQL, you create a local table in the workspace from the shared table, then query the local table. The shared data is not stored or cached in the local table. Each time you query the local table, you see the current state of the shared data.

In a new cell, paste the following command.

Replace the variables as follows:

<local-table-name>: the name of the local table.<profile-path>: the location of the credential file.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.

%sql DROP TABLE IF EXISTS table_name; CREATE TABLE <local-table-name> USING deltaSharing LOCATION "<profile-path>#<share-name>.<schema-name>.<table-name>"; SELECT * FROM <local-table-name> LIMIT 10;When you run the command, the shared data is queried directly. As a test, the table is queried and the first 10 results are returned.

If the output is empty or doesn’t contain the data you expect, contact the data provider.

Apache Spark: Read shared data

Follow these steps to access shared data using Spark 3.x or above.

These instructions assume that you have access to the credential file that was shared by the data provider. See Get access in the open sharing model.

Install the Delta Sharing Python and Spark connectors

To access metadata related to the shared data, such as the list of tables shared with you, do the following. This example uses Python.

Install the delta-sharing Python connector:

pip install delta-sharingInstall the Apache Spark connector.

List shared tables using Spark

List the tables in the share. In the following example, replace <profile-path> with the location of the credential file.

import delta_sharing

client = delta_sharing.SharingClient(f"<profile-path>/config.share")

client.list_all_tables()

The result is an array of tables, along with metadata for each table. The following output shows two tables:

Out[10]: [Table(name='example_table', share='example_share_0', schema='default'), Table(name='other_example_table', share='example_share_0', schema='default')]

If the output is empty or doesn’t contain the tables you expect, contact the data provider.

Access shared data using Spark

Run the following, replacing these variables:

<profile-path>: the location of the credential file.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.<version-as-of>: optional. The version of the table to load the data. Only works if the data provider shares the history of the table. Requiresdelta-sharing-spark0.5.0 or above.<timestamp-as-of>: optional. Load the data at the version before or at the given timestamp. Only works if the data provider shares the history of the table. Requiresdelta-sharing-spark0.6.0 or above.

Python

delta_sharing.load_as_spark(f"<profile-path>#<share-name>.<schema-name>.<table-name>", version=<version-as-of>)

spark.read.format("deltaSharing")\

.option("versionAsOf", <version-as-of>)\

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")\

.limit(10))

delta_sharing.load_as_spark(f"<profile-path>#<share-name>.<schema-name>.<table-name>", timestamp=<timestamp-as-of>)

spark.read.format("deltaSharing")\

.option("timestampAsOf", <timestamp-as-of>)\

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")\

.limit(10))

Scala

Run the following, replacing these variables:

<profile-path>: the location of the credential file.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.<version-as-of>: optional. The version of the table to load the data. Only works if the data provider shares the history of the table. Requiresdelta-sharing-spark0.5.0 or above.<timestamp-as-of>: optional. Load the data at the version before or at the given timestamp. Only works if the data provider shares the history of the table. Requiresdelta-sharing-spark0.6.0 or above.

spark.read.format("deltaSharing")

.option("versionAsOf", <version-as-of>)

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

.limit(10)

spark.read.format("deltaSharing")

.option("timestampAsOf", <version-as-of>)

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

.limit(10)

Access shared change data feed using Spark

If the table history has been shared with you and change data feed (CDF) is enabled on the source table, you can access the change data feed by running the following, replacing these variables. Requires delta-sharing-spark 0.5.0 or above.

One and only one start parameter must be provided.

<profile-path>: the location of the credential file.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.<starting-version>: optional. The starting version of the query, inclusive. Specify as a Long.<ending-version>: optional. The ending version of the query, inclusive. If the ending version is not provided, the API uses the latest table version.<starting-timestamp>: optional. The starting timestamp of the query, this is converted to a version created greater or equal to this timestamp. Specify as a string in the formatyyyy-mm-dd hh:mm:ss[.fffffffff].<ending-timestamp>: optional. The ending timestamp of the query, this is converted to a version created earlier or equal to this timestamp. Specify as a string in the formatyyyy-mm-dd hh:mm:ss[.fffffffff]

Python

delta_sharing.load_table_changes_as_spark(f"<profile-path>#<share-name>.<schema-name>.<table-name>",

starting_version=<starting-version>,

ending_version=<ending-version>)

delta_sharing.load_table_changes_as_spark(f"<profile-path>#<share-name>.<schema-name>.<table-name>",

starting_timestamp=<starting-timestamp>,

ending_timestamp=<ending-timestamp>)

spark.read.format("deltaSharing").option("readChangeFeed", "true")\

.option("statingVersion", <starting-version>)\

.option("endingVersion", <ending-version>)\

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

spark.read.format("deltaSharing").option("readChangeFeed", "true")\

.option("startingTimestamp", <starting-timestamp>)\

.option("endingTimestamp", <ending-timestamp>)\

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

Scala

spark.read.format("deltaSharing").option("readChangeFeed", "true")

.option("statingVersion", <starting-version>)

.option("endingVersion", <ending-version>)

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

spark.read.format("deltaSharing").option("readChangeFeed", "true")

.option("startingTimestamp", <starting-timestamp>)

.option("endingTimestamp", <ending-timestamp>)

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

If the output is empty or doesn’t contain the data you expect, contact the data provider.

Access a shared table using Spark Structured Streaming

If the table history is shared with you, you can stream read the shared data. Requires delta-sharing-spark 0.6.0 or above.

Supported options:

ignoreDeletes: Ignore transactions that delete data.ignoreChanges: Re-process updates if files were rewritten in the source table due to a data changing operation such asUPDATE,MERGE INTO,DELETE(within partitions), orOVERWRITE. Unchanged rows can still be emitted. Therefore your downstream consumers should be able to handle duplicates. Deletes are not propagated downstream.ignoreChangessubsumesignoreDeletes. Therefore if you useignoreChanges, your stream will not be disrupted by either deletions or updates to the source table.startingVersion: The shared table version to start from. All table changes starting from this version (inclusive) will be read by the streaming source.startingTimestamp: The timestamp to start from. All table changes committed at or after the timestamp (inclusive) will be read by the streaming source. Example:"2023-01-01 00:00:00.0".maxFilesPerTrigger: The number of new files to be considered in every micro-batch.maxBytesPerTrigger: The amount of data that gets processed in each micro-batch. This option sets a “soft max”, meaning that a batch processes approximately this amount of data and may process more than the limit in order to make the streaming query move forward in cases when the smallest input unit is larger than this limit.readChangeFeed: Stream read the change data feed of the shared table.

Unsupported options:

Trigger.availableNow

Sample Structured Streaming queries

Scala

spark.readStream.format("deltaSharing")

.option("startingVersion", 0)

.option("ignoreChanges", true)

.option("maxFilesPerTrigger", 10)

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

Python

spark.readStream.format("deltaSharing")\

.option("startingVersion", 0)\

.option("ignoreDeletes", true)\

.option("maxBytesPerTrigger", 10000)\

.load("<profile-path>#<share-name>.<schema-name>.<table-name>")

See also Streaming on Azure Databricks.

Read tables with deletion vectors or column mapping enabled

Important

This feature is in Public Preview.

Deletion vectors are a storage optimization feature that your provider can enable on shared Delta tables. See What are deletion vectors?.

Azure Databricks also supports column mapping for Delta tables. See Rename and drop columns with Delta Lake column mapping.

If your provider shared a table with deletion vectors or column mapping enabled, you can read the table using compute that is running delta-sharing-spark 3.1 or above. If you are using Databricks clusters, you can perform batch reads using a cluster running Databricks Runtime 14.1 or above. CDF and streaming queries require Databricks Runtime 14.2 or above.

You can perform batch queries as-is, because they can automatically resolve responseFormat based on the table features of the shared table.

To read a change data feed (CDF) or to perform streaming queries on shared tables with deletion vectors or column mapping enabled, you must set the additional option responseFormat=delta.

The following examples show batch, CDF, and streaming queries:

import org.apache.spark.sql.SparkSession

val spark = SparkSession

.builder()

.appName("...")

.master("...")

.config("spark.sql.extensions", "io.delta.sql.DeltaSparkSessionExtension")

.config("spark.sql.catalog.spark_catalog", "org.apache.spark.sql.delta.catalog.DeltaCatalog")

.getOrCreate()

val tablePath = "<profile-file-path>#<share-name>.<schema-name>.<table-name>"

// Batch query

spark.read.format("deltaSharing").load(tablePath)

// CDF query

spark.read.format("deltaSharing")

.option("readChangeFeed", "true")

.option("responseFormat", "delta")

.option("startingVersion", 1)

.load(tablePath)

// Streaming query

spark.readStream.format("deltaSharing").option("responseFormat", "delta").load(tablePath)

Pandas: Read shared data

Follow these steps to access shared data in pandas 0.25.3 or above.

These instructions assume that you have access to the credential file that was shared by the data provider. See Get access in the open sharing model.

Install the Delta Sharing Python connector

To access metadata related to the shared data, such as the list of tables shared with you, you must install the delta-sharing Python connector.

pip install delta-sharing

List shared tables using pandas

To list the tables in the share, run the following, replacing <profile-path>/config.share with the location of the credential file.

import delta_sharing

client = delta_sharing.SharingClient(f"<profile-path>/config.share")

client.list_all_tables()

If the output is empty or doesn’t contain the tables you expect, contact the data provider.

Access shared data using pandas

To access shared data in pandas using Python, run the following, replacing the variables as follows:

<profile-path>: the location of the credential file.<share-name>: the value ofshare=for the table.<schema-name>: the value ofschema=for the table.<table-name>: the value ofname=for the table.

import delta_sharing

delta_sharing.load_as_pandas(f"<profile-path>#<share-name>.<schema-name>.<table-name>")

Access a shared change data feed using pandas

To access the change data feed for a shared table in pandas using Python run the following, replacing the variables as follows. A change data feed may not be available, depending on whether or not the data provider shared the change data feed for the table.

<starting-version>: optional. The starting version of the query, inclusive.<ending-version>: optional. The ending version of the query, inclusive.<starting-timestamp>: optional. The starting timestamp of the query. This is converted to a version created greater or equal to this timestamp.<ending-timestamp>: optional. The ending timestamp of the query. This is converted to a version created earlier or equal to this timestamp.

import delta_sharing

delta_sharing.load_table_changes_as_pandas(

f"<profile-path>#<share-name>.<schema-name>.<table-name>",

starting_version=<starting-version>,

ending_version=<starting-version>)

delta_sharing.load_table_changes_as_pandas(

f"<profile-path>#<share-name>.<schema-name>.<table-name>",

starting_timestamp=<starting-timestamp>,

ending_timestamp=<ending-timestamp>)

If the output is empty or doesn’t contain the data you expect, contact the data provider.

Power BI: Read shared data

The Power BI Delta Sharing connector allows you to discover, analyze, and visualize datasets shared with you through the Delta Sharing open protocol.

Requirements

- Power BI Desktop 2.99.621.0 or above.

- Access to the credential file that was shared by the data provider. See Get access in the open sharing model.

Connect to Databricks

To connect to Azure Databricks using the Delta Sharing connector, do the following:

- Open the shared credential file with a text editor to retrieve the endpoint URL and the token.

- Open Power BI Desktop.

- On the Get Data menu, search for Delta Sharing.

- Select the connector and click Connect.

- Enter the endpoint URL that you copied from the credentials file into the Delta Sharing Server URL field.

- Optionally, in the Advanced Options tab, set a Row Limit for the maximum number of rows that you can download. This is set to 1 million rows by default.

- Click OK.

- For Authentication, copy the token that you retrieved from the credentials file into Bearer Token.

- Click Connect.

Limitations of the Power BI Delta Sharing connector

The Power BI Delta Sharing Connector has the following limitations:

- The data that the connector loads must fit into the memory of your machine. To manage this requirement, the connector limits the number of imported rows to the Row Limit that you set under the Advanced Options tab in Power BI Desktop.

Tableau: Read shared data

The Tableau Delta Sharing connector allows you to discover, analyze, and visualize datasets that are shared with you through the Delta Sharing open protocol.

Requirements

- Tableau Desktop and Tableau Server 2024.1 or above

- Access to the credential file that was shared by the data provider. See Get access in the open sharing model.

Connect to Azure Databricks

To connect to Azure Databricks using the Delta Sharing connector, do the following:

- Go to Tableau Exchange, follow the instructions to download the Delta Sharing Connector, and put it in an appropriate desktop folder.

- Open Tableau Desktop.

- On the Connectors page, search for “Delta Sharing by Databricks”.

- Select Upload Share file, and choose the credential file that was shared by the provider.

- Click Get Data.

- In the Data Explorer, select the table.

- Optionally add SQL filters or row limits.

- Click Get Table Data.

Limitations of the Tableau Delta Sharing connector

The Tableau Delta Sharing Connector has the following limitations:

- The data that the connector loads must fit into the memory of your machine. To manage this requirement, the connector limits the number of imported rows to the row limit that you set in Tableau.

- All columns are returned as type

String. - SQL Filter only works if your Delta Sharing server supports predicateHint.

Request a new credential

If your credential activation URL or downloaded credential is lost, corrupted, or compromised, or your credential expires without your provider sending you a new one, contact your provider to request a new credential.