Add audio quality enhancements to your audio calling experience

Azure Communication Services audio effects include noise suppression, which can improve your audio calls by filtering out unwanted background noise. Removing background noise makes it easier to talk and listen, and it can reduce the distraction and fatigue that noisy surroundings cause. For example, if you're on an Azure Communication Services WebJS call in a noisy coffee shop, turning on noise suppression can improve the call experience.

Use audio effects: Install the calling effects npm package

Important

This tutorial uses Azure Communication Services Calling SDK version 1.28.4 or later together with Azure Communication Services Calling Effects SDK version 1.1.2 or later. General availability (GA) stable versions 1.28.4 and later of the Calling SDK support noise suppression. If you use the public preview instead, Calling SDK versions 1.24.2-beta.1 and later also support noise suppression.

Noise suppression effects are currently supported only on the Chrome and Edge desktop browsers.

The calling effects library can't be used standalone. It works only when used with the Azure Communication Services Calling client library for WebJS.
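Because support is limited to desktop Chrome and Edge, an app might want to hide its noise suppression control on other browsers before it creates any effect. The following TypeScript sketch is a rough user-agent heuristic only: the function name isLikelyDesktopChromeOrEdge is hypothetical, user-agent strings can be spoofed or change over time, and the SDK's isSupported method, described later in this article, remains the authoritative check.

```typescript
// Hypothetical heuristic, not part of any Azure SDK: guesses from a
// user-agent string whether the browser is desktop Chrome or desktop Edge.
// Always confirm with audioEffectsFeatureApi.isSupported(...) at runtime.
function isLikelyDesktopChromeOrEdge(userAgent: string): boolean {
  // Treat phones and tablets as unsupported (covers Chrome on Android and iOS).
  if (/Android|iPhone|iPad|iPod|Mobile/i.test(userAgent)) {
    return false;
  }
  // Chromium-based Edge reports an "Edg/<version>" token.
  if (/\bEdg\//.test(userAgent)) {
    return true;
  }
  // Other Chromium-based browsers also send "Chrome/", so exclude common ones.
  const hasChromeToken = /\bChrome\//.test(userAgent);
  const isOtherChromium = /\b(OPR|SamsungBrowser|YaBrowser)\//.test(userAgent);
  return hasChromeToken && !isOtherChromium;
}

// Usage in a browser:
// const showNoiseSuppressionToggle = isLikelyDesktopChromeOrEdge(navigator.userAgent);
```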

Use the npm install command to install the Azure Communication Services Audio Effects SDK for JavaScript.

If you use the GA version of the Calling SDK, you must use the GA version of the Calling Effects SDK.

npm install @azure/communication-calling-effects@latest --save

If you use the public preview of the Calling SDK, you must use the beta version of the Calling Effects SDK.

npm install @azure/communication-calling-effects@next --save
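The version rules in the Important note above (a GA Calling SDK 1.28.4 or later with a GA Calling Effects SDK 1.1.2 or later, or a preview Calling SDK 1.24.2-beta.1 or later with a beta Calling Effects SDK) can be checked in a build script or a startup self-test. The following is a minimal sketch under stated assumptions: checkSdkPairing and its helpers are hypothetical, any version string containing "-beta" is treated as a preview build, and only the numeric major.minor.patch part is compared, so beta prerelease numbers aren't ranked against each other.

```typescript
// Hypothetical startup check for the Calling SDK / Calling Effects SDK pairing rules.
type Channel = "ga" | "beta";

// Assumption: preview builds carry a "-beta" suffix, as in "1.24.2-beta.1".
function channelOf(version: string): Channel {
  return version.includes("-beta") ? "beta" : "ga";
}

// Compares only the numeric major.minor.patch part of a version string.
function coreAtLeast(version: string, min: readonly number[]): boolean {
  const core = (version.split("-")[0] ?? "").split(".").map(Number);
  for (let i = 0; i < min.length; i++) {
    const part = core[i] ?? 0;
    const target = min[i] ?? 0;
    if (part !== target) {
      return part > target;
    }
  }
  return true;
}

// Returns a list of problems; an empty list means the pairing looks valid.
function checkSdkPairing(callingVersion: string, effectsVersion: string): string[] {
  const problems: string[] = [];
  const callingChannel = channelOf(callingVersion);
  const effectsChannel = channelOf(effectsVersion);

  if (callingChannel !== effectsChannel) {
    problems.push(`Calling SDK is ${callingChannel} but Calling Effects SDK is ${effectsChannel}.`);
  }
  // GA noise suppression needs 1.28.4+; preview support starts at 1.24.2-beta.1.
  const minCalling = callingChannel === "ga" ? [1, 28, 4] : [1, 24, 2];
  if (!coreAtLeast(callingVersion, minCalling)) {
    problems.push(`Calling SDK ${callingVersion} is older than ${minCalling.join(".")}.`);
  }
  if (effectsChannel === "ga" && !coreAtLeast(effectsVersion, [1, 1, 2])) {
    problems.push(`Calling Effects SDK ${effectsVersion} is older than 1.1.2.`);
  }
  return problems;
}
```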

Load the noise suppression effects library

For information on the interface that details audio effects properties and methods, see the Audio Effects Feature interface API documentation page.

To use noise suppression audio effects with the Azure Communication Services Calling SDK, you need the LocalAudioStream object that's currently in the call. You use the AudioEffects feature API of that LocalAudioStream object to start and stop audio effects.

import * as AzureCommunicationCallingSDK from '@azure/communication-calling';
import type { ActiveAudioEffects, AudioEffectErrorPayload } from '@azure/communication-calling';
import { DeepNoiseSuppressionEffect } from '@azure/communication-calling-effects';

// Get LocalAudioStream from the localAudioStreams collection on the call object.
// 'call' here represents the current call object.
const localAudioStreamInCall = call.localAudioStreams[0];

// Get the audio effects feature API from LocalAudioStream.
const audioEffectsFeatureApi = localAudioStreamInCall.feature(AzureCommunicationCallingSDK.Features.AudioEffects);

// Subscribe to events that report audio effects status.
// JSON.stringify makes the activeEffects object readable in the log.
audioEffectsFeatureApi.on('effectsStarted', (activeEffects: ActiveAudioEffects) => {
    console.log(`Audio effects started. Active effects: ${JSON.stringify(activeEffects)}`);
});

audioEffectsFeatureApi.on('effectsStopped', (activeEffects: ActiveAudioEffects) => {
    console.log(`Audio effects stopped. Active effects: ${JSON.stringify(activeEffects)}`);
});

audioEffectsFeatureApi.on('effectsError', (error: AudioEffectErrorPayload) => {
    console.log(`Error with audio effects: ${error.message}`);
});

Check what audio effects are active

To check which audio effects are currently active, use the activeEffects property.

The activeEffects property returns an object that contains the names of the currently active effects.

// Read the currently active effects from the audio effects feature API.
const currentActiveEffects = audioEffectsFeatureApi.activeEffects;
console.log(`Active audio effects: ${JSON.stringify(currentActiveEffects)}`);

// Create the noise suppression instance.
const deepNoiseSuppression = new DeepNoiseSuppressionEffect();

// We recommend that you check support for the effect in the current environment by using the isSupported method.
// Remember that noise suppression is supported only on the Chrome and Edge desktop browsers.
const isDeepNoiseSuppressionSupported = await audioEffectsFeatureApi.isSupported(deepNoiseSuppression);

if (isDeepNoiseSuppressionSupported) {
    console.log('Noise suppression is supported in the local browser environment');

    // To start Communication Services Deep Noise Suppression
    await audioEffectsFeatureApi.startEffects({
        noiseSuppression: deepNoiseSuppression
    });
}

// To stop Communication Services Deep Noise Suppression
await audioEffectsFeatureApi.stopEffects({
    noiseSuppression: true
});
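If your app exposes a single on/off control, the isSupported, startEffects, and stopEffects calls above can be wrapped in one helper. The following is a sketch under assumptions: NoiseSuppressionControl is a hypothetical structural interface that mirrors only the three calls used in this article (it isn't a type exported by the SDK), and setNoiseSuppression is a hypothetical helper name. Because the interface is structural, the audio effects feature API object should fit it, but verify that against the typings of your SDK version.

```typescript
// Hypothetical structural interface covering only the calls used above.
// It is not exported by @azure/communication-calling.
interface NoiseSuppressionControl<E> {
  isSupported(effect: E): Promise<boolean>;
  startEffects(config: { noiseSuppression: E }): Promise<void>;
  stopEffects(config: { noiseSuppression: boolean }): Promise<void>;
}

// Turns noise suppression on or off. Starting is attempted only when the
// current environment reports support. Resolves to true if the requested
// state was applied, or false if noise suppression isn't supported here.
async function setNoiseSuppression<E>(
  api: NoiseSuppressionControl<E>,
  effect: E,
  enable: boolean,
): Promise<boolean> {
  if (!enable) {
    await api.stopEffects({ noiseSuppression: true });
    return true;
  }
  if (!(await api.isSupported(effect))) {
    return false;
  }
  await api.startEffects({ noiseSuppression: effect });
  return true;
}
```

With the objects created above, a call might look like: const applied = await setNoiseSuppression(audioEffectsFeatureApi, deepNoiseSuppression, true);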

Start a call with noise suppression automatically enabled

You can start a call with noise suppression already turned on. Create a new LocalAudioStream object from an AudioDeviceInfo object, and pass it in the audioOptions of the options that you use to start or accept the call. To use audio effects, the LocalAudioStream source shouldn't be a raw MediaStream object.

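// As an example, create LocalAudioStream from the microphone currently selected on DeviceManager.
const audioDevice = deviceManager.selectedMicrophone;
if (!audioDevice) {
    throw new Error('No microphone is selected on DeviceManager.');
}

const localAudioStreamWithEffects = new AzureCommunicationCallingSDK.LocalAudioStream(audioDevice);
const audioEffectsFeatureApi = localAudioStreamWithEffects.feature(AzureCommunicationCallingSDK.Features.AudioEffects);

// Create the noise suppression instance and start the effect before the call starts.
const deepNoiseSuppression = new DeepNoiseSuppressionEffect();
await audioEffectsFeatureApi.startEffects({
    noiseSuppression: deepNoiseSuppression
});

// Pass LocalAudioStream in audioOptions of the start call options.
// 'callAgent' is your CallAgent instance; '<USER_ID>' is a placeholder for the callee's identity.
// To accept an incoming call instead, pass the same audioOptions to incomingCall.accept().
const call = callAgent.startCall([{ communicationUserId: '<USER_ID>' }], {
    audioOptions: {
        muted: false,
        localAudioStreams: [localAudioStreamWithEffects]
    }
});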

Turn on noise suppression during an ongoing call

If you start a call without noise suppression and the environment later becomes noisy, you can turn noise suppression on during the call by using the audioEffectsFeatureApi.startEffects API.

// Create the noise suppression instance.
const deepNoiseSuppression = new DeepNoiseSuppressionEffect();

// Get LocalAudioStream from the localAudioStreams collection on the call object.
// 'call' here represents the call object.
const localAudioStreamInCall = call.localAudioStreams[0];

// Get the audio effects feature API from LocalAudioStream.
const audioEffectsFeatureApi = localAudioStreamInCall.feature(AzureCommunicationCallingSDK.Features.AudioEffects);

// We recommend that you check support for the effect in the current environment by using the isSupported method on the feature API.
// Remember that noise suppression is supported only on the Chrome and Edge desktop browsers.
const isDeepNoiseSuppressionSupported = await audioEffectsFeatureApi.isSupported(deepNoiseSuppression);

if (isDeepNoiseSuppressionSupported) {
    console.log('Noise suppression is supported in the current browser environment');

    // To start Communication Services Deep Noise Suppression
    await audioEffectsFeatureApi.startEffects({
        noiseSuppression: deepNoiseSuppression
    });
}

// Later, to stop Communication Services Deep Noise Suppression
await audioEffectsFeatureApi.stopEffects({
    noiseSuppression: true
});

Related content

See the Audio Effects Feature interface documentation page for extended API feature details.

Learn how to configure audio filters with the Native Calling SDKs

The Azure Communication Services audio effects offer filters that can improve your audio call. For native platforms (Android, iOS, and Windows), you can configure the following filters.

Echo cancellation

You can eliminate acoustic echo caused by the caller's voice echoing back into the microphone after it's emitted from the speaker. Echo cancellation ensures clear communication.

You can configure the filter before and during a call. You can toggle echo cancellation only if music mode is enabled. By default, this filter is enabled.

Noise suppression

You can improve audio quality by filtering out unwanted background noises such as typing, air conditioning, or street sounds. This technology ensures that the voice is crisp and clear to facilitate more effective communication.

You can configure the filter before and during a call. The currently available modes are Off, Auto, Low, and High. By default, this feature is set to High.

Automatic gain control

You can automatically adjust the microphone's volume to ensure consistent audio levels throughout the call.

- Analog automatic gain control is a filter that's available only before a call. By default, this filter is enabled.

- Digital automatic gain control is a filter that's available only before a call. By default, this filter is enabled.

Music mode

Music mode is a filter that's available before and during a call. To learn more about music mode, see Music mode on Native Calling SDK. Music mode works only on native platforms, in one-to-one or group calls. It doesn't work in one-to-one calls between a native platform and the web. By default, music mode is disabled.
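The defaults and availability rules above can be summarized as data. The following TypeScript sketch is a platform-neutral model, not code for any of the native SDKs: the field names only loosely follow the Android and iOS property names used later in this article, and checkFilterChange is a hypothetical helper that an app could use to disable UI controls that the current call phase doesn't allow.

```typescript
// Hypothetical, platform-neutral model of the native outgoing audio filters.
// This is not SDK code; field names only loosely follow the native properties.
type NoiseSuppressionMode = "off" | "auto" | "low" | "high";

interface AudioFilterSettings {
  acousticEchoCancellationEnabled: boolean;
  noiseSuppressionMode: NoiseSuppressionMode;
  analogAutomaticGainControlEnabled: boolean;
  digitalAutomaticGainControlEnabled: boolean;
  musicModeEnabled: boolean;
}

type FilterName = keyof AudioFilterSettings;
type CallPhase = "beforeCall" | "duringCall";

// Defaults as described above.
const DEFAULT_FILTERS: Readonly<AudioFilterSettings> = {
  acousticEchoCancellationEnabled: true,
  noiseSuppressionMode: "high",
  analogAutomaticGainControlEnabled: true,
  digitalAutomaticGainControlEnabled: true,
  musicModeEnabled: false,
};

// Filters that can also be changed while a call is in progress;
// both automatic gain control filters are pre-call only.
const LIVE_FILTERS: ReadonlySet<FilterName> = new Set<FilterName>([
  "acousticEchoCancellationEnabled",
  "noiseSuppressionMode",
  "musicModeEnabled",
]);

// Returns null if the change is allowed, otherwise a short reason.
function checkFilterChange(
  filter: FilterName,
  phase: CallPhase,
  current: Readonly<AudioFilterSettings>,
): string | null {
  if (phase === "duringCall" && !LIVE_FILTERS.has(filter)) {
    return `${filter} can be set only before a call`;
  }
  if (filter === "acousticEchoCancellationEnabled" && !current.musicModeEnabled) {
    return "echo cancellation can be toggled only while music mode is enabled";
  }
  return null;
}
```

For example, an app could call checkFilterChange("analogAutomaticGainControlEnabled", "duringCall", DEFAULT_FILTERS) and disable the analog gain control toggle whenever the result isn't null.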

Prerequisites

- An Azure account with an active subscription. Create an account for free.

- A deployed Azure Communication Services resource. Create an Azure Communication Services resource.

- A user access token to enable the calling client. For more information, see Create and manage access tokens.

- Optional: Complete the quickstart to add voice calling to your application.

Configure audio filters with the Android Calling SDK

Install the SDK

Locate your project-level build.gradle file and add mavenCentral() to the list of repositories under buildscript and allprojects:

buildscript {
    repositories {
        ...
        mavenCentral()
        ...
    }
}

allprojects {
    repositories {
        ...
        mavenCentral()
        ...
    }
}

Then, in your module-level build.gradle file, add the following lines to the dependencies section:

dependencies {
    ...
    implementation 'com.azure.android:azure-communication-calling:1.0.0'
    ...
}

Initialize the required objects

To create a CallAgent instance, call the createCallAgent method on a CallClient instance. This method asynchronously returns a CallAgent object.

The createCallAgent method takes a CommunicationTokenCredential object as an argument, which encapsulates an access token.

To access DeviceManager, you must create a CallAgent instance first. Then you can use the CallClient.getDeviceManager method to get DeviceManager.

String userToken = "<user token>";
CallClient callClient = new CallClient();
CommunicationTokenCredential tokenCredential = new CommunicationTokenCredential(userToken);
android.content.Context appContext = this.getApplicationContext(); // From within an activity, for instance

// get() blocks until the future completes and throws checked exceptions
// (ExecutionException, InterruptedException) that your code must handle.
CallAgent callAgent = callClient.createCallAgent(appContext, tokenCredential).get();
DeviceManager deviceManager = callClient.getDeviceManager(appContext).get();

To set a display name for the caller, use this alternative method:

String userToken = "<user token>";
CallClient callClient = new CallClient();
CommunicationTokenCredential tokenCredential = new CommunicationTokenCredential(userToken);
android.content.Context appContext = this.getApplicationContext(); // From within an activity, for instance

// Set the display name that other participants see.
CallAgentOptions callAgentOptions = new CallAgentOptions();
callAgentOptions.setDisplayName("Alice Bob");

DeviceManager deviceManager = callClient.getDeviceManager(appContext).get();
CallAgent callAgent = callClient.createCallAgent(appContext, tokenCredential, callAgentOptions).get();

You can use the audio filter feature to apply different audio preprocessing options to outgoing audio. The two types of audio filters are OutgoingAudioFilters and LiveOutgoingAudioFilters. Use OutgoingAudioFilters to change settings before the call starts. Use LiveOutgoingAudioFilters to change settings while a call is in progress.

You first need to import the Calling SDK and the associated classes:

import com.azure.android.communication.calling.OutgoingAudioOptions;
import com.azure.android.communication.calling.OutgoingAudioFilters;
import com.azure.android.communication.calling.LiveOutgoingAudioFilters;
import com.azure.android.communication.calling.NoiseSuppressionMode;

Before a call starts

You can apply OutgoingAudioFilters when a call starts.

Begin by creating an OutgoingAudioFilters object and passing it into OutgoingAudioOptions, as shown in the following code:

OutgoingAudioOptions outgoingAudioOptions = new OutgoingAudioOptions();

// Configure all pre-call filters, including the gain controls that can't be changed during a call.
OutgoingAudioFilters filters = new OutgoingAudioFilters();
filters.setNoiseSuppressionMode(NoiseSuppressionMode.HIGH);
filters.setAnalogAutomaticGainControlEnabled(true);
filters.setDigitalAutomaticGainControlEnabled(true);
filters.setMusicModeEnabled(true);
filters.setAcousticEchoCancellationEnabled(true);

outgoingAudioOptions.setAudioFilters(filters);

During the call

You can apply LiveOutgoingAudioFilters after a call begins. You can retrieve this object from the call object during the call. To change a setting in LiveOutgoingAudioFilters, set the corresponding member of the object to a valid value, and the change is applied.

Only a subset of the filters available in OutgoingAudioFilters is available during an active call: music mode, echo cancellation, and noise suppression mode.

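LiveOutgoingAudioFilters filters = call.getLiveOutgoingAudioFilters();

// Echo cancellation can be toggled only while music mode is enabled,
// so change it before turning music mode off.
filters.setAcousticEchoCancellationEnabled(false);
filters.setMusicModeEnabled(false);
filters.setNoiseSuppressionMode(NoiseSuppressionMode.HIGH);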

Configure audio filters with the iOS Calling SDK

Set up your system

Follow these steps to set up your system.

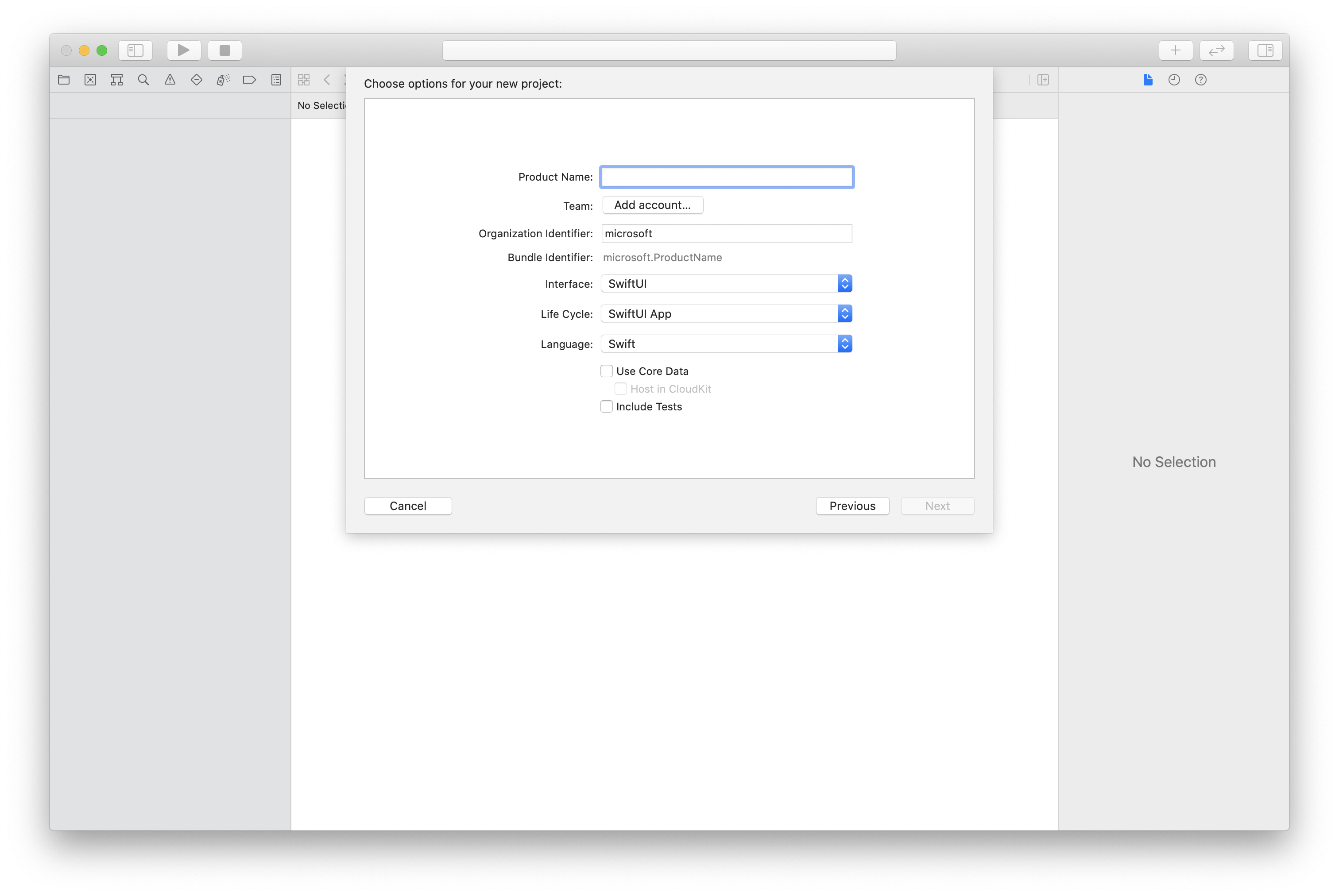

Create the Xcode project

In Xcode, create a new iOS project and select the Single View App template. This article uses the SwiftUI framework, so you should set Language to Swift and set Interface to SwiftUI.

You're not going to create tests in this article. Feel free to clear the Include Tests checkbox.

Install the package and dependencies by using CocoaPods

Create a Podfile for your application, like this example:

platform :ios, '13.0'
use_frameworks!

target 'AzureCommunicationCallingSample' do
  pod 'AzureCommunicationCalling', '~> 1.0.0'
end

Run pod install.

Open the .xcworkspace file by using Xcode.

Request access to the microphone

To access the device's microphone, add the NSMicrophoneUsageDescription key to your app's information property list (Info.plist). Set its value to a string that the system includes in the dialog it uses to request access from the user.

Right-click the Info.plist entry of the project tree, and then select Open As > Source Code. Add the following lines in the top-level <dict> section, and then save the file.

<key>NSMicrophoneUsageDescription</key>
<string>Need microphone access for VOIP calling.</string>

Set up the app framework

Open your project's ContentView.swift file. Add an import declaration to the top of the file to import the AzureCommunicationCalling library. In addition, import AVFoundation. You need it for audio permission requests in the code.

import AzureCommunicationCalling
import AVFoundation

Initialize CallAgent

To create a CallAgent instance from CallClient, you have to use a callClient.createCallAgent method that asynchronously returns a CallAgent object after it's initialized.

The createCallAgent method takes a CommunicationTokenCredential object, so create one first:

import AzureCommunication

let tokenString = "token_string"
var userCredential: CommunicationTokenCredential?

do {
    let options = CommunicationTokenRefreshOptions(initialToken: tokenString, refreshProactively: true, tokenRefresher: self.fetchTokenSync)
    userCredential = try CommunicationTokenCredential(withOptions: options)
} catch {
    // updates and initializationDispatchGroup are defined elsewhere in the sample app.
    updates("Couldn't create Credential object", false)
    initializationDispatchGroup!.leave()
    return
}

// tokenProvider needs to be implemented by Contoso; it fetches a new token.
public func fetchTokenSync(then onCompletion: TokenRefreshOnCompletion) {
    let newToken = self.tokenProvider!.fetchNewToken()
    onCompletion(newToken, nil)
}

Create the CallClient, pass the CommunicationTokenCredential object that you created to its createCallAgent method, and set the display name:

self.callClient = CallClient()
let callAgentOptions = CallAgentOptions()
callAgentOptions.displayName = "iOS Azure Communication Services User"

self.callClient!.createCallAgent(userCredential: userCredential!,
                                 options: callAgentOptions) { (callAgent, error) in
    if error == nil {
        print("Create agent succeeded")
        self.callAgent = callAgent
    } else {
        print("Create agent failed")
    }
}
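The fetchTokenSync function above delegates to a tokenProvider that your app (Contoso, in this example) implements, and it reports the result through a completion handler that takes a token or an error. The following TypeScript sketch shows the same contract in a language-neutral way: TokenProvider, TokenCompletion, and makeTokenRefresher are hypothetical names, and a real provider would typically call your own backend service to issue a fresh Azure Communication Services token. Unlike the Swift sample, it also reports fetch failures through the error argument instead of ignoring them.

```typescript
// Hypothetical app-side token source, for example a call to your own backend.
interface TokenProvider {
  fetchNewToken(): Promise<string>;
}

// Completion-handler shape used by fetchTokenSync: (token, error).
type TokenCompletion = (token: string | null, error: Error | null) => void;

// Adapts a promise-based provider to the completion-handler style.
// The callback runs once, with either a token or an error, even if
// fetchNewToken throws synchronously.
function makeTokenRefresher(provider: TokenProvider): (onCompletion: TokenCompletion) => void {
  return (onCompletion) => {
    Promise.resolve()
      .then(() => provider.fetchNewToken())
      .then(
        (token) => onCompletion(token, null),
        (reason: unknown) =>
          onCompletion(null, reason instanceof Error ? reason : new Error(String(reason))),
      );
  };
}
```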

You can use the audio filter feature to apply different audio preprocessing options to outgoing audio. The two types of audio filters are OutgoingAudioFilters and LiveOutgoingAudioFilters. Use OutgoingAudioFilters to change settings before the call starts. Use LiveOutgoingAudioFilters to change settings while a call is in progress.

You first need to import the Calling SDK:

import AzureCommunicationCalling

Before the call starts

You can apply OutgoingAudioFilters when a call starts.

Begin by creating an OutgoingAudioFilters object and assigning it to OutgoingAudioOptions, as shown here:

let outgoingAudioOptions = OutgoingAudioOptions()

// Configure all pre-call filters, including the gain controls that can't be changed during a call.
let filters = OutgoingAudioFilters()
filters.noiseSuppressionMode = NoiseSuppressionMode.high
filters.analogAutomaticGainControlEnabled = true
filters.digitalAutomaticGainControlEnabled = true
filters.musicModeEnabled = true
filters.acousticEchoCancellationEnabled = true

outgoingAudioOptions.audioFilters = filters

During the call

You can apply LiveOutgoingAudioFilters after a call begins. You can retrieve this object from the call object during the call. To change a setting in LiveOutgoingAudioFilters, set the corresponding member of the object to a valid value, and the change is applied.

Only a subset of the filters available in OutgoingAudioFilters is available during an active call: music mode, echo cancellation, and noise suppression mode.

let filters = call.liveOutgoingAudioFilters
filters.musicModeEnabled = true
filters.acousticEchoCancellationEnabled = true
filters.noiseSuppressionMode = NoiseSuppressionMode.high

Configure audio filters with the Windows Calling SDK

Set up your system

Follow these steps to set up your system.

Create the Visual Studio project

For a Universal Windows Platform app, in Visual Studio 2022, create a new Blank App (Universal Windows) project. After you enter the project name, feel free to choose any Windows SDK later than 10.0.17763.0.

For a WinUI 3 app, create a new project with the Blank App, Packaged (WinUI 3 in Desktop) template to set up a single-page WinUI 3 app. Windows App SDK version 1.3 or later is required.

Install the package and dependencies by using NuGet Package Manager

The Calling SDK APIs and libraries are publicly available via a NuGet package.

To find, download, and install the Calling SDK NuGet package:

- Open NuGet Package Manager by selecting Tools > NuGet Package Manager > Manage NuGet Packages for Solution.

- Select Browse, and then enter Azure.Communication.Calling.WindowsClient in the search box.

- Make sure that the Include prerelease checkbox is selected.

- Select the Azure.Communication.Calling.WindowsClient package, and then select Azure.Communication.Calling.WindowsClient 1.4.0-beta.1 or a newer version.

- Select the checkbox that corresponds to your project in the right pane.

- Select Install.

You can use the audio filter feature to apply different audio preprocessing to outgoing audio. The two types of audio filters are OutgoingAudioFilters and LiveOutgoingAudioFilters. Use OutgoingAudioFilters to change settings before the call starts. Use LiveOutgoingAudioFilters to change settings while a call is in progress.

You first need to import the Calling SDK:

using Azure.Communication;
using Azure.Communication.Calling.WindowsClient;

Before a call starts

You can apply OutgoingAudioFilters when a call starts.

Begin by creating an OutgoingAudioFilters object and passing it into OutgoingAudioOptions, as shown in the following code:

var outgoingAudioOptions = new OutgoingAudioOptions();

var filters = new OutgoingAudioFilters()
{
    AnalogAutomaticGainControlEnabled = true,
    DigitalAutomaticGainControlEnabled = true,
    MusicModeEnabled = true,
    AcousticEchoCancellationEnabled = true,
    NoiseSuppressionMode = NoiseSuppressionMode.High
};

outgoingAudioOptions.Filters = filters;

During the call

You can apply LiveOutgoingAudioFilters after a call begins. You can retrieve this object from the call object after the call begins. To change a setting in LiveOutgoingAudioFilters, set the corresponding member of the object to a valid value, and the change is applied.

Only a subset of the filters available in OutgoingAudioFilters is available during an active call: music mode, echo cancellation, and noise suppression mode.

LiveOutgoingAudioFilters filter = call.LiveOutgoingAudioFilters;
filter.MusicModeEnabled = true;
filter.AcousticEchoCancellationEnabled = true;
filter.NoiseSuppressionMode = NoiseSuppressionMode.Auto;