Use GPUs with clustered VMs

Applies to: Azure Stack HCI, version 22H2

You can include graphics processing units (GPUs) in your clusters to provide GPU acceleration to workloads running in clustered virtual machines (VMs). GPU acceleration can be provided via Discrete Device Assignment (DDA), which allows you to dedicate one or more physical GPUs to a VM, or through GPU partitioning. Clustered VMs can take advantage of both GPU acceleration and clustering capabilities such as high availability via failover. Live migration of VMs isn't currently supported, but if there's a failure, VMs can be automatically restarted and placed where GPU resources are available.

In this article, you will learn how to use GPUs with clustered VMs to provide GPU acceleration to workloads using Discrete Device Assignment. This article guides you through preparing the cluster, assigning a GPU to a cluster VM, and failing over that VM using Windows Admin Center and PowerShell.

For information about how to manage GPUs in Azure Local, version 23H2, see Prepare GPUs for Azure Local.

Prerequisites

There are several requirements and things to consider before you begin to use GPUs with clustered VMs:

- You need an Azure Local instance running Azure Stack HCI operating system, version 22H2 or later.

- You need a Windows Server Failover cluster running Windows Server 2025 or later.

- You must install the same make and model of the GPUs across all the servers in your cluster.

- Review and follow the instructions from your GPU manufacturer to install the necessary drivers and software on each server in the cluster.

- Depending on your hardware vendor, you might also need to configure any GPU licensing requirements.

- You need a machine with Windows Admin Center installed. This machine could be one of your cluster nodes.

- Create a VM to assign the GPU to. Prepare that VM for DDA by setting its cache behavior, stop action, and memory-mapped I/O (MMIO) properties according to the instructions in Deploy graphics devices using Discrete Device Assignment.

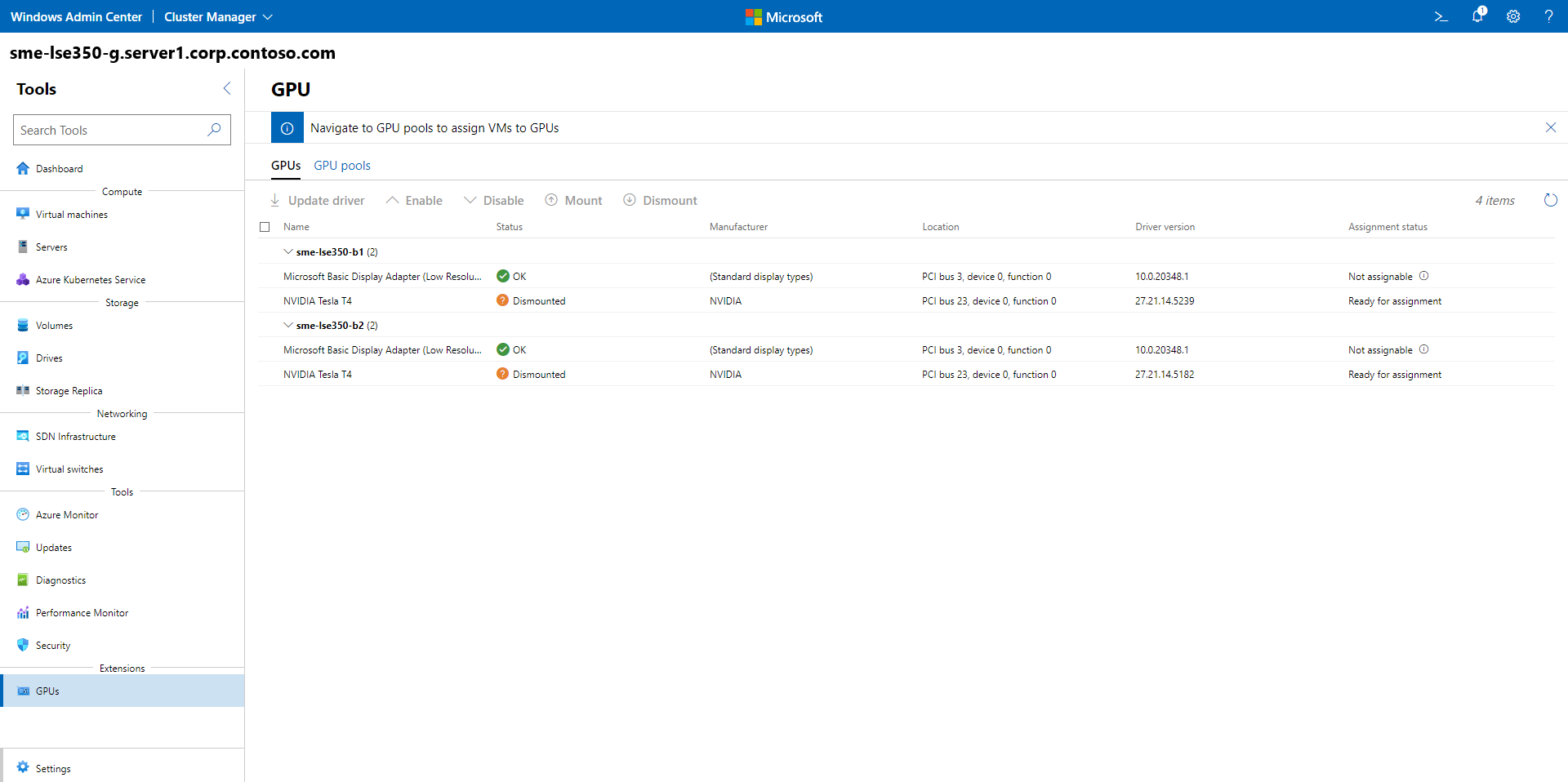

- Prepare the GPUs in each server by installing security mitigation drivers on each server, disabling the GPUs, and dismounting them from the host. To learn more about this process, see Deploy graphics devices by using Discrete Device Assignment.

- Follow the steps in Plan for deploying devices by using Discrete Device Assignment to prepare GPU devices in the cluster.

- Make sure your device has enough MMIO space allocated within the VM. For more information, see MMIO Space.

Note

Your system must be a supported Azure Local solution with GPU support. To browse options, visit the Azure Local Catalog.
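The VM and host preparation described in the prerequisites can be sketched in PowerShell. This is a minimal sketch that uses the cmdlets described in the Discrete Device Assignment documentation. The VM name `GpuVM`, the NVIDIA manufacturer filter, and the MMIO sizes are placeholder assumptions, so substitute values from your GPU vendor's guidance.

```powershell
# Assumption: "GpuVM" is a placeholder for the VM that will receive the GPU.
$vmName = "GpuVM"

# Prepare the VM for DDA. Run these while the VM is turned off.
Set-VM -Name $vmName -AutomaticStopAction TurnOff          # stop action
Set-VM -Name $vmName -GuestControlledCacheTypes $true      # cache behavior
# Placeholder MMIO sizes; size them for your device.
Set-VM -Name $vmName -LowMemoryMappedIoSpace 3GB -HighMemoryMappedIoSpace 33280MB

# On each server: locate a GPU, disable it, and dismount it from the host.
# Assumption: the filter below matches your GPU; adjust the manufacturer as needed.
$gpu = Get-PnpDevice -PresentOnly |
    Where-Object { $_.Class -eq "Display" -and $_.Manufacturer -like "*NVIDIA*" } |
    Select-Object -First 1

# Read the PCIe location path that DDA uses to identify the device.
$locationPath = (Get-PnpDeviceProperty -InstanceId $gpu.InstanceId `
    -KeyName DEVPKEY_Device_LocationPaths).Data[0]

Disable-PnpDevice -InstanceId $gpu.InstanceId -Confirm:$false
Dismount-VMHostAssignableDevice -LocationPath $locationPath
```

The Discrete Device Assignment documentation describes adding `-Force` to `Dismount-VMHostAssignableDevice` when no mitigation (partitioning) driver is installed. Because this article asks you to install the mitigation drivers first, the sketch omits it.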

Prepare the cluster

When the prerequisites are complete, you can prepare the cluster to use GPUs with clustered VMs.

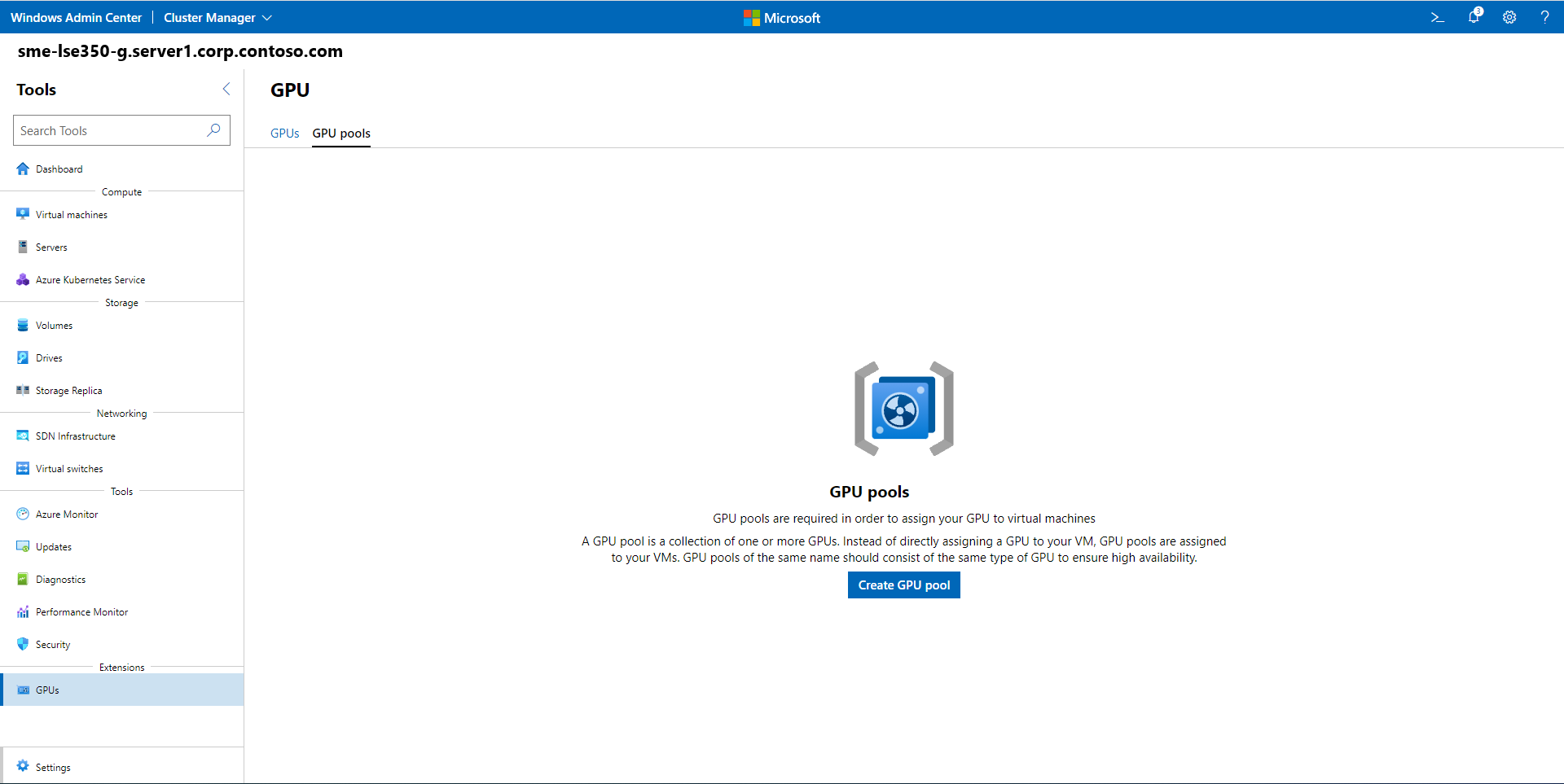

Preparing the cluster involves creating a resource pool that contains the GPUs that are available for assignment to VMs. The cluster uses this pool to determine VM placement for any started or moved VMs that are assigned to the GPU resource pool.

Using Windows Admin Center, follow these steps to prepare the cluster to use GPUs with clustered VMs.

To prepare the cluster and assign a VM to a GPU resource pool:

Launch Windows Admin Center and make sure the GPUs extension is already installed.

Select Cluster Manager from the top dropdown menu and connect to your cluster.

From the Settings menu, select Extensions > GPUs.

On the Tools menu, under Extensions, select GPUs to open the tool.

On the tool's main page, select the GPU pools tab, and then select Create GPU pool.

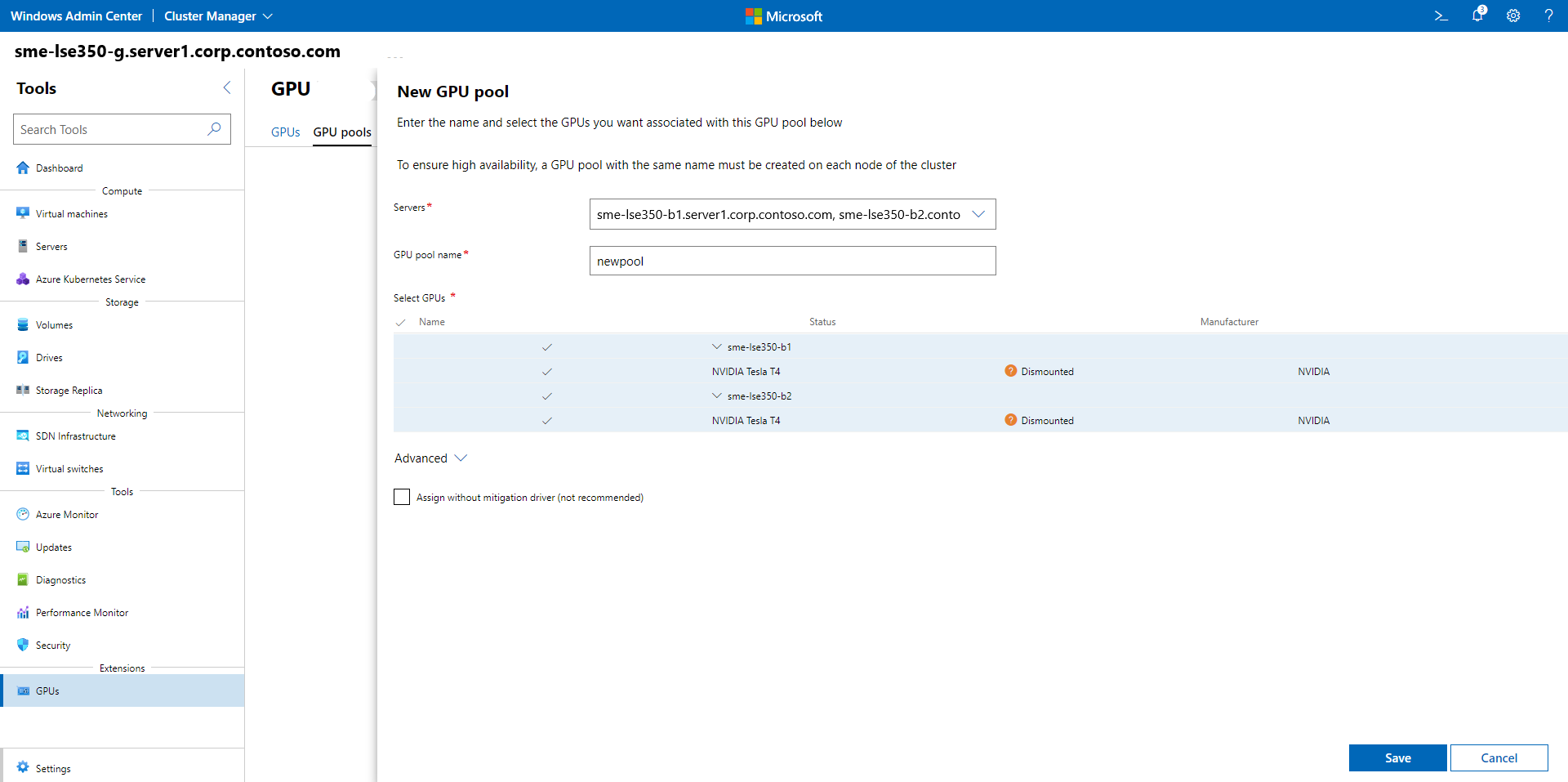

On the New GPU pool page, specify the following and then select Save:

- Server name

- GPU pool name

- GPUs that you want to add to the pool

After the process completes, you'll receive a success prompt that shows the name of the new GPU pool and the host server.
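As a rough PowerShell counterpart to the steps above, a GPU pool might be created with the Hyper-V module's resource pool cmdlets. This is a sketch under assumptions rather than a definitive procedure: the pool name `GpuChildPool` is a placeholder, the GPUs are assumed to be already dismounted from the host, and you should confirm parameter names with `Get-Help` on your system before relying on it.

```powershell
# Assumption: placeholder pool name. Use the same name on every server.
$poolName = "GpuChildPool"

# Run on each server in the cluster: create an empty PCI Express resource pool.
New-VMResourcePool -ResourcePoolType PciExpress -Name $poolName

# Add this server's dismounted GPUs to the pool.
Get-VMHostAssignableDevice |
    Add-VMHostAssignableDevice -ResourcePoolName $poolName

# Review the dismounted devices and their pool membership.
Get-VMHostAssignableDevice
```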

Assign a VM to a GPU resource pool

You can now assign a VM to a GPU resource pool. You can assign one or more VMs to a clustered GPU resource pool, and remove a VM from a clustered GPU resource pool.

Follow these steps to assign an existing VM to a GPU resource pool using Windows Admin Center.

Note

You also need to install drivers from your GPU manufacturer inside the VM so that apps in the VM can take advantage of the GPU assigned to them.

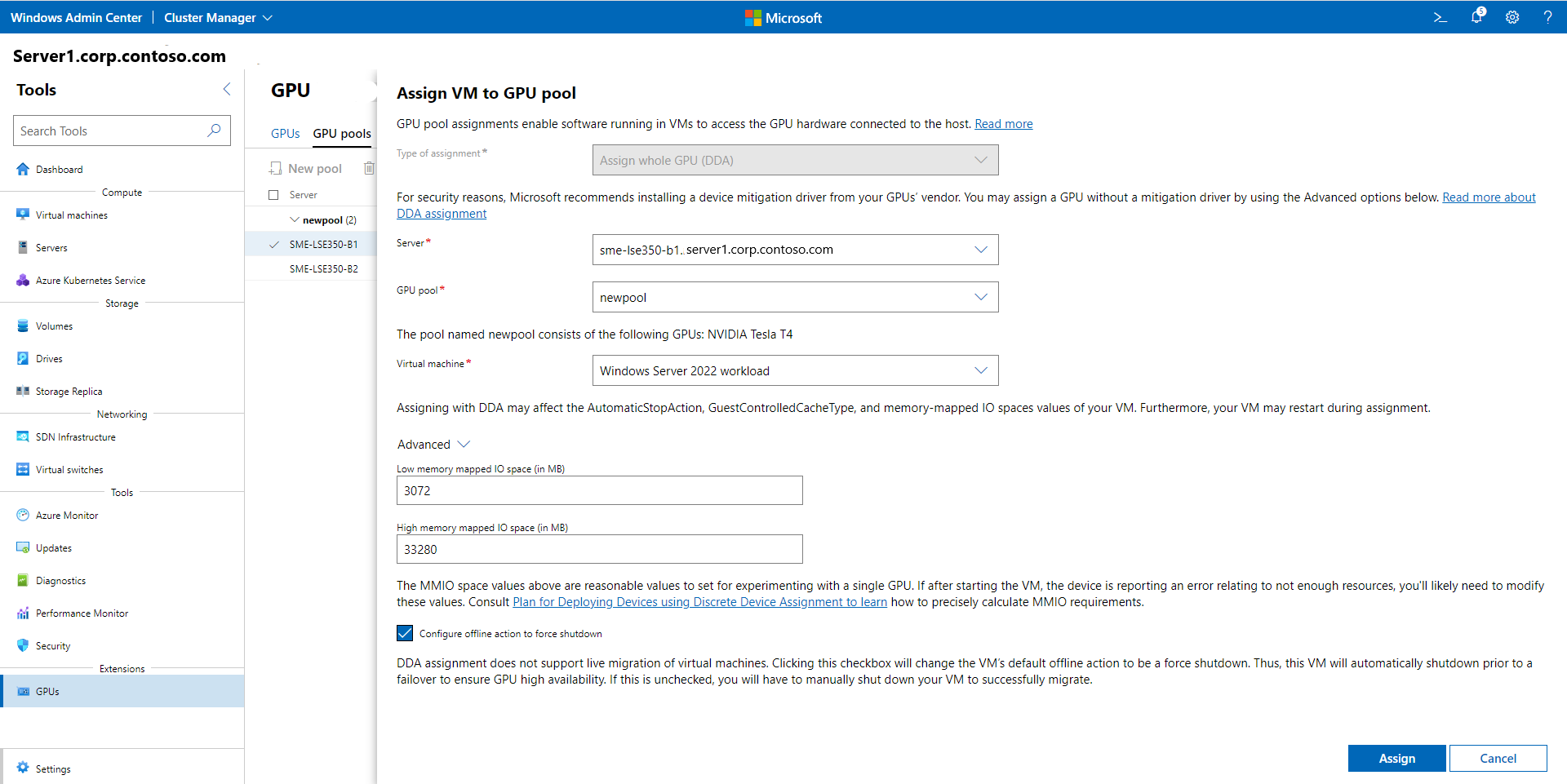

On the Assign VM to GPU pool page, specify the following, then select Assign:

- Server name

- GPU pool name

- Virtual machine that you want to assign the GPU to from the GPU pool.

You can also define advanced setting values for memory-mapped I/O (MMIO) spaces to determine resource requirements for a single GPU.

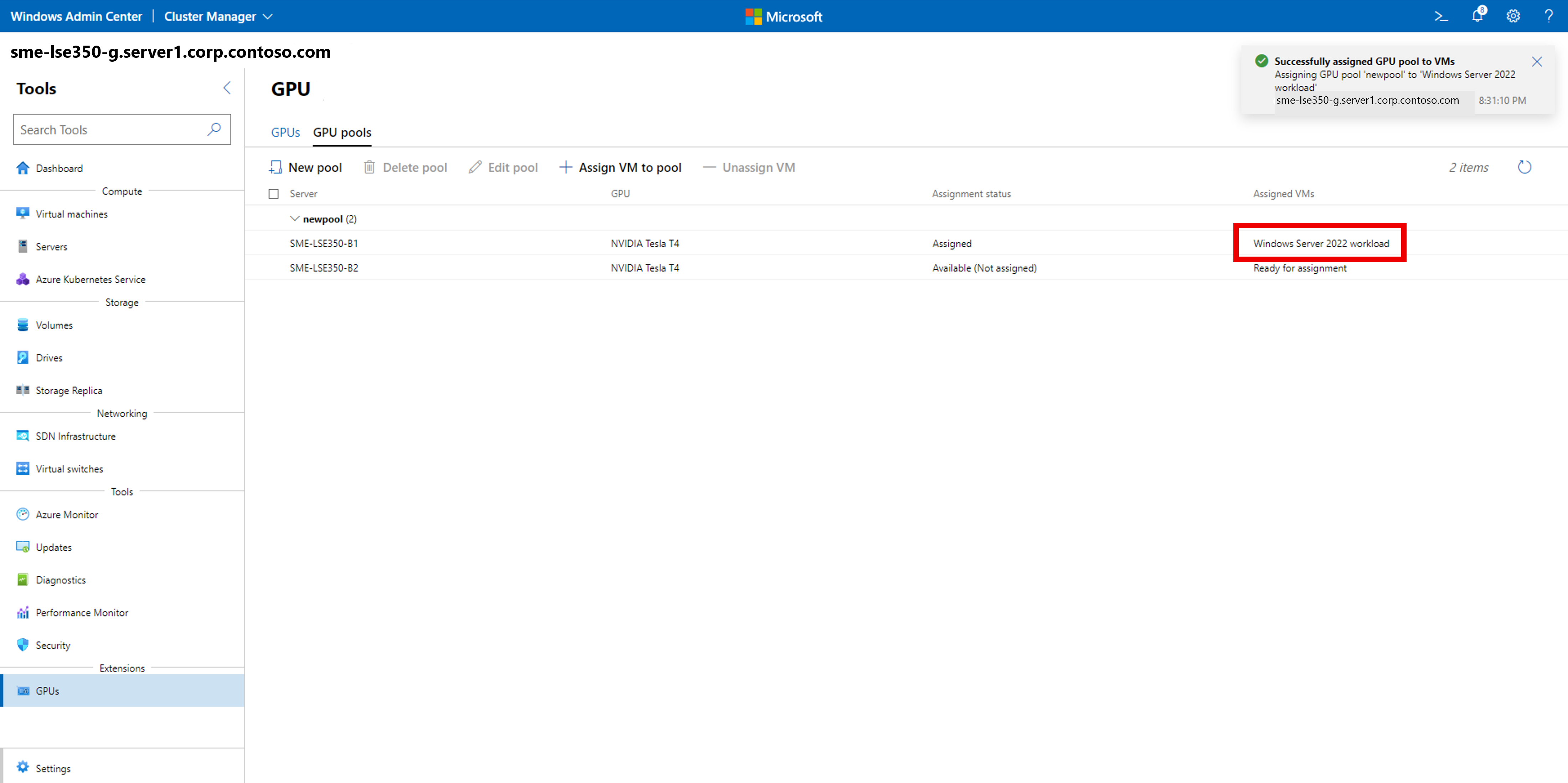

After the process completes, you'll receive a confirmation prompt that shows you successfully assigned the GPU from the GPU resource pool to the VM, which displays under Assigned VMs.

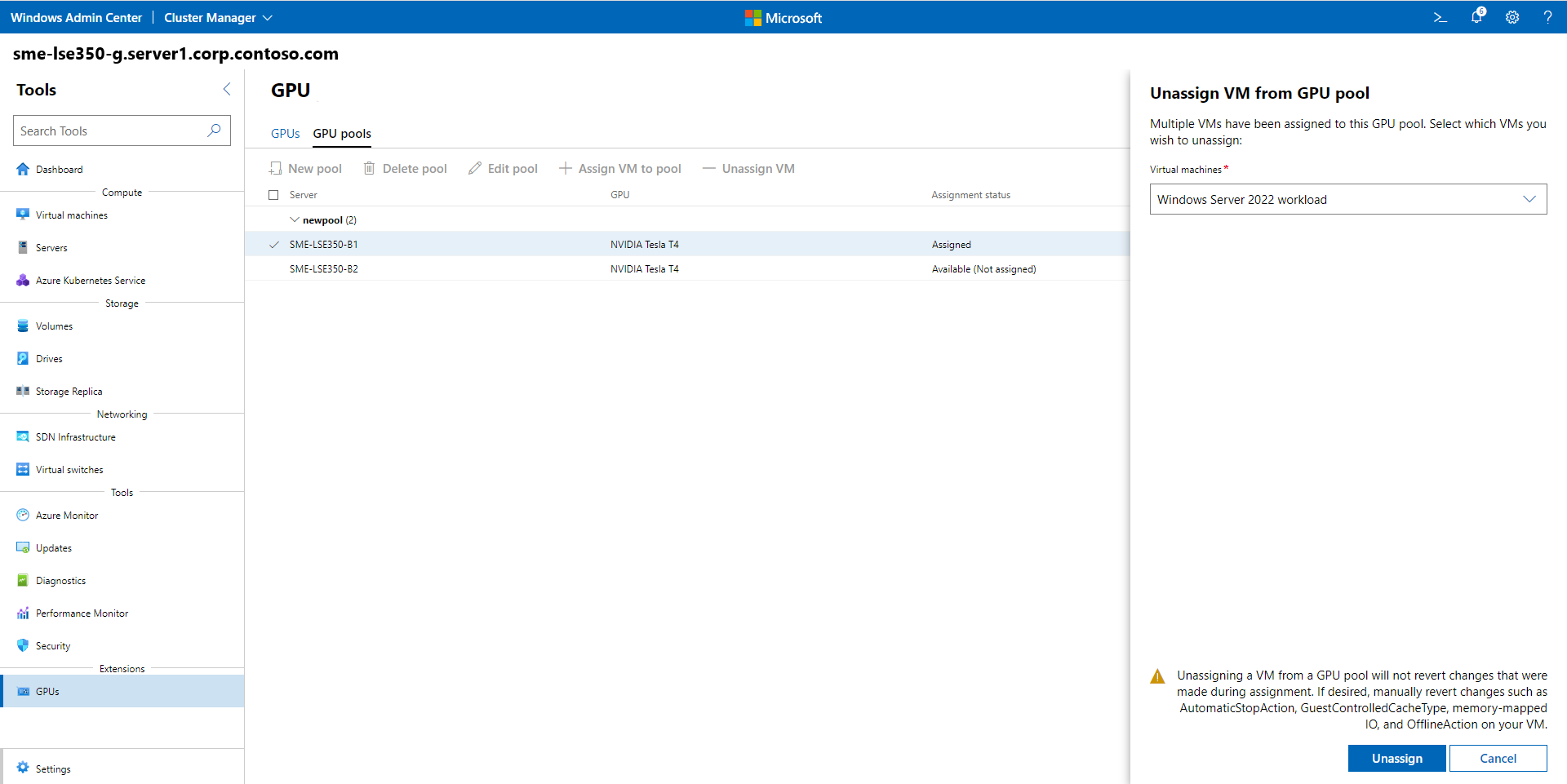
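The assignment can also be sketched in PowerShell. The VM name `GpuVM` and pool name `GpuChildPool` below are placeholder assumptions, and the sketch assumes the pool already exists on the servers and that the VM is turned off.

```powershell
# Assumptions: placeholder VM and pool names; the VM is turned off.
$vmName   = "GpuVM"
$poolName = "GpuChildPool"

# Assign a GPU from the resource pool to the VM.
Add-VMAssignableDevice -VMName $vmName -ResourcePoolName $poolName

# Confirm that the VM now lists an assigned device.
Get-VMAssignableDevice -VMName $vmName
```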

To unassign a VM from a GPU resource pool:

On the GPU pools tab, select the GPU that you want to unassign, and then select Unassign VM.

On the Unassign VM from GPU pool page, in the Virtual machines list box, specify the name of the VM, and then select Unassign.

After the process completes, you receive a success prompt that the VM has been unassigned from the GPU pool, and under Assignment status the GPU shows Available (Not assigned).

When you start the VM, the cluster ensures that the VM is placed on a server with available GPU resources from this cluster-wide pool. The cluster also assigns the GPU to the VM through DDA, which allows the GPU to be accessed from workloads inside the VM.

Fail over a VM with an assigned GPU

To test the cluster’s ability to keep your GPU workload available, perform a drain operation on the server where the VM is running with an assigned GPU. To drain the server, follow the instructions in Failover cluster maintenance procedures. The cluster restarts the VM on another server in the cluster, as long as another server has sufficient available GPU resources in the pool that you created.
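The drain test can be sketched with the failover clustering cmdlets. The VM name `GpuVM` is a placeholder, and the sketch assumes the VM's cluster group has the same name as the VM.

```powershell
# Assumption: the VM's cluster group is named after the VM ("GpuVM" is a placeholder).
$vmName = "GpuVM"

# Find the server that currently owns the VM.
$group = Get-ClusterGroup -Name $vmName
$node  = $group.OwnerNode.Name

# Drain the server and wait for the drain to finish.
Suspend-ClusterNode -Name $node -Drain -Wait

# Check where the VM was restarted and whether it's online.
Get-ClusterGroup -Name $vmName | Select-Object Name, OwnerNode, State

# Bring the drained server back without moving workloads back to it.
Resume-ClusterNode -Name $node -Failback NoFailback
```

If no other server has a free GPU in the pool, expect the VM to stay offline rather than move, which matches the placement behavior described above.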

Related content

For more information on using GPUs with your clustered VMs, see:

For more information on using GPUs with your VMs and GPU partitioning, see: