Implement Azure OpenAI with RAG using vector search in a .NET app

This tutorial explores integration of the retrieval-augmented generation (RAG) pattern using OpenAI models and vector search capabilities in a .NET app. The sample application performs vector searches on custom data stored in Azure Cosmos DB for MongoDB and further refines the responses using generative AI models, such as GPT-3.5 and GPT-4. In the sections that follow, you'll set up a sample application and explore key code examples that demonstrate these concepts.

Prerequisites

- .NET 8.0 installed

- An Azure account

- An Azure Cosmos DB for MongoDB vCore service

- An Azure OpenAI service

  - Deploy the text-embedding-ada-002 model for embeddings

  - Deploy the gpt-35-turbo model for chat completions

App overview

The Cosmos Recipe Guide app allows you to perform vector and AI-driven searches against a set of recipe data. You can search directly for available recipes or prompt the app with ingredient names to find related recipes. The app and the sections ahead guide you through the following workflow to demonstrate this type of functionality:

1. Upload sample data to an Azure Cosmos DB for MongoDB database.

2. Create embeddings and a vector index for the uploaded sample data using the Azure OpenAI text-embedding-ada-002 model.

3. Perform vector similarity search based on the user prompts.

4. Use the Azure OpenAI gpt-35-turbo completions model to compose more meaningful answers based on the search results data.

Get started

Clone the following GitHub repository:

```bash
git clone https://github.com/microsoft/AzureDataRetrievalAugmentedGenerationSamples.git
```

In the C#/CosmosDB-MongoDBvCore folder, open the CosmosRecipeGuide.sln file.

In the appsettings.json file, replace the following config values with your Azure OpenAI and Azure Cosmos DB for MongoDB values:

```json
"OpenAIEndpoint": "https://<your-service-name>.openai.azure.com/",
"OpenAIKey": "<your-api-key>",
"OpenAIEmbeddingDeployment": "<your-ada-deployment-name>",
"OpenAIcompletionsDeployment": "<your-gpt-deployment-name>",
"MongoVcoreConnection": "<your-mongo-connection-string>"
```

Launch the app by pressing the Start button at the top of Visual Studio.

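A forgotten placeholder in appsettings.json tends to surface only as a connection or authentication error at run time. The following is a minimal sketch of a pre-launch check, not part of the sample app: FindUnsetKeys is a hypothetical helper, and it assumes the five keys listed above sit at the top level of the JSON file.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Keys taken from the appsettings.json values listed above.
string[] requiredKeys =
{
    "OpenAIEndpoint", "OpenAIKey", "OpenAIEmbeddingDeployment",
    "OpenAIcompletionsDeployment", "MongoVcoreConnection"
};

// Hypothetical helper: returns the keys that are missing, empty,
// or still hold a "<your-...>" placeholder.
List<string> FindUnsetKeys(string json)
{
    using JsonDocument doc = JsonDocument.Parse(json);
    var unset = new List<string>();
    foreach (string key in requiredKeys)
    {
        bool present = doc.RootElement.TryGetProperty(key, out JsonElement value)
                       && value.ValueKind == JsonValueKind.String;
        string text = present ? value.GetString() ?? "" : "";
        if (text.Length == 0 || text.Contains("<your-"))
        {
            unset.Add(key);
        }
    }
    return unset;
}

// Example input; in the app you could pass File.ReadAllText("appsettings.json").
string sample = """
{
  "OpenAIEndpoint": "https://contoso.openai.azure.com/",
  "OpenAIKey": "<your-api-key>",
  "OpenAIEmbeddingDeployment": "ada",
  "OpenAIcompletionsDeployment": "gpt",
  "MongoVcoreConnection": ""
}
""";

Console.WriteLine(string.Join(", ", FindUnsetKeys(sample)));
// prints: OpenAIKey, MongoVcoreConnection
```

The check only looks at whether values were filled in; it does not verify that the endpoint, key, or connection string is actually valid.
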
Explore the app

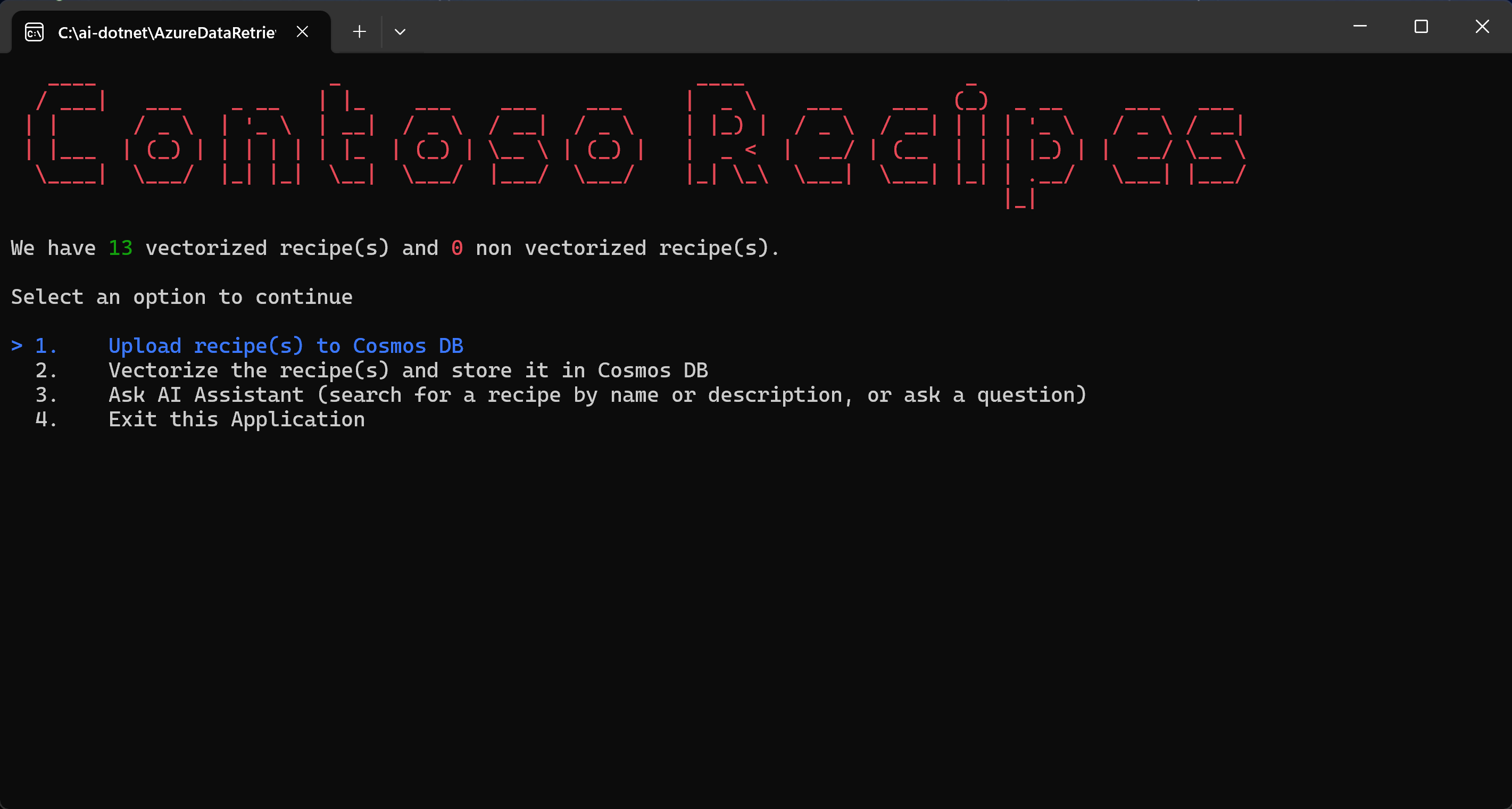

When you run the app for the first time, it connects to Azure Cosmos DB and reports that there are no recipes available yet. Follow the steps displayed by the app to begin the core workflow.

Select Upload recipe(s) to Cosmos DB and press Enter. This command reads sample JSON files from the local project and uploads them to the Cosmos DB account.

The code from the Utility.cs class parses the local JSON files.

```csharp
// Requires: using Newtonsoft.Json;
public static List<Recipe> ParseDocuments(string Folderpath)
{
    List<Recipe> recipes = new List<Recipe>();

    Directory.GetFiles(Folderpath)
        .ToList()
        .ForEach(f =>
        {
            var jsonString = System.IO.File.ReadAllText(f);
            Recipe recipe = JsonConvert.DeserializeObject<Recipe>(jsonString);
            // Derive the document id from the recipe name: lowercase, no spaces.
            recipe.id = recipe.name.ToLower().Replace(" ", "");
            recipes.Add(recipe);
        });

    return recipes;
}
```

The UpsertVectorAsync method in the VCoreMongoService.cs file uploads the documents to Azure Cosmos DB for MongoDB.

```csharp
public async Task UpsertVectorAsync(Recipe recipe)
{
    BsonDocument document = recipe.ToBsonDocument();

    if (!document.Contains("_id"))
    {
        Console.WriteLine("UpsertVectorAsync: Document does not contain _id.");
        throw new ArgumentException("UpsertVectorAsync: Document does not contain _id.");
    }

    string? _idValue = document["_id"].ToString();

    try
    {
        // Replace the document with the same _id, or insert it if none exists.
        var filter = Builders<BsonDocument>.Filter.Eq("_id", _idValue);
        var options = new ReplaceOptions { IsUpsert = true };
        await _recipeCollection.ReplaceOneAsync(filter, document, options);
    }
    catch (Exception ex)
    {
        Console.WriteLine($"Exception: UpsertVectorAsync(): {ex.Message}");
        throw;
    }
}
```

Select Vectorize the recipe(s) and store them in Cosmos DB.

The JSON items uploaded to Cosmos DB do not contain embeddings and therefore are not optimized for RAG via vector search. An embedding is an information-dense, numerical representation of the semantic meaning of a piece of text. Vector searches are able to find items with contextually similar embeddings.
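
To make "contextually similar embeddings" concrete, here is a minimal sketch of cosine similarity, the measure the sample's vector index is configured with (similarity: 'COS'). The three-dimensional vectors are made up for illustration; real text-embedding-ada-002 embeddings have 1536 dimensions, and the database computes the comparison for you.

```csharp
using System;

// Cosine similarity: dot(a, b) / (|a| * |b|). Values near 1 mean the vectors
// point in nearly the same direction; values near 0 mean they are unrelated.
// A zero-length vector yields NaN, which this sketch does not guard against.
double CosineSimilarity(float[] a, float[] b)
{
    if (a.Length != b.Length)
    {
        throw new ArgumentException("Vectors must have the same length.");
    }

    double dot = 0, normA = 0, normB = 0;
    for (int i = 0; i < a.Length; i++)
    {
        dot += a[i] * b[i];
        normA += a[i] * a[i];
        normB += b[i] * b[i];
    }
    return dot / Math.Sqrt(normA * normB);
}

// Made-up vectors, for illustration only.
float[] query = { 1f, 0f, 1f };
float[] pastaRecipe = { 2f, 0f, 2f };   // same direction as the query
float[] cakeRecipe = { 0f, 1f, 0f };    // orthogonal to the query

Console.WriteLine(CosineSimilarity(query, pastaRecipe)); // prints: 1
Console.WriteLine(CosineSimilarity(query, cakeRecipe));  // prints: 0
```

Note that the measure ignores vector length: pastaRecipe is twice as long as query but still scores 1, because only direction matters.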

The GetEmbeddingsAsync method in the OpenAIService.cs file creates an embedding for each item in the database.

```csharp
public async Task<float[]?> GetEmbeddingsAsync(dynamic data)
{
    try
    {
        EmbeddingsOptions options = new EmbeddingsOptions(data)
        {
            Input = data
        };

        var response = await _openAIClient.GetEmbeddingsAsync(openAIEmbeddingDeployment, options);

        Embeddings embeddings = response.Value;
        float[] embedding = embeddings.Data[0].Embedding.ToArray();

        return embedding;
    }
    catch (Exception ex)
    {
        Console.WriteLine($"GetEmbeddingsAsync Exception: {ex.Message}");
        return null;
    }
}
```

The CreateVectorIndexIfNotExists method in the VCoreMongoService.cs file creates a vector index, which enables you to perform vector similarity searches.

```csharp
public void CreateVectorIndexIfNotExists(string vectorIndexName)
{
    try
    {
        //Find if vector index exists in vectors collection
        using (IAsyncCursor<BsonDocument> indexCursor = _recipeCollection.Indexes.List())
        {
            bool vectorIndexExists = indexCursor.ToList().Any(x => x["name"] == vectorIndexName);
            if (!vectorIndexExists)
            {
                // Note: the collection and index names are hard-coded in this command,
                // so vectorIndexName must be 'vectorSearchIndex' for the check above to match.
                BsonDocumentCommand<BsonDocument> command = new BsonDocumentCommand<BsonDocument>(
                    BsonDocument.Parse(@"
                        { createIndexes: 'Recipe',
                          indexes: [{
                            name: 'vectorSearchIndex',
                            key: { embedding: 'cosmosSearch' },
                            cosmosSearchOptions: {
                                kind: 'vector-ivf',
                                numLists: 5,
                                similarity: 'COS',
                                dimensions: 1536 }
                          }]
                        }"));

                BsonDocument result = _database.RunCommand(command);
                if (result["ok"] != 1)
                {
                    Console.WriteLine("CreateIndex failed with response: " + result.ToJson());
                }
            }
        }
    }
    catch (MongoException ex)
    {
        Console.WriteLine("MongoDbService InitializeVectorIndex: " + ex.Message);
        throw;
    }
}
```

Select the Ask AI Assistant (search for a recipe by name or description, or ask a question) option in the application to run a user query.
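
Running a query chains the last two stages of the workflow from the app overview: the prompt is embedded, similar items are retrieved, and the chat model answers from those items. The following is a rough sketch of that control flow only. AskAsync is a hypothetical helper, the three delegates merely stand in for the sample's GetEmbeddingsAsync, VectorSearchAsync, and GetChatCompletionAsync methods, and the stubs return canned values so the code runs without any Azure resources.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Hypothetical orchestration helper; not taken from the sample app.
async Task<string> AskAsync(
    string userPrompt,
    Func<string, Task<float[]>> embed,
    Func<float[], Task<List<string>>> search,
    Func<string, string, Task<string>> complete)
{
    // 1. Convert the user prompt to an embedding.
    float[] queryVector = await embed(userPrompt);

    // 2. Retrieve the stored items whose embeddings are closest to it.
    List<string> documents = await search(queryVector);
    if (documents.Count == 0)
    {
        return "No matching recipes were found.";
    }

    // 3. Ask the chat model to answer using only the retrieved items.
    string context = string.Join(Environment.NewLine, documents);
    return await complete(userPrompt, context);
}

// Stub implementations with canned results, for illustration only.
string answer = await AskAsync(
    "How do I make pancakes?",
    embed: prompt => Task.FromResult(new float[] { 0.1f, 0.2f }),
    search: vector => Task.FromResult(new List<string> { "{\"name\":\"Pancakes\"}" }),
    complete: (prompt, context) => Task.FromResult($"Answer based on: {context}"));

Console.WriteLine(answer);
// prints: Answer based on: {"name":"Pancakes"}
```

Passing the stages as delegates keeps the sketch independent of any SDK; in the sample app the same sequence is carried out by concrete service classes.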

The user query is converted to an embedding using the OpenAI service and the embedding model. The embedding is then sent to Azure Cosmos DB for MongoDB and is used to perform a vector search. The VectorSearchAsync method in the VCoreMongoService.cs file performs a vector search to find vectors that are close to the supplied vector and returns a list of documents from Azure Cosmos DB for MongoDB vCore.

```csharp
// Requires: using System.Globalization; using System.Linq;
public async Task<List<Recipe>> VectorSearchAsync(float[] queryVector)
{
    try
    {
        // Format each component with the invariant culture so that the decimal
        // separator is always '.', regardless of the machine's regional settings.
        string vectorLiteral = string.Join(',',
            queryVector.Select(v => v.ToString(CultureInfo.InvariantCulture)));

        //Search Azure Cosmos DB for MongoDB vCore collection for similar embeddings
        //Project the fields that are needed
        BsonDocument[] pipeline = new BsonDocument[]
        {
            BsonDocument.Parse(
                @$"{{$search: {{
                        cosmosSearch:
                            {{ vector: [{vectorLiteral}],
                               path: 'embedding',
                               k: {_maxVectorSearchResults}}},
                        returnStoredSource:true
                    }}
                }}"),
            BsonDocument.Parse($"{{$project: {{embedding: 0}}}}"),
        };

        var bsonDocuments = await _recipeCollection
            .Aggregate<BsonDocument>(pipeline).ToListAsync();

        var recipes = bsonDocuments
            .ToList()
            .ConvertAll(bsonDocument => BsonSerializer.Deserialize<Recipe>(bsonDocument));

        return recipes;
    }
    catch (MongoException ex)
    {
        Console.WriteLine($"Exception: VectorSearchAsync(): {ex.Message}");
        throw;
    }
}
```

The GetChatCompletionAsync method generates an improved chat completion response based on the user prompt and the related vector search results.

```csharp
public async Task<(string response, int promptTokens, int responseTokens)> GetChatCompletionAsync(string userPrompt, string documents)
{
    try
    {
        // The retrieved documents are appended to the system prompt as grounding data.
        ChatMessage systemMessage = new ChatMessage(
            ChatRole.System, _systemPromptRecipeAssistant + documents);
        ChatMessage userMessage = new ChatMessage(
            ChatRole.User, userPrompt);

        ChatCompletionsOptions options = new()
        {
            Messages =
            {
                systemMessage,
                userMessage
            },
            MaxTokens = openAIMaxTokens,
            Temperature = 0.5f,
            NucleusSamplingFactor = 0.95f,
            FrequencyPenalty = 0,
            PresencePenalty = 0
        };

        Azure.Response<ChatCompletions> completionsResponse =
            await openAIClient.GetChatCompletionsAsync(openAICompletionDeployment, options);
        ChatCompletions completions = completionsResponse.Value;

        return (
            response: completions.Choices[0].Message.Content,
            promptTokens: completions.Usage.PromptTokens,
            responseTokens: completions.Usage.CompletionTokens
        );
    }
    catch (Exception ex)
    {
        string message = $"OpenAIService.GetChatCompletionAsync(): {ex.Message}";
        Console.WriteLine(message);
        throw;
    }
}
```

The app also uses prompt engineering to ensure that the OpenAI service limits its answers to the supplied recipes and formats the response for them.

```csharp
//System prompts to send with user prompts to instruct the model for chat session
private readonly string _systemPromptRecipeAssistant = @"
    You are an intelligent assistant for Contoso Recipes.
    You are designed to provide helpful answers to user questions about
    recipes, cooking instructions provided in JSON format below.

    Instructions:
    - Only answer questions related to the recipe provided below.
    - Don't reference any recipe not provided below.
    - If you're unsure of an answer, say ""I don't know"" and recommend users search themselves.
    - Your response should be complete.
    - List the Name of the Recipe at the start of your response followed by step by step cooking instructions.
    - Assume the user is not an expert in cooking.
    - Format the content so that it can be printed to the Command Line console.
    - In case there is more than one recipe you find, let the user pick the most appropriate recipe.";
```
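
Because the retrieved recipes are appended to the system prompt, the size of the search results directly affects how much of the model's input limit is consumed. The following is a minimal sketch of one way to assemble that grounded prompt under a size budget. BuildGroundedSystemPrompt is a hypothetical helper, recipes are modeled as plain dictionaries rather than the sample's Recipe class, and the budget counts characters as a rough stand-in for tokens.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical helper: appends retrieved recipes to the system prompt as JSON,
// dropping trailing results once a rough character budget is exceeded.
// The budget ignores the few characters of JSON array punctuation.
string BuildGroundedSystemPrompt(
    string systemPrompt,
    IReadOnlyList<Dictionary<string, string>> recipes,
    int maxChars)
{
    var kept = new List<Dictionary<string, string>>();
    int used = systemPrompt.Length;

    // Results are assumed to arrive most-similar first, so the
    // least relevant recipes are the ones that get dropped.
    foreach (var recipe in recipes)
    {
        int cost = JsonSerializer.Serialize(recipe).Length;
        if (used + cost > maxChars)
        {
            break;
        }
        kept.Add(recipe);
        used += cost;
    }

    return systemPrompt + Environment.NewLine + JsonSerializer.Serialize(kept);
}

// Made-up recipes, for illustration only.
var recipes = new List<Dictionary<string, string>>
{
    new() { ["name"] = "Pancakes", ["instructions"] = "Mix, then fry." },
    new() { ["name"] = "Waffles", ["instructions"] = "Mix, then bake in a waffle iron." }
};

string prompt = BuildGroundedSystemPrompt("You are an assistant.", recipes, maxChars: 80);
Console.WriteLine(prompt);
```

With the 80-character budget used here, only the first recipe fits, so the second is left out of the prompt. A production version would more likely count tokens with the tokenizer that matches the deployed model.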