Connect to dbt Cloud

dbt (data build tool) is a development environment that enables data analysts and data engineers to transform data by simply writing select statements. dbt handles turning these select statements into tables and views. dbt compiles your code into raw SQL and then runs that code on the specified database in Azure Databricks. dbt supports collaborative coding patterns and best practices such as version control, documentation, and modularity.

dbt does not extract or load data. dbt focuses on the transformation step only, using a “transform after load” architecture. dbt assumes that you already have a copy of your data in your database.

This article focuses on dbt Cloud. dbt Cloud comes equipped with turnkey support for scheduling jobs, CI/CD, serving documentation, monitoring and alerting, and an integrated development environment (IDE).

A local version of dbt called dbt Core is also available. dbt Core enables you to write dbt code in the text editor or IDE of your choice on your local development machine and then run dbt from the command line. dbt Core includes the dbt Command Line Interface (CLI). The dbt CLI is free to use and open source. For more information, see Connect to dbt Core.

Because dbt Cloud and dbt Core can use hosted git repositories (for example, on GitHub, GitLab or BitBucket), you can use dbt Cloud to create a dbt project and then make it available to your dbt Cloud and dbt Core users. For more information, see Creating a dbt project and Using an existing project on the dbt website.

For a general overview of dbt, watch the following YouTube video (26 minutes).

Connect to dbt Cloud using Partner Connect

This section describes how to connect a Databricks SQL warehouse to dbt Cloud using Partner Connect, then give dbt Cloud read access to your data.

Differences between standard connections and dbt Cloud

To connect to dbt Cloud using Partner Connect, you follow the steps in Connect to data prep partners using Partner Connect. The dbt Cloud connection is different from standard data preparation and transformation connections in the following ways:

- In addition to a service principal and a personal access token, Partner Connect creates a SQL warehouse (formerly SQL endpoint) named DBT_CLOUD_ENDPOINT by default.

Steps to connect

To connect to dbt Cloud using Partner Connect, do the following:

After you connect to dbt Cloud, your dbt Cloud dashboard appears. To explore your dbt Cloud project, in the menu bar, next to the dbt logo, select your dbt account name from the first drop-down if it is not displayed, and then select the Databricks Partner Connect Trial project from the second drop-down menu if it is not displayed.

Tip

To view your project’s settings, click the “three stripes” or “hamburger” menu, click Account Settings > Projects, and click the name of the project. To view the connection settings, click the link next to Connection. To change any settings, click Edit.

To view the Azure Databricks personal access token information for this project, click the “person” icon on the menu bar, click Profile > Credentials > Databricks Partner Connect Trial, and click the name of the project. To make a change, click Edit.

Steps to give dbt Cloud read access to your data

Partner Connect gives create-only permission to the DBT_CLOUD_USER service principal only on the default catalog. Follow these steps in your Azure Databricks workspace to give the DBT_CLOUD_USER service principal read access to the data that you choose.

Warning

You can adapt these steps to give dbt Cloud additional access across catalogs, databases, and tables within your workspace. However, as a security best practice, Databricks strongly recommends that you give access only to the individual tables that you need the DBT_CLOUD_USER service principal to work with and only read access to those tables.

Click

Catalog in the sidebar.

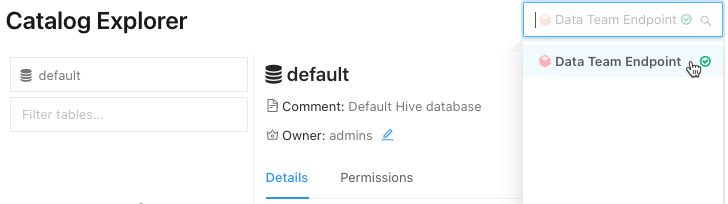

Catalog in the sidebar.Select the SQL warehouse (DBT_CLOUD_ENDPOINT) in the drop-down list at the top right.

- Under Catalog Explorer, select the catalog that contains the database for your table.

- Select the database that contains your table.

- Select your table.

Tip

If you do not see your catalog, database, or table listed, enter any portion of the name in the Select Catalog, Select Database, or Filter tables boxes, respectively, to narrow down the list.

Click Permissions.

Click Grant.

For Type to add multiple users or groups, select DBT_CLOUD_USER. This is the Azure Databricks service principal that Partner Connect created for you in the previous section.

Tip

If you do not see DBT_CLOUD_USER, begin typing DBT_CLOUD_USER in the Type to add multiple users or groups box until it appears in the list, and then select it.

Grant read access only by selecting SELECT and READ METADATA.

Click OK.

Repeat steps 4-9 for each additional table that you want to give dbt Cloud read access to.

Troubleshoot the dbt Cloud connection

If someone deletes the project in dbt Cloud for this account and you then click the dbt tile, an error message appears stating that the project cannot be found. To fix this, click Delete connection, and then start from the beginning of this procedure to create the connection again.

Connect to dbt Cloud manually

This section describes how to connect an Azure Databricks cluster or a Databricks SQL warehouse in your Azure Databricks workspace to dbt Cloud.

Important

Databricks recommends connecting to a SQL warehouse. If you don’t have the Databricks SQL access entitlement, or if you want to run Python models, you can connect to a cluster instead.

Requirements

A cluster or SQL warehouse in your Azure Databricks workspace.

The connection details for your cluster or SQL warehouse, specifically the Server Hostname, Port, and HTTP Path values.

An Azure Databricks personal access token or a Microsoft Entra ID (formerly Azure Active Directory) token. To create a personal access token, follow the steps in Azure Databricks personal access tokens for workspace users.

Note

As a security best practice, when you authenticate with automated tools, systems, scripts, and apps, Databricks recommends that you use personal access tokens belonging to service principals instead of workspace users. To create tokens for service principals, see Manage tokens for a service principal.

To connect dbt Cloud to data managed by Unity Catalog, you need dbt version 1.1 or above.

The steps in this article create a new environment that uses the latest dbt version. For information about upgrading the dbt version for an existing environment, see Upgrading to the latest version of dbt in Cloud in the dbt documentation.
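Before entering the connection details from the requirements into dbt Cloud, it can help to sanity-check them. The following Python sketch is illustrative only: the `ConnectionDetails` class, the `check_connection_details` function, and the specific checks (no URL scheme in the hostname, an HTTP path that starts with `/`, port 443 as the default) are assumptions made for this example and are not part of dbt Cloud or Azure Databricks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConnectionDetails:
    """Hypothetical container for the values listed in the requirements."""
    server_hostname: str
    http_path: str
    port: int = 443  # Assumption: the standard HTTPS port.


def check_connection_details(details: ConnectionDetails) -> list[str]:
    """Return a list of problems found; an empty list means none were found."""
    problems = []
    if not details.server_hostname:
        problems.append("server hostname is empty")
    elif "://" in details.server_hostname:
        # Assumption: the hostname is entered without a scheme such as https://.
        problems.append("server hostname should not include a URL scheme")
    if not details.http_path.startswith("/"):
        # Assumption: the HTTP path is an absolute path.
        problems.append("HTTP path should start with '/'")
    if not 0 < details.port < 65536:
        problems.append("port is out of range")
    return problems


# Placeholder values for illustration only; substitute your own.
example = ConnectionDetails("example.azuredatabricks.net", "/sql/1.0/warehouses/example")
print(check_connection_details(example))
```

An empty list only means that the values look plausible. The Test Connection button in dbt Cloud remains the real check.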

Step 1: Sign up for dbt Cloud

Go to dbt Cloud - Signup and enter your email, name, and company information. Create a password and click Create my account.

Step 2: Create a dbt project

In this step, you create a dbt project, which contains a connection to an Azure Databricks cluster or a SQL warehouse, a repository that contains your source code, and one or more environments (such as testing and production environments).

- Sign in to dbt Cloud.

- Click the settings icon, and then click Account Settings.

- Click New Project.

- For Name, enter a unique name for your project, and then click Continue.

- Select an Azure Databricks compute connection from the Choose a connection drop-down menu or create a new connection:

Click Add new connection.

The Add new connection wizard opens in a new tab.

Click Databricks, and then click Next.

Note

Databricks recommends using dbt-databricks, which supports Unity Catalog, instead of dbt-spark. By default, new projects use dbt-databricks. To migrate an existing project to dbt-databricks, see Migrating from dbt-spark to dbt-databricks in the dbt documentation.

Under Settings, for Server Hostname, enter the server hostname value from the requirements.

For HTTP Path, enter the HTTP path value from the requirements.

If your workspace is Unity Catalog-enabled, under Optional Settings, enter the name of the catalog for dbt to use.

Click Save.

Return to the New project wizard and select the connection you just created from the Connection drop-down menu.

- Under Development Credentials, for Token, enter the personal access token or Microsoft Entra ID token from the requirements.

- For Schema, enter the name of the schema where you want dbt to create the tables and views.

- Click Test Connection.

- If the test completes successfully, click Save.

For more information, see Connecting to Databricks ODBC on the dbt website.

Tip

To view or change the settings for this project, or to delete the project altogether, click the settings icon, click Account Settings > Projects, and click the name of the project. To change the settings, click Edit. To delete the project, click Edit > Delete Project.

To view or change your Azure Databricks personal access token value for this project, click the “person” icon, click Profile > Credentials, and click the name of the project. To make a change, click Edit.

After you connect to an Azure Databricks cluster or a Databricks SQL warehouse, follow the on-screen instructions to Setup a Repository, and then click Continue.

After you set up the repository, follow the on-screen instructions to invite users and then click Complete. Or click Skip & Complete.

Tutorial

In this section, you use your dbt Cloud project to work with some sample data. This section assumes that you have already created your project and have the dbt Cloud IDE open to that project.

Step 1: Create and run models

In this step, you use the dbt Cloud IDE to create and run models, which are select statements that create either a new view (the default) or a new table in a database, based on existing data in that same database. This procedure creates a model based on the sample diamonds table from the Sample datasets.

Use the following code to create this table.

    DROP TABLE IF EXISTS diamonds;

    CREATE TABLE diamonds USING CSV OPTIONS (path "/databricks-datasets/Rdatasets/data-001/csv/ggplot2/diamonds.csv", header "true")

This procedure assumes this table has already been created in your workspace’s default database.

With the project open, click Develop at the top of the UI.

Click Initialize dbt project.

Click Commit and sync, enter a commit message, and then click Commit.

Click Create branch, enter a name for your branch, and then click Submit.

Create the first model: Click Create New File.

In the text editor, enter the following SQL statement. This statement selects only the carat, cut, color, and clarity details for each diamond from the diamonds table. The config block instructs dbt to create a table in the database based on this statement.

    {{ config(
      materialized='table',
      file_format='delta'
    ) }}

    select carat, cut, color, clarity
    from diamonds

Tip

For additional config options such as the merge incremental strategy, see Databricks configurations in the dbt documentation.

Click Save As.

For the filename, enter models/diamonds_four_cs.sql and then click Create.

Create a second model: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This statement selects unique values from the color column in the diamonds_four_cs table, sorting the results in alphabetical order first to last. Because there is no config block, this model instructs dbt to create a view in the database based on this statement.

    select distinct color
    from diamonds_four_cs
    sort by color asc

Click Save As.

For the filename, enter models/diamonds_list_colors.sql, and then click Create.

Create a third model: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This statement averages diamond prices by color, sorting the results by average price from highest to lowest. This model instructs dbt to create a view in the database based on this statement.

    select color, avg(price) as price
    from diamonds
    group by color
    order by price desc

Click Save As.

For the filename, enter models/diamonds_prices.sql and click Create.

Run the models: In the command line, run the dbt run command with the paths to the three preceding files. In the default database, dbt creates one table named diamonds_four_cs and two views named diamonds_list_colors and diamonds_prices. dbt gets these view and table names from their related .sql file names.

    dbt run --model models/diamonds_four_cs.sql models/diamonds_list_colors.sql models/diamonds_prices.sql

    ...
    ... | 1 of 3 START table model default.diamonds_four_cs.................... [RUN]
    ... | 1 of 3 OK created table model default.diamonds_four_cs............... [OK ...]
    ... | 2 of 3 START view model default.diamonds_list_colors................. [RUN]
    ... | 2 of 3 OK created view model default.diamonds_list_colors............ [OK ...]
    ... | 3 of 3 START view model default.diamonds_prices...................... [RUN]
    ... | 3 of 3 OK created view model default.diamonds_prices................. [OK ...]
    ... |
    ... | Finished running 1 table model, 2 view models ...

    Completed successfully

    Done. PASS=3 WARN=0 ERROR=0 SKIP=0 TOTAL=3

Run the following SQL code to list information about the new views and to select all rows from the table and views.

If you are connecting to a cluster, you can run this SQL code from a notebook that is attached to the cluster, specifying SQL as the default language for the notebook. If you are connecting to a SQL warehouse, you can run this SQL code from a query.

    SHOW views IN default

    +-----------+----------------------+-------------+
    | namespace | viewName             | isTemporary |
    +===========+======================+=============+
    | default   | diamonds_list_colors | false       |
    +-----------+----------------------+-------------+
    | default   | diamonds_prices      | false       |
    +-----------+----------------------+-------------+

    SELECT * FROM diamonds_four_cs

    +-------+---------+-------+---------+
    | carat | cut     | color | clarity |
    +=======+=========+=======+=========+
    | 0.23  | Ideal   | E     | SI2     |
    +-------+---------+-------+---------+
    | 0.21  | Premium | E     | SI1     |
    +-------+---------+-------+---------+
    ...

    SELECT * FROM diamonds_list_colors

    +-------+
    | color |
    +=======+
    | D     |
    +-------+
    | E     |
    +-------+
    ...

    SELECT * FROM diamonds_prices

    +-------+---------+
    | color | price   |
    +=======+=========+
    | J     | 5323.82 |
    +-------+---------+
    | I     | 5091.87 |
    +-------+---------+
    ...
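The run output in this step names each relation after its model file in the default database. The following Python sketch illustrates that naming convention only. The `relation_name` function is a hypothetical helper written for this example, not part of dbt, and it assumes the schema is default and ignores dbt features such as aliases or custom schemas.

```python
from pathlib import PurePosixPath


def relation_name(model_path: str, schema: str = "default") -> str:
    """Build '<schema>.<model>' using the file name without .sql as the model name."""
    return f"{schema}.{PurePosixPath(model_path).stem}"


# The three model files created in this step.
model_paths = [
    "models/diamonds_four_cs.sql",
    "models/diamonds_list_colors.sql",
    "models/diamonds_prices.sql",
]

for path in model_paths:
    print(relation_name(path))
```

The printed names correspond to the relations that appear in the dbt run log, for example default.diamonds_four_cs.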

Step 2: Create and run more complex models

In this step, you create more complex models for a set of related data tables. These data tables contain information about a fictional sports league of three teams playing a season of six games. This procedure creates the data tables, creates the models, and runs the models.

Run the following SQL code to create the necessary data tables.

If you are connecting to a cluster, you can run this SQL code from a notebook that is attached to the cluster, specifying SQL as the default language for the notebook. If you are connecting to a SQL warehouse, you can run this SQL code from a query.

The tables and views in this step start with zzz_ to help identify them as part of this example. You do not need to follow this pattern for your own tables and views.

    DROP TABLE IF EXISTS zzz_game_opponents;
    DROP TABLE IF EXISTS zzz_game_scores;
    DROP TABLE IF EXISTS zzz_games;
    DROP TABLE IF EXISTS zzz_teams;

    CREATE TABLE zzz_game_opponents (
      game_id INT,
      home_team_id INT,
      visitor_team_id INT
    ) USING DELTA;

    INSERT INTO zzz_game_opponents VALUES (1, 1, 2);
    INSERT INTO zzz_game_opponents VALUES (2, 1, 3);
    INSERT INTO zzz_game_opponents VALUES (3, 2, 1);
    INSERT INTO zzz_game_opponents VALUES (4, 2, 3);
    INSERT INTO zzz_game_opponents VALUES (5, 3, 1);
    INSERT INTO zzz_game_opponents VALUES (6, 3, 2);

    -- Result:
    -- +---------+--------------+-----------------+
    -- | game_id | home_team_id | visitor_team_id |
    -- +=========+==============+=================+
    -- | 1       | 1            | 2               |
    -- +---------+--------------+-----------------+
    -- | 2       | 1            | 3               |
    -- +---------+--------------+-----------------+
    -- | 3       | 2            | 1               |
    -- +---------+--------------+-----------------+
    -- | 4       | 2            | 3               |
    -- +---------+--------------+-----------------+
    -- | 5       | 3            | 1               |
    -- +---------+--------------+-----------------+
    -- | 6       | 3            | 2               |
    -- +---------+--------------+-----------------+

    CREATE TABLE zzz_game_scores (
      game_id INT,
      home_team_score INT,
      visitor_team_score INT
    ) USING DELTA;

    INSERT INTO zzz_game_scores VALUES (1, 4, 2);
    INSERT INTO zzz_game_scores VALUES (2, 0, 1);
    INSERT INTO zzz_game_scores VALUES (3, 1, 2);
    INSERT INTO zzz_game_scores VALUES (4, 3, 2);
    INSERT INTO zzz_game_scores VALUES (5, 3, 0);
    INSERT INTO zzz_game_scores VALUES (6, 3, 1);

    -- Result:
    -- +---------+-----------------+--------------------+
    -- | game_id | home_team_score | visitor_team_score |
    -- +=========+=================+====================+
    -- | 1       | 4               | 2                  |
    -- +---------+-----------------+--------------------+
    -- | 2       | 0               | 1                  |
    -- +---------+-----------------+--------------------+
    -- | 3       | 1               | 2                  |
    -- +---------+-----------------+--------------------+
    -- | 4       | 3               | 2                  |
    -- +---------+-----------------+--------------------+
    -- | 5       | 3               | 0                  |
    -- +---------+-----------------+--------------------+
    -- | 6       | 3               | 1                  |
    -- +---------+-----------------+--------------------+

    CREATE TABLE zzz_games (
      game_id INT,
      game_date DATE
    ) USING DELTA;

    INSERT INTO zzz_games VALUES (1, '2020-12-12');
    INSERT INTO zzz_games VALUES (2, '2021-01-09');
    INSERT INTO zzz_games VALUES (3, '2020-12-19');
    INSERT INTO zzz_games VALUES (4, '2021-01-16');
    INSERT INTO zzz_games VALUES (5, '2021-01-23');
    INSERT INTO zzz_games VALUES (6, '2021-02-06');

    -- Result:
    -- +---------+------------+
    -- | game_id | game_date  |
    -- +=========+============+
    -- | 1       | 2020-12-12 |
    -- +---------+------------+
    -- | 2       | 2021-01-09 |
    -- +---------+------------+
    -- | 3       | 2020-12-19 |
    -- +---------+------------+
    -- | 4       | 2021-01-16 |
    -- +---------+------------+
    -- | 5       | 2021-01-23 |
    -- +---------+------------+
    -- | 6       | 2021-02-06 |
    -- +---------+------------+

    CREATE TABLE zzz_teams (
      team_id INT,
      team_city VARCHAR(15)
    ) USING DELTA;

    INSERT INTO zzz_teams VALUES (1, "San Francisco");
    INSERT INTO zzz_teams VALUES (2, "Seattle");
    INSERT INTO zzz_teams VALUES (3, "Amsterdam");

    -- Result:
    -- +---------+---------------+
    -- | team_id | team_city     |
    -- +=========+===============+
    -- | 1       | San Francisco |
    -- +---------+---------------+
    -- | 2       | Seattle       |
    -- +---------+---------------+
    -- | 3       | Amsterdam     |
    -- +---------+---------------+

Create the first model: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This statement creates a table that provides the details of each game, such as team names and scores. The config block instructs dbt to create a table in the database based on this statement.

    -- Create a table that provides full details for each game, including
    -- the game ID, the home and visiting teams' city names and scores,
    -- the game winner's city name, and the game date.

    {{ config(
      materialized='table',
      file_format='delta'
    ) }}

    -- Step 4 of 4: Replace the visitor team IDs with their city names.
    select
      game_id,
      home,
      t.team_city as visitor,
      home_score,
      visitor_score,
      -- Step 3 of 4: Display the city name for each game's winner.
      case
        when home_score > visitor_score then home
        when visitor_score > home_score then t.team_city
      end as winner,
      game_date as date
    from (
      -- Step 2 of 4: Replace the home team IDs with their actual city names.
      select
        game_id,
        t.team_city as home,
        home_score,
        visitor_team_id,
        visitor_score,
        game_date
      from (
        -- Step 1 of 4: Combine data from various tables (for example, game and team IDs, scores, dates).
        select
          g.game_id,
          go.home_team_id,
          gs.home_team_score as home_score,
          go.visitor_team_id,
          gs.visitor_team_score as visitor_score,
          g.game_date
        from
          zzz_games as g,
          zzz_game_opponents as go,
          zzz_game_scores as gs
        where
          g.game_id = go.game_id and
          g.game_id = gs.game_id
      ) as all_ids,
        zzz_teams as t
      where
        all_ids.home_team_id = t.team_id
    ) as visitor_ids,
      zzz_teams as t
    where
      visitor_ids.visitor_team_id = t.team_id

    order by game_date desc

Click Save As.

For the filename, enter models/zzz_game_details.sql and then click Create.

Create a second model: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This statement creates a view that lists team win-loss records for the season.

    -- Create a view that summarizes the season's win and loss records by team.

    -- Step 2 of 2: Calculate the number of wins and losses for each team.
    select
      winner as team,
      count(winner) as wins,
      -- Each team played in 4 games.
      (4 - count(winner)) as losses
    from (
      -- Step 1 of 2: Determine the winner and loser for each game.
      select
        game_id,
        winner,
        case
          when home = winner then visitor
          else home
        end as loser
      from zzz_game_details
    )
    group by winner
    order by wins desc

Click Save As.

For the filename, enter models/zzz_win_loss_records.sql and then click Create.

Run the models: In the command line, run the dbt run command with the paths to the two preceding files. In the default database (as specified in your project settings), dbt creates one table named zzz_game_details and one view named zzz_win_loss_records. dbt gets these view and table names from their related .sql file names.

    dbt run --model models/zzz_game_details.sql models/zzz_win_loss_records.sql

    ...
    ... | 1 of 2 START table model default.zzz_game_details.................... [RUN]
    ... | 1 of 2 OK created table model default.zzz_game_details............... [OK ...]
    ... | 2 of 2 START view model default.zzz_win_loss_records................. [RUN]
    ... | 2 of 2 OK created view model default.zzz_win_loss_records............ [OK ...]
    ... |
    ... | Finished running 1 table model, 1 view model ...

    Completed successfully

    Done. PASS=2 WARN=0 ERROR=0 SKIP=0 TOTAL=2

Run the following SQL code to list information about the new view and to select all rows from the table and view.

If you are connecting to a cluster, you can run this SQL code from a notebook that is attached to the cluster, specifying SQL as the default language for the notebook. If you are connecting to a SQL warehouse, you can run this SQL code from a query.

    SHOW VIEWS FROM default LIKE 'zzz_win_loss_records';

    +-----------+----------------------+-------------+
    | namespace | viewName             | isTemporary |
    +===========+======================+=============+
    | default   | zzz_win_loss_records | false       |
    +-----------+----------------------+-------------+

    SELECT * FROM zzz_game_details;

    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | game_id | home          | visitor       | home_score | visitor_score | winner        | date       |
    +=========+===============+===============+============+===============+===============+============+
    | 1       | San Francisco | Seattle       | 4          | 2             | San Francisco | 2020-12-12 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | 2       | San Francisco | Amsterdam     | 0          | 1             | Amsterdam     | 2021-01-09 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | 3       | Seattle       | San Francisco | 1          | 2             | San Francisco | 2020-12-19 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | 4       | Seattle       | Amsterdam     | 3          | 2             | Seattle       | 2021-01-16 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | 5       | Amsterdam     | San Francisco | 3          | 0             | Amsterdam     | 2021-01-23 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+
    | 6       | Amsterdam     | Seattle       | 3          | 1             | Amsterdam     | 2021-02-06 |
    +---------+---------------+---------------+------------+---------------+---------------+------------+

    SELECT * FROM zzz_win_loss_records;

    +---------------+------+--------+
    | team          | wins | losses |
    +===============+======+========+
    | Amsterdam     | 3    | 1      |
    +---------------+------+--------+
    | San Francisco | 2    | 2      |
    +---------------+------+--------+
    | Seattle       | 1    | 3      |
    +---------------+------+--------+
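To see how the win-loss records follow from the source tables, the following Python sketch recomputes them from the same values that this step inserts into zzz_teams, zzz_game_opponents, and zzz_game_scores. It is an independent cross-check written for illustration, not how dbt or Databricks evaluates the models. The `win_loss_records` function and the `GAMES_PER_TEAM` constant are names chosen for this example.

```python
from collections import Counter

# Values copied from the INSERT statements in this step.
teams = {1: "San Francisco", 2: "Seattle", 3: "Amsterdam"}
# (game_id, home_team_id, visitor_team_id)
opponents = [(1, 1, 2), (2, 1, 3), (3, 2, 1), (4, 2, 3), (5, 3, 1), (6, 3, 2)]
# game_id -> (home_team_score, visitor_team_score)
scores = {1: (4, 2), 2: (0, 1), 3: (1, 2), 4: (3, 2), 5: (3, 0), 6: (3, 1)}

GAMES_PER_TEAM = 4  # Each of the three teams plays in four of the six games.


def win_loss_records():
    """Return (team, wins, losses) tuples ordered by wins, highest first."""
    wins = Counter()
    for game_id, home_id, visitor_id in opponents:
        home_score, visitor_score = scores[game_id]
        if home_score > visitor_score:
            wins[teams[home_id]] += 1
        elif visitor_score > home_score:
            wins[teams[visitor_id]] += 1
    return [(team, won, GAMES_PER_TEAM - won) for team, won in wins.most_common()]


for record in win_loss_records():
    print(record)
```

Like the zzz_win_loss_records model, which groups by winner, this sketch lists only teams that won at least one game. A team with no wins would not appear in either result.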

Step 3: Create and run tests

In this step, you create tests, which are assertions you make about your models. When you run these tests, dbt tells you if each test in your project passes or fails.

There are two types of tests. Schema tests, written in YAML, return the number of records that do not pass an assertion. When this number is zero, all records pass, and therefore the test passes. Data tests are specific queries that must return zero records to pass.
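The idea that a test passes when the number of failing records is zero can be sketched in a few lines of Python. This is a simplified illustration, not dbt's implementation (dbt compiles tests into SQL queries). The function names below are hypothetical and chosen to mirror the unique, not_null, and accepted_values tests used later in this step.

```python
from collections import Counter


def failing_not_null(values):
    """Count records whose value is missing."""
    return sum(1 for value in values if value is None)


def failing_unique(values):
    """Count distinct non-null values that appear more than once."""
    counts = Counter(value for value in values if value is not None)
    return sum(1 for count in counts.values() if count > 1)


def failing_accepted_values(values, accepted):
    """Count non-null records whose value is outside the accepted set."""
    return sum(1 for value in values if value is not None and value not in accepted)


def passes(failing_count):
    """A test passes only when no records fail the assertion."""
    return failing_count == 0


# Columns taken from the zzz_game_details table in the previous step.
game_ids = [1, 2, 3, 4, 5, 6]
home = ["San Francisco", "San Francisco", "Seattle", "Seattle", "Amsterdam", "Amsterdam"]
cities = {"Amsterdam", "San Francisco", "Seattle"}

print(passes(failing_unique(game_ids)))
print(passes(failing_not_null(home)))
print(passes(failing_accepted_values(home, cities)))
print(passes(failing_unique(home)))
```

The last check does not pass because each city hosts two games, which is why the schema file in this step applies unique to game_id but not to home.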

Create the schema tests: Click (Create New File) in the upper-right corner.

In the text editor, enter the following content. This file includes schema tests that determine whether the specified columns have unique values, are not null, have only the specified values, or a combination.

    version: 2

    models:
      - name: zzz_game_details
        columns:
          - name: game_id
            tests:
              - unique
              - not_null
          - name: home
            tests:
              - not_null
              - accepted_values:
                  values: ['Amsterdam', 'San Francisco', 'Seattle']
          - name: visitor
            tests:
              - not_null
              - accepted_values:
                  values: ['Amsterdam', 'San Francisco', 'Seattle']
          - name: home_score
            tests:
              - not_null
          - name: visitor_score
            tests:
              - not_null
          - name: winner
            tests:
              - not_null
              - accepted_values:
                  values: ['Amsterdam', 'San Francisco', 'Seattle']
          - name: date
            tests:
              - not_null
      - name: zzz_win_loss_records
        columns:
          - name: team
            tests:
              - unique
              - not_null
              - relationships:
                  to: ref('zzz_game_details')
                  field: home
          - name: wins
            tests:
              - not_null
          - name: losses
            tests:
              - not_null

Click Save As.

For the filename, enter models/schema.yml, and then click Create.

Create the first data test: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This file includes a data test to determine whether any games happened outside of the regular season.

    -- This season's games happened between 2020-12-12 and 2021-02-06.
    -- For this test to pass, this query must return no results.

    select date
    from zzz_game_details
    where date < '2020-12-12'
    or date > '2021-02-06'

Click Save As.

For the filename, enter tests/zzz_game_details_check_dates.sql, and then click Create.

Create a second data test: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This file includes a data test to determine whether any scores were negative or any games were tied.

    -- This sport allows no negative scores or tie games.
    -- For this test to pass, this query must return no results.

    select home_score, visitor_score
    from zzz_game_details
    where home_score < 0
    or visitor_score < 0
    or home_score = visitor_score

Click Save As.

For the filename, enter tests/zzz_game_details_check_scores.sql, and then click Create.

Create a third data test: Click (Create New File) in the upper-right corner.

In the text editor, enter the following SQL statement. This file includes a data test to determine whether any teams had negative win or loss records, had more win or loss records than games played, or played more games than were allowed.

    -- Each team participated in 4 games this season.
    -- For this test to pass, this query must return no results.

    select wins, losses
    from zzz_win_loss_records
    where wins < 0 or wins > 4
    or losses < 0 or losses > 4
    or (wins + losses) > 4

Click Save As.

For the filename, enter tests/zzz_win_loss_records_check_records.sql, and then click Create.

Run the tests: In the command line, run the dbt test command.
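To get a feel for what the three data tests check, the following Python sketch runs the same queries against an in-memory SQLite database that is loaded with the rows shown in the Step 2 output. This is an approximation under stated assumptions: SQLite stands in for the Databricks SQL warehouse or cluster, dates are stored as ISO-formatted text so that string comparison matches date order, and the `run_data_tests` function is a hypothetical helper. It does not replace running dbt test.

```python
import sqlite3

# Rows copied from the zzz_game_details and zzz_win_loss_records output in Step 2.
GAME_DETAILS = [
    (1, "San Francisco", "Seattle", 4, 2, "San Francisco", "2020-12-12"),
    (2, "San Francisco", "Amsterdam", 0, 1, "Amsterdam", "2021-01-09"),
    (3, "Seattle", "San Francisco", 1, 2, "San Francisco", "2020-12-19"),
    (4, "Seattle", "Amsterdam", 3, 2, "Seattle", "2021-01-16"),
    (5, "Amsterdam", "San Francisco", 3, 0, "Amsterdam", "2021-01-23"),
    (6, "Amsterdam", "Seattle", 3, 1, "Amsterdam", "2021-02-06"),
]
WIN_LOSS = [("Amsterdam", 3, 1), ("San Francisco", 2, 2), ("Seattle", 1, 3)]

# The three data test queries from this step, keyed by test file name.
DATA_TESTS = {
    "zzz_game_details_check_dates": """
        select date from zzz_game_details
        where date < '2020-12-12' or date > '2021-02-06'
    """,
    "zzz_game_details_check_scores": """
        select home_score, visitor_score from zzz_game_details
        where home_score < 0 or visitor_score < 0 or home_score = visitor_score
    """,
    "zzz_win_loss_records_check_records": """
        select wins, losses from zzz_win_loss_records
        where wins < 0 or wins > 4 or losses < 0 or losses > 4 or (wins + losses) > 4
    """,
}


def run_data_tests():
    """Return {test name: number of failing rows}; zero means the test passes."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "create table zzz_game_details (game_id integer, home text, visitor text, "
        "home_score integer, visitor_score integer, winner text, date text)"
    )
    conn.executemany("insert into zzz_game_details values (?, ?, ?, ?, ?, ?, ?)", GAME_DETAILS)
    conn.execute("create table zzz_win_loss_records (team text, wins integer, losses integer)")
    conn.executemany("insert into zzz_win_loss_records values (?, ?, ?)", WIN_LOSS)
    results = {name: len(conn.execute(sql).fetchall()) for name, sql in DATA_TESTS.items()}
    conn.close()
    return results


for name, failing_rows in run_data_tests().items():
    print(name, "PASS" if failing_rows == 0 else f"FAIL ({failing_rows} rows)")
```

Changing one of the rows, for example setting a score to a negative number, makes the corresponding query return a row and the test report a failure.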

Step 4: Clean up

You can delete the tables and views you created for this example by running the following SQL code.

If you are connecting to a cluster, you can run this SQL code from a notebook that is attached to the cluster, specifying SQL as the default language for the notebook. If you are connecting to a SQL warehouse, you can run this SQL code from a query.

    DROP TABLE zzz_game_opponents;
    DROP TABLE zzz_game_scores;
    DROP TABLE zzz_games;
    DROP TABLE zzz_teams;
    DROP TABLE zzz_game_details;
    DROP VIEW zzz_win_loss_records;

    DROP TABLE diamonds;
    DROP TABLE diamonds_four_cs;
    DROP VIEW diamonds_list_colors;
    DROP VIEW diamonds_prices;

Next steps

- Learn more about dbt models.

- Learn how to test your dbt projects.

- Learn how to use Jinja, a templating language, for programming SQL in your dbt projects.

- Learn about dbt best practices.