Capture and view data lineage using Unity Catalog

This article describes how to capture and visualize data lineage using Catalog Explorer, the data lineage system tables, and the REST API.

You can use Unity Catalog to capture runtime data lineage across queries run on Azure Databricks. Lineage is supported for all languages and is captured down to the column level. Lineage data includes notebooks, jobs, and dashboards related to the query. Lineage can be visualized in Catalog Explorer in near real time and retrieved programmatically using the lineage system tables and the Databricks REST API.

Lineage is aggregated across all workspaces attached to a Unity Catalog metastore. This means that lineage captured in one workspace is visible in any other workspace that shares that metastore. Specifically, tables and other data objects registered in the metastore are visible to users who have at least BROWSE permissions on those objects, across all workspaces attached to the metastore. However, detailed information about workspace-level objects like notebooks and dashboards in other workspaces is masked (see Limitations and Lineage permissions).

Lineage data is retained for one year.

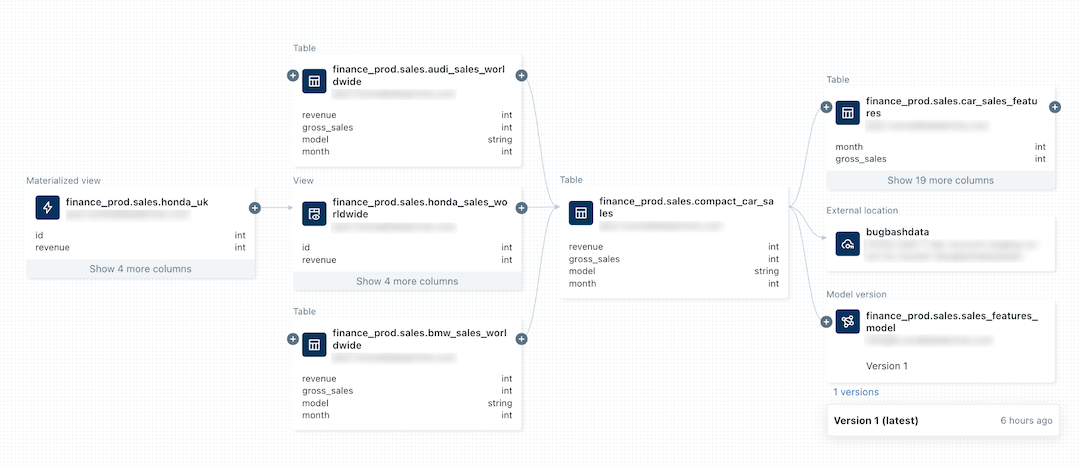

The following image is a sample lineage graph. Specific data lineage functionality and examples are addressed later in this article.

For information about tracking the lineage of a machine learning model, see Track the data lineage of a model in Unity Catalog.

Requirements

The following are required to capture data lineage using Unity Catalog:

- The workspace must have Unity Catalog enabled.

- Tables must be registered in a Unity Catalog metastore.

- Queries must use the Spark DataFrame (for example, Spark SQL functions that return a DataFrame) or Databricks SQL interfaces. For examples of Databricks SQL and PySpark queries, see Examples.

- To view the lineage of a table or view, users must have at least the

BROWSEprivilege on the parent catalog of the table or view. The parent catalog must also be accessible from the workspace. See Limit catalog access to specific workspaces. - To view lineage information for notebooks, jobs, or dashboards, users must have permissions on these objects as defined by the access control settings in the workspace. See Lineage permissions.

- To view lineage for a Unity Catalog-enabled pipeline, you must have

CAN_VIEWpermissions on the pipeline. - Lineage tracking of streaming between Delta tables requires Databricks Runtime 11.3 LTS or above.

- Column lineage tracking for DLT workloads requires Databricks Runtime 13.3 LTS or above.

- You might need to update your outbound firewall rules to allow for connectivity to the Event Hubs endpoint in the Azure Databricks control plane. Typically this applies if your Azure Databricks workspace is deployed in your own VNet (also known as VNet injection). To get the Event Hubs endpoint for your workspace region, see Metastore, artifact Blob storage, system tables storage, log Blob storage, and Event Hubs endpoint IP addresses. For information about setting up user-defined routes (UDR) for Azure Databricks, see User-defined route settings for Azure Databricks.

Examples

Note

The following examples use the catalog name

lineage_dataand the schema namelineagedemo. To use a different catalog and schema, change the names used in the examples.To complete this example, you must have

CREATEandUSE SCHEMAprivileges on a schema. A metastore admin, catalog owner, schema owner, or user with theMANAGEprivilege on the schema can grant these privileges. For example, to give all users in the group ‘data_engineers’ permission to create tables in thelineagedemoschema in thelineage_datacatalog, a user with one of the above privileges or roles can run the following queries:CREATE SCHEMA lineage_data.lineagedemo; GRANT USE SCHEMA, CREATE on SCHEMA lineage_data.lineagedemo to `data_engineers`;

Capture and explore lineage

To capture lineage data:

Go to your Azure Databricks landing page, click

New in the sidebar, and select Notebook from the menu.

New in the sidebar, and select Notebook from the menu.Enter a name for the notebook and select SQL in Default Language.

In Cluster, select a cluster with access to Unity Catalog.

Click Create.

In the first notebook cell, enter the following queries:

CREATE TABLE IF NOT EXISTS lineage_data.lineagedemo.menu ( recipe_id INT, app string, main string, dessert string ); INSERT INTO lineage_data.lineagedemo.menu (recipe_id, app, main, dessert) VALUES (1,"Ceviche", "Tacos", "Flan"), (2,"Tomato Soup", "Souffle", "Creme Brulee"), (3,"Chips","Grilled Cheese","Cheesecake"); CREATE TABLE lineage_data.lineagedemo.dinner AS SELECT recipe_id, concat(app," + ", main," + ",dessert) AS full_menu FROM lineage_data.lineagedemo.menuTo run the queries, click in the cell and press shift+enter or click

and select Run Cell.

and select Run Cell.

To use Catalog Explorer to view the lineage generated by these queries:

In the Search box in the top bar of the Azure Databricks workspace, search for the

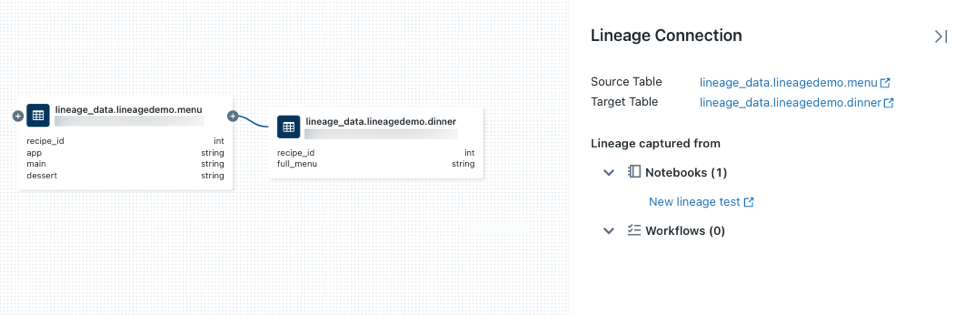

lineage_data.lineagedemo.dinnertable and select it.Select the Lineage tab. The lineage panel appears and displays related tables (for this example it’s the

menutable).To view an interactive graph of the data lineage, click See Lineage Graph. By default, one level is displayed in the graph. Click the

icon on a node to reveal more connections if they are available.

icon on a node to reveal more connections if they are available.Click an arrow that connects nodes in the lineage graph to open the Lineage connection panel. The Lineage connection panel shows details about the connection, including source and target tables, notebooks, and jobs.

To show the notebook associated with the

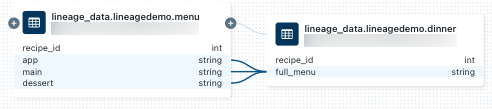

dinnertable, select the notebook in the Lineage connection panel or close the lineage graph and click Notebooks. To open the notebook in a new tab, click the notebook name.To view the column-level lineage, click a column in the graph to show links to related columns. For example, clicking on the ‘full_menu’ column shows the upstream columns the column was derived from:

To view lineage using a different language, for example, Python:

Open the notebook you created previously, create a new cell, and enter the following Python code:

%python from pyspark.sql.functions import rand, round df = spark.range(3).withColumn("price", round(10*rand(seed=42),2)).withColumnRenamed("id","recipe_id") df.write.mode("overwrite").saveAsTable("lineage_data.lineagedemo.price") dinner = spark.read.table("lineage_data.lineagedemo.dinner") price = spark.read.table("lineage_data.lineagedemo.price") dinner_price = dinner.join(price, on="recipe_id") dinner_price.write.mode("overwrite").saveAsTable("lineage_data.lineagedemo.dinner_price")Run the cell by clicking in the cell and pressing shift+enter or clicking

and selecting Run Cell.

and selecting Run Cell.In the Search box in the top bar of the Azure Databricks workspace, search for the

lineage_data.lineagedemo.pricetable and select it.Go to the Lineage tab and click See Lineage Graph. Click the

icons to explore the data lineage generated by the queries.

icons to explore the data lineage generated by the queries.

Click an arrow that connects nodes in the lineage graph to open the Lineage connection panel. The Lineage connection panel shows details about the connection, including source and target tables, notebooks, and jobs.

Capture and view workflow lineage

Lineage is also captured for any workflow that reads or writes to Unity Catalog. To view lineage for an Azure Databricks workflow:

Click

New in the sidebar and select Notebook from the menu.

New in the sidebar and select Notebook from the menu.Enter a name for the notebook and select SQL in Default Language.

Click Create.

In the first notebook cell, enter the following query:

SELECT * FROM lineage_data.lineagedemo.menuClick Schedule in the top bar. In the schedule dialog, select Manual, select a cluster with access to Unity Catalog, and click Create.

Click Run now.

In the Search box in the top bar of the Azure Databricks workspace, search for the

lineage_data.lineagedemo.menutable and select it.On the Lineage tab, click Workflows, and select the Downstream tab. The job name appears under Job Name as a consumer of the

menutable.

Capture and view dashboard lineage

To create a dashboard and view its data lineage:

Go to your Azure Databricks landing page and open Catalog Explorer by clicking Catalog in the sidebar.

Click the catalog name, click lineagedemo, and select the

menutable. You can also use the Search box in the top bar to search for themenutable.Click Open in a dashboard.

Select the columns that you want to add to the dashboard and click Create.

Publish the dashboard.

Only published dashboards are tracked in data lineage.

In the Search box in the top bar, search for the

lineage_data.lineagedemo.menutable and select it.On the Lineage tab, click Dashboards. The dashboard appears under Dashboard Name as a consumer of the menu table.

Lineage permissions

Lineage graphs share the same permission model as Unity Catalog. Tables and other data objects registered in the Unity Catalog metastore are visible only to users who have at least BROWSE permissions on those objects. If a user does not have the BROWSE or SELECT privilege on a table, they cannot explore its lineage. Lineage graphs display Unity Catalog objects across all workspaces attached to the metastore, as long as the user has adequate object permissions.

For example, run the following commands for userA:

GRANT USE SCHEMA on lineage_data.lineagedemo to `userA@company.com`;

GRANT SELECT on lineage_data.lineagedemo.menu to `userA@company.com`;

When userA views the lineage graph for the lineage_data.lineagedemo.menu table, they will see the menu table. They will not be able to see information about associated tables, such as the downstream lineage_data.lineagedemo.dinner table. The dinner table is displayed as a masked node in the display to userA, and userA cannot expand the graph to reveal downstream tables from tables they do not have permission to access.

If you run the following command to grant the BROWSE permission to userB, that user can view the lineage graph for any table in the lineage_data schema.:

GRANT BROWSE on lineage_data to `userB@company.com`;

Likewise, lineage users must have specific permissions to view workspace objects like notebooks, jobs, and dashboards. In addition, they can only see detailed information about workspace objects when they are logged into the workspace in which those objects were created. Detailed information about workspace-level objects in other workspaces is masked in the lineage graph.

For more information about managing access to securable objects in Unity Catalog, see Manage privileges in Unity Catalog. For more information about managing access to workspace objects like notebooks, jobs, and dashboards, see Access control lists.

Delete lineage data

Warning

The following instructions delete all objects stored in Unity Catalog. Use these instructions only if necessary. For example, to meet compliance requirements.

To delete lineage data, you must delete the metastore managing the Unity Catalog objects. For more information about deleting the metastore, see Delete a metastore. Data will be deleted within 90 days.

Get table lineage using Databricks Assistant

Databricks Assistant provides detailed information on table lineages and insights.

To get lineage information using Assistant:

- Go to your Azure Databricks landing page and open Catalog Explorer by clicking

Catalog in the sidebar.

Catalog in the sidebar. - Click the catalog name, and then click the

Assistant icon in the upper-right corner.

Assistant icon in the upper-right corner. - At the Assistant prompt, type:

- /getTableLineages to view upstream and downstream dependencies.

- /getTableInsights to access metadata-driven insights, such as user activity and query patterns.

These queries enable Assistant to answer questions like “show me downstream lineages” or “who queries this table most often.”

Query lineage data using system tables

You can use the lineage system tables to programmatically query lineage data. For detailed instructions, see Monitor account activity with system tables and Lineage system tables reference.

If your workspace is in a region that doesn’t support lineage system tables, you can alternatively use the Data Lineage REST API to retrieve lineage data programmatically.

Retrieve lineage using the Data Lineage REST API

The data lineage API allows you to retrieve table and column lineage. However, if your workspace is in a region that supports the lineage system tables, you should use system table queries instead of the REST API. System tables are a better option for programmatic retrieval of lineage data. Most regions support the lineage system tables.

Important

To access Databricks REST APIs, you must authenticate.

Retrieve table lineage

This example retrieves lineage data for the dinner table.

Request

curl --netrc -X GET \

-H 'Content-Type: application/json' \

https://<workspace-instance>/api/2.0/lineage-tracking/table-lineage \

-d '{"table_name": "lineage_data.lineagedemo.dinner", "include_entity_lineage": true}'

Replace <workspace-instance>.

This example uses a .netrc file.

Response

{

"upstreams": [

{

"tableInfo": {

"name": "menu",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_type": "TABLE"

},

"notebookInfos": [

{

"workspace_id": 4169371664718798,

"notebook_id": 1111169262439324

}

]

}

],

"downstreams": [

{

"notebookInfos": [

{

"workspace_id": 4169371664718798,

"notebook_id": 1111169262439324

}

]

},

{

"tableInfo": {

"name": "dinner_price",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_type": "TABLE"

},

"notebookInfos": [

{

"workspace_id": 4169371664718798,

"notebook_id": 1111169262439324

}

]

}

]

}

Retrieve column lineage

This example retrieves column data for the dinner table.

Request

curl --netrc -X GET \

-H 'Content-Type: application/json' \

https://<workspace-instance>/api/2.0/lineage-tracking/column-lineage \

-d '{"table_name": "lineage_data.lineagedemo.dinner", "column_name": "dessert"}'

Replace <workspace-instance>.

This example uses a .netrc file.

Response

{

"upstream_cols": [

{

"name": "dessert",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_name": "menu",

"table_type": "TABLE"

},

{

"name": "main",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_name": "menu",

"table_type": "TABLE"

},

{

"name": "app",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_name": "menu",

"table_type": "TABLE"

}

],

"downstream_cols": [

{

"name": "full_menu",

"catalog_name": "lineage_data",

"schema_name": "lineagedemo",

"table_name": "dinner_price",

"table_type": "TABLE"

}

]

}

Limitations

Although lineage is aggregated for all workspaces that are attached to the same Unity Catalog metastore, details for workspace objects like notebooks and dashboards are visible only in the workspace in which they were created.

Because lineage is computed on a one-year rolling window, lineage collected more than one year ago is not displayed. For example, if a job or query reads data from table A and writes to table B, the link between table A and table B is displayed for one year only. You can filter lineage data by time frame within the one-year window.

Jobs that use the Jobs API

runs submitrequest are unavailable when viewing lineage. Table and column level lineage is still captured when using theruns submitrequest, but the link to the run is not captured.Unity Catalog captures lineage to the column level as much as possible. However, there are some cases where column-level lineage cannot be captured.

Column lineage is supported only when both the source and target are referenced by table name (Example:

select * from <catalog>.<schema>.<table>). Column lineage cannot be captured if the source or the target is addressed by path (Example:select * from delta."s3://<bucket>/<path>").If a table or view is renamed, lineage is not captured for the renamed table or view.

If a schema or catalog is renamed, lineage is not captured for tables and views under the renamed catalog or schema.

If you use Spark SQL dataset checkpointing, lineage is not captured.

Unity Catalog captures lineage from DLT pipelines in most cases. However, in some instances, complete lineage coverage cannot be guaranteed, such as when pipelines use the APPLY CHANGES API or TEMPORARY tables.

Lineage does not capture Stack functions.

Global temp views are not captured in lineage.

Tables under

system.information_schemaare not captured in lineage.Complete column-level lineage is not captured by default for

MERGEoperations.You can turn on lineage capture for

MERGEoperations by setting the Spark propertyspark.databricks.dataLineage.mergeIntoV2Enabledtotrue. Enabling this flag can slow down query performance, particularly in workloads that involve very wide tables.