Cognitive Services: Optical Character Recognition (OCR) from an image using Computer Vision API And C#

Introduction

In our previous article, we learned how to analyze an image using the Computer Vision API with ASP.NET Core and C#. In this article, we are going to learn how to extract printed text from an image, a process known as optical character recognition (OCR), using the Computer Vision API, one of the important Cognitive Services APIs. We need a valid subscription key to access this feature.

Optical Character Recognition (OCR)

Optical Character Recognition (OCR) detects text in an image and extracts the recognized characters into a machine-usable character stream.

Prerequisites

- Subscription key (Azure Portal).

- Visual Studio 2015 or 2017

Subscription Key Free Trial

The Computer Vision API requires a valid subscription key to process image information. If you don't have a Microsoft Azure subscription and want to test the API, don't worry: Microsoft offers a 7-day trial subscription key (Click here) that we can use for testing purposes. If you sign up using the Computer Vision free trial, your subscription keys are valid for the West Central US region (https://westcentralus.api.cognitive.microsoft.com).

Requirements

These are the major requirements mentioned in the Microsoft docs.

- Supported input methods: Raw image binary in the form of an application/octet-stream or image URL.

- Supported image formats: JPEG, PNG, GIF, BMP.

- Image file size: Less than 4 MB.

- Image dimension: Greater than 50 x 50 pixels.
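The format and size limits above can be checked before a request is sent. The following is a minimal sketch, not part of the original sample: the class name `OcrImageRequirements` is hypothetical, the format is guessed from the file extension only, "4 MB" is assumed to mean 4 x 1024 x 1024 bytes, and the 50 x 50 pixel dimension check is left out because it would require decoding the image.

```csharp
using System;
using System.IO;
using System.Linq;

// Hypothetical helper: pre-checks an image against the documented limits.
public static class OcrImageRequirements
{
    // Assumption: "less than 4 MB" is read as 4 * 1024 * 1024 bytes.
    private const long MaxFileSizeBytes = 4L * 1024 * 1024;

    // Extensions that correspond to JPEG, PNG, GIF and BMP.
    private static readonly string[] SupportedExtensions =
        { ".jpg", ".jpeg", ".png", ".gif", ".bmp" };

    // Returns null when both checks pass, otherwise a short reason.
    public static string Check(string fileName, long fileSizeBytes)
    {
        string extension = Path.GetExtension(fileName).ToLowerInvariant();

        if (!SupportedExtensions.Contains(extension))
            return "Unsupported image format: " + extension;

        if (fileSizeBytes >= MaxFileSizeBytes)
            return "Image must be smaller than 4 MB.";

        return null;
    }
}
```

For a file on disk, the size can be taken from `new FileInfo(path).Length` and passed in together with the file name.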

Computer Vision API

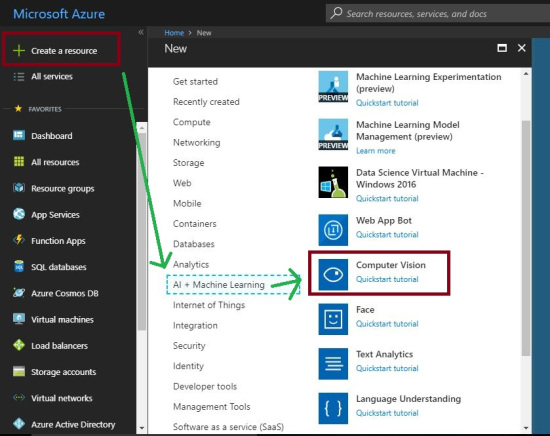

First, we need to log into the Azure Portal with our Azure credentials. Then we need to create an Azure Computer Vision Subscription Key in the Azure portal.

Click on "Create a resource" in the left-side menu to open the Azure Marketplace. There, we can see the list of services. Click "AI + Machine Learning", then click "Computer Vision".

Provision a Computer Vision Subscription Key

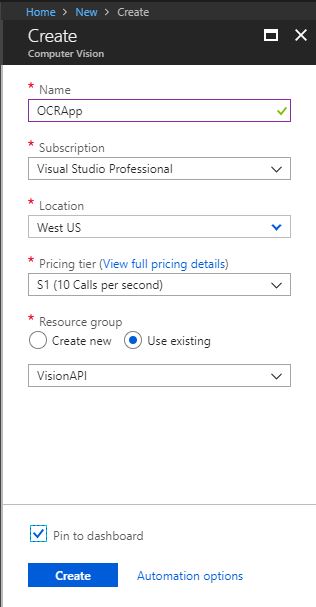

After clicking "Computer Vision", another section opens. There, we need to provide the basic information about the Computer Vision API.

Name: Name of the Computer Vision API (e.g. OCRApp).

Subscription: We can select our Azure subscription for Computer Vision API creation.

Location: We can select the location of our resource group. It is best to choose the location closest to our customers.

Pricing tier: Select an appropriate pricing tier for our requirement.

Resource group: We can create a new resource group or choose from an existing one.

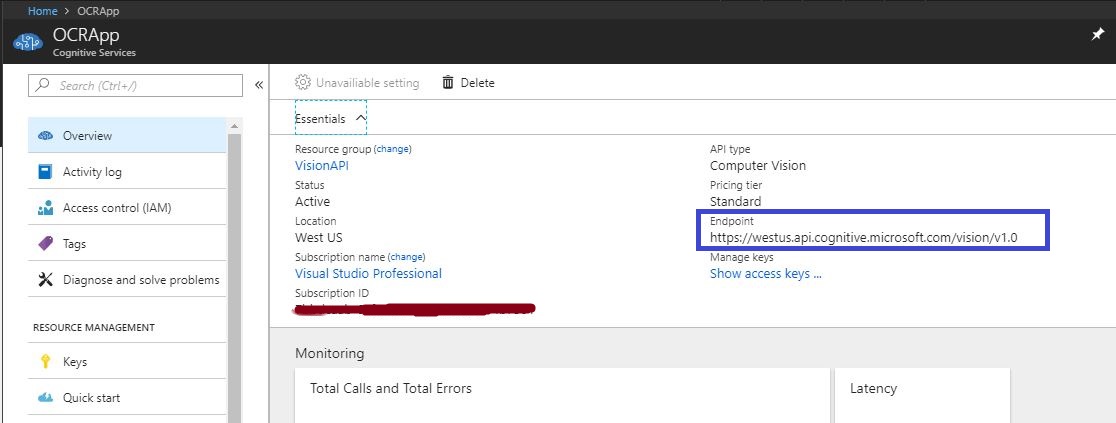

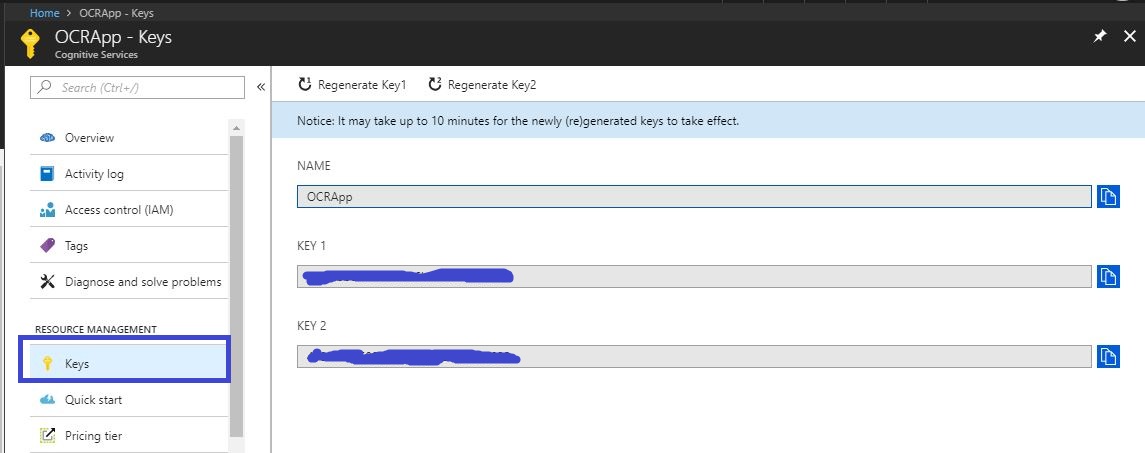

Now click on "OCRApp" on the dashboard page; it will redirect to the details page of OCRApp ("Overview"). Here, we can see the Manage keys (subscription key details) and Endpoint details. Click on the "Show access keys" link and it will redirect to another page.

We can use any of the subscription keys, or regenerate a key, to get image information using the Computer Vision API.

Endpoint

As we mentioned above, the location is the same for all free trial subscription keys. In Azure, we can choose from the available locations while creating a Computer Vision API resource. We have used the following endpoint in our code.

"https://westus.api.cognitive.microsoft.com/vision/v1.0/ocr"

View Model

The following model will contain the API image response information.

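    using System.Collections.Generic;

    namespace OCRApp.Models
    {
        // A single recognized word and its position in the image.
        public class Word
        {
            public string boundingBox { get; set; }
            public string text { get; set; }
        }

        // A line of text made up of words.
        public class Line
        {
            public string boundingBox { get; set; }
            public List<Word> words { get; set; }
        }

        // A block of text made up of lines.
        public class Region
        {
            public string boundingBox { get; set; }
            public List<Line> lines { get; set; }
        }

        // Root object of the OCR response.
        public class ImageInfoViewModel
        {
            public string language { get; set; }
            public string orientation { get; set; }

            // The service can return a fractional angle, so a double
            // is used here; an int would fail to deserialize such values.
            public double textAngle { get; set; }

            public List<Region> regions { get; set; }
        }
    }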

Request URL

We can add optional request parameters to our API endpoint ("endPoint" in the code) to get more information about the given image.

https://[location].api.cognitive.microsoft.com/vision/v1.0/ocr[?language][&detectOrientation]

Request parameters

The following optional parameters are available in the Computer Vision API.

- language

- detectOrientation

language

The service can detect 26 languages in the text of the image. The default value of this parameter is "unk", which means the service will auto-detect the language of the text in the image.

The following supported languages are mentioned in the Microsoft API documentation.

- unk (AutoDetect)

- en (English)

- zh-Hans (ChineseSimplified)

- zh-Hant (ChineseTraditional)

- cs (Czech)

- da (Danish)

- nl (Dutch)

- fi (Finnish)

- fr (French)

- de (German)

- el (Greek)

- hu (Hungarian)

- it (Italian)

- ja (Japanese)

- ko (Korean)

- nb (Norwegian)

- pl (Polish)

- pt (Portuguese)

- ru (Russian)

- es (Spanish)

- sv (Swedish)

- tr (Turkish)

- ar (Arabic)

- ro (Romanian)

- sr-Cyrl (SerbianCyrillic)

- sr-Latn (SerbianLatin)

- sk (Slovak)

detectOrientation

This detects the orientation of the text in the image. To use this feature, we need to add detectOrientation=true to the service URL (request URL), as we discussed earlier.
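The request URL pattern and the two optional parameters can be combined in a small helper. This is only a sketch under stated assumptions: the class name `OcrRequestUri` and its `Build` method are hypothetical, and the defaults ("unk" and detectOrientation=true) simply mirror the values used in this article.

```csharp
using System;

// Hypothetical helper: assembles the OCR request URL from its parts.
public static class OcrRequestUri
{
    // region is the location part of the host name, e.g. "westus".
    public static string Build(
        string region,
        string language = "unk",
        bool detectOrientation = true)
    {
        string endPoint =
            "https://" + region + ".api.cognitive.microsoft.com/vision/v1.0/ocr";

        // Escape the language code so the query string stays well formed.
        string query =
            "language=" + Uri.EscapeDataString(language) +
            "&detectOrientation=" + (detectOrientation ? "true" : "false");

        return endPoint + "?" + query;
    }
}
```

For example, `OcrRequestUri.Build("westcentralus", "en", false)` would target the free trial region, request English only, and skip orientation detection.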

Vision API Service

The following code sends the image to the Computer Vision API and maps the response into the “ImageInfoViewModel”. Add a valid Computer Vision API subscription key to the following code.

    using Newtonsoft.Json;
    using OCRApp.Models;
    using System;
    using System.Collections.Generic;
    using System.IO;
    using System.Net.Http;
    using System.Net.Http.Headers;
    using System.Threading.Tasks;

    namespace OCRApp.Business_Layer
    {
        public class VisionApiService
        {
            // Replace <Subscription Key> with your valid subscription key.
            const string subscriptionKey = "<Subscription Key>";

            // You must use the same region in your REST call as you used to
            // get your subscription keys. Paid subscription keys come from
            // the Microsoft Azure portal; this example uses the westus region.
            // Free trial subscription keys are generated in the westcentralus
            // region, so with a free trial key replace "westus" with
            // "westcentralus" in the endpoint below.
            const string endPoint =
                "https://westus.api.cognitive.microsoft.com/vision/v1.0/ocr";

            /// <summary>
            /// Gets the text visible in the specified image file by using
            /// the Computer Vision REST API.
            /// </summary>
            public async Task<string> MakeOCRRequest()
            {
                string imageFilePath = @"C:\Users\rajeesh.raveendran\Desktop\bill.jpg";
                var errors = new List<string>();
                string extractedResult = "";
                ImageInfoViewModel responseData = new ImageInfoViewModel();

                try
                {
                    HttpClient client = new HttpClient();

                    // Request headers.
                    client.DefaultRequestHeaders.Add(
                        "Ocp-Apim-Subscription-Key", subscriptionKey);

                    // Request parameters.
                    string requestParameters = "language=unk&detectOrientation=true";

                    // Assemble the URI for the REST API call.
                    string uri = endPoint + "?" + requestParameters;

                    HttpResponseMessage response;

                    // Request body. Posts a locally stored JPEG image.
                    byte[] byteData = GetImageAsByteArray(imageFilePath);

                    using (ByteArrayContent content = new ByteArrayContent(byteData))
                    {
                        // This example uses content type "application/octet-stream".
                        // The other content types you can use are "application/json"
                        // and "multipart/form-data".
                        content.Headers.ContentType =
                            new MediaTypeHeaderValue("application/octet-stream");

                        // Make the REST API call.
                        response = await client.PostAsync(uri, content);
                    }

                    // Get the JSON response.
                    string result = await response.Content.ReadAsStringAsync();

                    // Continue only if the call succeeded.
                    if (response.IsSuccessStatusCode)
                    {
                        // Map the JSON response into the view model.
                        responseData = JsonConvert.DeserializeObject<ImageInfoViewModel>(result,
                            new JsonSerializerSettings
                            {
                                NullValueHandling = NullValueHandling.Include,
                                Error = delegate (object sender, Newtonsoft.Json.Serialization.ErrorEventArgs earg)
                                {
                                    // Member can be null, so convert it safely.
                                    errors.Add(Convert.ToString(earg.ErrorContext.Member));
                                    earg.ErrorContext.Handled = true;
                                }
                            });

                        // Walk every region, not only the first one; an image
                        // without text yields no regions at all.
                        foreach (Region region in responseData.regions ?? new List<Region>())
                        {
                            foreach (Line line in region.lines ?? new List<Line>())
                            {
                                foreach (Word word in line.words ?? new List<Word>())
                                {
                                    // Append the words of one line, separated by spaces.
                                    extractedResult += word.text + " ";
                                }
                                extractedResult += Environment.NewLine;
                            }
                        }
                    }
                }
                catch (Exception e)
                {
                    Console.WriteLine("\n" + e.Message);
                }

                return extractedResult;
            }

            /// <summary>
            /// Returns the contents of the specified file as a byte array.
            /// </summary>
            /// <param name="imageFilePath">The image file to read.</param>
            /// <returns>The byte array of the image data.</returns>
            static byte[] GetImageAsByteArray(string imageFilePath)
            {
                using (FileStream fileStream =
                    new FileStream(imageFilePath, FileMode.Open, FileAccess.Read))
                using (BinaryReader binaryReader = new BinaryReader(fileStream))
                {
                    return binaryReader.ReadBytes((int)fileStream.Length);
                }
            }
        }
    }
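The requirements list also mentions an image URL as an input method, which goes with the "application/json" content type named in the code comments. A minimal sketch of building such a request body follows. It is an illustration rather than the article's code: the class name `OcrUrlRequest` is hypothetical, the body shape {"url": "..."} is taken from the Computer Vision REST documentation as I understand it, and System.Text.Json (available from .NET Core 3.0) is swapped in for Newtonsoft.Json only to keep the sketch free of NuGet packages.

```csharp
using System.Net.Http;
using System.Text;
using System.Text.Json;

// Hypothetical helper: builds the request body for URL-based input.
public static class OcrUrlRequest
{
    // imageUrl must point to an image the service can download.
    public static StringContent BuildContent(string imageUrl)
    {
        // Serializes to a JSON object with a single "url" property.
        string json = JsonSerializer.Serialize(new { url = imageUrl });

        // StringContent sets the Content-Type header to application/json.
        return new StringContent(json, Encoding.UTF8, "application/json");
    }
}
```

The returned content could be passed to `client.PostAsync(uri, content)` in place of the `ByteArrayContent` used for local files; in a project that already references Newtonsoft.Json, `JsonConvert.SerializeObject` would serve the same purpose.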

API Response – Based on the given Image

The successful JSON response.

    {
      "language": "en",
      "orientation": "Up",
      "textAngle": 0,
      "regions": [
        {
          "boundingBox": "306,69,292,206",
          "lines": [
            {
              "boundingBox": "306,69,292,24",
              "words": [
                { "boundingBox": "306,69,17,19", "text": "\"I" },
                { "boundingBox": "332,69,45,19", "text": "Will" },
                { "boundingBox": "385,69,88,24", "text": "Always" },
                { "boundingBox": "482,69,94,19", "text": "Choose" },
                { "boundingBox": "585,74,13,14", "text": "a" }
              ]
            },
            {
              "boundingBox": "329,100,246,24",
              "words": [
                { "boundingBox": "329,100,56,24", "text": "Lazy" },
                { "boundingBox": "394,100,85,19", "text": "Person" },
                { "boundingBox": "488,100,24,19", "text": "to" },
                { "boundingBox": "521,100,32,19", "text": "Do" },
                { "boundingBox": "562,105,13,14", "text": "a" }
              ]
            },
            {
              "boundingBox": "310,131,284,19",
              "words": [
                { "boundingBox": "310,131,95,19", "text": "Difficult" },
                { "boundingBox": "412,131,182,19", "text": "Job....Because" }
              ]
            },
            {
              "boundingBox": "326,162,252,24",
              "words": [
                { "boundingBox": "326,162,31,19", "text": "He" },
                { "boundingBox": "365,162,44,19", "text": "Will" },
                { "boundingBox": "420,162,52,19", "text": "Find" },
                { "boundingBox": "481,167,28,14", "text": "an" },
                { "boundingBox": "520,162,58,24", "text": "Easy" }
              ]
            },
            {
              "boundingBox": "366,193,170,24",
              "words": [
                { "boundingBox": "366,193,52,24", "text": "way" },
                { "boundingBox": "426,193,24,19", "text": "to" },
                { "boundingBox": "459,193,33,19", "text": "Do" },
                { "boundingBox": "501,193,35,19", "text": "It!\"" }
              ]
            },
            {
              "boundingBox": "462,256,117,19",
              "words": [
                { "boundingBox": "462,256,37,19", "text": "Bill" },
                { "boundingBox": "509,256,70,19", "text": "Gates" }
              ]
            }
          ]
        }
      ]
    }
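Each "boundingBox" in the response is a string of four comma-separated integers. Assuming they stand for left, top, width and height in pixels (which matches the values above, where every word's right edge stays within the region's), a small parser makes them usable for drawing or cropping. The `BoundingBox` type below is hypothetical and not part of the article's view model.

```csharp
using System;
using System.Globalization;

// Hypothetical value type for a parsed "left,top,width,height" string.
public struct BoundingBox
{
    public int Left;
    public int Top;
    public int Width;
    public int Height;

    // Derived edges, handy when drawing a rectangle over the image.
    public int Right => Left + Width;
    public int Bottom => Top + Height;

    // Parses a string such as "306,69,292,206".
    public static BoundingBox Parse(string value)
    {
        string[] parts = value.Split(',');
        if (parts.Length != 4)
            throw new FormatException(
                "Expected four comma-separated integers: " + value);

        return new BoundingBox
        {
            Left = int.Parse(parts[0], CultureInfo.InvariantCulture),
            Top = int.Parse(parts[1], CultureInfo.InvariantCulture),
            Width = int.Parse(parts[2], CultureInfo.InvariantCulture),
            Height = int.Parse(parts[3], CultureInfo.InvariantCulture)
        };
    }
}
```

With the region from the response, `BoundingBox.Parse("306,69,292,206")` gives a box whose right edge is at x = 598 and whose bottom edge is at y = 275.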

Download

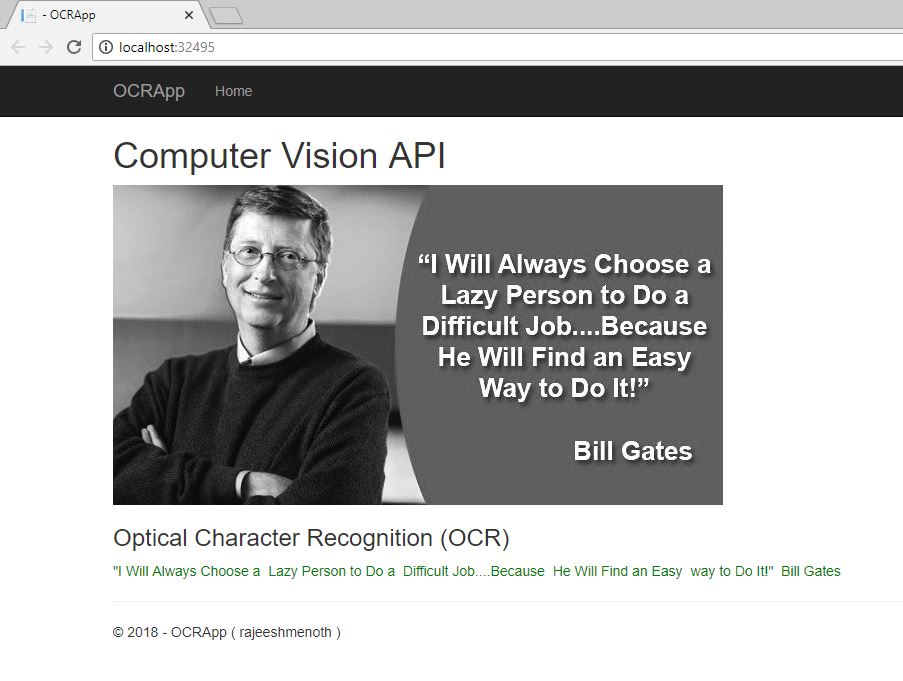

Output

Optical Character Recognition (OCR) from an image using Computer Vision API.

Summary

In this article, we have learned how to perform Optical Character Recognition (OCR) on an image using the Computer Vision API, one of the important Cognitive Services APIs. I hope this article is useful for all Azure Cognitive Services API beginners.

See Also

It's recommended to read more articles related to ASP.NET Core & Azure App Service.

- ASP.NET CORE 1.0: Getting Started

- ASP.NET Core 1.0: Project Layout

- ASP.NET Core 1.0: Middleware And Static files (Part 1)

- Middleware And Staticfiles In ASP.NET Core 1.0 - Part Two

- ASP.NET Core 1.0 Configuration: Aurelia Single Page Applications

- ASP.NET Core 1.0: Create An Aurelia Single Page Application

- Create Rest API Or Web API With ASP.NET Core 1.0

- ASP.NET Core 1.0: Adding A Configuration Source File

- Code First Migration - ASP.NET Core MVC With EntityFrameWork Core

- Building ASP.NET Core MVC Application Using EF Core and ASP.NET Core 1.0

- Send Email Using ASP.NET CORE 1.1 With MailKit In Visual Studio 2017

- ASP.NET Core And MVC Core: Session State

- Startup Page In ASP.NET Core

- Sending SMS Using ASP.NET Core With Twilio SMS API

- Create And Deploy An ASP.NET Core Web App In Azure

- Chat Bot with Azure Bot Service

- Channel Configuration - Azure Bot Service To Slack Application

- Cognitive Services : Analyze an Image Using Computer Vision API