Get started with Serverless AI Chat with RAG using LangChain.js

Creating AI apps can be complex. With LangChain.js, Azure Functions, and serverless technologies, you can simplify this process. These tools manage infrastructure and scale automatically, letting you focus on chatbot functionality. The chatbot uses retrieval-augmented generation (RAG): it grounds its AI responses in enterprise documents.

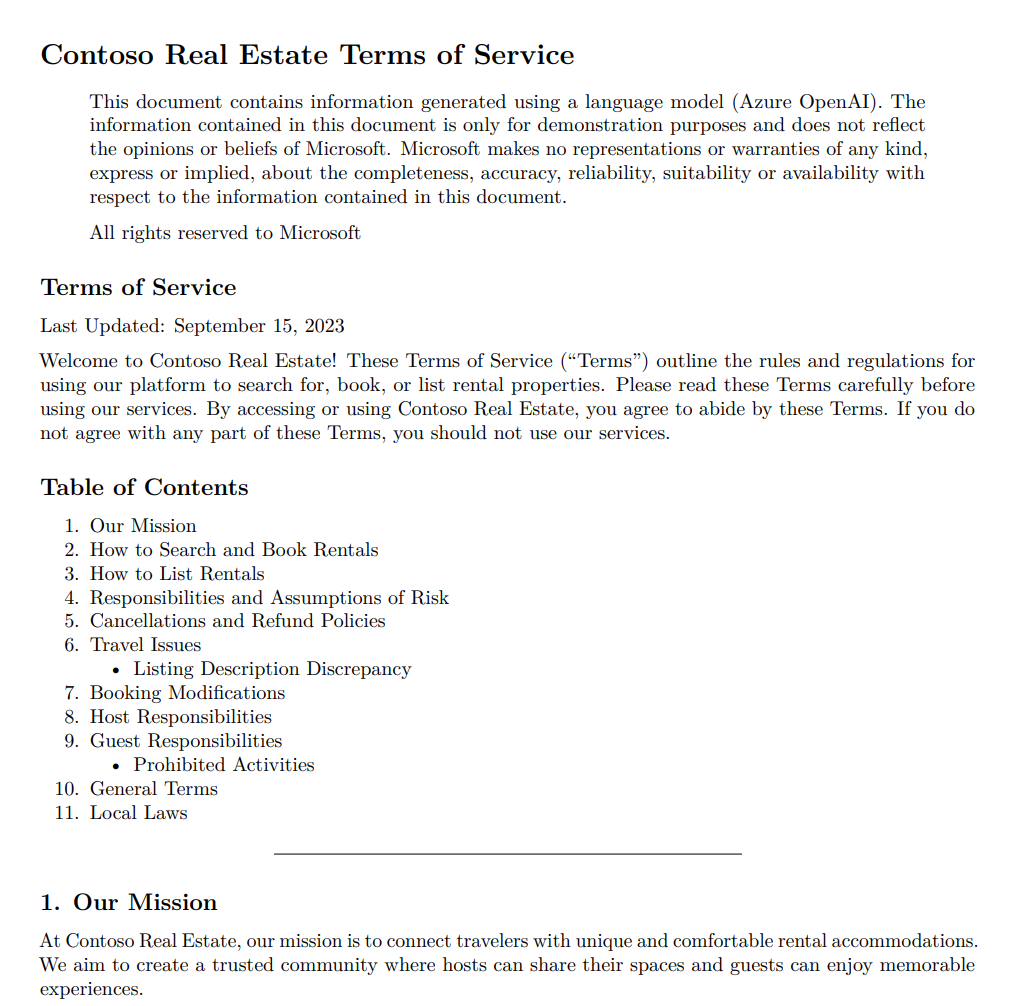

The code includes sample data for a fictitious company. Customers can ask support questions about the company's products. The data includes documents on the company's terms of service, privacy policy, and support guide.

Note

This article uses one or more AI app templates as the basis for its examples and guidance. AI app templates provide well-maintained, easy-to-deploy reference implementations that give you a high-quality starting point for your AI apps.

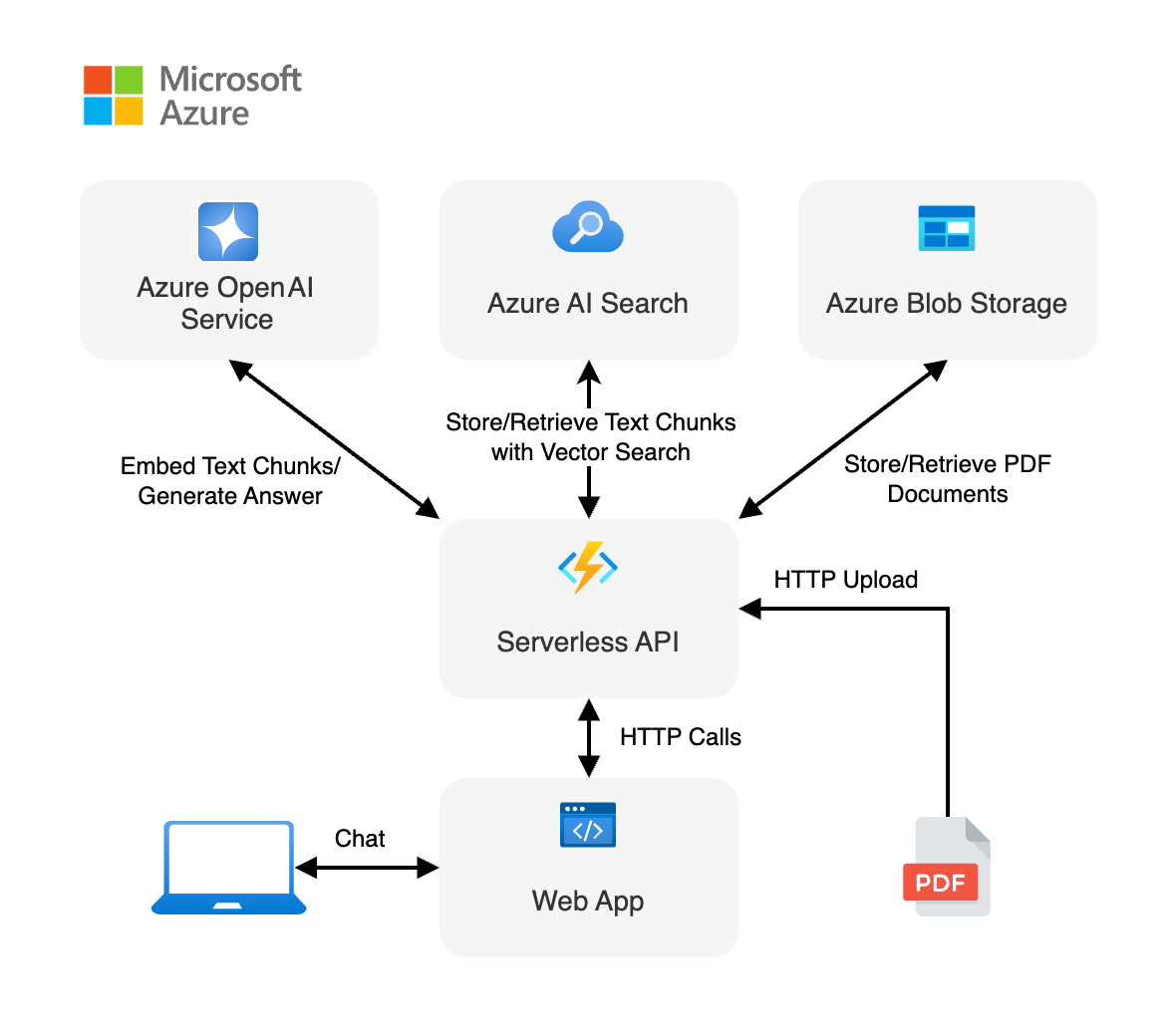

Architectural overview

The chat app

The user interacts with the application as follows:

- The user holds the conversation through the chat interface in the client web app.

- The client web app sends the user's query to the serverless API via HTTP calls.

- The serverless API creates a chain to coordinate interactions between Azure OpenAI and Azure AI Search to generate an answer.

- Source PDF documents are retrieved from Azure Blob Storage.

- The generated response is sent back to the web app and displayed to the user.
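As a rough illustration of the HTTP step, the sketch below shows how a client might package the conversation into a request for the serverless API. The `/api/chat` path, the `ChatMessage` and `ChatRequest` shapes, and the `buildChatRequest` helper are hypothetical names chosen for this example; the sample's actual API contract may differ.

```typescript
// Hypothetical message shape; the sample's real contract may differ.
interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Builds the HTTP request that carries the conversation to the API.
// `apiBaseUrl` and the `/api/chat` path are assumptions for illustration.
function buildChatRequest(
  apiBaseUrl: string,
  previousMessages: ChatMessage[],
  question: string,
): ChatRequest {
  const messages: ChatMessage[] = [
    ...previousMessages,
    { role: "user", content: question.trim() },
  ];
  return {
    // Strip trailing slashes so the path is joined with exactly one "/".
    url: `${apiBaseUrl.replace(/\/+$/, "")}/api/chat`,
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ messages }),
  };
}

const demoRequest = buildChatRequest("https://example.invalid/", [], " What is the refund policy? ");
console.log(demoRequest.url);
```

The `method`, `headers`, and `body` fields match what `fetch` expects in its options argument, so a client could send the request with `fetch(demoRequest.url, demoRequest)`.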

A simple architecture of the chat app is shown in the following diagram:

LangChain.js simplifies the complexity between services

Walking through the API flow shows how LangChain.js helps in this scenario by abstracting the interactions between services. The serverless API endpoint:

- Receives the question from the user.

- Creates client objects:

  - Azure OpenAI for embeddings and chat

  - Azure AI Search for the vector store

- Creates a document chain with the LLM, the chat messages (system and user prompts), and the document source.

- Creates a retrieval chain from the document chain and the vector store.

- Streams the responses from the retrieval chain.

The developer's work is to correctly configure the dependent services, such as Azure OpenAI and Azure AI Search, and to construct the chains correctly. The underlying chain logic knows how to resolve the query. This allows you to construct chains from many different services and configurations, as long as they meet the LangChain.js requirements.
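The flow above can be modeled without any dependencies to show how the pieces fit together. This is a minimal sketch, not the sample's code and not the LangChain.js API: `InMemoryVectorStore`, `embed`, `buildPrompt`, `retrievalChain`, and `echoModel` are all stand-ins invented here, the embedding is a toy term counter, and the "model" simply streams the prompt back instead of calling an LLM.

```typescript
// Dependency-free model of the flow. None of these names are LangChain.js APIs.
interface Doc {
  source: string;
  text: string;
}

// Toy embedding: counts occurrences of a few known terms.
// A real app would call an embeddings model (Azure OpenAI in the article).
const VOCABULARY = ["terms", "privacy", "refund", "policy", "data", "support"];

function embed(text: string): number[] {
  const words = text.toLowerCase().match(/[a-z]+/g) ?? [];
  return VOCABULARY.map((term) => words.filter((w) => w === term).length);
}

function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Stand-in for the vector store (Azure AI Search in the article).
class InMemoryVectorStore {
  private entries: { doc: Doc; vector: number[] }[] = [];

  add(docs: Doc[]): void {
    for (const doc of docs) this.entries.push({ doc, vector: embed(doc.text) });
  }

  // Returns the k documents most similar to the query.
  search(query: string, k: number): Doc[] {
    const queryVector = embed(query);
    return this.entries
      .map((entry) => ({ doc: entry.doc, score: cosine(queryVector, entry.vector) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, k)
      .map((entry) => entry.doc);
  }
}

// "Document chain": combines the system prompt, retrieved documents, and question.
function buildPrompt(system: string, docs: Doc[], question: string): string {
  const context = docs.map((d) => `[${d.source}] ${d.text}`).join("\n");
  return `${system}\n\nSources:\n${context}\n\nQuestion: ${question}`;
}

type ChatModel = (prompt: string) => AsyncIterable<string>;

// "Retrieval chain": retrieve documents, build the prompt, stream the model output.
async function* retrievalChain(
  store: InMemoryVectorStore,
  model: ChatModel,
  question: string,
): AsyncGenerator<string> {
  const docs = store.search(question, 1);
  const prompt = buildPrompt("Answer only from the sources.", docs, question);
  yield* model(prompt);
}

// Stand-in model: streams the prompt back word by word instead of calling an LLM.
const echoModel: ChatModel = async function* (prompt) {
  for (const word of prompt.split(/\s+/)) yield `${word} `;
};

const demoStore = new InMemoryVectorStore();
demoStore.add([
  { source: "terms.pdf", text: "Terms of service for bookings and payments" },
  { source: "privacy.pdf", text: "Privacy policy about personal data" },
  { source: "support.pdf", text: "Support guide with refund policy and cancellations" },
]);

async function main(): Promise<void> {
  let answer = "";
  for await (const chunk of retrievalChain(demoStore, echoModel, "What is the refund policy?")) {
    answer += chunk;
  }
  console.log(answer);
}

main();
```

Because the model is passed in as a parameter, the retrieval logic stays the same whichever service produces the tokens. That mirrors the point above: the developer configures the services, and the chain coordinates them.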

Where is Azure in this architecture?

This application is made from multiple components:

- A web app made with a single chat web component built with Lit and hosted on Azure Static Web Apps. The code is located in the packages/webapp folder.

- A serverless API built with Azure Functions and using LangChain.js to ingest the documents and generate responses to the user chat queries. The code is located in the packages/api folder.

- An Azure OpenAI service to create embeddings and generate an answer.

- A database to store the text extracted from the documents and the vectors generated by LangChain.js, using Azure AI Search.

- A file storage to store the source documents, using Azure Blob Storage.
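This article does not detail how the API ingests documents. A common approach, assumed here, is to split each document's extracted text into overlapping chunks before computing embeddings, so that each stored vector covers a passage small enough to retrieve precisely. The `splitIntoChunks` helper and its `chunkSize` and `overlap` parameters are illustrative and are not taken from the sample.

```typescript
// Splits text into fixed-size chunks; each chunk repeats the last `overlap`
// characters of the previous one so passages are not cut off without context.
function splitIntoChunks(text: string, chunkSize: number, overlap: number): string[] {
  if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
    throw new RangeError("Require chunkSize > 0 and 0 <= overlap < chunkSize");
  }
  const chunks: string[] = [];
  const step = chunkSize - overlap;
  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + chunkSize));
    if (start + chunkSize >= text.length) break; // this chunk reached the end
  }
  return chunks;
}

console.log(splitIntoChunks("abcdefghij", 4, 1)); // [ 'abcd', 'defg', 'ghij' ]
```

Real splitters usually cut on sentence or paragraph boundaries rather than raw character offsets; the fixed-size version here only shows the overlap idea.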

Prerequisites

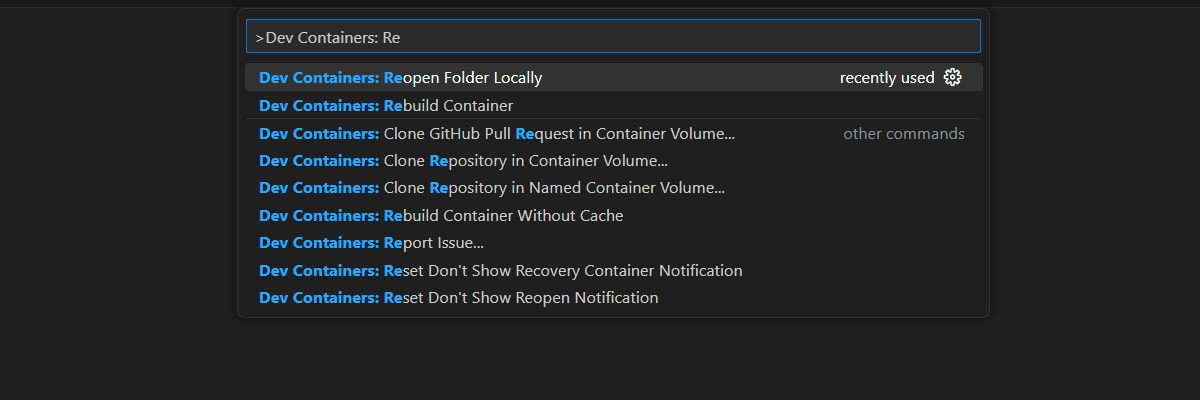

A development container environment is available with all dependencies required to complete this article. You can run the development container in GitHub Codespaces (in a browser) or locally using Visual Studio Code.

To use this article, you need the following prerequisites:

- An Azure subscription - Create one for free

- Azure account permissions - Your Azure account must have Microsoft.Authorization/roleAssignments/write permissions, which are granted by roles such as User Access Administrator or Owner.

- A GitHub account.

Open development environment

Use the following instructions to deploy a preconfigured development environment containing all required dependencies to complete this article.

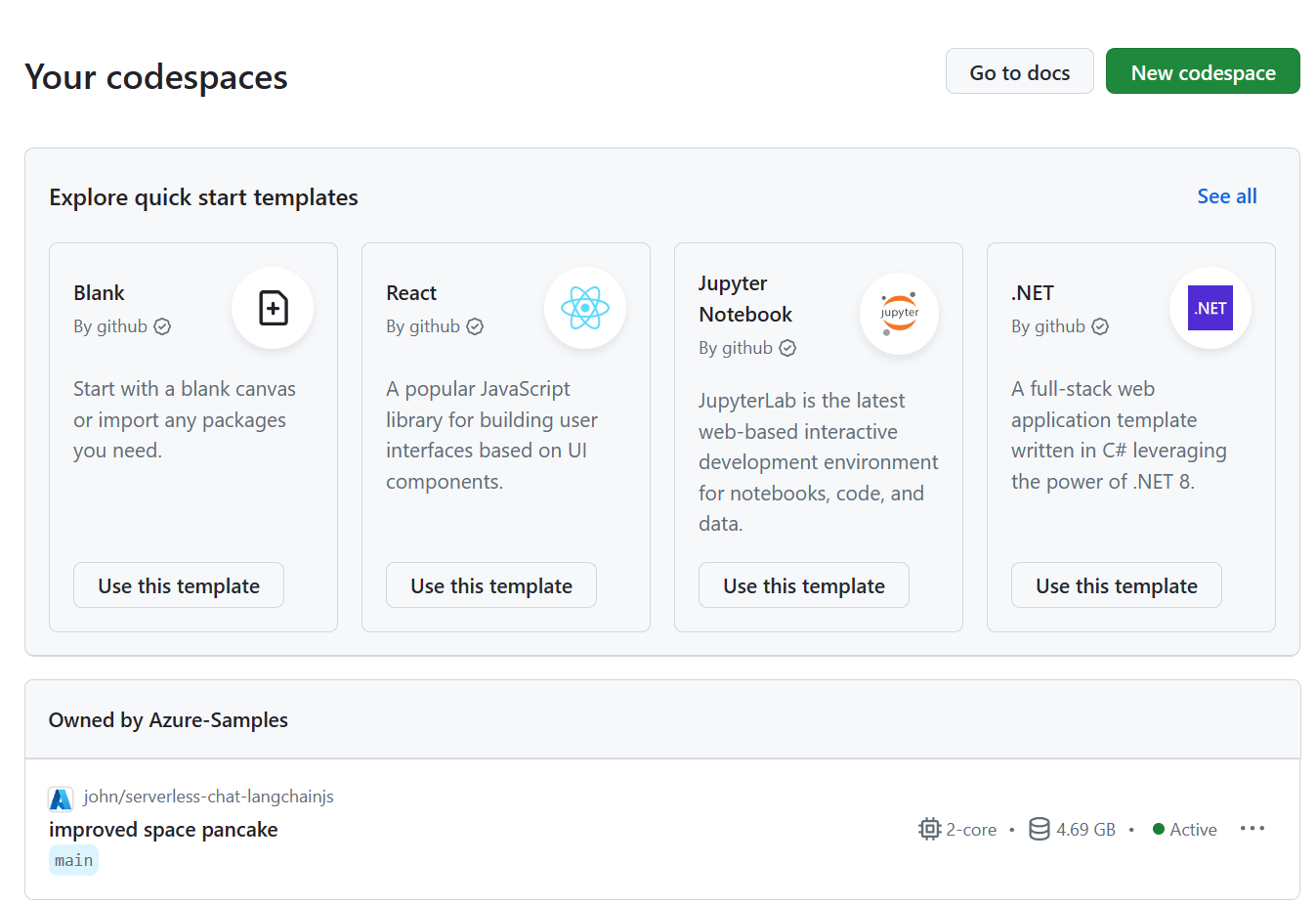

GitHub Codespaces runs a development container managed by GitHub with Visual Studio Code for the Web as the user interface. For the most straightforward development environment, use GitHub Codespaces so that you have the correct developer tools and dependencies preinstalled to complete this article.

Important

All GitHub accounts can use Codespaces for up to 60 hours free each month with 2-core instances. For more information, see GitHub Codespaces monthly included storage and core hours.
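The 60-hour figure corresponds to a core-hour allowance: 60 hours on a 2-core machine is 120 core hours, so a larger machine uses up the same allowance faster. The helper below is only an arithmetic sketch. `freeHoursForMachine` is a made-up name, and the 120 core-hour value is derived from the numbers above, not read from GitHub's current pricing.

```typescript
// 60 free hours on a 2-core machine, expressed as core hours.
const includedCoreHours = 60 * 2;

// Hours of free usage for a machine with the given number of cores.
function freeHoursForMachine(cores: number, coreHours: number = includedCoreHours): number {
  if (!Number.isInteger(cores) || cores <= 0) {
    throw new RangeError("cores must be a positive integer");
  }
  return coreHours / cores;
}

console.log(freeHoursForMachine(2)); // 60
console.log(freeHoursForMachine(4)); // 30
```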

Open the Azure-Samples/serverless-chat-langchainjs repository in a codespace.

Wait for the codespace to start. This startup process can take a few minutes.

In the terminal at the bottom of the screen, sign in to Azure with the Azure Developer CLI.

azd auth login

Complete the authentication process.

The remaining tasks in this article take place in the context of this development container.

Deploy and run

The sample repository contains all the code and configuration files you need to deploy the serverless chat app to Azure. The following steps walk you through the process of deploying the sample to Azure.

Deploy chat app to Azure

Important

Azure resources created in this section incur immediate costs, primarily from the Azure AI Search resource. These resources may accrue costs even if you interrupt the command before it is fully executed.

Provision the Azure resources and deploy the source code by using the following Azure Developer CLI command:

azd up

Answer the prompts by using the following table:

| Prompt | Answer |
| --- | --- |
| Environment name | Keep it short and lowercase. Add your name or alias. For example, john-chat. It's used as part of the resource group name. |
| Subscription | Select the subscription for creating resources. |
| Location (for hosting) | Select a location near you from the list. |
| Location for the OpenAI model | Select a location near you from the list. If the same location is available as your first location, select that. |

Wait until the app is deployed. It might take 5-10 minutes for the deployment to complete.

After the application successfully deploys, you see two URLs displayed in the terminal.

Select the URL labeled Deploying service webapp to open the chat application in a browser.

Use chat app to get answers from PDF files

The chat app is preloaded with rental information from a PDF file catalog. You can use the chat app to ask questions about the rental process. The following steps walk you through the process of using the chat app.

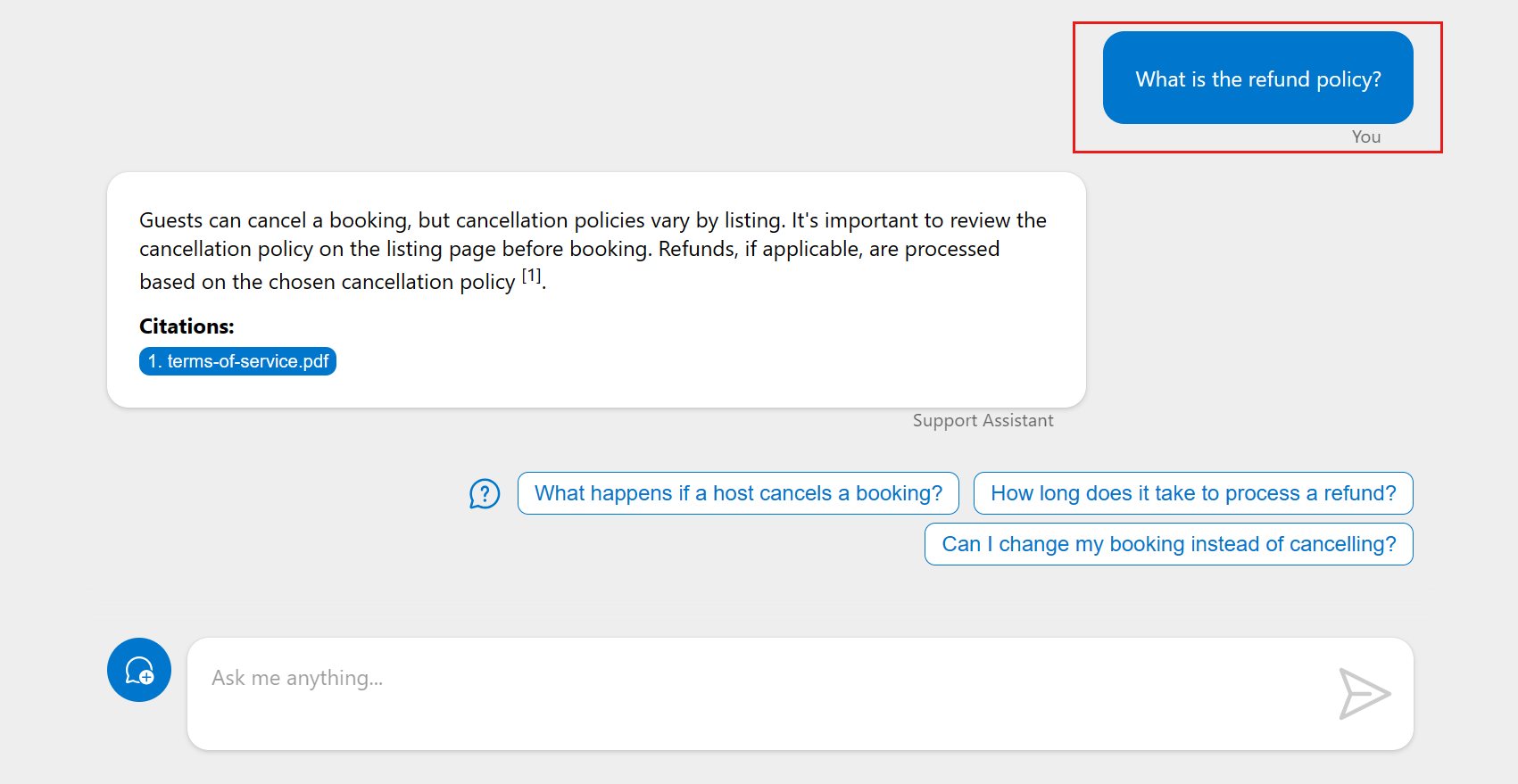

In the browser, select or enter "What is the refund policy".

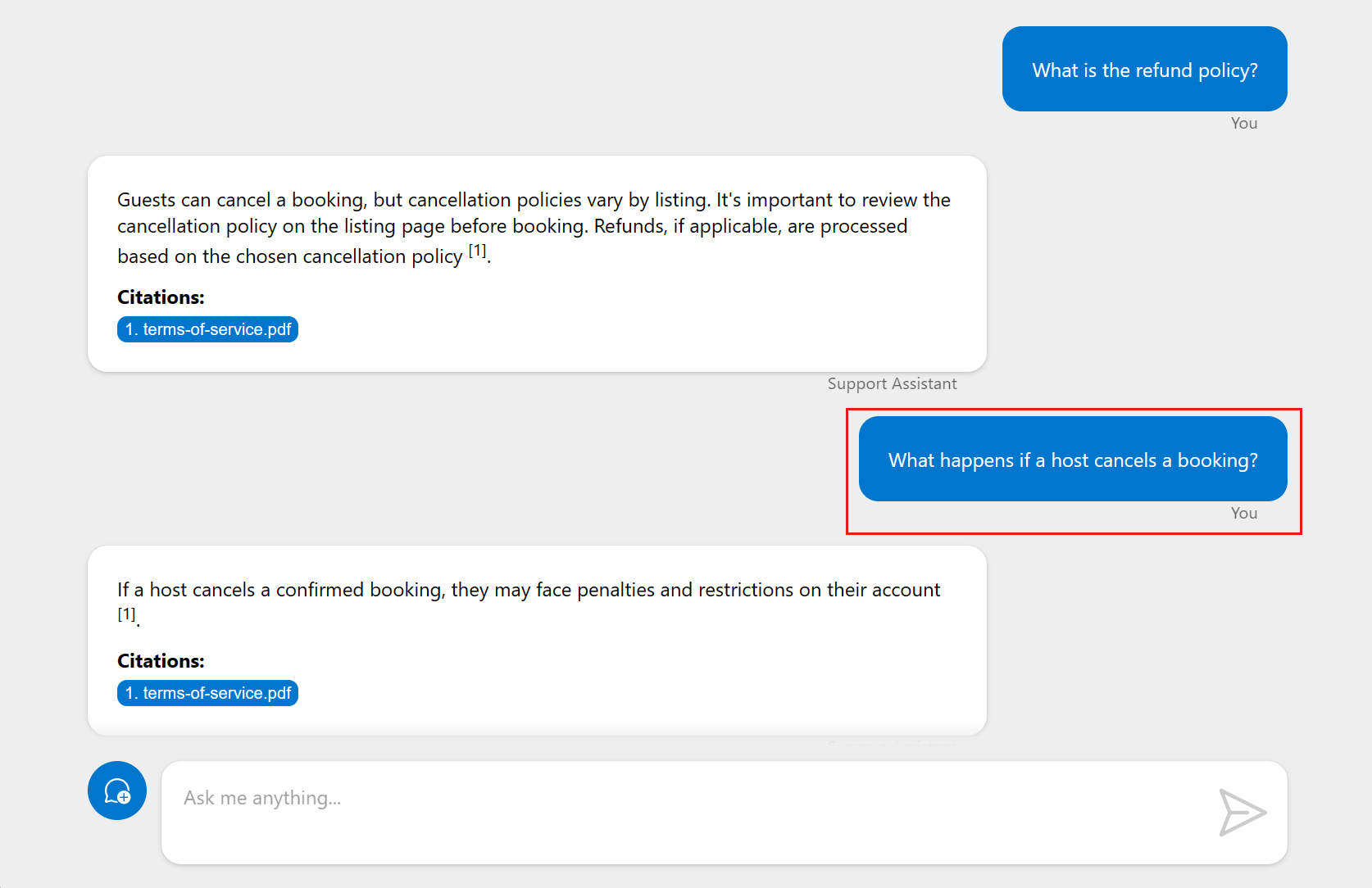

Select a follow-up question.

From the response, select the citation to see the document used to generate the answer. This delivers the document from Azure Storage to the client. When you're done with the new browser tab, close it to return to the serverless chat app.
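To make the citation step concrete, the sketch below pulls source references out of an answer. It assumes, purely for illustration, that each source appears in the answer text as a bracketed PDF file name such as `[support.pdf]`. The sample's actual citation format is not described in this article, and `extractCitations` is a hypothetical helper.

```typescript
// Returns the distinct PDF file names cited in an answer, in order of first appearance.
// Assumes citations look like "[file.pdf]"; this format is an assumption, not the sample's contract.
function extractCitations(answer: string): string[] {
  const pattern = /\[([^\[\]]+\.pdf)\]/gi;
  const citations: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(answer)) !== null) {
    if (citations.indexOf(match[1]) === -1) citations.push(match[1]);
  }
  return citations;
}

const sampleAnswer = "Refunds are processed within a few days [support.pdf]. See also [terms.pdf] and [support.pdf].";
console.log(extractCitations(sampleAnswer)); // [ 'support.pdf', 'terms.pdf' ]
```

A client could turn each extracted file name into a link that requests the document from storage through the API.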

Clean up resources

Clean up Azure resources

The Azure resources created in this article are billed to your Azure subscription. If you don't expect to need these resources in the future, delete them to avoid incurring more charges.

Delete the Azure resources and remove the source code with the following Azure Developer CLI command:

azd down --purge

Clean up GitHub Codespaces

Deleting the GitHub Codespaces environment helps you get the most out of the free per-core hours included with your account.

Important

For more information about your GitHub account's entitlements, see GitHub Codespaces monthly included storage and core hours.

Sign in to the GitHub Codespaces dashboard (https://github.com/codespaces).

Locate your currently running codespaces sourced from the Azure-Samples/serverless-chat-langchainjs GitHub repository.

Open the context menu, ..., for the codespace and then select Delete.

Get help

This sample repository offers troubleshooting information.

If your issue isn't addressed, log your issue to the repository's Issues.